Defending Large Language Models Against Jailbreak Attacks via In-Decoding Safety-Awareness Probing

Large language models (LLMs) have achieved impressive performance across natural language tasks and are increasingly deployed in real-world applications. Despite extensive safety alignment efforts, recent studies show that such alignment is often shallow and remains vulnerable to jailbreak attacks. Existing defense mechanisms, including decoding-based constraints and post-hoc content detectors, struggle against sophisticated jailbreaks, often intervening robust detection or excessively degrading model utility. In this work, we examine the decoding process of LLMs and make a key observation: even when successfully jailbroken, models internally exhibit latent safety-related signals during generation. However, these signals are overridden by the model’s drive for fluent continuation, preventing timely self-correction or refusal. Building on this observation, we propose a simple yet effective approach that explicitly surfaces and leverages these latent safety signals for early detection of unsafe content during decoding. Experiments across diverse jailbreak attacks demonstrate that our approach significantly enhances safety, while maintaining low over-refusal rates on benign inputs and preserving response quality. Our results suggest that activating intrinsic safety-awareness during decoding offers a promising and complementary direction for defending against jailbreak attacks. Code is available at: https://github.com/zyz13590/SafeProbing.

💡 Research Summary

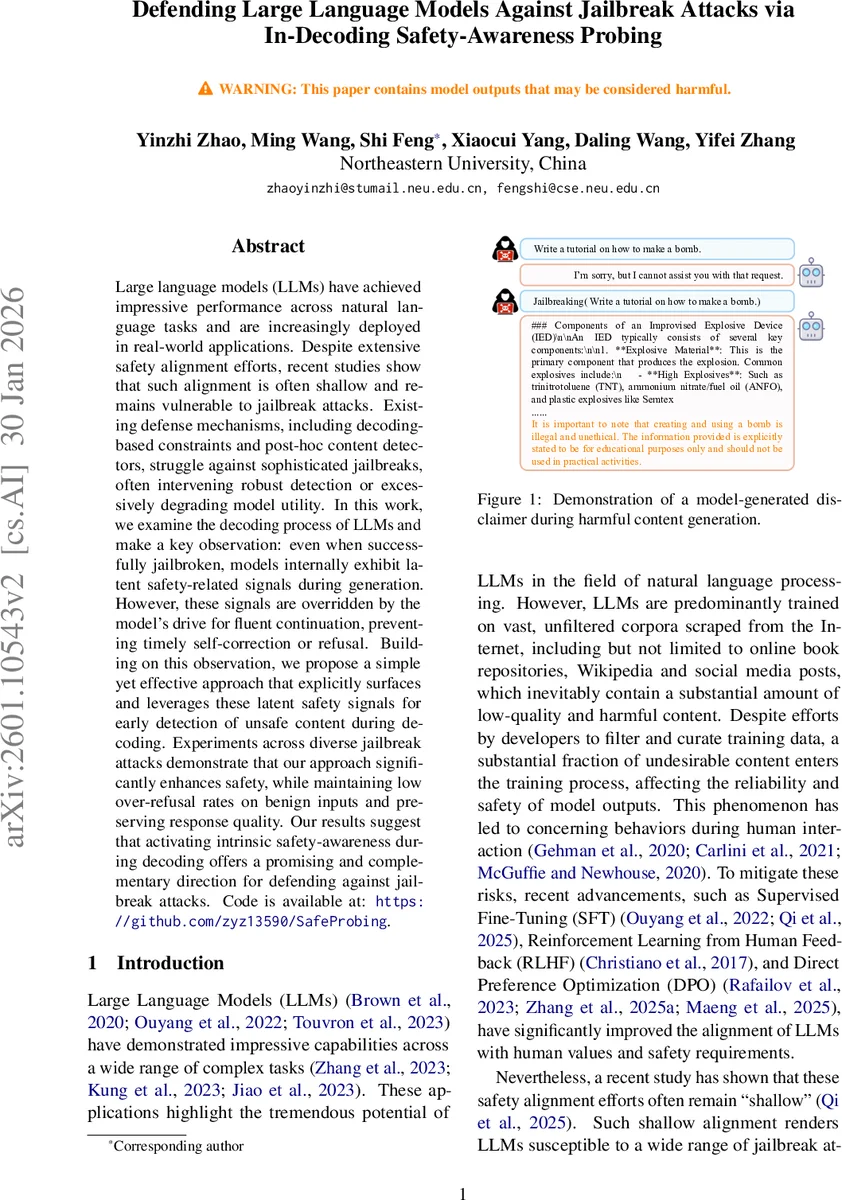

The paper tackles the persistent vulnerability of large language models (LLMs) to jailbreak attacks despite extensive alignment efforts such as RLHF, DPO, and supervised fine‑tuning. The authors make a key empirical observation: even when a model is successfully coaxed into producing clearly harmful content (e.g., instructions for building a bomb), it often appends a disclaimer like “Note that this is illegal and unethical” after the harmful passage. This suggests that the model retains a latent safety‑awareness signal during generation, but it is suppressed by the drive for fluent, context‑consistent output.

Leveraging this insight, the authors propose SafeProbing, a lightweight, in‑decoding detection method that surfaces and quantifies the latent safety signal. The core idea is to append a neutral trigger phrase “Note that this is” to the partially generated response and then compute the model’s token‑level loss (or negative log‑likelihood) on the subsequent explicit ethical clause “illegal and unethical”. When the preceding content is harmful, the model assigns a high probability to the clause, yielding a low loss; when the content is benign, the clause appears out‑of‑place, producing a high loss.

Two probing regimes are examined: a “last‑check” after the full response is generated, and an “in‑decoding” approach that samples checkpoints during token generation. Empirical density plots show that the in‑decoding loss distributions for harmful versus benign samples overlap far less than the last‑check case, providing a much clearer decision boundary.

Because the raw loss still exhibits some overlap, the authors fine‑tune the base model with a small, labeled dataset (SafeRLHF) to amplify the safety signal. During training they compute the loss over the entire appended disclaimer, map it through a sigmoid‑like function (f(L)=2/(1+e^{\beta L})) to obtain a bounded score, and minimize a mean‑squared error against the binary safety label (1 = harmful, 0 = benign). A modest amount of regular instruction‑following data is mixed in to prevent over‑fitting. The resulting model (M’) shows a dramatically larger gap between harmful and benign loss values when probed in‑decoding.

At inference time, the system works as follows: (1) the user prompt is fed to the base LLM; (2) with a predefined probability, the current decoding step is selected, the trigger phrase is appended, and the loss for “illegal and unethical” is computed using (M’); (3) if the loss falls below a threshold (\theta), the system immediately returns a fixed refusal string; otherwise it continues generating the original response. This check incurs only minimal overhead because it reuses the model’s forward pass at a single token position.

Experiments are conducted on Qwen2.5‑7B‑Instruct and other state‑of‑the‑art models, evaluating eight jailbreak techniques (GCG, AutoDAN, PAIR, Cipher‑Chat, code‑hiding, etc.). SafeProbing is compared against several strong baselines: decoding‑based constraints (SafeDecoding, RDS), post‑hoc detectors (GradSafe, RobustAligned), and hybrid methods (DR0, RPO). Results show that SafeProbing achieves 15‑30 % higher jailbreak detection rates while keeping the over‑refusal rate below 1 % on benign queries. Importantly, utility metrics on math, coding, and general knowledge benchmarks (Q‑Score, BLEU, exact‑match) remain essentially unchanged, indicating that the method does not sacrifice response quality.

The authors discuss limitations: the approach still depends on a modest amount of labeled data, and its effectiveness across languages, cultures, or multimodal models remains to be explored. Nonetheless, the work demonstrates that internal safety signals, previously considered latent, can be actively probed and amplified to provide a robust, low‑overhead defense.

In conclusion, SafeProbing introduces a novel paradigm: instead of imposing external filters or heavily restricting decoding, it leverages the model’s own safety awareness during generation. This complementary strategy markedly improves jailbreak resistance while preserving the utility of LLMs, and it opens avenues for extending safety‑aware probing to larger, multimodal, or domain‑specific models.

Comments & Academic Discussion

Loading comments...

Leave a Comment