DeepResearch Bench II: Diagnosing Deep Research Agents via Rubrics from Expert Report

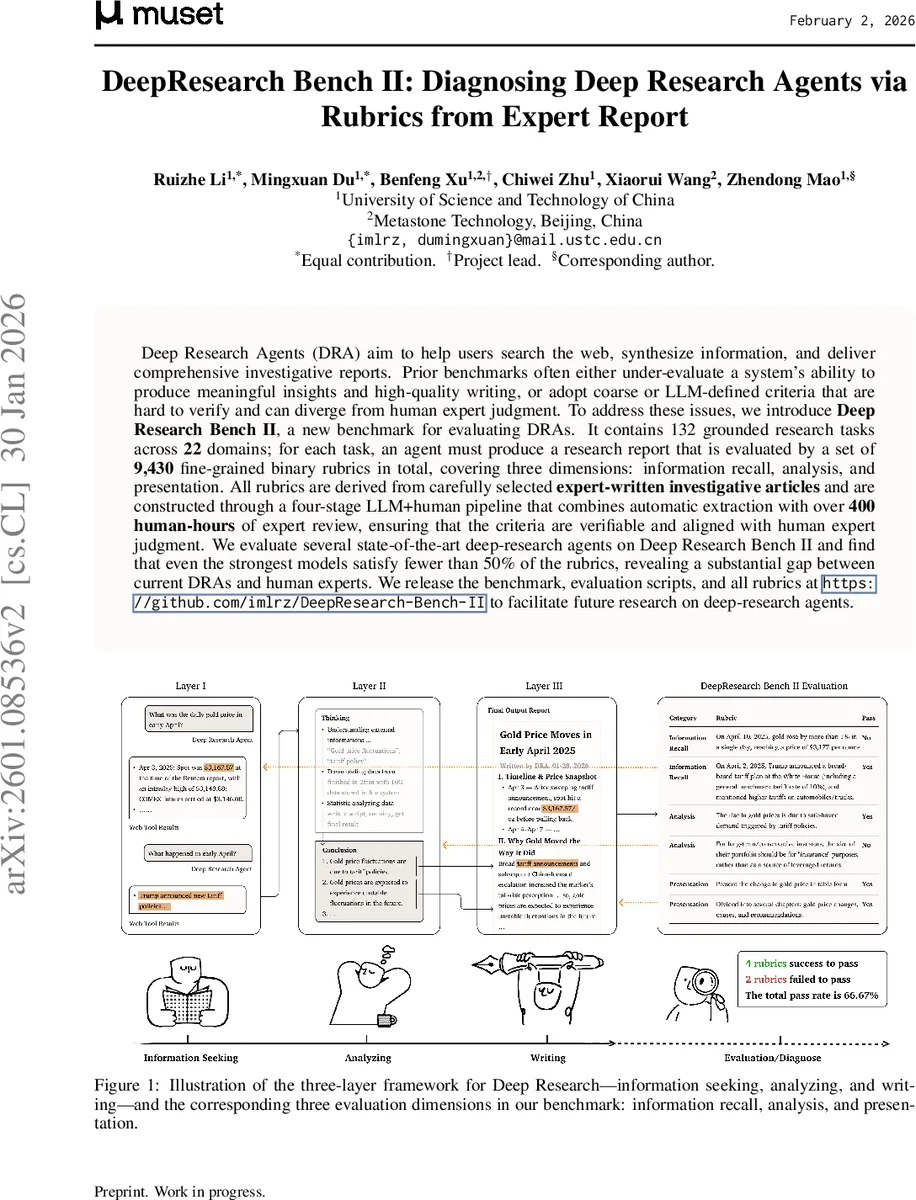

Deep Research Systems (DRS) aim to help users search the web, synthesize information, and deliver comprehensive investigative reports. However, how to rigorously evaluate these systems remains under-explored. Existing deep-research benchmarks often fall into two failure modes. Some do not adequately test a system’s ability to analyze evidence and write coherent reports. Others rely on evaluation criteria that are either overly coarse or directly defined by LLMs (or both), leading to scores that can be biased relative to human experts and are hard to verify or interpret. To address these issues, we introduce Deep Research Bench II, a new benchmark for evaluating DRS-generated reports. It contains 132 grounded research tasks across 22 domains; for each task, a system must produce a long-form research report that is evaluated by a set of 9430 fine-grained binary rubrics in total, covering three dimensions: information recall, analysis, and presentation. All rubrics are derived from carefully selected expert-written investigative articles and are constructed through a four-stage LLM+human pipeline that combines automatic extraction with over 400 human-hours of expert review, ensuring that the criteria are atomic, verifiable, and aligned with human expert judgment. We evaluate several state-of-the-art deep-research systems on Deep Research Bench II and find that even the strongest models satisfy fewer than 50% of the rubrics, revealing a substantial gap between current DRSs and human experts.

💡 Research Summary

The paper introduces Deep Research Bench II, a comprehensive benchmark designed to rigorously evaluate Deep Research Agents (DRAs) that retrieve, synthesize, and report information from the web. Existing benchmarks fall into two problematic categories: (1) fixed‑answer tasks that test only retrieval of specific entities or numbers, and (2) open‑ended report‑generation tasks whose evaluation criteria are either defined by large language models (LLMs) or are overly coarse, leading to misalignment with human expert judgment and limited interpretability. To overcome these issues, the authors construct a new benchmark consisting of 132 grounded research tasks spanning 22 domains. Each task is derived from a real, peer‑reviewed expert investigative article (open‑access, CC‑4.0 licensed) and requires both extensive information gathering and non‑trivial analysis.

A key contribution is the creation of 9,430 fine‑grained binary rubrics (≈ 71 per task) that serve as the ground‑truth evaluation criteria. Rubrics are organized into three dimensions that map directly onto core DRA capabilities: Information Recall (correctly locating and citing relevant evidence), Analysis (synthesizing evidence into higher‑level insights), and Presentation (structuring the report, using tables/figures, and providing clear explanations). The rubrics are generated through a four‑stage LLM‑plus‑human pipeline: (1) LLM extraction from source articles, (2) self‑evaluation iteration where the LLM checks its own rubrics against the source and regenerates if recall/analysis accuracy falls below 90 %, (3) manual revision by annotators to enforce atomicity, clarity, and verifiability, and (4) expert review for final refinement. This process ensures that each rubric encodes a concrete factual or inferential requirement that can be verified independently of the model’s internal knowledge.

Evaluation is performed using an LLM-as‑judge approach. For a given model‑generated report, the judge LLM assesses each binary rubric and returns a pass/fail decision. Dimension‑wise scores are computed as the proportion of passed rubrics within that dimension. The authors validate the reliability of the LLM judge through human‑LLM agreement studies, reporting a high correlation (≈ 0.78) with human expert judgments.

The benchmark is used to assess several state‑of‑the‑art DRAs, including commercial systems such as Gemini Deep Research and OpenAI Deep Research, as well as leading open‑source agents. Across the board, the strongest agents achieve less than 50 % overall rubric compliance. Performance gaps are most pronounced in Information Recall (≈ 38 % pass) and Analysis (≈ 42 % pass), while Presentation scores are relatively higher (≈ 65 %). Error analysis reveals systematic failures: omission of critical sources, inability to reconcile conflicting evidence, and inaccurate quantitative citations. These findings highlight that current DRAs, despite sophisticated planning and tool‑use capabilities, still lack robust evidence‑grounded reasoning and synthesis.

The paper’s contributions are fourfold: (1) a grounded, expert‑derived benchmark with a large set of verifiable rubrics, (2) a novel three‑dimensional rubric‑based evaluation framework coupled with an LLM‑as‑judge protocol, (3) an extensive empirical study quantifying the gap between leading DRAs and human experts, and (4) analysis of human‑LLM alignment and systematic model deficiencies to guide future research. Limitations include reliance on the LLM judge’s domain knowledge (potentially problematic for highly specialized fields such as medicine or law) and the subjectivity inherent in expert‑crafted rubrics. Future work is suggested to develop domain‑specialized judges, enhance automatic quality‑control in rubric generation, and expand the benchmark to cover multimodal evidence sources.

Comments & Academic Discussion

Loading comments...

Leave a Comment