SLIM-Brain: A Data- and Training-Efficient Foundation Model for fMRI Data Analysis

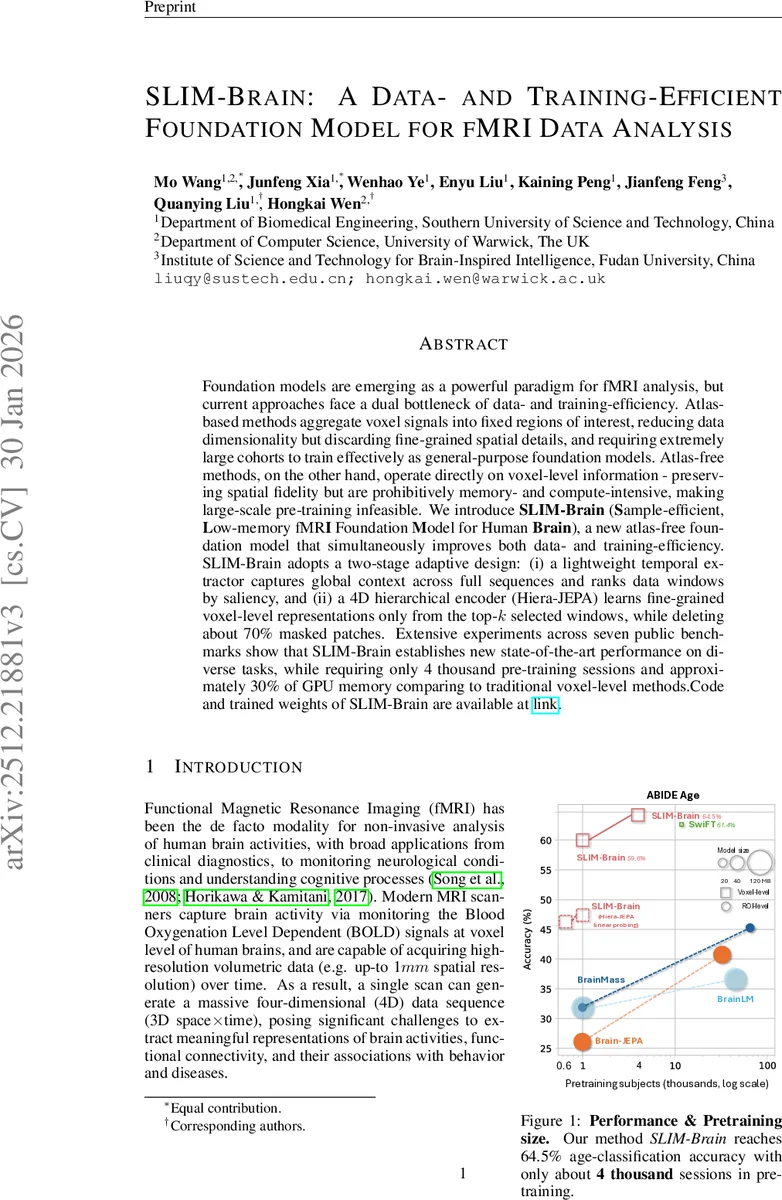

Foundation models are emerging as a powerful paradigm for fMRI analysis, but current approaches face a dual bottleneck of data- and training-efficiency. Atlas-based methods aggregate voxel signals into fixed regions of interest, reducing data dimensionality but discarding fine-grained spatial details, and requiring extremely large cohorts to train effectively as general-purpose foundation models. Atlas-free methods, on the other hand, operate directly on voxel-level information - preserving spatial fidelity but are prohibitively memory- and compute-intensive, making large-scale pre-training infeasible. We introduce SLIM-Brain (Sample-efficient, Low-memory fMRI Foundation Model for Human Brain), a new atlas-free foundation model that simultaneously improves both data- and training-efficiency. SLIM-Brain adopts a two-stage adaptive design: (i) a lightweight temporal extractor captures global context across full sequences and ranks data windows by saliency, and (ii) a 4D hierarchical encoder (Hiera-JEPA) learns fine-grained voxel-level representations only from the top-$k$ selected windows, while deleting about 70% masked patches. Extensive experiments across seven public benchmarks show that SLIM-Brain establishes new state-of-the-art performance on diverse tasks, while requiring only 4 thousand pre-training sessions and approximately 30% of GPU memory comparing to traditional voxel-level methods.

💡 Research Summary

The paper introduces SLIM‑Brain, a novel foundation model for functional magnetic resonance imaging (fMRI) that simultaneously addresses two major bottlenecks in current approaches: the need for massive training cohorts and the prohibitive memory/computational cost of voxel‑level processing. Existing methods fall into two categories. Atlas‑based pipelines aggregate voxel signals into predefined regions of interest (ROIs), drastically reducing dimensionality and memory usage but discarding fine‑grained spatial information and requiring very large subject pools (often >60 K sessions) to achieve competitive performance. Atlas‑free approaches retain voxel‑level detail by operating directly on 4‑D volumes, yet the sheer number of voxels (millions per scan) makes standard Vision Transformers (ViT) infeasible because self‑attention scales quadratically with token count. Even recent efficient variants such as Swin‑Transformer still process the entire brain volume at each time point, wasting resources on background voxels that constitute roughly 70 % of the data.

SLIM‑Brain resolves these issues through a two‑stage adaptive pipeline. In the first stage, a lightweight Vision Transformer processes the whole recording using a masked autoencoding (MAE) objective (SimMIM style). The 4‑D fMRI is first partitioned into non‑overlapping cubic patches; patches containing only background are discarded via a brain mask. Each remaining patch is averaged over space to produce a time series, yielding a 2‑D token matrix (patch × time). Random masking of a fraction of tokens and reconstruction via a simple decoder yields global contextual embeddings that capture long‑range temporal dynamics.

The second stage leverages these global embeddings to rank temporal windows. For each candidate window, the frozen MAE model is fed only that window while all other windows are masked; the reconstruction error on the masked windows is computed. The negative reconstruction error, averaged over all masked windows, defines a “mutual masked reconstruction score.” Windows with the highest scores are deemed most informative and are selected (top‑k, typically 5–10 windows). This selection is performed without additional training, incurring negligible overhead.

Selected windows are then passed to a 4‑D hierarchical encoder called Hiera‑JEPA. Unlike Swin‑Transformer, Hiera‑JEPA employs a high masking ratio (≈70 %) to exclude background patches, and it processes tokens hierarchically: early layers downsample spatial resolution while expanding channel dimensions, thereby reducing memory consumption while preserving fine‑grained detail. The encoder is trained with a MAE‑style reconstruction loss combined with a Joint Embedding Predictive Architecture (JEPA) objective that predicts future token embeddings, encouraging the model to learn rich spatiotemporal relationships.

Extensive experiments on seven public fMRI datasets—including ABIDE, NeuroSTORM, and others—cover downstream tasks such as sex classification, age prediction, and subject fingerprinting. SLIM‑Brain achieves state‑of‑the‑art results while requiring only about 4 K pre‑training sessions, a fraction of the 60 K+ sessions needed by the strongest atlas‑based baselines. GPU memory usage drops to roughly 30 % of that required by Swin‑based atlas‑free models, and training speed improves by 2–3×. Ablation studies show that selecting 5–10 top windows and masking ~70 % of patches yields the best trade‑off between performance and efficiency. Visualization of learned voxel‑level embeddings reveals that the model preserves fine anatomical distinctions (e.g., sub‑nuclei within the amygdala), confirming that the atlas‑free approach does not sacrifice spatial fidelity.

The paper’s contributions are threefold: (1) it formally identifies and analyzes the dual challenges of data and training efficiency in fMRI foundation models; (2) it proposes a novel two‑stage adaptive architecture that couples a global MAE‑based temporal selector with a highly masked hierarchical encoder, dramatically reducing computational demands while retaining voxel‑level detail; (3) it validates the approach across diverse benchmarks, demonstrating superior performance with far less data and hardware resources. The authors release code and pretrained weights, enabling broader adoption. Future directions include refining the window‑selection mechanism, extending the framework to multimodal neuroimaging (e.g., structural MRI, diffusion imaging), and exploring self‑supervised objectives that further exploit the rich temporal structure of fMRI recordings.

Comments & Academic Discussion

Loading comments...

Leave a Comment