RefineBridge: Generative Bridge Models Improve Financial Forecasting by Foundation Models

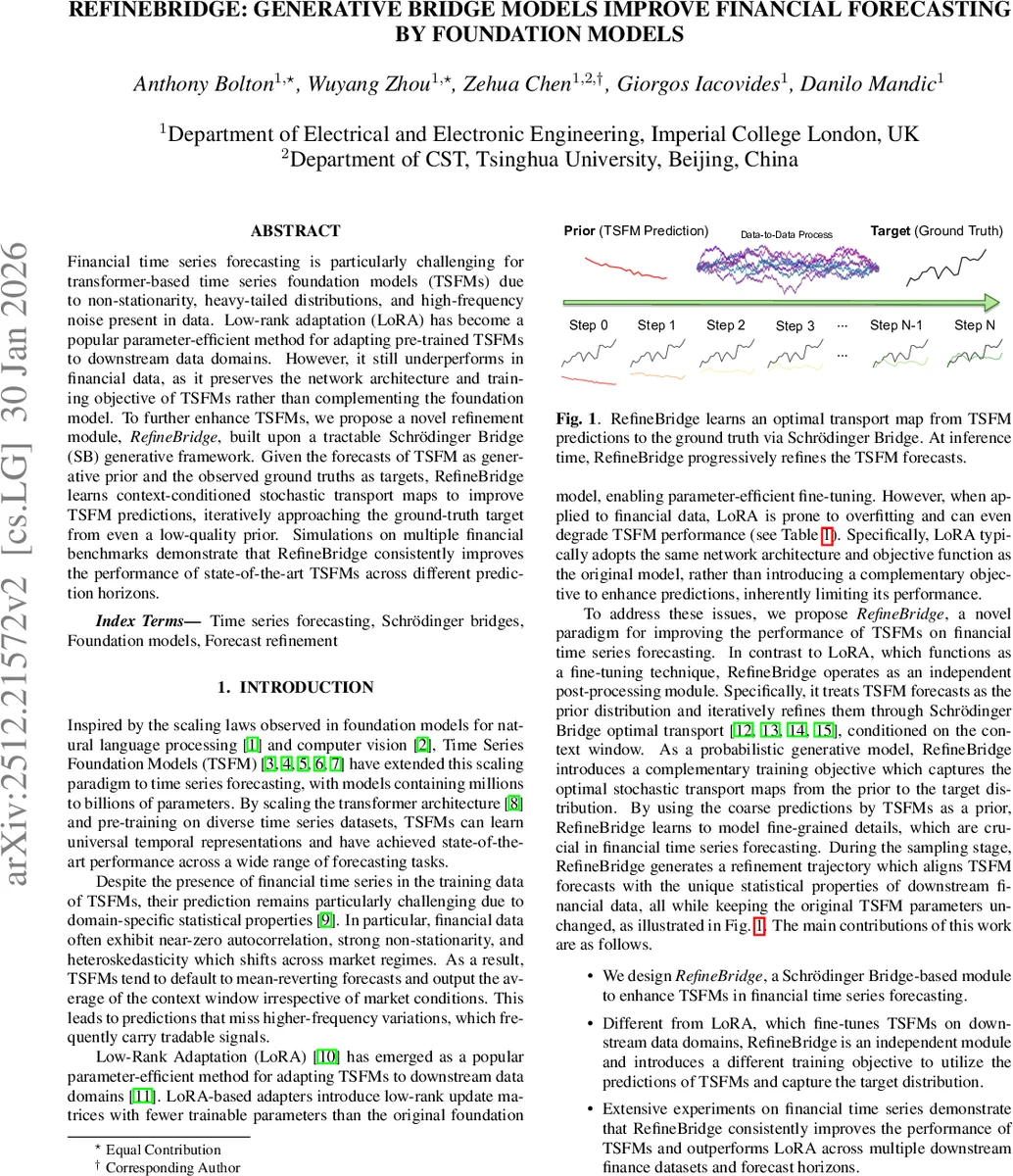

Financial time series forecasting is particularly challenging for transformer-based time series foundation models (TSFMs) due to the non-stationarity, heavy-tailed distributions, and high-frequency noise present in the data. Low-rank adaptation (LoRA) has become a popular parameter-efficient method for adapting pre-trained TSFMs to downstream data domains. However, it still underperforms on financial data, as it preserves the network architecture and training objective of the TSFM rather than complementing the foundation model. To further enhance TSFMs, we propose a novel refinement module, RefineBridge, built upon a tractable Schrödinger Bridge (SB) generative framework. Given the TSFM's forecasts as the generative prior and the observed ground truths as targets, RefineBridge learns context-conditioned stochastic transport maps to improve TSFM predictions, iteratively approaching the ground-truth target even from a low-quality prior. Simulations on multiple financial benchmarks demonstrate that RefineBridge consistently improves the performance of state-of-the-art TSFMs across different prediction horizons.

💡 Research Summary

The paper tackles a pressing problem in modern time‑series forecasting: while large transformer‑based Time‑Series Foundation Models (TSFMs) such as Chronos, Moirai, and Time‑MoE have demonstrated impressive general‑purpose performance, they struggle on financial data that exhibit near‑zero autocorrelation, strong non‑stationarity, heavy‑tailed distributions, and high‑frequency noise. Existing parameter‑efficient adaptation techniques, most notably Low‑Rank Adaptation (LoRA), simply inject low‑rank weight updates into the pre‑trained TSFM but retain the original architecture and loss functions. As a result, LoRA often over‑fits or even degrades performance on financial series because it does not address the fundamental mismatch between the TSFM’s training objective (coarse, mean‑reverting forecasts) and the fine‑grained signals needed for trading.
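
As background for the LoRA baseline described above, the following is a minimal NumPy sketch of a low-rank weight update on a single linear layer. It is an illustration only, not the paper's adaptation code: the layer sizes, the rank `r`, the scaling `alpha`, and the function name `lora_forward` are all hypothetical choices made for this example.

```python
import numpy as np


def lora_forward(x, W, A, B, alpha=16.0):
    """Linear layer with a low-rank update: y = x @ (W + (alpha / r) * B @ A).T.

    W is the frozen pre-trained weight; only A and B would be trained.
    """
    r = A.shape[0]  # rank of the update
    return x @ W.T + (alpha / r) * (x @ A.T) @ B.T


# Hypothetical sizes, chosen only for illustration.
rng = np.random.default_rng(0)
d_in, d_out, r = 8, 4, 2
W = rng.normal(size=(d_out, d_in))        # frozen pre-trained weight
A = rng.normal(size=(r, d_in)) * 0.01     # trainable down-projection
B = np.zeros((d_out, r))                  # trainable up-projection, zero-initialised
x = rng.normal(size=(3, d_in))            # batch of 3 input vectors

y = lora_forward(x, W, A, B)              # equals the frozen layer's output at init
trainable = A.size + B.size               # r * (d_in + d_out) parameters
frozen = W.size                           # d_in * d_out parameters
```

Because `B` starts at zero, the adapted layer initially reproduces the frozen layer exactly. The layer itself and the loss it is trained with are unchanged, which is the limitation the summary attributes to LoRA.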

To overcome this mismatch, the authors introduce RefineBridge, a post‑processing module built on the Schrödinger Bridge (SB) framework. The key idea is to treat the TSFM’s forecast as a prior distribution and the observed ground‑truth series as the target distribution. RefineBridge learns a conditional stochastic transport map that gradually morphs the prior into the target by minimizing the Kullback‑Leibler divergence between the transport path measure and a reference diffusion process. Concretely, they define a zero‑drift SDE with a linear time‑varying diffusion coefficient, adopt the Bridge‑gmax scheduler to simplify the scaling factors (αₜ = ᾱₜ = 1), and obtain a Gaussian marginal bridge distribution. The model then learns a time‑dependent score function θ via a conditional denoising loss L = 𝔼‖θ(xₜ, t, x_T, z_c) − x₀‖², where x₀ is the ground‑truth target, x_T is the TSFM forecast, xₜ is a sample from the bridge at time t, and z_c is a context embedding produced by a DLinear encoder that decomposes the input window into trend and seasonal components.
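
The bridge marginal and denoising loss described above can be sketched in NumPy as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: it assumes time runs over [0, 1] with the prior x_T at t = 1, that the squared diffusion coefficient is linear in t (g²(t) = β₀ + t(β₁ − β₀)), and it uses placeholder values for β₀ and β₁. The names `sigma2`, `bridge_sample`, `denoising_loss`, and `predictor` are hypothetical. With zero drift and αₜ = ᾱₜ = 1, the marginal given both endpoints is Gaussian, with a mean that interpolates between x₀ and x_T and a variance that vanishes at both ends.

```python
import numpy as np

# Hypothetical schedule endpoints; the summary does not state the values used.
BETA_0, BETA_1 = 0.01, 50.0


def sigma2(t):
    """Accumulated variance: integral of g^2(s) = BETA_0 + s * (BETA_1 - BETA_0) over [0, t]."""
    return BETA_0 * t + 0.5 * (BETA_1 - BETA_0) * t ** 2


def bridge_sample(x0, xT, t, rng):
    """Draw x_t from the Gaussian bridge marginal p(x_t | x_0, x_T) for a zero-drift SDE."""
    s2_t = sigma2(t)              # variance accumulated on [0, t]
    s2_1 = sigma2(1.0)            # total variance on [0, 1]
    s2_bar = s2_1 - s2_t          # variance remaining on [t, 1]
    mean = (s2_bar * x0 + s2_t * xT) / s2_1
    std = np.sqrt(s2_t * s2_bar / s2_1)
    return mean + std * rng.standard_normal(x0.shape)


def denoising_loss(predictor, x0, xT, z_c, t, rng):
    """Monte Carlo estimate of E || predictor(x_t, t, x_T, z_c) - x_0 ||^2 over a batch."""
    xt = bridge_sample(x0, xT, t, rng)
    pred = predictor(xt, t, xT, z_c)
    return float(np.mean(np.sum((pred - x0) ** 2, axis=-1)))


# Toy usage: batch of 4 series with horizon 16; the "forecast" is a noisy copy of the truth.
rng = np.random.default_rng(0)
x0 = rng.normal(size=(4, 16))                  # ground-truth targets
xT = x0 + 0.5 * rng.normal(size=(4, 16))       # stand-in for TSFM forecasts (the prior)
z_c = np.zeros((4, 8))                         # placeholder context embedding


def keep_prior(xt, t, xT, z_c):
    """Trivial baseline predictor that returns the forecast unrefined."""
    return xT


baseline_loss = denoising_loss(keep_prior, x0, xT, z_c, t=0.5, rng=rng)
```

In training, `predictor` would be the learned network and t would be sampled at random for each batch. The `keep_prior` baseline gives the loss of leaving the forecast unrefined, which is the value a trained refiner has to beat.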

Architecturally, RefineBridge consists of (1) a context encoder that outputs a latent vector z_c and (2) a 1‑D U‑Net denoiser. The U‑Net receives the concatenated tensor
