From Tokens to Photons: Test-Time Physical Prompting for Vision-Language Models

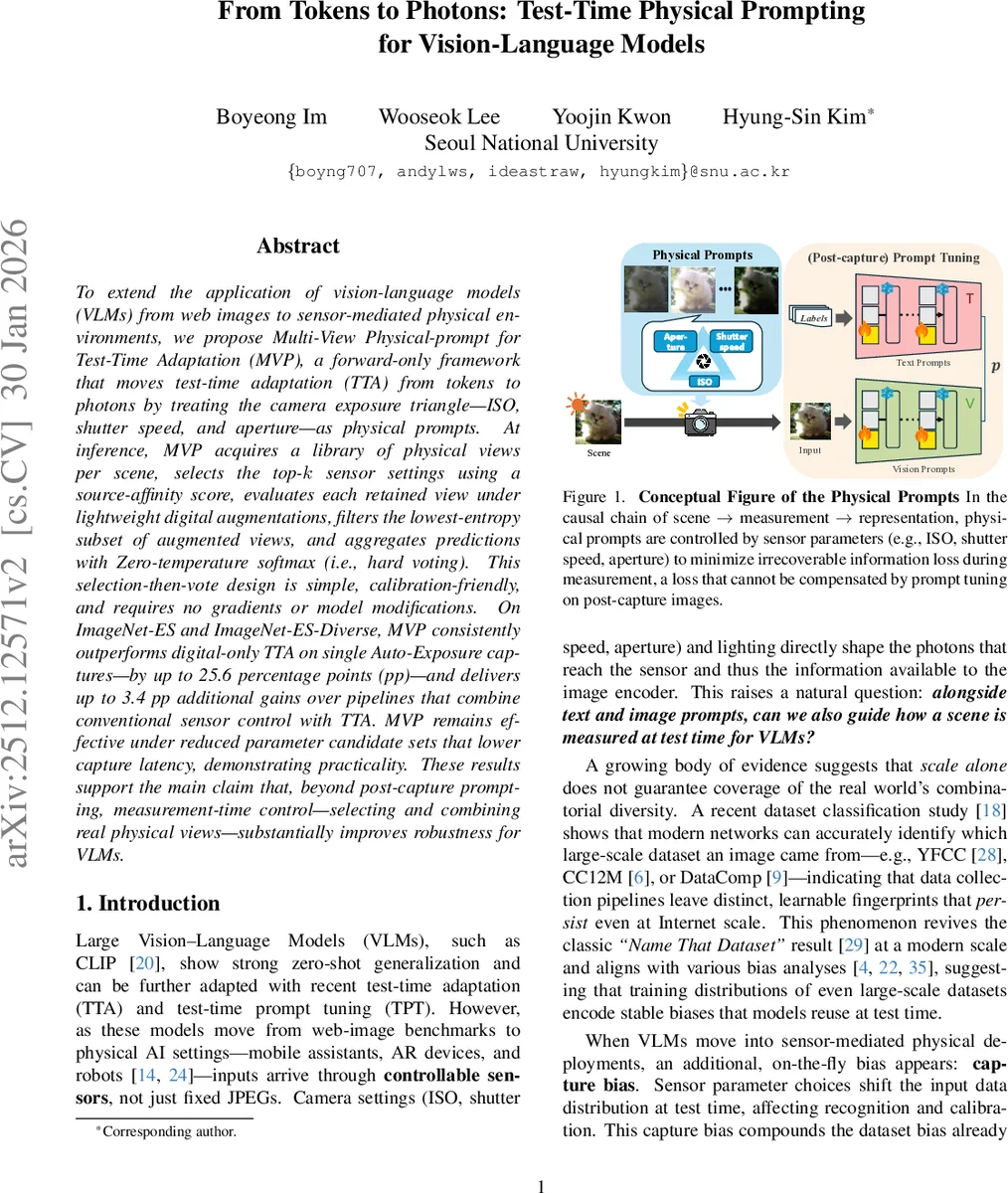

To extend the application of vision-language models (VLMs) from web images to sensor-mediated physical environments, we propose Multi-View Physical-prompt for Test-Time Adaptation (MVP), a forward-only framework that moves test-time adaptation (TTA) from tokens to photons by treating the camera exposure triangle–ISO, shutter speed, and aperture–as physical prompts. At inference, MVP acquires a library of physical views per scene, selects the top-k sensor settings using a source-affinity score, evaluates each retained view under lightweight digital augmentations, filters the lowest-entropy subset of augmented views, and aggregates predictions with Zero-temperature softmax (i.e., hard voting). This selection-then-vote design is simple, calibration-friendly, and requires no gradients or model modifications. On ImageNet-ES and ImageNet-ES-Diverse, MVP consistently outperforms digital-only TTA on single Auto-Exposure captures, by up to 25.6 percentage points (pp), and delivers up to 3.4 pp additional gains over pipelines that combine conventional sensor control with TTA. MVP remains effective under reduced parameter candidate sets that lower capture latency, demonstrating practicality. These results support the main claim that, beyond post-capture prompting, measurement-time control–selecting and combining real physical views–substantially improves robustness for VLMs.

💡 Research Summary

The paper addresses a critical gap in deploying large‑scale vision‑language models (VLMs) such as CLIP in real‑world, sensor‑mediated environments. Existing test‑time adaptation (TTA) and test‑time prompt tuning (TPT) operate only after an image has been captured, leaving the model vulnerable to “capture bias” caused by sub‑optimal camera settings (ISO, shutter speed, aperture). To overcome this, the authors propose Multi‑View Physical‑prompt for Test‑Time Adaptation (MVP), a forward‑only framework that treats the exposure triangle as a set of physical prompts. For each test scene, MVP captures M images under different camera configurations, expands each capture with N lightweight digital augmentations, and evaluates them using two criteria: (1) source‑affinity, measured as the L2 distance between layer‑wise feature statistics of the capture and pre‑computed ImageNet statistics; (2) predictive confidence, quantified by Shannon entropy of the softmax outputs. The top‑k configurations with highest source‑affinity are retained; from their augmentations, the lowest‑entropy γ % are selected. Final predictions are aggregated by zero‑temperature softmax (hard voting), eliminating over‑confidence issues inherent in probability averaging. The entire pipeline requires no gradient updates, no model modifications, and works with gray‑box APIs.

Experiments on the ImageNet‑ES and ImageNet‑ES‑Diverse benchmarks demonstrate that MVP outperforms digital‑only TTA/TPT on single auto‑exposure captures by up to 25.6 percentage points, and adds up to 3.4 points over pipelines that combine conventional sensor control (e.g., Lens) with digital TTA. Moreover, MVP remains effective when the candidate set is reduced to lower capture latency, highlighting its practicality for real‑time robotics or AR applications. Analyses reveal that physical views with smaller source‑affinity distances produce attention patterns more aligned with the original training distribution, and entropy‑based filtering mitigates confidence‑driven selection failures. Hard voting further stabilizes predictions across heterogeneous views.

In summary, MVP shows that test‑time measurement‑level adaptation—selecting and aggregating real physical views—can substantially improve VLM robustness without any changes to the underlying model, opening a new direction for deploying foundation models in physical AI systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment