Breaking the Adversarial Robustness-Performance Trade-off in Text Classification via Manifold Purification

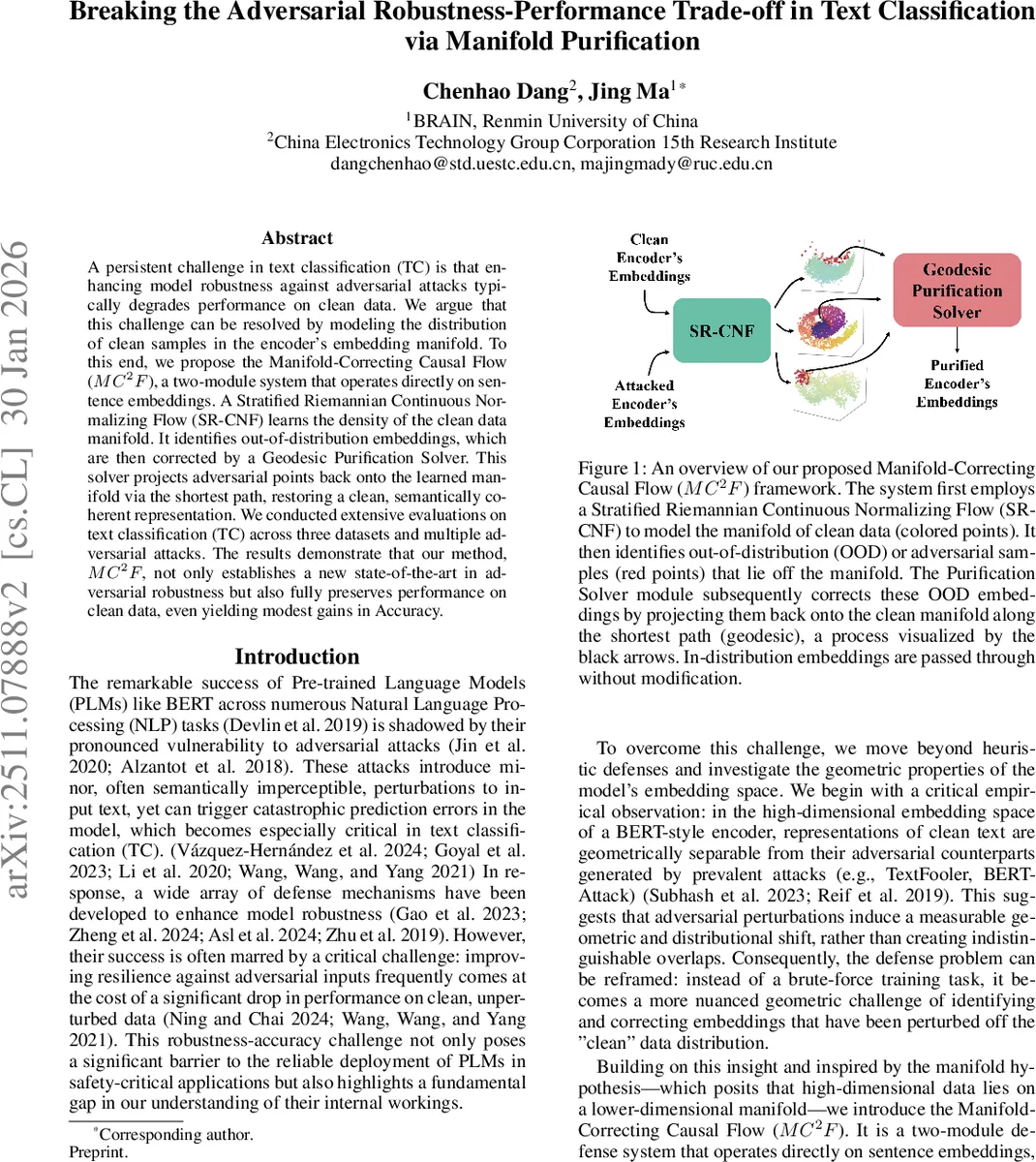

A persistent challenge in text classification (TC) is that enhancing model robustness against adversarial attacks typically degrades performance on clean data. We argue that this challenge can be resolved by modeling the distribution of clean samples in the encoder embedding manifold. To this end, we propose the Manifold-Correcting Causal Flow (MC^2F), a two-module system that operates directly on sentence embeddings. A Stratified Riemannian Continuous Normalizing Flow (SR-CNF) learns the density of the clean data manifold. It identifies out-of-distribution embeddings, which are then corrected by a Geodesic Purification Solver. This solver projects adversarial points back onto the learned manifold via the shortest path, restoring a clean, semantically coherent representation. We conducted extensive evaluations on text classification (TC) across three datasets and multiple adversarial attacks. The results demonstrate that our method, MC^2F, not only establishes a new state-of-the-art in adversarial robustness but also fully preserves performance on clean data, even yielding modest gains in accuracy.

💡 Research Summary

The paper tackles a long‑standing dilemma in text classification: improving adversarial robustness usually comes at the cost of reduced accuracy on clean inputs. The authors begin by empirically demonstrating that clean and adversarial sentence embeddings produced by a BERT‑style encoder occupy distinct low‑dimensional manifolds. Visualizations (PCA, t‑SNE, UMAP) show clear cluster separation; statistical distances (MMD, Jensen‑Shannon, Wasserstein) confirm a significant distributional shift; and Local Intrinsic Dimensionality (LID) analysis reveals that adversarial embeddings reside in regions of higher intrinsic dimensionality, indicating a move to more complex parts of the space.

From these observations the authors formulate two hypotheses: (1) “Manifold Separability” – clean and adversarial embeddings lie on statistically different, geometrically separable manifolds; (2) “Stratified Manifold Structure” – the embedding space consists of multiple strata, each with its own local geometry, and adversarial points tend to fall into higher‑dimensional strata.

Guided by these hypotheses, they propose the Manifold‑Correcting Causal Flow (MC²F), a two‑module defense that operates directly on sentence embeddings. The first module, a Stratified Riemannian Continuous Normalizing Flow (SR‑CNF), learns a probability density over the clean manifold. Unlike standard normalizing flows, SR‑CNF lives on a learnable Riemannian manifold whose metric tensor G(z) is produced by a Mixture‑of‑Experts (MoE) network. The MoE consists of K expert networks that output locally positive‑definite matrices, combined via a gating network to yield a point‑wise metric. This allows the flow to capture piece‑wise smooth curvature and to estimate log‑likelihoods accurately. Training optimizes three terms: negative log‑likelihood (density fitting), a topology loss that penalizes reconstruction error after inverting the flow, and a causal loss that encourages the downstream classifier’s predictions on purified embeddings to match those on clean embeddings.

During inference, SR‑CNF computes the log‑likelihood of each incoming embedding. If the likelihood falls below a validation‑derived threshold τ, the sample is flagged as out‑of‑distribution (i.e., adversarial). The second module, the Geodesic Purification Solver, then projects the flagged embedding back onto the learned manifold by solving a variational problem: find the shortest geodesic (with respect to G(z)) from the adversarial point to a point on the manifold where the density is high. In practice this is solved with a few gradient‑descent steps using automatic differentiation, yielding a purified embedding that lies on the clean manifold while preserving semantic content.

The authors evaluate MC²F on three benchmark classification datasets—SST‑2 (sentiment), AG‑News (topic), and IMDB (sentiment)—against five strong attacks: TextFooler, BAE, TextBugger, BERT‑Attack, and PWWS. Compared with a suite of baselines (standard adversarial training, Free‑AT, TRADES, DAD, and recent MMD‑based OOD defenses), MC²F consistently achieves the highest robust accuracy, reducing attack success rates by 30‑45 % relative to the best prior method. Crucially, clean‑data accuracy is either unchanged or modestly improved (up to +0.7 %). Post‑purification analyses show that the LID of purified embeddings matches that of original clean embeddings, confirming successful manifold restoration.

Key contributions are: (1) a thorough empirical validation that clean and adversarial embeddings are separable both statistically and geometrically; (2) the introduction of a Riemannian‑metric‑aware continuous normalizing flow for high‑fidelity density estimation on stratified manifolds; (3) a geodesic‑based purification step that corrects adversarial perturbations without degrading clean performance; and (4) extensive experiments demonstrating state‑of‑the‑art robustness while preserving accuracy.

The paper also discusses limitations: the MoE‑based metric learning incurs additional memory and compute overhead; the current approach operates at the sentence‑embedding level, leaving token‑level or multimodal extensions for future work; and extremely aggressive attacks may require adaptive thresholding. Future directions include lightweight metric learning, extending the framework to multimodal data, and dynamic online detection mechanisms.

Overall, MC²F offers a principled, geometry‑driven solution that breaks the traditional robustness‑accuracy trade‑off in text classification, opening new avenues for robust NLP systems that can be deployed in safety‑critical environments without sacrificing performance on benign inputs.

Comments & Academic Discussion

Loading comments...

Leave a Comment