Physics-Informed Neural Networks and Neural Operators for Parametric PDEs

Partial differential equations (PDEs) arise throughout science and engineering, where solutions depend on parameters (physical properties, boundary conditions, geometry). Traditional numerical methods re-solve the PDE for each parameter value, making parameter-space exploration prohibitively expensive. Recent machine learning advances, particularly physics-informed neural networks (PINNs) and neural operators, have revolutionized parametric PDE solving by learning solution operators that generalize across parameter spaces. We critically analyze two main paradigms: (1) PINNs, which embed physical laws as soft constraints and excel at inverse problems with sparse data, and (2) neural operators (e.g., DeepONet, Fourier Neural Operator), which learn mappings between infinite-dimensional function spaces and achieve unprecedented generalization. Through comparisons across fluid dynamics, solid mechanics, heat transfer, and electromagnetics, we show that neural operators can achieve computational speedups of $10^3$ to $10^5$ over traditional solvers in multi-query scenarios while maintaining comparable accuracy. We provide practical guidance for method selection, discuss theoretical foundations (universal approximation, convergence), and identify critical open challenges: high-dimensional parameters, complex geometries, and out-of-distribution generalization. This work establishes a unified framework for understanding parametric PDE solvers via operator learning, offering a comprehensive, incrementally updated resource for this rapidly evolving field.

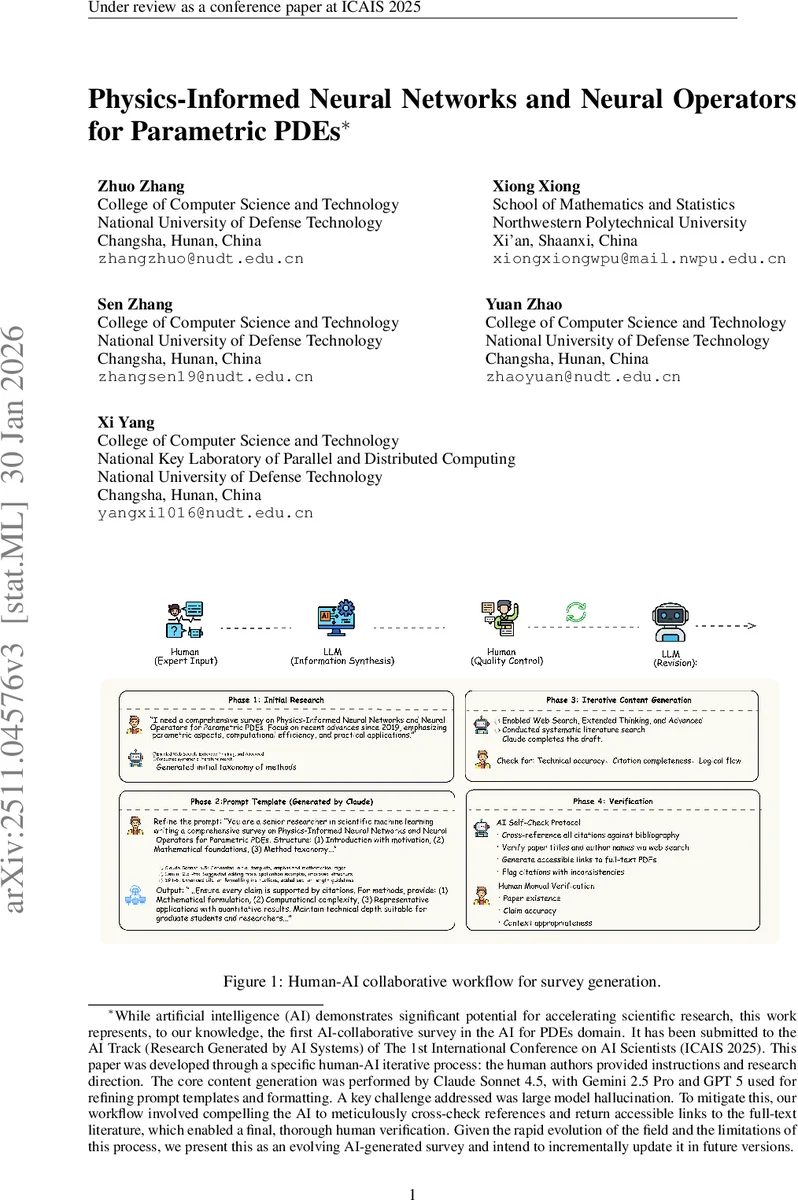

💡 Research Summary

The paper provides a comprehensive review and critical analysis of two emerging machine‑learning paradigms—Physics‑Informed Neural Networks (PINNs) and Neural Operators—for solving parametric partial differential equations (PDEs). It begins by formalizing the parametric PDE as a mapping G from a d‑dimensional parameter space P to a solution space U, and contrasts this with traditional reduced‑order modeling (ROM) techniques such as POD‑Galerkin, Reduced Basis, sparse grids, and multi‑level Monte Carlo. While ROMs excel in low‑dimensional, smooth‑parameter regimes, they suffer from linear‑subspace assumptions, affine‑parameter requirements, and the curse of dimensionality, limiting their applicability to modern high‑dimensional, geometry‑parameterized, or highly nonlinear problems.

The authors then dissect PINNs, which embed the governing PDE as a soft constraint in the loss function. By leveraging automatic differentiation, PINNs can enforce differential operators, boundary, and initial conditions without a mesh. This makes them particularly powerful for inverse problems, data‑scarce scenarios, and problems with complex physics. However, PINNs are sensitive to optimizer settings, can exhibit slow convergence, and their generalization degrades when the parameter space becomes large.
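To make the soft constraint concrete, one common form of the PINN loss is sketched below. The notation is assumed for illustration and is not taken from the paper: $u_\theta$ is the network with weights $\theta$, $\mathcal{N}_\mu$ is the differential operator for parameter $\mu$, $\mathcal{B}$ is the boundary/initial operator with prescribed data $g$, the points $x^r_i$, $x^b_j$, $x^d_k$ are collocation, boundary, and measurement locations, and $\lambda_r, \lambda_b, \lambda_d$ are user-chosen weights.

$$
\mathcal{L}(\theta) =
\frac{\lambda_r}{N_r}\sum_{i=1}^{N_r} \left| \mathcal{N}_\mu[u_\theta](x^r_i) \right|^2
+ \frac{\lambda_b}{N_b}\sum_{j=1}^{N_b} \left| \mathcal{B}[u_\theta](x^b_j) - g(x^b_j) \right|^2
+ \frac{\lambda_d}{N_d}\sum_{k=1}^{N_d} \left| u_\theta(x^d_k) - u^{\mathrm{obs}}_k \right|^2
$$

The derivatives inside $\mathcal{N}_\mu[u_\theta]$ are obtained by automatic differentiation of the network with respect to its inputs, so no mesh is required. In an inverse problem, $\mu$ can be treated as an additional trainable quantity and optimized together with $\theta$; the relative sizes of the $\lambda$ weights are one of the settings to which training is typically sensitive.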

Neural Operators, exemplified by DeepONet and the Fourier Neural Operator (FNO), learn mappings between infinite-dimensional function spaces directly. DeepONet's branch-trunk architecture encodes the input function with a branch network and the output query coordinates with a trunk network, then combines the two feature vectors through an inner product, while FNO performs global convolutions in the spectral domain, enabling efficient learning of translation-invariant operators. These methods bypass the need for mesh regeneration, handle high-dimensional parameters (>50) more gracefully, and achieve reported speedups of 10³ to 10⁵ over conventional CFD/FEA solvers while maintaining test errors in the 10⁻³ to 10⁻⁴ range across fluid dynamics, solid mechanics, heat transfer, and electromagnetics.
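The two architectural ideas can be illustrated with a minimal NumPy sketch. It is not the paper's implementation: the networks are untrained with random weights, the spectral layer is a simplified single-channel version (a full FNO layer also mixes channels and adds a pointwise linear path and a nonlinearity), and all names (`mlp`, `apply_mlp`, `deeponet`, `spectral_conv_1d`) and sizes are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)

def mlp(sizes):
    """Random weights for a small tanh MLP (untrained; illustration only)."""
    return [(rng.normal(0.0, 1.0 / np.sqrt(m), (m, n)), np.zeros(n))
            for m, n in zip(sizes[:-1], sizes[1:])]

def apply_mlp(params, x):
    """Apply the MLP: tanh on hidden layers, linear output layer."""
    for W, b in params[:-1]:
        x = np.tanh(x @ W + b)
    W, b = params[-1]
    return x @ W + b

def deeponet(branch, trunk, a_sensors, y):
    """G(a)(y) ~ sum_k b_k(a) * t_k(y): inner product of branch and trunk features."""
    b = apply_mlp(branch, a_sensors)   # (batch, p): encodes the input function
    t = apply_mlp(trunk, y)            # (n_query, p): encodes query coordinates
    return b @ t.T                     # (batch, n_query)

def spectral_conv_1d(v, weights, n_modes):
    """Core of an FNO-style layer: FFT, scale the lowest n_modes, inverse FFT."""
    v_hat = np.fft.rfft(v, axis=-1)
    out_hat = np.zeros_like(v_hat)     # higher modes are truncated to zero
    out_hat[..., :n_modes] = v_hat[..., :n_modes] * weights
    return np.fft.irfft(out_hat, n=v.shape[-1], axis=-1)

# Usage: 4 input functions sampled at 32 sensors, evaluated at 50 query points.
m, p = 32, 16
branch, trunk = mlp([m, 64, p]), mlp([1, 64, p])
a = rng.normal(size=(4, m))
y = np.linspace(0.0, 1.0, 50)[:, None]
u = deeponet(branch, trunk, a, y)          # shape (4, 50)

# Usage: spectral convolution on 4 periodic signals of length 64.
v = rng.normal(size=(4, 64))
w = rng.normal(size=8) + 1j * rng.normal(size=8)
out = spectral_conv_1d(v, w, n_modes=8)    # shape (4, 64)
```

Because the spectral layer multiplies each Fourier mode by a fixed weight, it commutes with periodic shifts of the input, which is the sense in which such operators are translation-invariant. The trunk network accepts arbitrary query coordinates, so the DeepONet output is not tied to a fixed mesh.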

The theoretical foundations are summarized, citing universal approximation theorems for operators and recent convergence guarantees that bound the L² error under sufficient training data. The paper also offers practical guidance: use Neural Operators for forward multi‑query simulations when abundant data are available; prefer PINNs for inverse problems or when physical constraints dominate. Remaining challenges include high‑dimensional parameter spaces, complex geometries, out‑of‑distribution generalization, and interpretability. The authors suggest future research directions such as hybrid PINN‑Operator models, multi‑fidelity training, physics‑based pre‑training, and real‑time control applications. Overall, the work establishes a unified framework for understanding and selecting parametric PDE solvers in the era of operator learning.
