Quantifying Data Contamination in Psychometric Evaluations of LLMs

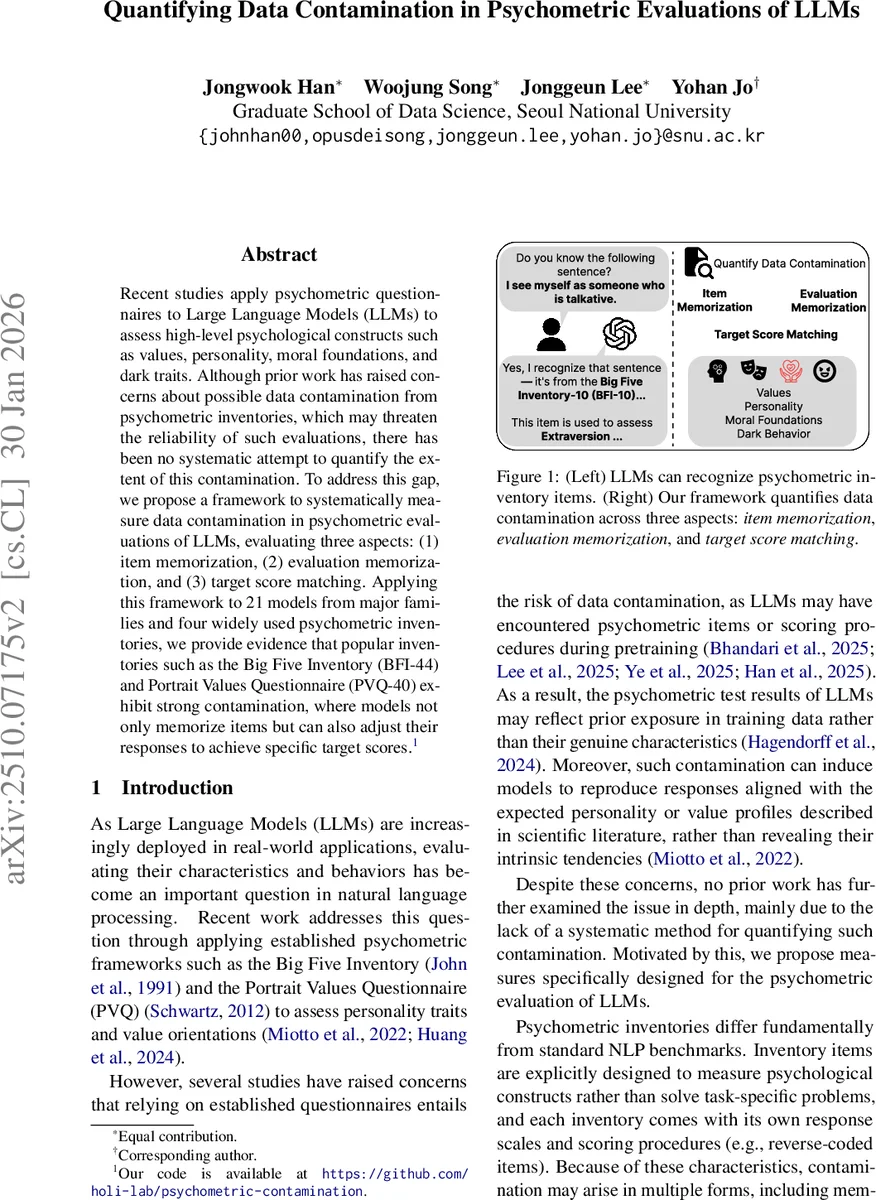

Recent studies apply psychometric questionnaires to Large Language Models (LLMs) to assess high-level psychological constructs such as values, personality, moral foundations, and dark traits. Although prior work has raised concerns about possible data contamination from psychometric inventories, which may threaten the reliability of such evaluations, there has been no systematic attempt to quantify the extent of this contamination. To address this gap, we propose a framework to systematically measure data contamination in psychometric evaluations of LLMs, evaluating three aspects: (1) item memorization, (2) evaluation memorization, and (3) target score matching. Applying this framework to 21 models from major families and four widely used psychometric inventories, we provide evidence that popular inventories such as the Big Five Inventory (BFI-44) and Portrait Values Questionnaire (PVQ-40) exhibit strong contamination, where models not only memorize items but can also adjust their responses to achieve specific target scores.

💡 Research Summary

This paper addresses a critical yet under‑explored problem in the emerging practice of evaluating large language models (LLMs) with human psychometric questionnaires: data contamination. The authors argue that because many widely used inventories (e.g., the Big Five Inventory, the Portrait Values Questionnaire) are publicly available on the internet, LLMs may have encountered the exact item wording, scoring rules, or even typical response patterns during pre‑training. If such exposure occurs, any subsequent “psychological” assessment of an LLM could reflect memorized knowledge rather than the model’s intrinsic tendencies, thereby undermining the validity of the evaluation.

To quantify this risk, the authors propose a systematic framework that decomposes contamination into three measurable dimensions: (1) Item Memorization, (2) Evaluation Memorization, and (3) Target Score Matching. For Item Memorization they design two tasks. The first, “Semantic Memorization,” asks a model to reconstruct an item given only its index and questionnaire name; similarity between the generated text and the original is measured with cosine similarity of embeddings. The second, “Key Information Memorization,” masks a manually annotated keyword in each item and evaluates whether the model can correctly recover it, reporting a success‑rate metric. Evaluation Memorization is split into (a) “Item‑Dimension Mapping,” where the model must assign each item to the correct psychological dimension (e.g., Openness, Agreeableness), evaluated with an averaged F1 score, and (b) “Option‑Score Mapping,” where the model must output the numeric score associated with each response option according to the official scoring protocol, evaluated with mean absolute error (MAE). Finally, Target Score Matching tests whether a model can deliberately produce responses that achieve a pre‑specified target score (minimum, mean, or maximum) on a given questionnaire; performance is again measured by MAE between the target and the achieved score.

The experimental suite includes 21 contemporary LLMs spanning several major families (OpenAI’s GPT‑4o, GPT‑5 series; Anthropic’s Claude‑3.5 and Claude‑4.5; Qwen‑3; GLM‑4.5; Gemini; Llama‑3.1, etc.) and four widely used inventories: the Big Five Inventory (BFI‑44), the Portrait Values Questionnaire (PVQ‑40), the Moral Foundations Questionnaire (MFQ), and the Short Dark Triad (SD‑3). All experiments are run with temperature 0.7, three repetitions per prompt, and results are reported with 95 % confidence intervals.

Key findings are as follows:

-

Ubiquitous Contamination – Across all three dimensions, every evaluated model shows evidence of contamination. Evaluation Memorization is near‑perfect: the average Item‑Dimension F1 score is 0.94, indicating that models reliably know which construct each item measures. High‑performing models (e.g., GPT‑5, Claude‑3.5) achieve MAE as low as 0.01 on Option‑Score Mapping, demonstrating internalization of scoring rules, including reverse‑coded items.

-

Item Memorization – Semantic Memorization yields an average cosine similarity of 0.31, while Key Information Memorization reaches an average success rate of 0.39. Both metrics exceed random baselines (≈0.32 similarity for generic dimension definitions; 10th‑percentile success rates of 0.16–0.41 across inventories), confirming that models retain substantial portions of the original wording and core semantics.

-

Target Score Matching – The most advanced models can deliberately steer their responses to hit specified scores, with MAE values around 0.1–0.2. This suggests that memorized knowledge of both item content and scoring procedures can be leveraged to produce “desired” psychometric profiles, raising serious concerns about the interpretability of any raw score obtained from an LLM.

-

Scaling Effects – Within a given model family, larger parameter counts generally improve performance on Option‑Score Mapping (lower MAE) and slightly boost Item‑Dimension F1, indicating that contamination intensifies with scale. However, Evaluation Memorization appears saturated early, implying a ceiling effect once a model has seen enough data to learn the scoring schema.

-

Inventory‑Specific Differences – BFI‑44 and PVQ‑40 exhibit markedly higher contamination than MFQ and SD‑3. The authors attribute this to the greater online availability and frequent citation of the former inventories in NLP research, which likely increased their presence in pre‑training corpora.

To aid practitioners, the paper proposes simple baselines for each contamination metric: (a) semantic similarity of generic dimension descriptions for Semantic Memorization; (b) 10th‑percentile keyword success rates as a low‑contamination reference; (c) random‑guess F1 (1/N) for Item‑Dimension Mapping; (d) analytically derived MAE for random option‑score assignment; and (e) expected MAE for random target‑score matching. By comparing observed scores against these baselines, researchers can gauge the severity of contamination in a given model‑inventory pair.

The authors conclude that psychometric evaluations of LLMs, as currently practiced, are vulnerable to substantial data contamination, especially for widely disseminated questionnaires. They recommend (i) reporting contamination metrics alongside any psychometric results, (ii) using less‑exposed or synthetically generated inventories when possible, and (iii) developing contamination‑aware evaluation protocols (e.g., prompt randomization, adversarial item generation). Future work should explore methods for de‑contaminating training data, constructing privacy‑preserving psychometric instruments, and establishing community standards for reporting and mitigating contamination.

In sum, this study provides the first systematic, quantitative evidence that LLMs not only memorize psychometric items but also understand and exploit their scoring mechanisms, thereby calling into question the validity of direct personality, values, or moral assessments of language models without explicit contamination controls.

Comments & Academic Discussion

Loading comments...

Leave a Comment