Thoughtbubbles: an Unsupervised Method for Parallel Thinking in Latent Space

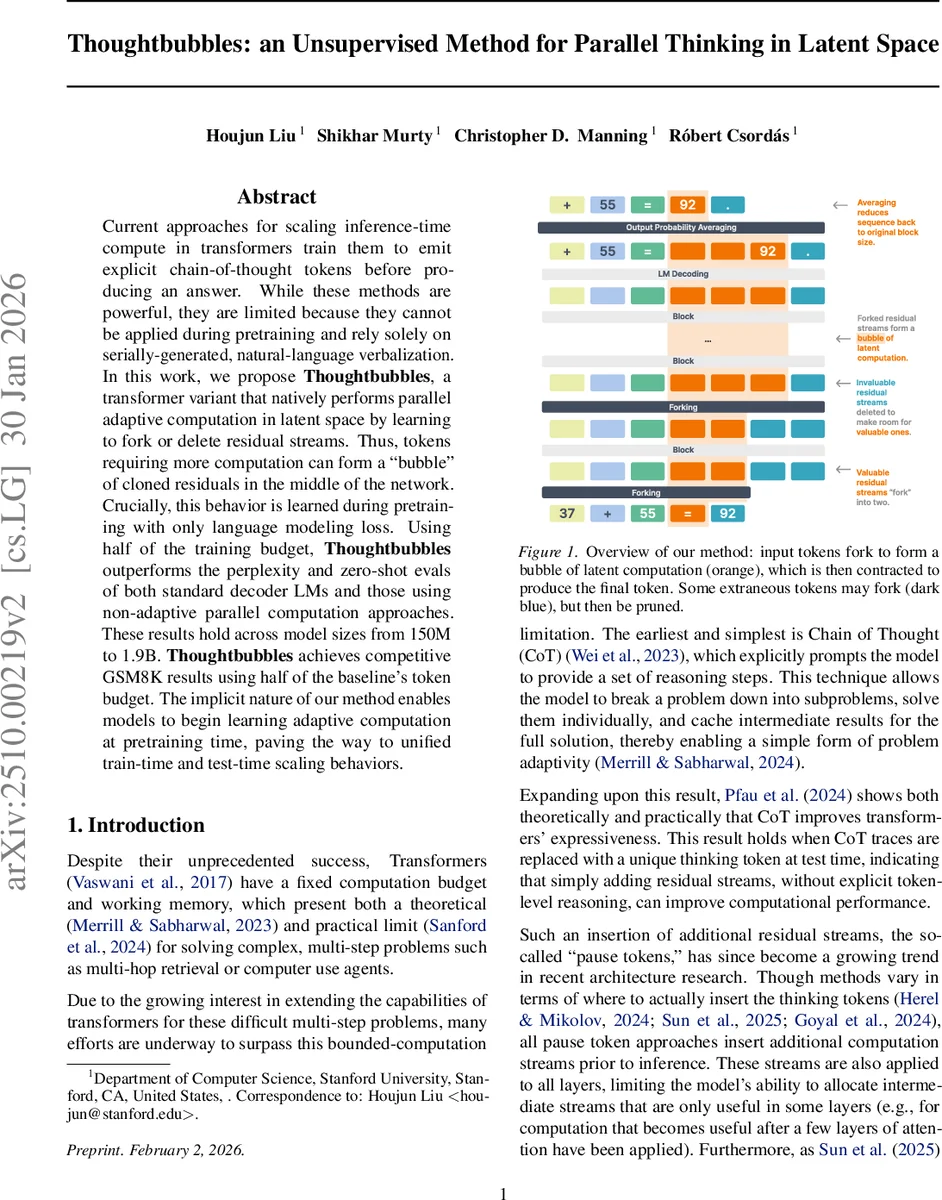

Current approaches for scaling inference-time compute in transformers train them to emit explicit chain-of-thought tokens before producing an answer. While these methods are powerful, they are limited because they cannot be applied during pretraining and rely solely on serially-generated, natural-language verbalization. In this work, we propose Thoughtbubbles, a transformer variant that natively performs parallel adaptive computation in latent space by learning to fork or delete residual streams. Thus, tokens requiring more computation can form a “bubble” of cloned residuals in the middle of the network. Crucially, this behavior is learned during pretraining with only language modeling loss. Using half of the training budget, Thoughtbubbles outperforms the perplexity and zero-shot evals of both standard decoder LMs and those using non-adaptive parallel computation approaches. These results hold across model sizes from 150M to 1.9B. Thoughtbubbles achieves competitive GSM8K results using half of the baseline’s token budget. The implicit nature of our method enables models to begin learning adaptive computation at pretraining time, paving the way to unified train-time and test-time scaling behaviors.

💡 Research Summary

The paper introduces Thoughtbubbles, a novel transformer architecture that enables adaptive parallel computation in latent space without any supervision beyond the standard language‑modeling loss. The key mechanism is a “forking” operation inserted between transformer blocks. At each forking layer, every residual stream receives two scores – a keep score and a fork score – produced by a small feed‑forward network followed by a sigmoid. These scores are multiplied by a cumulative score propagated from previous layers, yielding updated fork and keep probabilities in log‑space for numerical stability. A top‑k selection (with a configurable maximum block size κ) determines which streams are retained and which are duplicated; the original token’s keep score is forced to one, guaranteeing at least one surviving stream.

Forked streams receive a learned fork embedding and are positioned to the left of their parent token. Their positional embeddings are partially rotated proportionally to the number of forks, preserving relative order while reflecting the increased “thinking depth.” Crucially, both attention and residual updates are attenuated by the cumulative scores: the attention logits are biased with log P(k)ᵀ and the value vectors are element‑wise multiplied by P(k). This forces the model to rely more on high‑scoring streams and to assign higher scores to tokens that benefit from extra computation.

After the final transformer layer, each token may be represented by multiple streams. The model decodes each stream independently, then aggregates the resulting probability distributions using the cumulative scores as weights. The principled formulation uses a log‑sum‑exp trick for stability, but for large vocabularies (e.g., the 1.9 B‑parameter model) a cheaper approximation averages the streams first and then applies softmax.

Training follows the standard decoder‑only LM pipeline: cross‑entropy loss, AdamW optimizer, and a two‑stage schedule (40 B tokens warm‑up + constant LR, followed by a cosine decay fine‑tuning on a mix of MMLU, SmolTalk, and synthetic GSM8K data). Forking layers are placed after a few early blocks (layers 3, 7, 11) so that the forking decision sees sufficient context. Experiments span model sizes from 150 M to 1.9 B parameters. Across all scales, Thoughtbubbles trained on only half the token budget achieves lower perplexity and better zero‑shot performance on benchmarks such as MMLU, GSM8K, and various reasoning tasks compared to baseline decoder‑only models and to non‑adaptive parallel computation baselines.

A detailed analysis shows that the model allocates more forks to tokens with higher posterior entropy, indicating that it learns to focus extra computation on uncertain or difficult regions without any explicit supervision. Two inference regimes are explored: fixed forking (κ identical to training) and dynamic forking (κ scaled proportionally to the current sequence length). Dynamic forking, which better matches the training distribution, yields superior autoregressive generation quality and is used for all reported results.

In summary, Thoughtbubbles demonstrates that unsupervised, latent‑space parallelism can be learned during pretraining, eliminating the need for explicit chain‑of‑thought prompts at inference time. By learning to fork and prune residual streams, the model creates “bubbles” of extra computation for challenging tokens, merges them back, and achieves state‑of‑the‑art efficiency‑performance trade‑offs across a wide range of model sizes. This work opens a path toward unified training‑time and test‑time scaling strategies for large language models.

Comments & Academic Discussion

Loading comments...

Leave a Comment