SimulSense: Sense-Driven Interpreting for Efficient Simultaneous Speech Translation

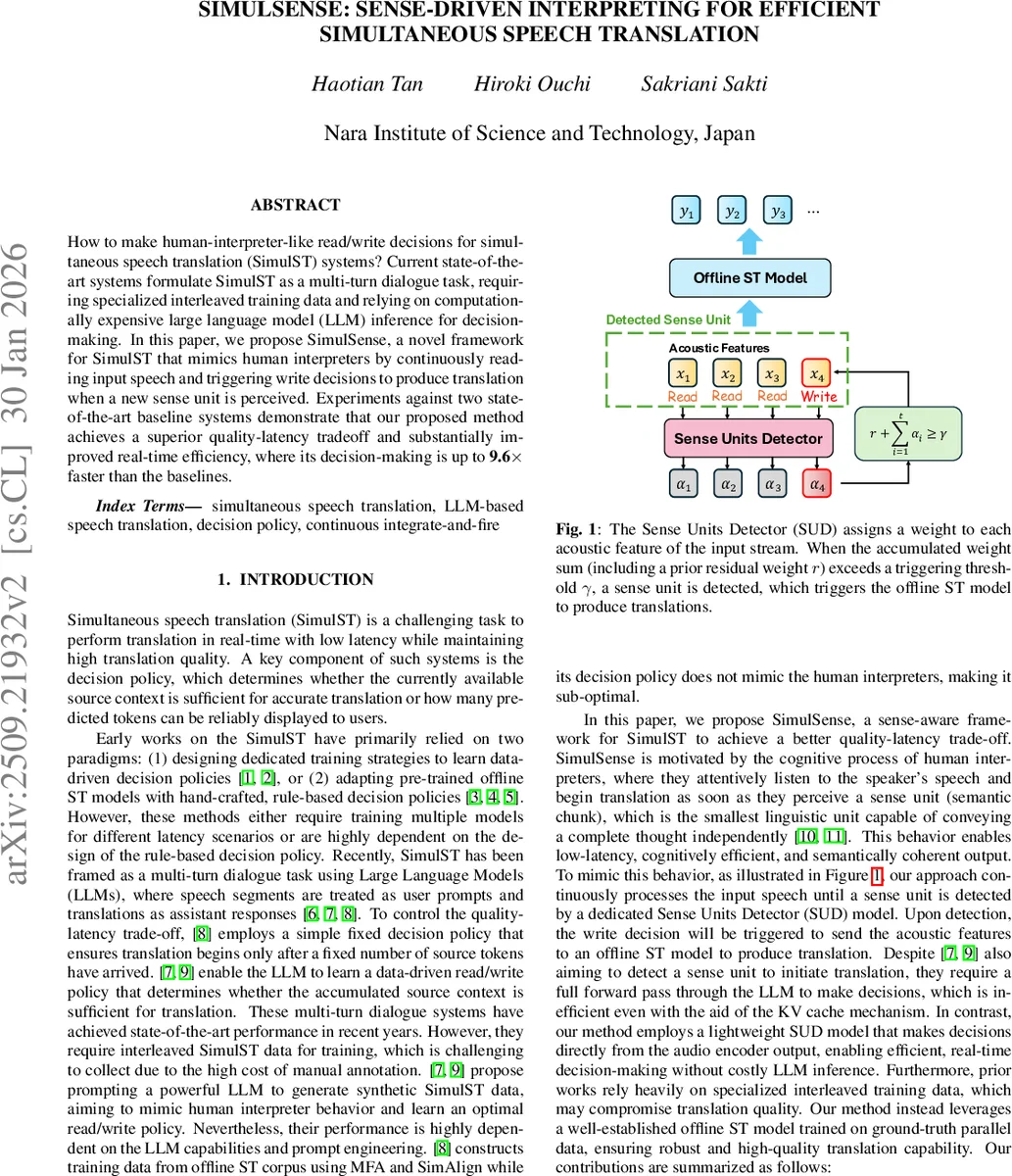

How to make human-interpreter-like read/write decisions for simultaneous speech translation (SimulST) systems? Current state-of-the-art systems formulate SimulST as a multi-turn dialogue task, requiring specialized interleaved training data and relying on computationally expensive large language model (LLM) inference for decision-making. In this paper, we propose SimulSense, a novel framework for SimulST that mimics human interpreters by continuously reading input speech and triggering write decisions to produce translation when a new sense unit is perceived. Experiments against two state-of-the-art baseline systems demonstrate that our proposed method achieves a superior quality-latency tradeoff and substantially improved real-time efficiency, where its decision-making is up to 9.6x faster than the baselines.

💡 Research Summary

SimulSense introduces a sense‑driven approach to simultaneous speech translation (SimulST) that emulates the read/write behavior of human interpreters. Traditional SimulST systems either train dedicated decision‑policy models for each latency setting or rely on handcrafted, rule‑based policies. More recent work reframes SimulST as a multi‑turn dialogue with large language models (LLMs), treating speech segments as user prompts and translations as assistant responses. While these LLM‑based methods achieve strong performance, they require interleaved SimulST training data—expensive to collect—and incur substantial inference cost because every read/write decision must be generated by the LLM.

SimulSense addresses these limitations with three core components. First, it defines a “sense unit” as the smallest semantic chunk that can convey a complete thought independently. To detect such units in real time, the authors design a lightweight Sense Units Detector (SUD) that operates directly on the acoustic encoder outputs. The SUD predicts two sets of weights (α and β) for each audio frame. The α‑weights are accumulated continuously; when their sum exceeds a fixed threshold γ (set to 1.0 during training), a sense‑unit boundary is triggered. This mechanism is inspired by Continuous Integrate‑and‑Fire (CIF) but is used only for boundary detection, not for generating output tokens. Because the SUD is a small convolution‑MLP network, its inference is orders of magnitude faster than running an LLM.

Second, the authors create training data for sense‑unit detection without manual annotation. They start from existing speech‑to‑text corpora (CoVoST‑2) and use a powerful LLM (Qwen‑3‑32B) to segment the source transcription into sense units under three latency tags (low, medium, high). Each sample is labeled with the appropriate tag, enabling the model to learn latency‑aware segmentation. To guide the SUD’s learning, they introduce a Sense‑Aware Transducer (SA‑T) training pipeline. In SA‑T, the α‑weights are constrained to produce exactly N‑1 boundaries for a sample containing N sense units, while the β‑weights are scaled so that the number of CIF‑integrated acoustic features matches the token count of each sense unit. Two quantitative losses (L_Qua1 and L_Qua2) enforce these constraints, and a standard cross‑entropy loss trains the transducer’s predictor. The SA‑T also includes a Conformer context block and a UGBP joiner, but its primary role is to provide a supervisory signal for the SUD.

Third, SimulSense couples the trained SUD with an off‑the‑shelf offline speech‑translation model. The offline model shares the same Whisper‑large‑v3 acoustic encoder as the SUD, and its decoder is a Qwen‑3‑8B LLM fine‑tuned with LoRA. During inference, the audio stream is processed chunk‑by‑chunk; the SUD monitors the accumulated α‑weights and, upon detecting a sense unit, immediately forwards the corresponding acoustic segment to the offline ST model, which generates the translation. This design eliminates the need for the LLM to make read/write decisions, dramatically reducing latency and computational load.

Experiments follow the IWSLT 2025 SimulST track setup, using CoVoST‑2 for training and ACL 60/60 for validation and testing across three language pairs: English→German, English→Japanese, and English→Chinese. The authors compare SimulSense against two strong baselines: NAIST‑2025 (an LLM‑based offline ST system with a Local Agreement policy) and Dialogue‑LLM (the multi‑turn dialogue approach). Evaluation metrics include BLEU for translation quality, LAAL for latency, and Real‑Time Factor (RTF) for computational efficiency. By varying the triggering threshold γ from 0.5 to 5.0, the system can trade off latency against quality.

Results show that SimulSense consistently outperforms both baselines on all language pairs. For En→De, it achieves up to 6.9 BLEU improvement at ~3.5 s latency and 11.4 BLEU at ~5.0 s latency. Similar gains are observed for En→Zh, and for En→Ja the system surpasses baselines once latency exceeds 3 s. Moreover, the decision module (audio encoder + SUD) is 3.0× faster than Dialogue‑LLM and 9.6× faster than NAIST‑2025, with an average inference time per decision of only 38.6 ms and an RTF of 0.016—substantially lower than the baselines (0.130 and 0.180). Ablation with different latency tags reveals that the tags mainly cap the maximum latency and have little impact on the overall quality‑latency curve, so a high‑latency tag is used by default.

Although the SA‑T itself exhibits a relatively high word error rate (67.7 % for the chosen SA‑T‑Small configuration), this does not degrade the final translation performance because the SUD learns to detect sense‑unit boundaries effectively even when transcription quality is low. This separation of boundary detection from transcription underscores the advantage of modular design.

In conclusion, SimulSense demonstrates that a sense‑driven, lightweight detector can replace costly LLM inference for read/write decisions in SimulST, achieving both superior quality‑latency trade‑offs and dramatic efficiency gains. The work opens avenues for further research on multilingual sense‑unit detection, more sophisticated latency‑aware tagging, and integration with other streaming translation architectures.

Comments & Academic Discussion

Loading comments...

Leave a Comment