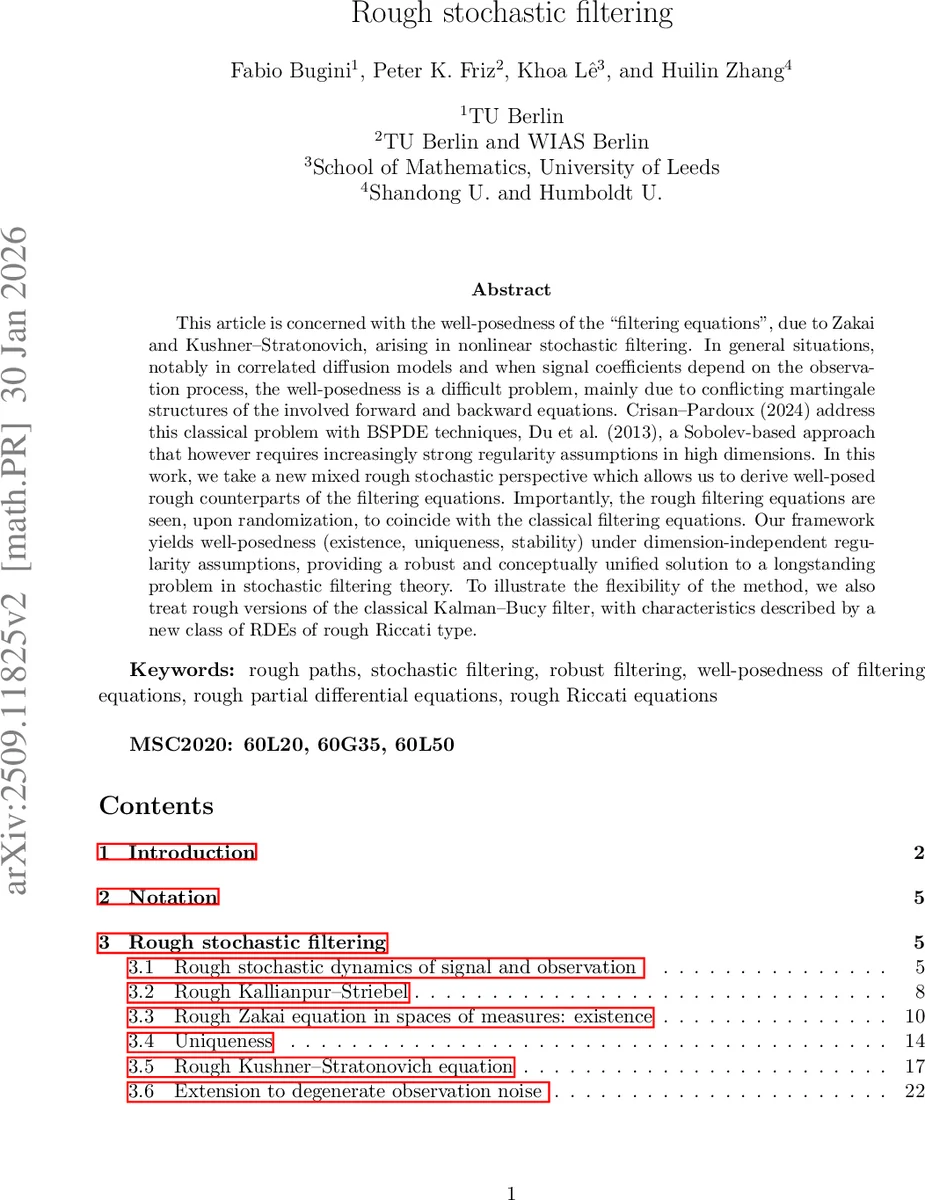

Rough stochastic filtering

This article is concerned with the well-posedness of the “filtering equations” of Zakai and Kushner-Stratonovich, which arise in nonlinear stochastic filtering. In general situations, notably in correlated diffusion models and when the signal coefficients depend on the observation process, well-posedness is a difficult problem, mainly because the forward and backward equations involved have conflicting martingale structures. Crisan and Pardoux (2024) address this classical problem with the BSPDE techniques of Du et al. (2013), a Sobolev-based approach that, however, requires increasingly strong regularity assumptions in high dimensions. In this work, we take a new mixed rough-stochastic perspective, which allows us to derive well-posed rough counterparts of the filtering equations. Importantly, upon randomization the rough filtering equations coincide with the classical filtering equations. Our framework yields well-posedness (existence, uniqueness, stability) under dimension-independent regularity assumptions, providing a robust and conceptually unified solution to a longstanding problem in stochastic filtering theory. To illustrate the flexibility of the method, we also treat rough versions of the classical Kalman-Bucy filter, whose characteristics are described by a new class of rough differential equations (RDEs) of Riccati type.
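For orientation, the classical (non-rough) Kalman-Bucy filter mentioned above fits in a few lines. This is our own illustration, not the authors' rough version. It assumes a scalar linear model dX = aX dt + s dB, dY = cX dt + dW with B and W independent, which is the uncorrelated case and not the correlated setting treated in the paper, together with a plain Euler discretization. The function name `kalman_bucy` and the parameters a, c, s are hypothetical. In this baseline the conditional variance P solves the deterministic Riccati ODE dP/dt = 2aP + s² − c²P², which does not involve Y at all. The abstract indicates that in the rough setting the corresponding characteristics are instead governed by Riccati-type RDEs.

```python
import numpy as np

def kalman_bucy(Y, dt, a, c, s, m0, P0):
    """Euler scheme for the scalar Kalman-Bucy filter of the (assumed) model
         dX = a X dt + s dB,   dY = c X dt + dW,   B and W independent.
    Returns the conditional mean m and conditional variance P on the grid."""
    n = len(Y) - 1
    m = np.empty(n + 1)
    P = np.empty(n + 1)
    m[0], P[0] = m0, P0
    for k in range(n):
        dY = Y[k + 1] - Y[k]
        # mean: drift plus gain P*c times the innovation dY - c*m*dt
        m[k + 1] = m[k] + a * m[k] * dt + P[k] * c * (dY - c * m[k] * dt)
        # variance: Riccati ODE dP/dt = 2aP + s^2 - c^2 P^2 (independent of Y here)
        P[k + 1] = P[k] + (2 * a * P[k] + s ** 2 - (c * P[k]) ** 2) * dt
    return m, P

# Simulate a signal and its observation, then filter.
rng = np.random.default_rng(0)
a, c, s = -1.0, 1.0, 1.0
T, n = 10.0, 10_000
dt = T / n
X = np.zeros(n + 1)
Y = np.zeros(n + 1)
for k in range(n):
    X[k + 1] = X[k] + a * X[k] * dt + s * rng.normal(0.0, np.sqrt(dt))
    Y[k + 1] = Y[k] + c * X[k] * dt + rng.normal(0.0, np.sqrt(dt))

m, P = kalman_bucy(Y, dt, a, c, s, m0=0.0, P0=1.0)
P_inf = (a + np.sqrt(a ** 2 + c ** 2 * s ** 2)) / c ** 2  # stable root of the Riccati equation
print("final variance:", P[-1], "stationary variance:", P_inf)
```

With a = −1 and c = s = 1, the variance settles at the stable root P∞ = (a + √(a² + c²s²))/c² = √2 − 1 of 2aP + s² − c²P² = 0.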

💡 Research Summary

The paper tackles the long-standing problem of establishing well-posedness (existence, uniqueness, and stability) for the nonlinear stochastic filtering equations of Zakai and Kushner–Stratonovich. It focuses on the most challenging settings, where the observation and signal processes are correlated and the signal coefficients may depend on the observation. The most recent treatment of this general case (Crisan–Pardoux, 2024) relies on backward stochastic partial differential equations (BSPDEs) within the Sobolev framework of Du et al. (2013). The regularity requirements of that approach become increasingly strong as the signal dimension grows.

The authors introduce a fundamentally different perspective: they replace the stochastic observation process by a deterministic rough path. Concretely, the observation Y is lifted to a level-2 rough path (Y,𝔜) in the α-Hölder rough path space C^{0,α}_1. The second component 𝔜 plays the role of the iterated integrals ∫_s^t (Y_r − Y_s) ⊗ dY_r. Its antisymmetric part is the Lévy area, and comparing its symmetric part with ½ δY_{s,t} ⊗ δY_{s,t} defines the bracket of Y, which vanishes for geometric rough paths. By treating Y as a fixed rough input, the filtering equations become rough partial differential equations (RPDEs) with a deterministic driver. This “removal of probability” from the observation eliminates the incompatibility between forward and backward martingale structures that traditionally obstructs duality arguments.
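The algebra of the level-2 lift can be checked numerically. The sketch below is our own and assumes a path sampled on a grid. `second_level` is a hypothetical helper that computes 𝔜_{s,t} as left-point sums ∑_k (Y_k − Y_s) ⊗ ΔY_k, which gives an Itô-type lift. Adding ½∑_k ΔY_k ⊗ ΔY_k instead gives the geometric lift of the piecewise-linear interpolation. Both lifts satisfy Chen's relation 𝔜_{s,t} = 𝔜_{s,u} + 𝔜_{u,t} + δY_{s,u} ⊗ δY_{u,t} and have the same antisymmetric part, the Lévy area. They differ only in the symmetric part, which for the geometric lift equals ½ δY_{s,t} ⊗ δY_{s,t} exactly.

```python
import numpy as np

def second_level(path, s, t, ito=False):
    """Level-2 component Y_{s,t} of a sampled path (array of shape (n + 1, d))
    between grid indices s <= t.

    Itô-type lift:  sum_k (Y_k - Y_s) ⊗ ΔY_k   (left-point sums)
    Geometric lift: the same sum plus 0.5 * sum_k ΔY_k ⊗ ΔY_k
                    (exact iterated integral of the piecewise-linear path)
    """
    incs = np.diff(path[s:t + 1], axis=0)   # ΔY_k = Y_{k+1} - Y_k, k = s..t-1
    rel = path[s:t] - path[s]               # Y_k - Y_s at the left endpoints
    Yst = rel.T @ incs                      # entry (i, j) = sum_k (Y_k - Y_s)^i ΔY_k^j
    if not ito:
        Yst = Yst + 0.5 * (incs.T @ incs)
    return Yst

# A sampled 2-dimensional Brownian path on [0, 1].
rng = np.random.default_rng(0)
n, d = 1000, 2
path = np.vstack([np.zeros(d),
                  np.cumsum(rng.normal(0.0, np.sqrt(1.0 / n), (n, d)), axis=0)])

s, u, t = 100, 400, 900
inc = lambda i, j: path[j] - path[i]

for ito in (False, True):
    lhs = second_level(path, s, t, ito)
    rhs = (second_level(path, s, u, ito) + second_level(path, u, t, ito)
           + np.outer(inc(s, u), inc(u, t)))
    print("ito" if ito else "geometric", "Chen relation holds:", np.allclose(lhs, rhs))

G = second_level(path, s, t)             # geometric lift
I = second_level(path, s, t, ito=True)   # Itô-type lift
levy_area = 0.5 * (G - G.T)              # antisymmetric part, the same for both lifts
print("Lévy area A_{s,t}:", levy_area[0, 1])
```

The two lifts differ by the symmetric term ½∑ΔY_k ⊗ ΔY_k, a discrete bracket. This is the sense in which the second level also carries the bracket of Y.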

The main contributions are as follows.

- Rough Signal and Likelihood Dynamics. For any fixed rough observation Y, the authors define a rough signal X^Y and a rough likelihood Z^Y via the rough stochastic differential equations

  dX^Y_t = b(t,X^Y_t;Y) dt + σ(t,X^Y_t;Y) dB_t + f(t,X^Y_t;Y) dY_t,

  dZ^Y_t = Z^Y_t h(t,X^Y_t;Y) dY_t,  Z^Y_0 = 1,

  where B is a standard Brownian motion independent of Y. The coefficients are bounded and Lipschitz in the state variable, uniformly over all admissible Y, and f has a second-order derivative that is Hölder-continuous with exponent β > 1−α. None of these assumptions depends on the dimension of the signal.

- Rough Conditional Expectations. The authors prove rough analogues of the conditional Feynman–Kac and Kallianpur–Striebel formulas:

  μ^Y_t(φ) = 𝔼
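To make the rough signal and likelihood concrete, here is a small simulation sketch. It is our own illustration, not the authors' construction, and it rests on several assumptions. The signal and observation are scalar, the coefficients depend only on the state, and the observation carries the Itô-type second level 𝔜_{k,k+1} = ½((ΔY_k)² − Δt), the natural choice when Y is a sampled Brownian path. The time stepping is a simple Davie/Milstein-type scheme. The function `simulate_rough_signal` and all of its arguments are hypothetical names. With Y frozen, only B is random. The Z-weighted average of φ(X^Y_T) is then a Monte Carlo counterpart, along that single observation path, of the classical normalized Kallianpur–Striebel ratio 𝔼[φ(X_T)Z_T | Y] / 𝔼[Z_T | Y].

```python
import numpy as np

def simulate_rough_signal(Y, dt, x0, b, sigma, f, df, h, dh, n_paths, rng):
    """Hypothetical Davie/Milstein-type scheme for the scalar system
         dX = b(X) dt + sigma(X) dB + f(X) dY,   dZ = Z h(X) dY,   Z_0 = 1,
    driven by ONE fixed observation path Y (length n + 1) and n_paths
    independent Brownian motions B. df and dh are the derivatives of f and h."""
    n = len(Y) - 1
    X = np.full(n_paths, float(x0))
    Z = np.ones(n_paths)
    for k in range(n):
        dY = Y[k + 1] - Y[k]
        # ASSUMPTION: Itô-type second level of a scalar observation with bracket t
        YY = 0.5 * (dY ** 2 - dt)
        dB = rng.normal(0.0, np.sqrt(dt), n_paths)
        fx, hx = f(X), h(X)
        # Second-order terms: derivative of each dY-integrand along the
        # Y-derivative (f(X), Z h(X)) of the pair (X, Z), times YY.
        X_next = X + b(X) * dt + sigma(X) * dB + fx * dY + df(X) * fx * YY
        Z = Z + Z * hx * dY + Z * (dh(X) * fx + hx ** 2) * YY
        X = X_next
    return X, Z

# One frozen observation path (a sampled Brownian path), many copies of the signal noise B.
rng = np.random.default_rng(1)
n, T = 1000, 1.0
dt = T / n
Y = np.concatenate([[0.0], np.cumsum(rng.normal(0.0, np.sqrt(dt), n))])

X, Z = simulate_rough_signal(
    Y, dt, x0=0.0,
    b=lambda x: -x, sigma=lambda x: 0.5,
    f=lambda x: 0.3, df=lambda x: 0.0,
    h=lambda x: x, dh=lambda x: 1.0,
    n_paths=5000, rng=rng,
)

# Z-weighted average of phi(X_T) with phi(x) = x: Monte Carlo version of the
# normalized Kallianpur-Striebel ratio along this single observation path.
pi_hat = np.sum(X * Z) / np.sum(Z)
print("estimated conditional mean of X_T:", pi_hat)
```

With h ≡ c and f ≡ 0, the likelihood no longer depends on X, and the recursion reproduces the Itô exponential exp(cY_T − ½c²T) up to discretization error. In this sketch, that is why the Itô-type lift matches the classical Girsanov likelihood once Y is randomized as a Brownian path.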
