A Unified Evaluation Framework for Multi-Annotator Tendency Learning

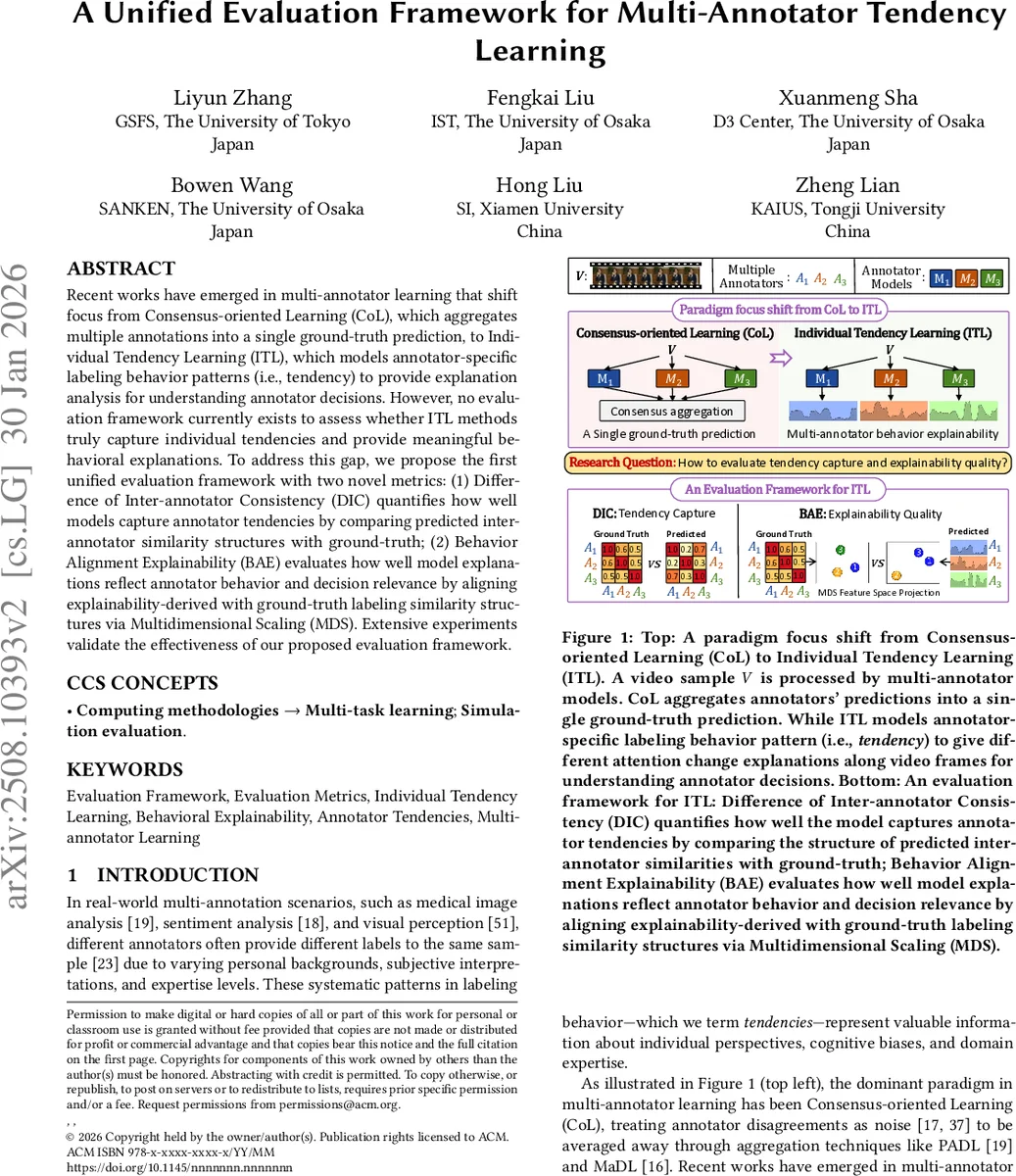

Recent works have emerged in multi-annotator learning that shift focus from Consensus-oriented Learning (CoL), which aggregates multiple annotations into a single ground-truth prediction, to Individual Tendency Learning (ITL), which models annotator-specific labeling behavior patterns (i.e., tendency) to provide explanation analysis for understanding annotator decisions. However, no evaluation framework currently exists to assess whether ITL methods truly capture individual tendencies and provide meaningful behavioral explanations. To address this gap, we propose the first unified evaluation framework with two novel metrics: (1) Difference of Inter-annotator Consistency (DIC) quantifies how well models capture annotator tendencies by comparing predicted inter-annotator similarity structures with ground-truth; (2) Behavior Alignment Explainability (BAE) evaluates how well model explanations reflect annotator behavior and decision relevance by aligning explainability-derived with ground-truth labeling similarity structures via Multidimensional Scaling (MDS). Extensive experiments validate the effectiveness of our proposed evaluation framework.

💡 Research Summary

The paper addresses a critical gap in the emerging field of Individual Tendency Learning (ITL), which aims to model the distinct labeling behaviors of each annotator rather than aggregating them into a single consensus label as done in traditional Consensus‑oriented Learning (CoL). While recent ITL architectures such as QuMAB and TAX have introduced mechanisms to capture annotator‑specific patterns and provide attention‑based explanations, there has been no principled way to evaluate whether these models truly preserve annotator tendencies and generate meaningful behavioral explanations.

To fill this void, the authors propose the first unified evaluation framework for ITL, consisting of two novel metrics:

-

Difference of Inter‑annotator Consistency (DIC) – DIC quantifies how well a model reproduces the relational structure among annotators. For each pair of annotators (k, l) the ground‑truth inter‑annotator consistency mₖₗ is computed using Cohen’s κ over their overlapping labeled samples. The model’s predicted consistency m′ₖₗ is obtained analogously from the model’s predicted labels. The two M × M matrices (M = number of annotators) are compared via a normalized Frobenius norm:

DIC = ‖M − M′‖_F / ‖M‖_F.

A lower DIC indicates that the model’s predictions retain the same patterns of agreement and disagreement as the real annotations. The formulation is agnostic to the specific similarity measure and can be extended to ordinal or continuous labels by replacing κ with appropriate correlation metrics. -

Behavior Alignment Explainability (BAE) – BAE assesses whether the explanations produced by an ITL model reflect the true behavioral relationships among annotators. Two complementary levels are considered:

Feature‑level: For each annotator i, the model’s penultimate‑layer representation is averaged across that annotator’s samples, yielding F̄_i. Pairwise cosine similarity between F̄_i and F̄_j forms a similarity matrix S_feature.

Region‑level: For attention‑based models, average attention maps Ā_i are computed and cosine‑similarity yields S_region.

Both S_feature and S_region are projected into a 2‑D space using Multidimensional Scaling (MDS). The projected structures are then aligned with the ground‑truth behavioral similarity matrix S_true (also κ‑based) using correlation or Procrustes analysis. Higher BAE scores mean that the explanation space mirrors the true annotator relationships, indicating that the model’s explanations are behaviorally faithful.

The framework is validated on several benchmark datasets spanning images, videos, and text, and on a range of models including CoL baselines (PADL, MaDL) and ITL methods (QuMAB, TAX). Results show that CoL models achieve high DIC values (≈0.5–0.6) and low BAE scores, confirming that they fail to preserve inter‑annotator structure or produce behaviorally aligned explanations. In contrast, ITL models obtain substantially lower DIC (≈0.12–0.28) and higher BAE (feature‑level 0.68–0.81, region‑level up to 0.84 for TAX), demonstrating effective tendency capture and explanation quality. Notably, some models exhibit low DIC but also low BAE, revealing that preserving annotator consistency does not automatically guarantee interpretable explanations—a nuance that the two metrics together can uncover.

The authors discuss limitations: (i) reliance on κ makes DIC sensitive to label imbalance; (ii) MDS dimensionality reduction may introduce distortion, affecting BAE; (iii) computational cost grows quadratically with the number of annotators. Future work is suggested to explore weighted κ variants, alternative embedding techniques (t‑SNE, UMAP), and matrix approximation methods to improve scalability.

In summary, this work provides the first systematic, quantitative toolkit for assessing both the fidelity of annotator‑specific tendency modeling and the behavioral relevance of explanations in multi‑annotator learning. By establishing DIC and BAE as standard evaluation criteria, the paper paves the way for more rigorous comparison, development, and deployment of ITL systems across diverse domains.

Comments & Academic Discussion

Loading comments...

Leave a Comment