ARC: Argument Representation and Coverage Analysis for Zero-Shot Long Document Summarization with Instruction Following LLMs

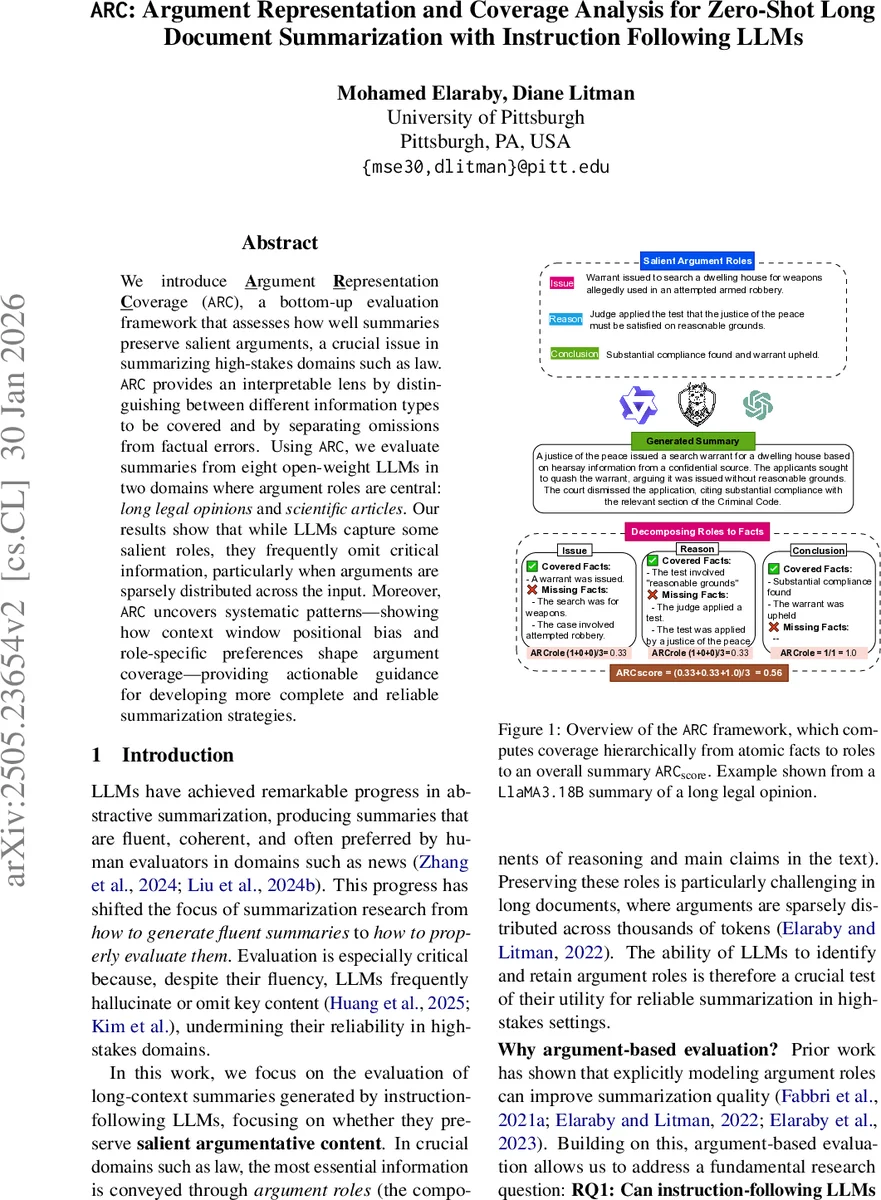

We introduce Argument Representation Coverage (ARC), a bottom-up evaluation framework that assesses how well summaries preserve salient arguments, a crucial issue in summarizing high-stakes domains such as law. ARC provides an interpretable lens by distinguishing between different information types to be covered and by separating omissions from factual errors. Using ARC, we evaluate summaries from eight open-weight large language models in two domains where argument roles are central: long legal opinions and scientific articles. Our results show that while these models capture some salient roles, they frequently omit critical information, particularly when arguments are sparsely distributed across the input. Moreover, ARC uncovers systematic patterns, showing how context window positional bias and role-specific preferences shape argument coverage, and provides actionable guidance for developing more complete and reliable summarization strategies.

💡 Research Summary

The paper introduces Argument Representation Coverage (ARC), a novel, bottom‑up evaluation framework designed to measure how well long‑form summaries preserve salient argumentative content, a critical requirement in high‑stakes domains such as law and scientific reporting. ARC operates in two stages. First, each annotated argumentative role (e.g., Issue, Reason, Conclusion in legal texts; Own Claim, Background Claim, Data in scientific articles) is automatically decomposed into a set of atomic facts using a strong language model (GPT‑4‑o). An entailment check filters out over‑generated facts, ensuring a concise, faithful fact set for each role. Second, a separate LLM judge evaluates whether each atomic fact is correctly covered in a generated summary. The judge returns a binary indicator (covered vs. not covered) together with a categorical error label (no error, missing, non‑factual), thereby distinguishing omissions from hallucinations. Role‑level coverage (ARC_role) is computed as the average of these binary indicators across all facts belonging to a role, and the overall summary coverage (ARC_score) is the mean of ARC_role values across all salient roles.

The authors apply ARC to two benchmark corpora that provide both argumentative role annotations and reference summaries: CANLII, a collection of 1,049 Canadian legal opinions annotated with the IRC scheme (Issue, Reason, Conclusion), and DRI, a set of 40 computer‑graphics scientific articles enriched with argument‑role annotations (Own Claim, Background Claim, Data). In CANLII, only 7.66 % of the source tokens are labeled with argumentative roles, yet these sentences constitute 66.51 % of the reference summaries, illustrating a “haystack” problem where models must locate sparse but crucial information. In DRI, argumentative content is more uniformly distributed (≈ 74 % of tokens), shifting the challenge toward selective compression.

Eight open‑weight instruction‑following LLMs (including Qwen‑2.5‑7B‑Instruct, Qwen‑2.5‑14B‑Instruct, Llama‑3.1‑8B‑Instruct, Mistral‑8B‑Instruct, QwQ‑32B‑AwQ, DeepSeek‑R1‑Distill‑Qwen‑14B) and two proprietary models (GPT‑4‑o, GPT‑4‑o‑mini) are evaluated. Correlation with a small expert‑annotated gold standard (87 legal opinion summaries, κ = 0.605) shows that ARC_score achieves the highest Kendall’s τ (0.465) and Pearson’s ρ (0.593) among all tested metrics, surpassing traditional ROUGE‑1/2/L, BERTScore, and even entailment‑based SummaC variants. Notably, FactScore—a prior fact‑level LLM‑judge metric—produces lower correlations, highlighting ARC’s advantage of aggregating fact judgments into interpretable role‑level scores.

Beyond overall performance, ARC enables fine‑grained diagnostic analysis. Role‑specific coverage reveals systematic biases: “Issue” statements attain an average ARC_role of 0.78, whereas “Reason” statements drop to 0.62, indicating that models more readily preserve problem statements and conclusions than the supporting logical chain. Positional bias analysis confirms a U‑shaped effect: facts located near the beginning or end of a document are 1.8 × more likely to be covered than those in the middle, mirroring findings from prior long‑document summarization work. A derived “bias score” quantifies the preference for certain roles, showing that all models consistently favor Conclusions and Issues while under‑representing Reasons, especially in the legal domain.

The paper also details the full reproducibility package: code for role decomposition, LLM‑judge prompting, and aggregation scripts are released on GitHub. The authors discuss limitations, such as reliance on a single LLM for fact decomposition and the need for human‑validated fact sets in new domains, and propose future extensions to multimodal or multilingual settings.

In summary, ARC provides a transparent, hierarchical metric that separates omission from hallucination, quantifies role‑level fidelity, and uncovers systematic biases in LLM‑generated long‑form summaries. By doing so, it offers actionable insights for improving model alignment, prompt engineering, and post‑processing strategies, ultimately advancing the reliability of automatic summarization in domains where argumentative completeness is non‑negotiable.

Comments & Academic Discussion

Loading comments...

Leave a Comment