Rethinking the Sampling Criteria in Reinforcement Learning for LLM Reasoning: A Competence-Difficulty Alignment Perspective

Reinforcement learning (RL) shows promise for enhancing the reasoning abilities of large language models, but it is hard to scale because of low sample efficiency during the rollout phase. Existing methods try to improve efficiency by scheduling problems according to their difficulty. However, these approaches suffer from unstable and biased estimates of problem difficulty, and they fail to capture the alignment between model competence and problem difficulty during RL training, which leads to suboptimal results. To address these limitations, this paper introduces $\textbf{C}$ompetence-$\textbf{D}$ifficulty $\textbf{A}$lignment $\textbf{S}$ampling ($\textbf{CDAS}$), which obtains accurate and stable estimates of problem difficulty by aggregating each problem's historical performance discrepancies. CDAS then quantifies model competence and uses a fixed-point system to adaptively select problems whose difficulty is aligned with the model's current competence. Experiments on a range of challenging mathematical benchmarks show that CDAS improves both accuracy and efficiency: it attains the highest average accuracy among the compared baselines and is substantially faster than Dynamic Sampling, a competitive strategy from DAPO, which is 2.33 times slower than CDAS.
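
Why aggregating history stabilizes a difficulty estimate can be shown with a small simulation. This is a minimal sketch and not CDAS's estimator: the abstract does not spell out the form of the "performance discrepancies", so the code simply averages raw per-step pass rates for a single problem. The success probability (0.3), the 8 rollouts per step, the 200 steps, and the 50-step warm-up are all hypothetical values chosen for illustration.

```python
import random
import statistics


def simulate_pass_rates(p_true: float, group_size: int, steps: int, seed: int = 0) -> list[float]:
    """Single-step pass rate of one problem: fraction of `group_size` rollouts that succeed.

    Assumes (hypothetically) a fixed success probability `p_true` at every step.
    """
    rng = random.Random(seed)
    rates = []
    for _ in range(steps):
        successes = sum(rng.random() < p_true for _ in range(group_size))
        rates.append(successes / group_size)
    return rates


def running_mean(values: list[float]) -> list[float]:
    """Historical estimate at step n: mean of all per-step observations up to step n."""
    out, total = [], 0.0
    for n, v in enumerate(values, start=1):
        total += v
        out.append(total / n)
    return out


single_step = simulate_pass_rates(p_true=0.3, group_size=8, steps=200)
historical = running_mean(single_step)

# Compare how much each estimate fluctuates after a (hypothetical) warm-up period.
warmup = 50
print(f"single-step std: {statistics.pstdev(single_step[warmup:]):.3f}")
print(f"historical  std: {statistics.pstdev(historical[warmup:]):.3f}")
```

In real training the model changes between steps, so the true pass rate drifts; this simulation isolates only the sampling noise of a single-step estimate, which is the instability that a history-based estimate smooths out.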

💡 Research Summary

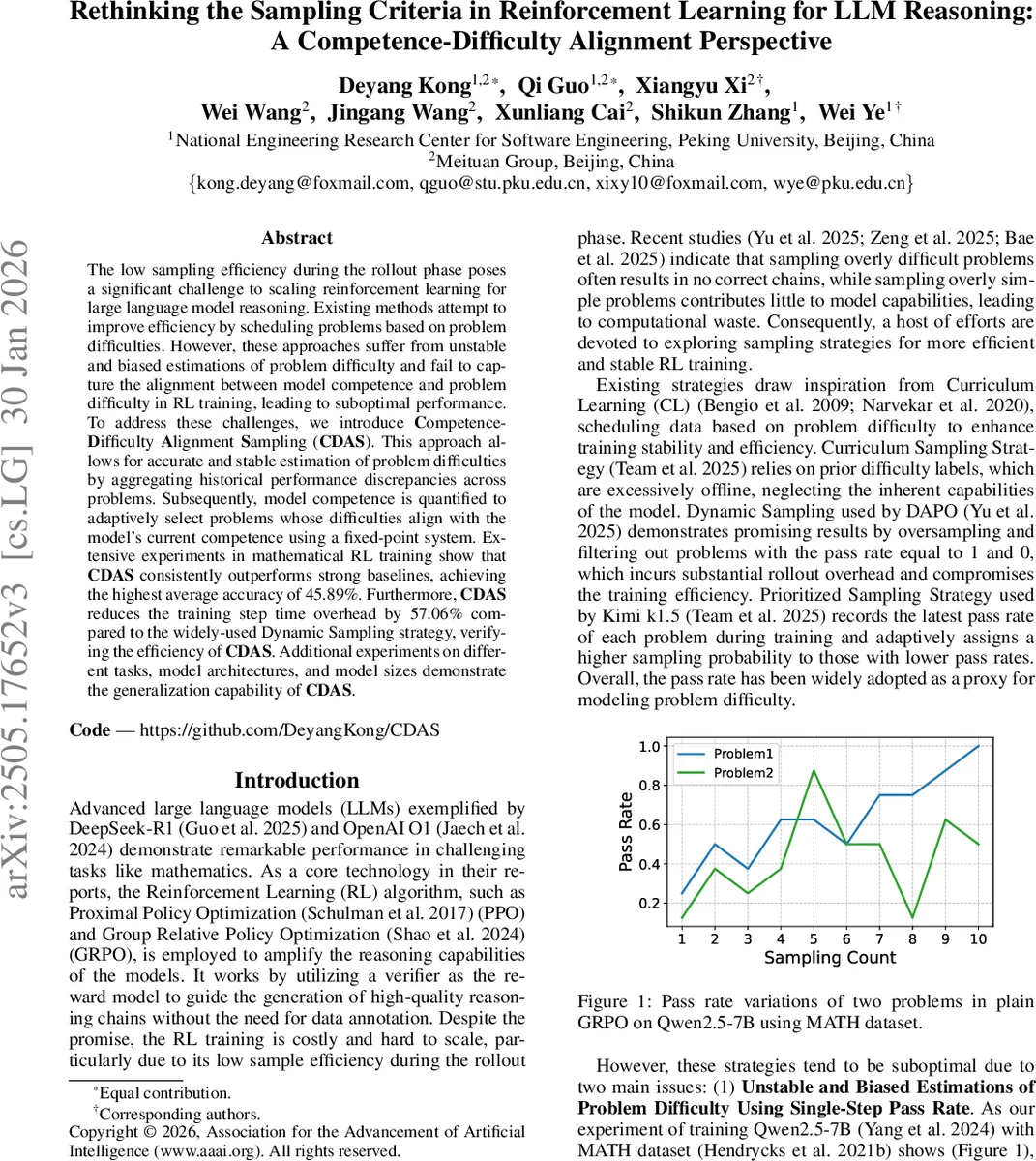

The paper addresses a critical bottleneck in reinforcement learning (RL) based fine-tuning of large language models (LLMs) for reasoning tasks: the low sample efficiency of the rollout phase. Existing curriculum-style and dynamic sampling methods rely on a single-step pass rate as a proxy for problem difficulty. The authors show that this proxy is both unstable, fluctuating substantially during training, and biased, so that problems are mis-ranked by hardness. Moreover, most prior approaches simply prioritize harder problems and ignore the need to match problem difficulty to the model's current competence. This results in many zero-gradient updates on overly hard samples or wasted computation on trivially easy ones.
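
The zero-gradient problem is easy to see under group-normalized advantages. The following minimal sketch assumes GRPO-style advantages, (r - group mean) / (group std + eps), with binary correctness rewards. It uses the population standard deviation, though implementations differ on this detail, and the value of `eps` is arbitrary. A group in which every rollout fails, or every rollout succeeds, receives zero advantage for all of its samples and therefore contributes no policy-gradient signal. This is the same condition that DAPO's Dynamic Sampling filters out by discarding groups whose accuracy is exactly 0 or 1.

```python
import statistics


def group_advantages(rewards: list[float], eps: float = 1e-6) -> list[float]:
    """GRPO-style advantage for one group of rollouts: (r - mean) / (std + eps)."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)  # population std; some implementations use sample std
    return [(r - mean) / (std + eps) for r in rewards]


def is_informative(rewards: list[float]) -> bool:
    """A group yields a non-zero gradient signal only if its rewards are not all equal."""
    return len(set(rewards)) > 1


too_hard = [0.0] * 8  # every rollout fails
too_easy = [1.0] * 8  # every rollout succeeds
aligned = [1.0, 0.0, 1.0, 0.0, 0.0, 1.0, 0.0, 1.0]  # mixed outcomes

for name, rewards in [("too hard", too_hard), ("too easy", too_easy), ("aligned", aligned)]:
    adv = group_advantages(rewards)
    print(name, is_informative(rewards), [round(a, 2) for a in adv])
```

Only the mixed group produces non-zero advantages, which is why sampling problems near the model's competence level, where outcomes are neither all-fail nor all-succeed, can make rollouts more useful.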

To overcome these issues, the authors propose Competence‑Difficulty Alignment Sampling (CDAS). CDAS introduces two formally defined quantities:

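- Model competence ($C_n$): the negative expectation of problem difficulty over the whole dataset at training step $n$, i.e., $C_n = -\mathbb{E}_{x}\left[D_n(x)\right]$, where $D_n(x)$ denotes the estimated difficulty of problem $x$ at step $n$.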
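
The competence definition translates directly into code. This minimal sketch assumes the expectation is a uniform mean over every problem in the dataset. The difficulty values and problem IDs are hypothetical and in arbitrary units. The sketch leaves out the difficulty update and the fixed-point selection step because their exact form is not given in this excerpt.

```python
def model_competence(difficulty: dict[str, float]) -> float:
    """C_n = -E_x[D_n(x)]: negative mean of the current per-problem difficulty estimates.

    Assumes a uniform expectation over every problem in the dataset.
    """
    if not difficulty:
        raise ValueError("difficulty table is empty")
    return -sum(difficulty.values()) / len(difficulty)


# Hypothetical difficulty estimates at two training steps (arbitrary units).
step_10 = {"p1": 0.8, "p2": 0.2, "p3": -0.4, "p4": 0.6}
step_50 = {"p1": 0.4, "p2": -0.2, "p3": -0.6, "p4": 0.0}

print(model_competence(step_10))
print(model_competence(step_50))
```

Because competence is the negated mean difficulty, it rises whenever the dataset-wide difficulty estimates fall, which is what happens as the model learns to solve more problems.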
