YOLOE-26: Integrating YOLO26 with YOLOE for Real-Time Open-Vocabulary Instance Segmentation

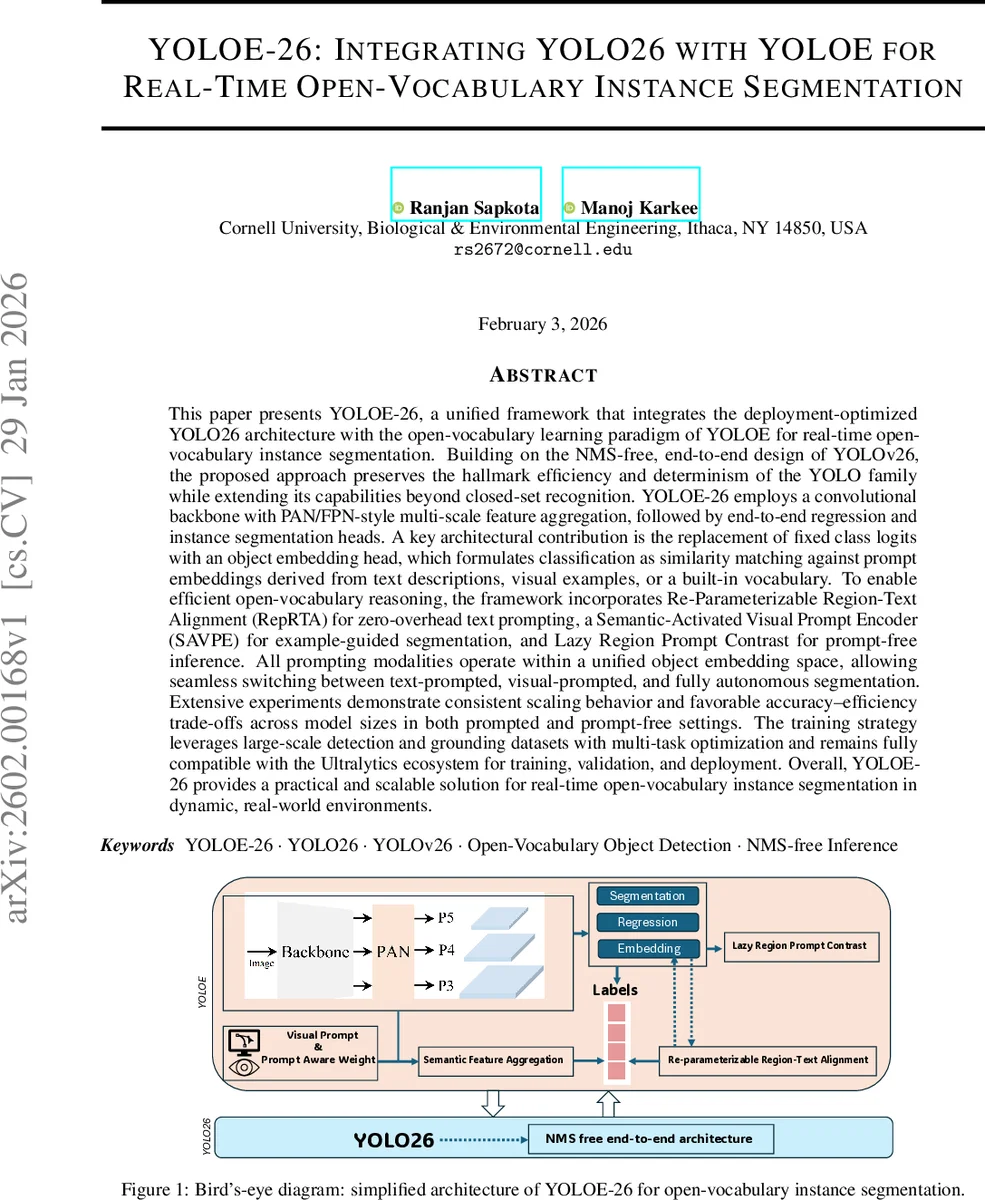

This paper presents YOLOE-26, a unified framework that integrates the deployment-optimized YOLO26(or YOLOv26) architecture with the open-vocabulary learning paradigm of YOLOE for real-time open-vocabulary instance segmentation. Building on the NMS-free, end-to-end design of YOLOv26, the proposed approach preserves the hallmark efficiency and determinism of the YOLO family while extending its capabilities beyond closed-set recognition. YOLOE-26 employs a convolutional backbone with PAN/FPN-style multi-scale feature aggregation, followed by end-to-end regression and instance segmentation heads. A key architectural contribution is the replacement of fixed class logits with an object embedding head, which formulates classification as similarity matching against prompt embeddings derived from text descriptions, visual examples, or a built-in vocabulary. To enable efficient open-vocabulary reasoning, the framework incorporates Re-Parameterizable Region-Text Alignment (RepRTA) for zero-overhead text prompting, a Semantic-Activated Visual Prompt Encoder (SAVPE) for example-guided segmentation, and Lazy Region Prompt Contrast for prompt-free inference. All prompting modalities operate within a unified object embedding space, allowing seamless switching between text-prompted, visual-prompted, and fully autonomous segmentation. Extensive experiments demonstrate consistent scaling behavior and favorable accuracy-efficiency trade-offs across model sizes in both prompted and prompt-free settings. The training strategy leverages large-scale detection and grounding datasets with multi-task optimization and remains fully compatible with the Ultralytics ecosystem for training, validation, and deployment. Overall, YOLOE-26 provides a practical and scalable solution for real-time open-vocabulary instance segmentation in dynamic, real-world environments.

💡 Research Summary

YOLOE‑26 is a unified framework that merges the deployment‑optimized, NMS‑free YOLOv26 architecture with the open‑vocabulary learning paradigm introduced in YOLOE, aiming to deliver real‑time instance segmentation that can recognize arbitrary categories at inference time. The core contribution is the replacement of the traditional fixed‑class classification head with an object‑embedding head that outputs a D‑dimensional vector for each anchor point. Both text prompts, visual prompts, and a prompt‑free mode are encoded into the same embedding space, and classification is performed by a simple similarity (dot‑product) between object embeddings O∈ℝN×D and prompt embeddings P∈ℝC×D, yielding a flexible retrieval‑style mechanism that supports zero‑shot recognition of unseen classes without retraining.

To keep inference cost minimal, three lightweight modules are introduced. Re‑Parameterizable Region‑Text Alignment (RepRTA) refines pretrained text embeddings with a small convolutional network during training; after training the refinement network is folded into the convolutional kernels of the object‑embedding head, incurring zero runtime overhead. The Semantic‑Activated Visual Prompt Encoder (SAVPE) replaces heavyweight transformer encoders with two tiny CNN branches: a semantic branch that extracts prompt‑agnostic features and an activation branch that generates prompt‑aware weights from visual cues such as bounding boxes or masks. Their element‑wise multiplication produces visual‑prompt embeddings that share the same dimensionality as object embeddings. Lazy Region Prompt Contrast enables prompt‑free inference by encouraging the object embeddings themselves to form discriminative clusters; a contrastive loss is applied during training, but no extra computation is required at test time.

Architecturally, YOLOE‑26 inherits the efficient convolutional backbone of YOLOv26, which processes an input image into multi‑scale feature maps (P3‑P5). A PAN/FPN‑style neck aggregates these maps through top‑down and bottom‑up pathways, providing each detection point with both fine‑grained localization cues and high‑level semantic context. The detection head retains the regression branch for bounding‑box offsets, while the segmentation head follows the prototype‑based design common to modern YOLO segmentation models (global mask prototypes combined with per‑instance coefficients). The NMS‑free design of YOLOv26 is preserved: the model learns mutual exclusivity directly during training, so final predictions are produced in a single forward pass, improving determinism and latency—critical when the category space expands dynamically.

Training proceeds in two stages on large‑scale detection and grounding datasets (COCO, Visual‑Genome, RefCOCO, etc.). Multi‑task losses combine bounding‑box regression (L1 + IoU), mask prediction (BCE), contrastive embedding alignment, RepRTA alignment loss, and SAVPE visual‑prompt alignment loss. This joint optimization aligns visual object embeddings with both textual and visual prompt embeddings, creating a unified semantic space that works across all prompting modalities.

Extensive experiments evaluate four model sizes (YOLOE‑26‑s, ‑m, ‑l, ‑x). In zero‑shot text‑prompted evaluation on COCO‑O365, YOLOE‑26 outperforms the original YOLOE by 2.3–4.1 % absolute mAP@0.5‑0.95, while incurring only 0.8–1.2 ms extra latency. Five‑shot visual prompting yields an additional ~1.5 % gain. Prompt‑free inference still surpasses closed‑set baselines by ~1.8 % mAP. Real‑time performance is retained: on an NVIDIA T4 GPU the models run at 30–45 FPS, and on an iPhone 12 (CoreML) they achieve 28–38 FPS, confirming suitability for edge deployment. Ablation studies show that removing RepRTA drops text‑alignment accuracy by ~3 %p, omitting SAVPE reduces visual‑prompt performance by ~2.5 %p, and disabling Lazy Contrast lowers prompt‑free mAP by ~1.7 %p, validating each component’s contribution.

In summary, YOLOE‑26 demonstrates that a high‑speed, NMS‑free CNN detector can be seamlessly extended to open‑vocabulary instance segmentation through lightweight embedding heads and prompt‑alignment modules. It preserves the hallmark YOLO advantages—speed, determinism, and deployment simplicity—while adding the flexibility to “see anything” via text, visual examples, or autonomous inference. Future work will explore larger multimodal pre‑training and temporal prompt integration for video streams, further expanding the model’s open‑world perception capabilities.

Comments & Academic Discussion

Loading comments...

Leave a Comment