MERMAID: Memory-Enhanced Retrieval and Reasoning with Multi-Agent Iterative Knowledge Grounding for Veracity Assessment

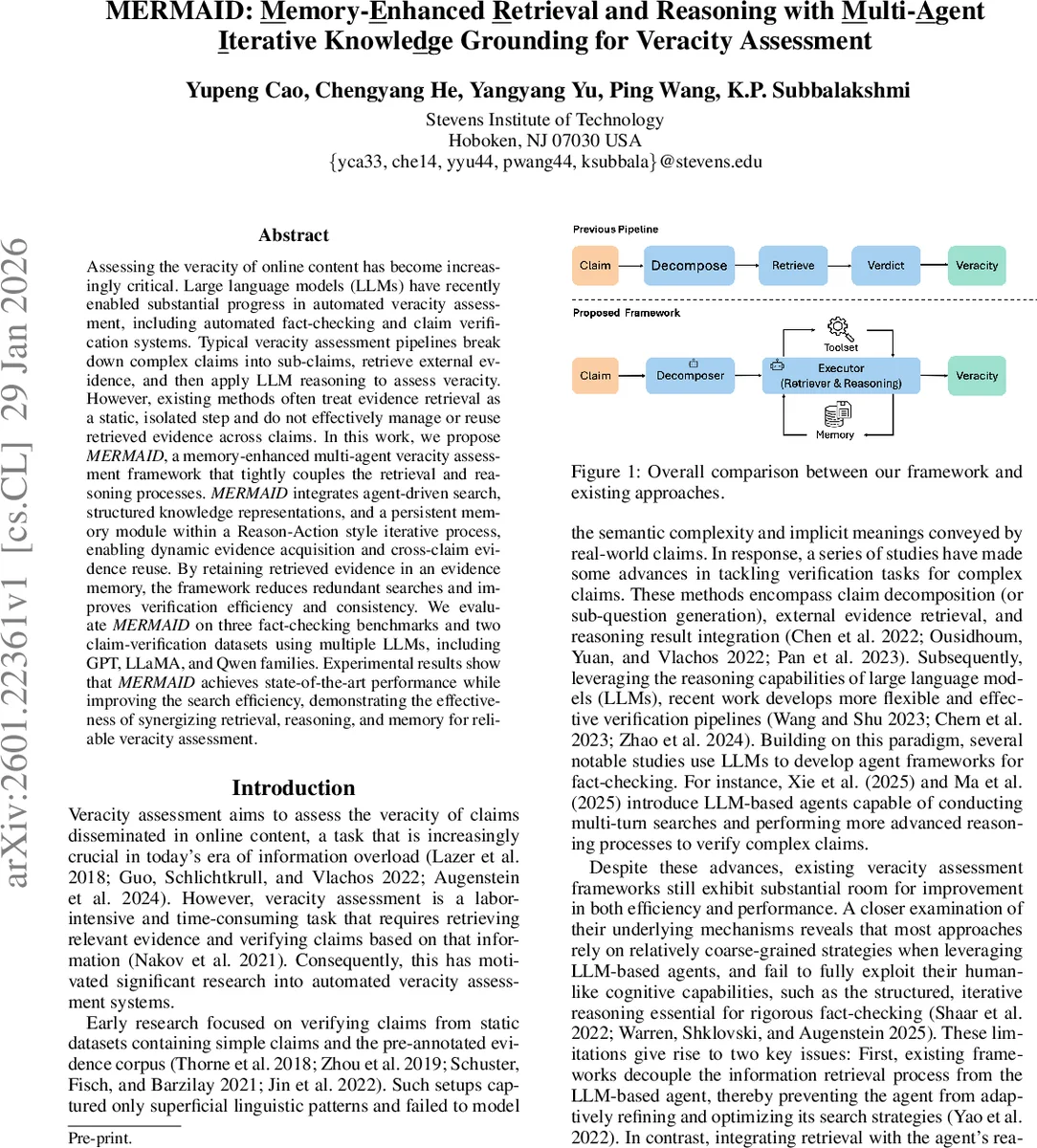

Assessing the veracity of online content has become increasingly critical. Large language models (LLMs) have recently enabled substantial progress in automated veracity assessment, including automated fact-checking and claim verification systems. Typical veracity assessment pipelines break down complex claims into sub-claims, retrieve external evidence, and then apply LLM reasoning to assess veracity. However, existing methods often treat evidence retrieval as a static, isolated step and do not effectively manage or reuse retrieved evidence across claims. In this work, we propose MERMAID, a memory-enhanced multi-agent veracity assessment framework that tightly couples the retrieval and reasoning processes. MERMAID integrates agent-driven search, structured knowledge representations, and a persistent memory module within a Reason-Action style iterative process, enabling dynamic evidence acquisition and cross-claim evidence reuse. By retaining retrieved evidence in an evidence memory, the framework reduces redundant searches and improves verification efficiency and consistency. We evaluate MERMAID on three fact-checking benchmarks and two claim-verification datasets using multiple LLMs, including GPT, LLaMA, and Qwen families. Experimental results show that MERMAID achieves state-of-the-art performance while improving the search efficiency, demonstrating the effectiveness of synergizing retrieval, reasoning, and memory for reliable veracity assessment.

💡 Research Summary

MERMAID (Memory‑Enhanced Retrieval and Reasoning with Multi‑Agent Iterative Knowledge Grounding) introduces a novel architecture for automated fact‑checking that tightly integrates evidence retrieval with large language model (LLM) reasoning and adds a persistent evidence memory for cross‑claim reuse. The system consists of four core components: (1) a Decomposer agent that transforms an input claim into a structured knowledge representation—a set of rational triplets (subject, relation, object, attributes) together with topical keywords; (2) an Evidence Memory that stores retrieved passages indexed by the entities extracted from the triplets; (3) an Executor agent that follows the ReAct (Reason‑Act) paradigm, alternating between “thought” generation and tool‑driven “action” (search) steps; and (4) a flexible Toolset exposing APIs such as Wikipedia lookup, web search, scholarly search, and PDF parsing.

The workflow begins by feeding a claim c into the Decomposer, yielding D₍c₎ = (G₍c₎, k₍c₎). Entities from G₍c₎ form a query set E₍c₎, which is used to retrieve any previously stored evidence M₍c₎ from the memory. An initial prompt P₀ combines the raw claim, the structured knowledge, the retrieved memory evidence, and an empty chat history. The Executor then iteratively executes a policy π (parameterized by an LLM) that produces a thought thₜ and an action aₜ at each step. If aₜ is a retrieval action, the corresponding tool τ is invoked, returning an observation oₜ that is appended to the conversation history Hₜ. The prompt is updated with the new history and the loop continues until the model emits an “Answer” action or a maximum step count is reached. The final output consists of a veracity label ŷ, a human‑readable reasoning trace, and any newly gathered evidence, which is written back into the memory for future reuse.

Experiments were conducted on five fact‑checking benchmarks—including FEVER, SciFact, ClaimDecompTest, and two recent claim‑verification datasets—using three state‑of‑the‑art LLM backbones (GPT‑4, LLaMA‑2‑70B, and Qwen‑1.8B). MERMAID consistently outperformed prior strong baselines (e.g., FIRE, Local, and other ReAct‑style agents) by 4–9 percentage points in F1 score, especially on complex multi‑evidence claims. Importantly, the average number of search calls per claim dropped by over 30 % thanks to evidence reuse via the memory module. Ablation studies showed that removing any of the three pillars—structured decomposition, the memory, or the iterative ReAct loop—significantly degrades performance, confirming their synergistic contribution.

A detailed case study on two related claims about Jack Dorsey illustrates the system’s behavior: the first claim triggers a Wikipedia search, stores the retrieved paragraph in memory, and yields a “True” verdict. The second claim, which shares the same entities, finds sufficient evidence directly from memory and avoids an additional search, mirroring how human fact‑checkers leverage prior knowledge before conducting new investigations.

The paper also discusses future directions: incorporating automatic evidence credibility scoring, summarizing and deduplicating large memory stores, extending the toolset to multimodal sources (images, tables, video), and optimizing latency for real‑time deployment on news streams or social‑media platforms.

In summary, MERMAID demonstrates that (i) tightly coupling retrieval with LLM reasoning, (ii) grounding reasoning in a structured knowledge graph, and (iii) maintaining a persistent evidence memory together yield a more accurate, efficient, and interpretable fact‑checking system. This work marks a significant step toward scalable, trustworthy automated veracity assessment in real‑world information ecosystems.

Comments & Academic Discussion

Loading comments...

Leave a Comment