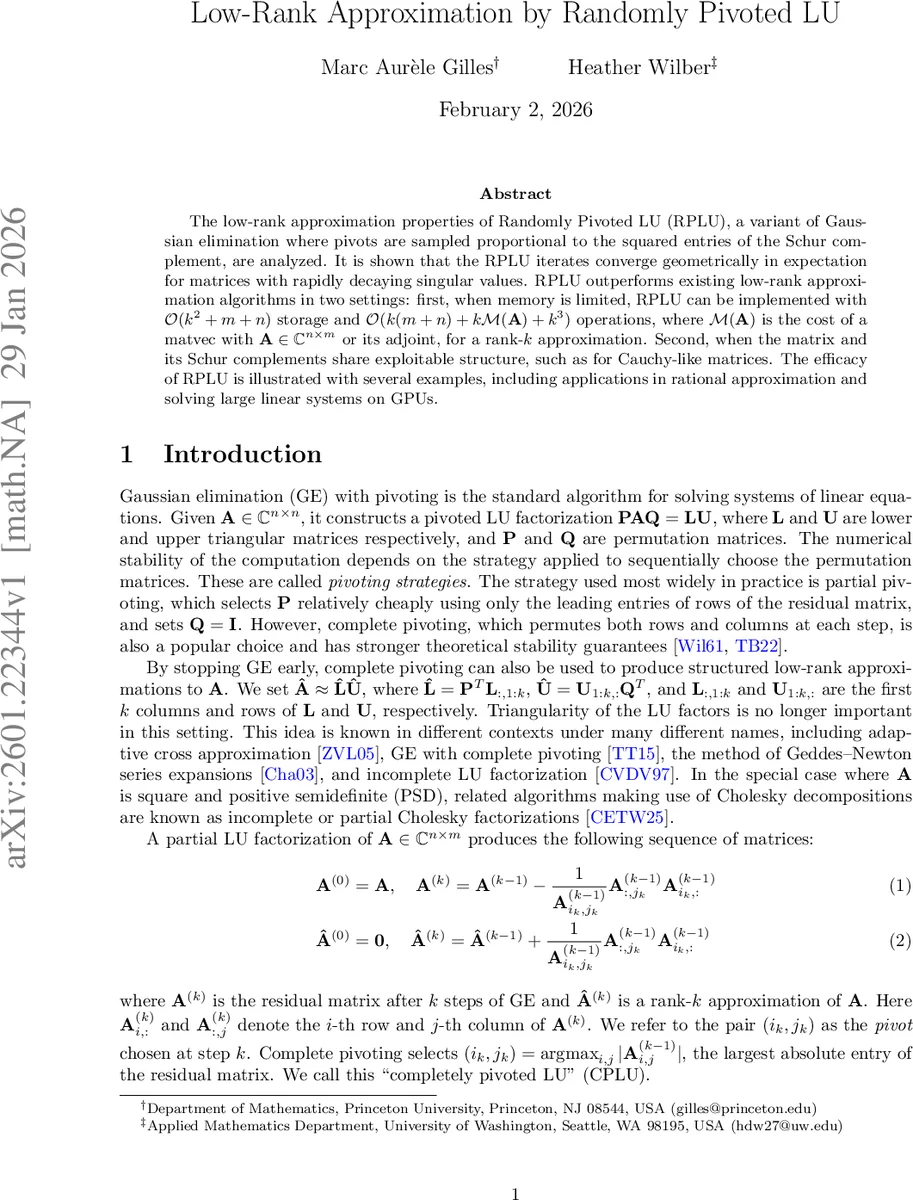

Low-Rank Approximation by Randomly Pivoted LU

The low-rank approximation properties of Randomly Pivoted LU (RPLU), a variant of Gaussian elimination in which pivots are sampled with probability proportional to the squared entries of the Schur complement, are analyzed. It is shown that the RPLU iterates converge geometrically in expectation for matrices with rapidly decaying singular values. RPLU outperforms existing low-rank approximation algorithms in two settings. First, when memory is limited, a rank-$k$ approximation can be computed with $\mathcal{O}(k^2 + m + n)$ storage and $\mathcal{O}(k(m + n) + k\mathcal{M}(\mathbf{A}) + k^3)$ operations, where $\mathcal{M}(\mathbf{A})$ is the cost of a matvec with $\mathbf{A}\in\mathbb{C}^{n\times m}$ or its adjoint. Second, when the matrix and its Schur complements share exploitable structure, as is the case for Cauchy-like matrices. The efficacy of RPLU is illustrated with several examples, including applications in rational approximation and solving large linear systems on GPUs.

💡 Research Summary

The paper introduces Randomly Pivoted LU (RPLU), a novel low‑rank approximation algorithm that modifies the classic Gaussian elimination with complete pivoting by selecting pivots at random, with probabilities proportional to the squared magnitude of the entries of the current Schur complement. At each iteration k, a pivot (iₖ, jₖ) is drawn from the distribution

P(i,j | A^{(k‑1)}) = |A^{(k‑1)}_{ij}|² / ‖A^{(k‑1)}‖_F².

The selected column and row are then normalized to form the k‑th column of L and the k‑th row of U, and the residual matrix is updated by subtracting their outer product. The process stops after r steps, yielding a rank‑r factorization Â = L U that approximates A.
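The iteration described above can be sketched in a few lines of NumPy. This is a minimal dense illustration, not the authors' implementation: the function name `rplu`, its arguments, and the choice to scale the pivot column by the pivot entry (leaving the pivot row unscaled) are assumptions made for the example.

```python
import numpy as np

def rplu(A, r, rng=None):
    """Dense sketch of randomly pivoted LU (hypothetical helper).

    Returns L (rows x r), U (r x cols) and the list of sampled pivots.
    """
    rng = np.random.default_rng(rng)
    # Working copy of the residual (the current Schur complement).
    R = np.array(A, dtype=complex if np.iscomplexobj(A) else float)
    rows, cols = R.shape
    L = np.zeros((rows, r), dtype=R.dtype)
    U = np.zeros((r, cols), dtype=R.dtype)
    pivots = []
    for k in range(r):
        weights = np.abs(R) ** 2
        total = weights.sum()
        if total == 0.0:
            # Residual vanished exactly: truncate the factors and stop.
            L, U = L[:, :k], U[:k, :]
            break
        # Sample (i, j) with probability |R_ij|^2 / ||R||_F^2.
        flat = rng.choice(rows * cols, p=(weights / total).ravel())
        i, j = divmod(int(flat), cols)
        # Assumed normalization: divide the pivot column by the pivot entry.
        L[:, k] = R[:, j] / R[i, j]
        U[k, :] = R[i, :]
        # Rank-one update of the residual.
        R -= np.outer(L[:, k], U[k, :])
        pivots.append((i, j))
    return L, U, pivots

# Usage: a matrix of exact rank 3 is recovered after 3 steps (up to rounding).
gen = np.random.default_rng(0)
A = gen.standard_normal((8, 3)) @ gen.standard_normal((3, 6))
L, U, pivots = rplu(A, 3, rng=1)
print(np.linalg.norm(A - L @ U))
```

Note that this sketch stores and updates the full residual, so it uses storage proportional to the size of A. It is meant only to make the sampling and update steps concrete, and does not reflect the memory-limited variant mentioned in the abstract.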

Theoretical contribution.

The authors prove that if the singular values of A decay geometrically, σₖ = O(ρᵏ) with ρ < ½, then the expected Frobenius error of the RPLU approximation satisfies

E
