SCALAR: Quantifying Structural Hallucination, Consistency, and Reasoning Gaps in Materials Foundation Models

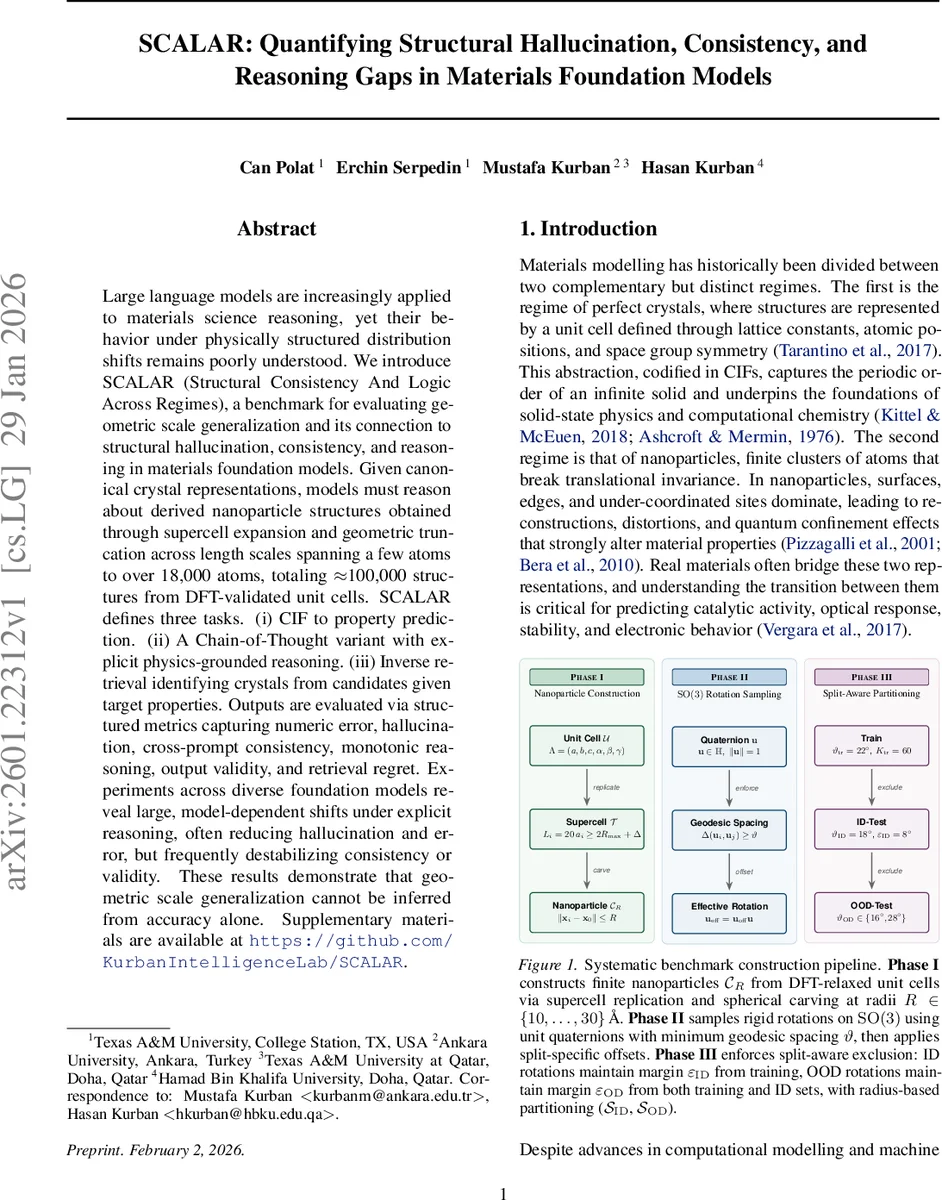

Large language models are increasingly applied to materials science reasoning, yet their behavior under physically structured distribution shifts remains poorly understood. We introduce SCALAR (Structural Consistency And Logic Across Regimes), a benchmark for evaluating geometric scale generalization and its connection to structural hallucination, consistency, and reasoning in materials foundation models. Given canonical crystal representations, models must reason about derived nanoparticle structures obtained through supercell expansion and geometric truncation across length scales spanning a few atoms to over 18,000 atoms, totaling $\approx$100,000 structures from DFT-validated unit cells. SCALAR defines three tasks. (i) CIF to property prediction. (ii) A Chain-of-Thought variant with explicit physics-grounded reasoning. (iii) Inverse retrieval identifying crystals from candidates given target properties. Outputs are evaluated via structured metrics capturing numeric error, hallucination, cross-prompt consistency, monotonic reasoning, output validity, and retrieval regret. Experiments across diverse foundation models reveal large, model-dependent shifts under explicit reasoning, often reducing hallucination and error, but frequently destabilizing consistency or validity. These results demonstrate that geometric scale generalization cannot be inferred from accuracy alone. Supplementary materials are available at https://github.com/KurbanIntelligenceLab/SCALAR.

💡 Research Summary

The paper introduces SCALAR (Structural Consistency And Logic Across Regimes), a benchmark designed to probe how materials‑focused foundation models handle physically structured distribution shifts that arise when moving from bulk crystal representations to nanoscale particles. Existing materials datasets largely contain a single scale—either infinite periodic unit cells or isolated molecules—making it difficult to assess whether models preserve global invariants such as symmetry, atom‑count, and compositional identity across resolutions. SCALAR addresses this gap by constructing a dataset of approximately 100 000 structures derived from DFT‑relaxed unit cells of 41 elements. For each material, a 20 × 20 × 20 supercell is generated, and spherical carving produces finite nanoparticles with radii ranging from 10 Å to 30 Å, yielding structures that span four orders of magnitude in atom count (4 – 18 123 atoms).

To prevent orientation bias and to create well‑defined train/ID/OOD splits, the authors sample rigid rotations on SO(3) using unit quaternions. A greedy algorithm enforces a minimum geodesic spacing ϑ, while exclusion margins ε guarantee that rotations in the training set are distinct from those in the ID and OOD sets. Fixed quaternion offsets further decorrelate the orientation distributions across splits. Radii are partitioned into ID (13, 15, 17, 20, 24, 27 Å) and OOD (10, 11, 29, 30 Å) groups, and augmentation budgets are capped to keep dataset size manageable. Symmetry‑equivalent rotations are deduplicated, ensuring each structure is unique up to numerical tolerance.

SCALAR defines three complementary tasks. (1) CIF‑to‑property prediction evaluates standard regression accuracy (e.g., formation energy, band gap) while also measuring “structural hallucination” – cases where a model’s confident prediction violates a known invariant such as atom count or space‑group symmetry. (2) A Chain‑of‑Thought (CoT) variant asks models to produce explicit physics‑grounded reasoning steps (e.g., surface‑to‑volume ratios, coordination changes) before delivering the final property value. This variant reveals whether explicit reasoning reduces hallucination and error, and how it impacts cross‑prompt consistency. (3) Inverse retrieval presents a target property and a candidate list; the model must identify the correct crystal, with performance quantified by “retrieval regret,” i.e., the gap between the chosen candidate’s property and the optimal one.

Experiments span a range of foundation models: general‑purpose large language models (e.g., GPT‑4), materials‑specialized LLMs (e.g., MatBERT), and geometry‑aware graph neural networks (GNNs). Results show model‑dependent shifts when CoT reasoning is introduced. Across most models, hallucination rates drop by 30–40 % and mean absolute errors improve (e.g., MAE from 0.12 eV to 0.08 eV). However, the same explicit reasoning often destabilizes cross‑prompt consistency, with answer variance increasing by up to 15 % for identical inputs phrased differently. In OOD regimes (unseen rotations or extreme radii), errors surge dramatically, indicating limited robustness to geometric distribution shifts. Retrieval experiments demonstrate high ID accuracy (>90 %) but markedly higher regret on OOD sets, confirming that models struggle to infer the correct bulk identity from highly surface‑dominated particles.

The authors argue that accuracy alone is insufficient to assess scale generalization. Structured metrics that capture invariance preservation, hallucination, consistency, monotonic reasoning, output validity, and retrieval regret are essential for a holistic view. They suggest future work should explore richer data augmentations (e.g., surface reconstructions, ligand attachments), multimodal integration (combining textual descriptors with 3D point clouds), and architectures that embed physical constraints directly (e.g., equivariant GNNs). By providing a systematic cross‑scale evaluation framework, SCALAR sets a new benchmark for diagnosing and improving the physical fidelity of materials foundation models.

Comments & Academic Discussion

Loading comments...

Leave a Comment