Coarse-to-Real: Generative Rendering for Populated Dynamic Scenes

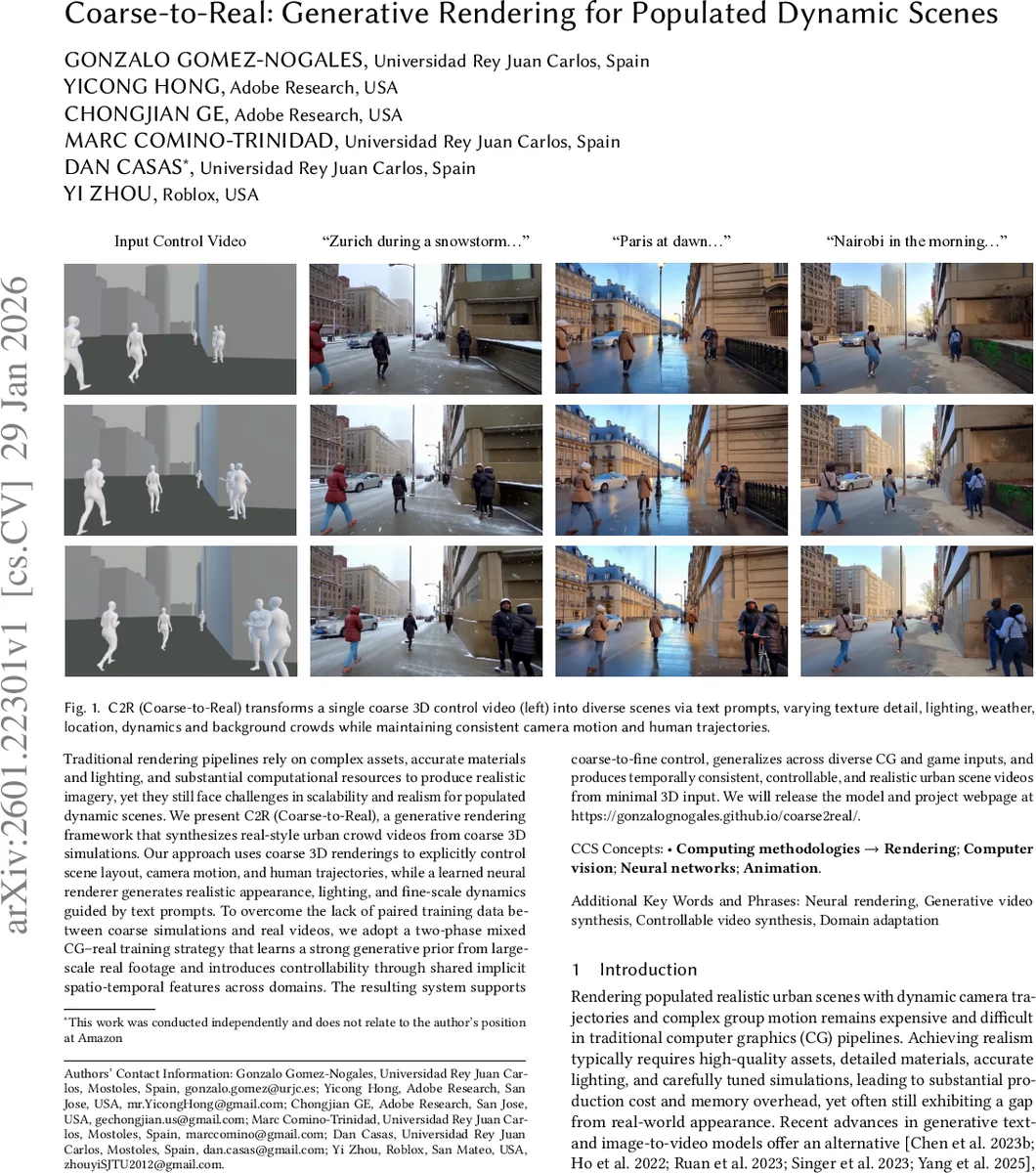

Traditional rendering pipelines rely on complex assets, accurate materials and lighting, and substantial computational resources to produce realistic imagery, yet they still face challenges in scalability and realism for populated dynamic scenes. We present C2R (Coarse-to-Real), a generative rendering framework that synthesizes real-style urban crowd videos from coarse 3D simulations. Our approach uses coarse 3D renderings to explicitly control scene layout, camera motion, and human trajectories, while a learned neural renderer generates realistic appearance, lighting, and fine-scale dynamics guided by text prompts. To overcome the lack of paired training data between coarse simulations and real videos, we adopt a two-phase mixed CG-real training strategy that learns a strong generative prior from large-scale real footage and introduces controllability through shared implicit spatio-temporal features across domains. The resulting system supports coarse-to-fine control, generalizes across diverse CG and game inputs, and produces temporally consistent, controllable, and realistic urban scene videos from minimal 3D input. We will release the model and project webpage at https://gonzalognogales.github.io/coarse2real/.

💡 Research Summary

The paper introduces Coarse‑to‑Real (C2R), a generative rendering framework that converts inexpensive, coarse 3D simulations into photorealistic urban crowd videos guided by textual prompts. Traditional graphics pipelines demand high‑quality assets, detailed materials, accurate lighting, and costly simulations to achieve realism, especially for densely populated scenes with moving cameras. Recent text‑to‑video and video‑to‑video models can produce realistic frames but lack precise control over camera trajectories, crowd dynamics, and scene layout. C2R bridges this gap by using a lightweight 3D proxy to supply explicit structural information (camera motion, scene geometry, human trajectories) while delegating appearance, lighting, weather, and fine‑grained crowd dynamics to a data‑driven diffusion model trained on large‑scale real footage.

Training proceeds in two phases. Phase 1 (Generative Distribution Alignment) fine‑tunes only the diffusion backbone on unpaired real urban videos together with their text prompts, using a flow‑matching objective to learn a strong photorealistic prior. The VAE encoder/decoder and text encoder remain frozen. Phase 2 (Spatio‑Temporal Control Grounding) introduces controllability. Both real videos and synthetic coarse renders are processed frame‑wise by a pretrained DINOv3 ViT; the resulting patch embeddings are concatenated temporally and passed through a lightweight adapter that projects them into the diffusion latent space. The adapter’s output is added to the noisy latent at each diffusion timestep, allowing the model to respect the coarse structural cues without the over‑constraining effect of explicit depth or edge maps used in prior ControlNet‑style approaches.
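The two training phases can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: the linear interpolation path (t = 0 is noise, t = 1 is data), the toy shapes, the single-matrix `adapter`, and the one-parameter `velocity_model` are all assumptions made for illustration, and random arrays stand in for VAE latents and DINOv3 patch embeddings.

```python
import numpy as np

rng = np.random.default_rng(0)

def flow_matching_pair(x_data, noise, t):
    """Linear-path flow matching: build the noisy latent and its velocity target.

    Assumed convention: t=0 is pure noise, t=1 is clean data.
    """
    t = t.reshape(-1, *([1] * (x_data.ndim - 1)))  # broadcast t over non-batch dims
    x_t = (1.0 - t) * noise + t * x_data           # point on the straight noise->data path
    v_target = x_data - noise                      # constant velocity along that path
    return x_t, v_target

def adapter(features, w):
    """Hypothetical lightweight adapter: linear map from feature dim to latent channels."""
    return features @ w

def velocity_model(x_t, theta, control=None):
    """Toy stand-in for the diffusion backbone; control is added to the noisy latent."""
    if control is not None:
        x_t = x_t + control
    return theta * x_t

# Toy shapes: batch, frames, patches, feature dim, latent channels (all assumed)
B, T, P, D, C = 2, 4, 6, 16, 8
x_data = rng.normal(size=(B, T, P, C))   # stand-in for frozen-VAE video latents
noise = rng.normal(size=(B, T, P, C))
t = rng.uniform(size=B)
feats = rng.normal(size=(B, T, P, D))    # stand-in for per-frame patch embeddings stacked over time
w = rng.normal(size=(D, C)) * 0.01

x_t, v_target = flow_matching_pair(x_data, noise, t)

# Phase 1: no control signal, only the flow-matching objective
loss_phase1 = float(np.mean((velocity_model(x_t, 1.0) - v_target) ** 2))

# Phase 2: adapter output is added to the noisy latent before the backbone
control = adapter(feats, w)
loss_phase2 = float(np.mean((velocity_model(x_t, 1.0, control) - v_target) ** 2))
```

In a real system the backbone would be a video diffusion transformer and the losses would be backpropagated; the sketch only shows where the control signal enters and what the regression target looks like under the assumed path.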

The training data combines abundant real videos (hundreds of thousands of clips from five continents) with a small set of paired synthetic data in which each coarse render is matched with a high‑quality target. Real data shapes the generative prior, while the synthetic pairs provide the sparse supervision needed to align coarse structure with realistic output. This mixed‑domain strategy prevents CG‑specific artifacts from dominating the model while still teaching it to map low‑detail geometry to high‑detail appearance.
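One way to picture the mixed-domain batches is the sketch below. It is an assumption-laden illustration, not the paper's data loader: the `synthetic_fraction` ratio, the dictionary layout, and the choice to let a real clip serve as both control source and target are hypothetical, since the summary does not give the mixing ratio or batching details.

```python
import random

def sample_mixed_batch(real_clips, synthetic_pairs, batch_size, synthetic_fraction, rng):
    """Draw one training batch that mixes real clips with paired CG data."""
    n_syn = round(batch_size * synthetic_fraction)
    n_real = batch_size - n_syn
    batch = []
    for clip in rng.choices(real_clips, k=n_real):
        # Assumption: a real clip supplies both the control features and the target
        batch.append({"control": clip, "target": clip, "domain": "real"})
    for coarse, target in rng.choices(synthetic_pairs, k=n_syn):
        # Paired CG sample: coarse render as control, high-quality render as target
        batch.append({"control": coarse, "target": target, "domain": "cg"})
    rng.shuffle(batch)
    return batch

# Placeholder identifiers stand in for actual video clips
real_clips = [f"real_{i}" for i in range(100)]
synthetic_pairs = [(f"coarse_{i}", f"hq_{i}") for i in range(10)]

batch = sample_mixed_batch(real_clips, synthetic_pairs, batch_size=8,
                           synthetic_fraction=0.25, rng=random.Random(0))
n_cg = sum(1 for item in batch if item["domain"] == "cg")
```

Keeping the synthetic share small reflects the stated goal of letting real footage dominate the prior while the pairs only supply alignment supervision.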

Experiments demonstrate that C2R can take a single coarse 3D control video and, by changing only the text prompt, generate diverse outputs varying texture detail, lighting, weather, location, and background crowd density, all while preserving the original camera motion and human trajectories. Quantitative metrics (LPIPS, FVD, temporal consistency scores) show substantial improvements over state‑of‑the‑art text‑to‑video and video‑to‑video methods, especially in long‑range temporal coherence and multi‑person interaction fidelity. Qualitative results illustrate realistic hair movement, clothing flutter, dynamic shadows, and weather effects that were absent in the coarse input.

In summary, C2R proposes a hybrid paradigm: coarse 3D simulations provide stable structural control, and a diffusion model trained in two phases supplies photorealistic rendering. By decoupling prior learning from control grounding and using implicit spatio‑temporal features as the bridge between real and synthetic domains, the framework achieves controllable, temporally consistent, and high‑fidelity video synthesis for populated dynamic scenes with minimal 3D authoring effort. The authors plan to release the model alongside a project webpage, opening avenues for low‑cost virtual production, game cinematics, and immersive simulation.
