Lost in Space? Vision-Language Models Struggle with Relative Camera Pose Estimation

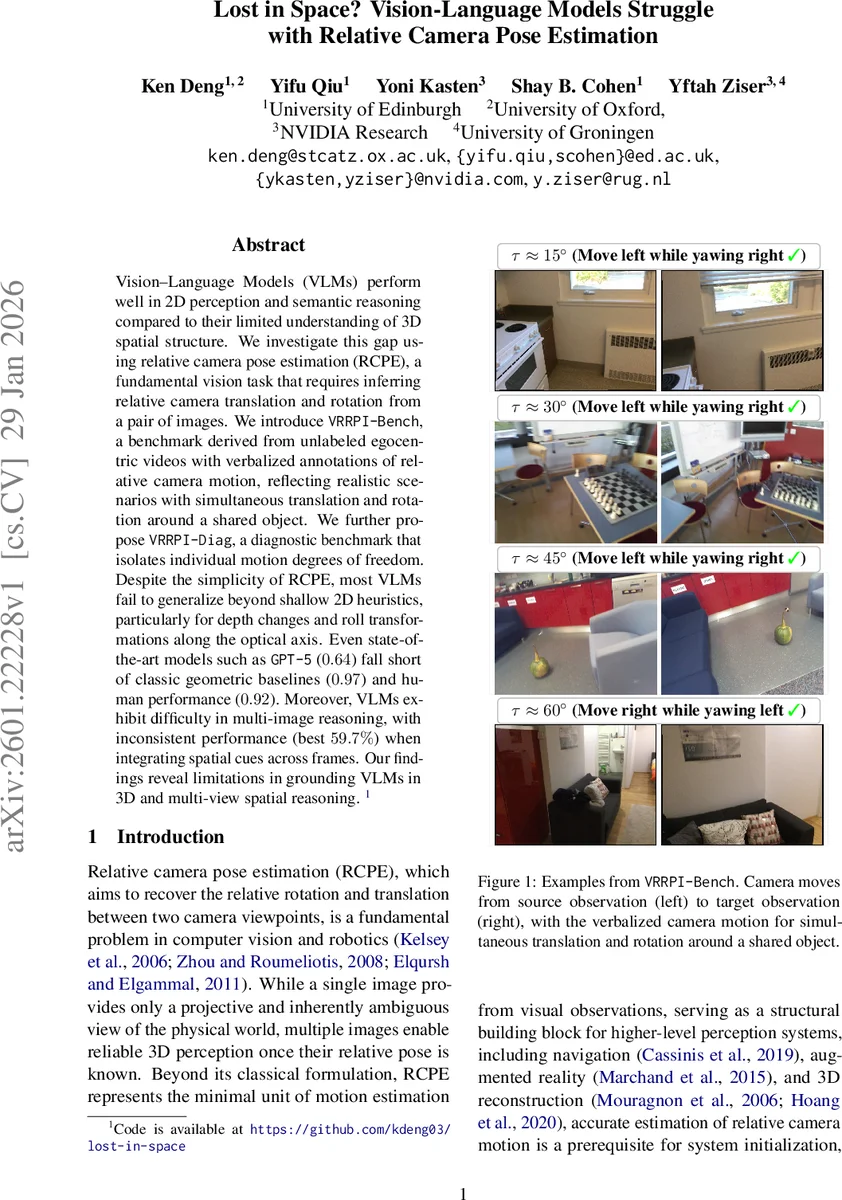

Vision-Language Models (VLMs) perform well in 2D perception and semantic reasoning compared to their limited understanding of 3D spatial structure. We investigate this gap using relative camera pose estimation (RCPE), a fundamental vision task that requires inferring relative camera translation and rotation from a pair of images. We introduce VRRPI-Bench, a benchmark derived from unlabeled egocentric videos with verbalized annotations of relative camera motion, reflecting realistic scenarios with simultaneous translation and rotation around a shared object. We further propose VRRPI-Diag, a diagnostic benchmark that isolates individual motion degrees of freedom. Despite the simplicity of RCPE, most VLMs fail to generalize beyond shallow 2D heuristics, particularly for depth changes and roll transformations along the optical axis. Even state-of-the-art models such as GPT-5 ($0.64$) fall short of classic geometric baselines ($0.97$) and human performance ($0.92$). Moreover, VLMs exhibit difficulty in multi-image reasoning, with inconsistent performance (best $59.7%$) when integrating spatial cues across frames. Our findings reveal limitations in grounding VLMs in 3D and multi-view spatial reasoning.

💡 Research Summary

This paper investigates a fundamental gap in modern vision‑language models (VLMs): while they excel at 2‑D perception and semantic reasoning, they struggle to infer 3‑D spatial relationships, specifically relative camera pose estimation (RCPE). RCPE requires estimating the six degrees of freedom (3 rotations + 3 translations) that describe how a camera moves between two viewpoints—a core problem for SLAM, structure‑from‑motion, and embodied robotics.

To evaluate VLMs on this task, the authors introduce two new benchmarks derived from real‑world egocentric video datasets (7 Scenes, ScanNet, ScanNet++). VRRPI‑Bench selects image pairs that observe the same central object from different viewpoints and attaches natural‑language descriptions of the dominant camera motion (e.g., “move left while yawing right”). The pairs are grouped into four angular bins (15°, 30°, 45°, 60°) to simulate increasing viewpoint difficulty. VRRPI‑Diag further filters pairs so that only a single degree of freedom exceeds a predefined threshold while the other five remain near zero, enabling fine‑grained analysis of each motion axis.

The experimental protocol treats RCPE as a discrete classification problem: given two images, a VLM must output the most salient motion class (e.g., “yaw right”, “translation forward”). The authors evaluate a broad spectrum of VLMs: open‑source models (Lv‑Next, Lv‑OneVision, Idefics3, Qwen2.5‑VL variants), proprietary models (GPT‑4o, GPT‑5), a fine‑tuned spatial model (SpaceQwen), and reasoning‑oriented variants (GLM‑4.1V‑Thinking, Qwen3‑VL‑thinking). All models receive the same prompt and are evaluated with macro‑averaged F1 score to mitigate class imbalance.

For reference, two classic geometry‑based pipelines are used as baselines: (1) SIFT + RANSAC, which extracts sparse keypoints and solves for the essential matrix; (2) LoFTR + RANSAC, a dense transformer‑based matcher that achieves near‑optimal performance. Human annotators also solve a subset of the benchmark for an upper bound.

Key findings:

-

Geometric baselines dominate. LoFTR reaches 0.97 F1 on 7 Scenes and 0.91 on ScanNet, essentially saturating the task. SIFT remains competitive (0.83 on 7 Scenes) but degrades on more challenging scenes (0.66 on ScanNet).

-

VLMs lag far behind. Most VLMs score between 0.30 and 0.50 F1, with performance dropping sharply for larger viewpoint angles. Even the best open‑source models (largest Qwen variants) cannot approach geometric methods.

-

GPT‑5 is the only VLM that narrows the gap, achieving 0.64 F1 on 7 Scenes and 0.61 on ScanNet, yet still far below humans (0.92) and LoFTR (0.97).

-

Multi‑image consistency is poor. When source and target images are swapped, the best VLM accuracy falls to 59.7 %, indicating a lack of symmetric reasoning across views.

-

Diagnostic analysis reveals specific weaknesses. Depth‑axis translation (t_z) and roll rotation (ψ) are the hardest for VLMs; GPT‑5’s roll accuracy is only 0.47, while its average across other axes is 0.90. Pitch, yaw, and lateral translations are comparatively better.

-

Error analysis shows VLMs rely on shallow 2‑D cues. They can correctly answer single‑image spatial queries (e.g., “object A is left of B”) but fail to establish reliable correspondences between two images, to invert object motion into camera motion, or to respect geometric constraints such as epipolar geometry.

The authors argue that these results expose a fundamental limitation: current VLMs do not internalize metric 3‑D reasoning and therefore cannot be trusted for tasks requiring precise multi‑view understanding (e.g., navigation, AR overlay, robotic manipulation). To bridge the gap, they suggest three research directions: (i) incorporating explicit depth or point‑cloud inputs; (ii) pre‑training or fine‑tuning with geometric consistency losses; and (iii) designing architectures that enforce cross‑view symmetry and coordinate grounding.

In summary, the paper contributes (1) two realistic, publicly released benchmarks for evaluating relative camera pose estimation in VLMs, (2) a comprehensive empirical study showing that even state‑of‑the‑art VLMs fall short of classic geometry and human performance, and (3) a clear roadmap for enhancing VLMs with genuine 3‑D spatial intelligence. This work underscores that progress in “vision‑language” must be complemented by “vision‑language‑geometry” to enable reliable deployment of multimodal models in embodied AI systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment