Value-Based Pre-Training with Downstream Feedback

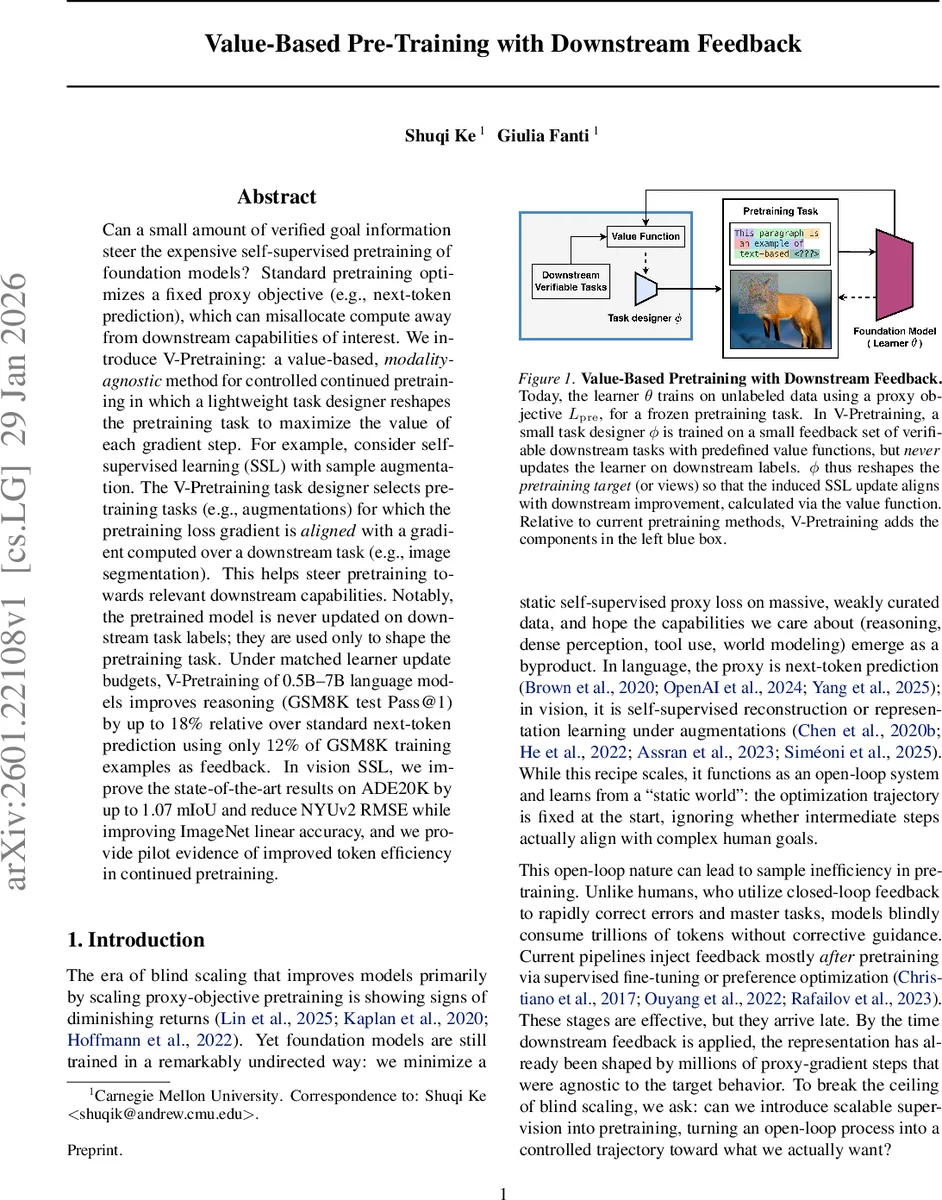

Can a small amount of verified goal information steer the expensive self-supervised pretraining of foundation models? Standard pretraining optimizes a fixed proxy objective (e.g., next-token prediction), which can misallocate compute away from downstream capabilities of interest. We introduce V-Pretraining: a value-based, modality-agnostic method for controlled continued pretraining in which a lightweight task designer reshapes the pretraining task to maximize the value of each gradient step. For example, consider self-supervised learning (SSL) with sample augmentation. The V-Pretraining task designer selects pretraining tasks (e.g., augmentations) for which the pretraining loss gradient is aligned with a gradient computed over a downstream task (e.g., image segmentation). This helps steer pretraining towards relevant downstream capabilities. Notably, the pretrained model is never updated on downstream task labels; they are used only to shape the pretraining task. Under matched learner update budgets, V-Pretraining of 0.5B–7B language models improves reasoning (GSM8K test Pass@1) by up to 18% relative over standard next-token prediction using only 12% of GSM8K training examples as feedback. In vision SSL, we improve the state-of-the-art results on ADE20K by up to 1.07 mIoU and reduce NYUv2 RMSE while improving ImageNet linear accuracy, and we provide pilot evidence of improved token efficiency in continued pretraining.

💡 Research Summary

The paper introduces “Value‑Based Pre‑Training” (V‑Pretraining), a framework that injects a small amount of verified downstream feedback into the massive, unsupervised pre‑training phase of foundation models. Traditional pre‑training optimizes a fixed proxy objective (e.g., next‑token prediction for language, masked reconstruction for vision) on huge unlabeled corpora. Because the proxy loss is static, many gradient steps are spent on improving representations that may not translate into the downstream capabilities we actually care about, such as mathematical reasoning or dense visual perception.

V‑Pretraining separates the system into two components: a large “learner” with parameters θ that continues to train on the unlabeled stream using a conventional predictive loss L_pre, and a lightweight “task designer” with parameters ϕ that is trained on a tiny verification set (e.g., a subset of GSM8K for reasoning, ADE20K/NYUv2 for segmentation or depth). The verification set is never used to directly update the learner; instead it provides a downstream loss L_down whose gradient g_down serves as a signal of what improvement is valuable.

The core idea is to align the learner’s next pre‑training gradient g_pre = ∇_θ L_pre(θ; ϕ) with the downstream gradient g_down. Using a first‑order Taylor expansion, the expected reduction in downstream loss after a single pre‑training step is approximately −η g_downᵀ g_pre. Therefore the framework defines a value function V(ϕ; θ) = g_downᵀ g_pre and trains the task designer to maximize this alignment. The gradient of V with respect to ϕ requires a Hessian‑vector product, which can be computed efficiently by automatic differentiation; in practice the authors restrict the computation to a small subset of learner parameters (e.g., adapters or the final layers) to keep the overhead modest.

Two concrete instantiations are presented:

-

Language – The fixed one‑hot next‑token target is replaced by adaptive soft targets that distribute probability mass over the learner’s top‑K predictions. The task designer learns to shape these soft targets so that the resulting gradient aligns with the downstream reasoning objective.

-

Vision – Instead of a static augmentation pipeline, a learnable view generator A_ϕ produces instance‑wise augmentations (crops, color jitter, masking patterns). The designer optimizes these views to maximize alignment with segmentation or depth‑estimation gradients.

Experiments are conducted on both modalities under matched compute budgets (i.e., the same number of learner updates). In language, continued pre‑training of Qwen‑1.5 models (0.5 B, 4 B, 7 B parameters) on a math‑focused corpus, guided by only 12 % of the GSM8K training examples, yields Pass@1 improvements of 2‑14 % relative to standard next‑token pre‑training, with up to an 18 % relative gain for the smallest model. In vision, the value‑based SSL improves state‑of‑the‑art results on ADE20K by up to 1.07 mIoU and reduces NYUv2 depth RMSE, while simultaneously preserving or slightly improving ImageNet linear probe accuracy. Ablations (random feedback, label smoothing, self‑distillation) confirm that the gains stem from the alignment objective rather than incidental regularization.

Further diagnostics show that V‑Pretraining increases “value per pre‑training step” (i.e., downstream improvement per gradient update) and offers controllable trade‑offs: by adjusting the weight of the value term, practitioners can navigate the Pareto frontier between generic representation quality and task‑specific performance. Importantly, because the method only reshapes the target or view generation, it is orthogonal to advances in model scaling, data mixture strategies, or post‑training alignment techniques (e.g., RLHF), and can be combined with them.

In summary, V‑Pretraining demonstrates that a modest amount of indirect downstream supervision can be turned into a scalable, closed‑loop signal that steers massive unsupervised training toward the capabilities we truly desire, without sacrificing generalization or requiring expensive fine‑tuning on the downstream labels. This opens a new direction for efficient foundation‑model training where weak but reliable feedback guides the learning trajectory from the very beginning.

Comments & Academic Discussion

Loading comments...

Leave a Comment