Wrapper-Aware Rate-Distortion Optimization in Feature Coding for Machines

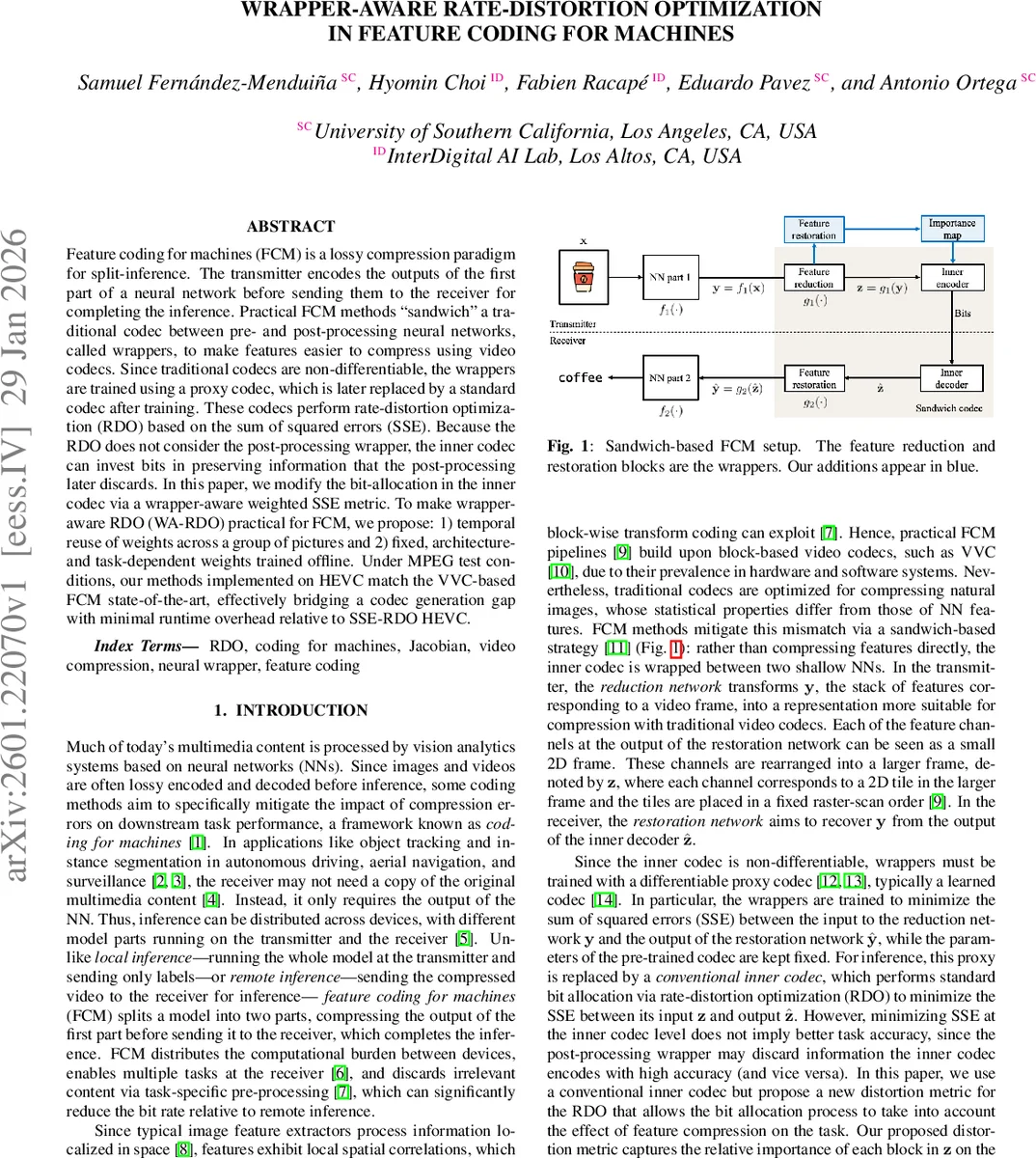

Feature coding for machines (FCM) is a lossy compression paradigm for split-inference. The transmitter encodes the outputs of the first part of a neural network before sending them to the receiver for completing the inference. Practical FCM methods ``sandwich’’ a traditional codec between pre- and post-processing neural networks, called wrappers, to make features easier to compress using video codecs. Since traditional codecs are non-differentiable, the wrappers are trained using a proxy codec, which is later replaced by a standard codec after training. These codecs perform rate-distortion optimization (RDO) based on the sum of squared errors (SSE). Because the RDO does not consider the post-processing wrapper, the inner codec can invest bits in preserving information that the post-processing later discards. In this paper, we modify the bit-allocation in the inner codec via a wrapper-aware weighted SSE metric. To make wrapper-aware RDO (WA-RDO) practical for FCM, we propose: 1) temporal reuse of weights across a group of pictures and 2) fixed, architecture- and task-dependent weights trained offline. Under MPEG test conditions, our methods implemented on HEVC match the VVC-based FCM state-of-the-art, effectively bridging a codec generation gap with minimal runtime overhead relative to SSE-RDO HEVC.

💡 Research Summary

Feature Coding for Machines (FCM) splits a deep neural network between a transmitter and a receiver: the first part of the network runs on the sender, its intermediate feature maps are compressed, transmitted, and then decoded and processed by the second part on the receiver. In practice, a “sandwich” architecture is used: a shallow encoder‑wrapper (g₁) transforms the raw features y into a codec‑friendly representation z, a conventional video codec compresses z, and a decoder‑wrapper (g₂) reconstructs ŷ from the decoded ẑ before the final inference network f₂ runs. Existing FCM pipelines train the wrappers with a differentiable proxy codec but replace it at test time with a standard codec (e.g., HEVC, AV‑C). The inner codec performs rate‑distortion optimization (RDO) that minimizes the sum of squared errors (SSE) between z and ẑ, completely ignoring the effect of g₂. Consequently, bits may be spent preserving information that g₂ later discards, harming the downstream task (object detection, segmentation, tracking).

The paper introduces Wrapper‑Aware RDO (WA‑RDO), a method that modifies the distortion term of the codec’s RDO to account for the post‑processing wrapper. Starting from the task‑level distortion ‖y – g₂(ẑ)‖², the authors linearize the effect of the codec error using the Jacobian J_g(z) of the restoration wrapper evaluated at z. Under a high‑bit‑rate assumption (quantization noise is white and uncorrelated), the cross‑term vanishes and the dominant term becomes ‖J_g(z)(ẑ – z)‖². Direct computation of J_g(z) is infeasible because y can have millions of elements. To overcome this, the Jacobian is “sketched”: a random matrix S (size n_s × n_p) projects J_g(z) onto a lower‑dimensional space, yielding J_s(z) = S J_g(z). By the Johnson–Lindenstrauss lemma, the projected norm approximates the original norm within a controllable ε. The diagonal of the resulting approximate Hessian H_s(z) = J_s(z)ᵀJ_s(z) forms an importance map h(z) whose i‑th entry quantifies how much the i‑th element of z influences the overall task error.

WA‑RDO then replaces the per‑block SSE in the codec’s RDO with a weighted SSE: for each coding unit i, the cost becomes

( ẑ_i – z_i )ᵀ diag(h_i) ( ẑ_i – z_i ) + τ‖ẑ_i – z_i‖² + λ R_i(ẑ_i).

τ balances the weighted term against the ordinary SSE, and λ is scaled according to the norm of h(z) to keep the rate‑distortion trade‑off comparable to standard SSE‑RDO.

Two practical simplifications are proposed to make WA‑RDO viable on low‑power devices. (1) Temporal reuse (IW‑RDO): importance maps are computed only for intra‑coded (I) frames and reused for all P/B frames within the same group of pictures (GOP), exploiting temporal redundancy in video features. (2) Architectural freezing (FW‑RDO): for a given pair of wrappers and a fixed task, the importance pattern is largely independent of the specific input. By averaging importance maps over a large training set, a fixed map h_a is obtained offline; during encoding the codec uses this frozen map, eliminating the need to run g₂ or compute Jacobians at test time.

Experiments follow the MPEG FCM Test Model (FCTM) and its common test conditions, covering four datasets (object detection, instance segmentation, object tracking on both images and videos). The inner codecs are AV‑C (JM 19.1) and HEVC (HM 18.0). Baselines include the state‑of‑the‑art VVC‑based FCM (VVC with standard SSE‑RDO) and the same codecs with ordinary SSE‑RDO. Results show: • HEVC‑WA‑RDO matches VVC‑SSE‑RDO in BD‑accuracy, effectively closing the generation gap between codec families. • AV‑C‑WA‑RDO achieves the same task‑level performance as HEVC‑SSE‑RDO, confirming that the proposed weighting compensates for the lower compression efficiency of older codecs. • IW‑RDO and FW‑RDO incur only a modest runtime overhead (≈5–10 % on a single‑core CPU) while preserving the accuracy gains, demonstrating suitability for real‑time or edge deployments. • The importance maps exhibit high correlation across frames (temporal consistency) and across inputs for a fixed wrapper (architectural consistency), justifying the reuse strategies.

In summary, the paper presents a principled, Jacobian‑based weighting scheme that integrates the effect of the post‑processing wrapper into the codec’s RDO, and shows that with lightweight temporal and architectural approximations this scheme can be deployed on standard video codecs without sacrificing task performance. The approach opens the door to using legacy codecs in machine‑centric compression pipelines, reduces the need for costly VVC deployments, and suggests future work on low‑bit‑rate extensions, multi‑task weight sharing, and hardware‑accelerated implementations.

Comments & Academic Discussion

Loading comments...

Leave a Comment