MasalBench: A Benchmark for Contextual and Cross-Cultural Understanding of Persian Proverbs in LLMs

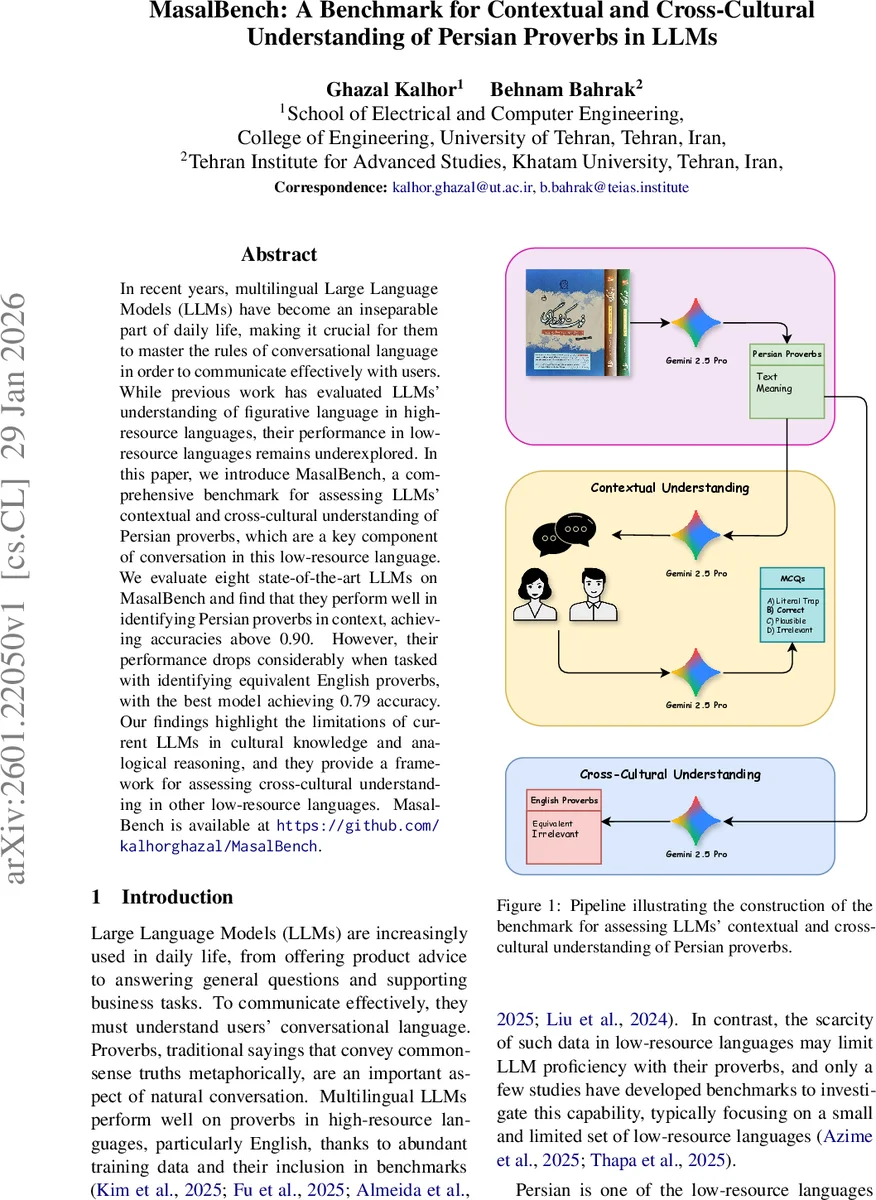

In recent years, multilingual Large Language Models (LLMs) have become an inseparable part of daily life, making it crucial for them to master the rules of conversational language in order to communicate effectively with users. While previous work has evaluated LLMs’ understanding of figurative language in high-resource languages, their performance in low-resource languages remains underexplored. In this paper, we introduce MasalBench, a comprehensive benchmark for assessing LLMs’ contextual and cross-cultural understanding of Persian proverbs, which are a key component of conversation in this low-resource language. We evaluate eight state-of-the-art LLMs on MasalBench and find that they perform well in identifying Persian proverbs in context, achieving accuracies above 0.90. However, their performance drops considerably when tasked with identifying equivalent English proverbs, with the best model achieving 0.79 accuracy. Our findings highlight the limitations of current LLMs in cultural knowledge and analogical reasoning, and they provide a framework for assessing cross-cultural understanding in other low-resource languages. MasalBench is available at https://github.com/kalhorghazal/MasalBench.

💡 Research Summary

MasalBench is a newly introduced benchmark designed to evaluate multilingual large language models (LLMs) on two complementary aspects of proverb comprehension: (1) contextual understanding of Persian proverbs within dialogue and (2) cross‑cultural mapping of Persian proverbs to their English equivalents. The benchmark consists of 1,000 multiple‑choice items for the contextual task and 700 binary‑choice items for the cross‑cultural task. For the contextual portion, each question presents a short, natural dialogue in Persian where the second speaker uses a proverb. The model must select the correct interpretation of the speaker’s intent from three distractors deliberately crafted as (i) a literal trap (the proverb’s word‑for‑word meaning), (ii) a plausible but incorrect meaning, and (iii) an irrelevant meaning. This design forces the model to go beyond surface lexical matching and to reason about metaphorical intent.

The cross‑cultural portion asks the model to identify which of two English proverbs best matches the meaning of a given Persian proverb. One English option is a true cultural equivalent, while the other is a distractor that may share surface features (tone, imagery, or wording) but conveys a different meaning. This task tests cultural grounding and analogical reasoning, which are not captured by standard translation benchmarks.

To construct MasalBench, the authors extracted 4,000+ Persian proverbs and their narrative explanations from the reference work “Persian Proverbs and Their Stories” (Rahmandost et al., 2011). They employed Gemini 2.5 Pro for OCR of the scanned volumes, followed by automated generation of dialogues and distractors, and then performed exhaustive manual verification by native Persian speakers. For the cross‑cultural pairs, Gemini 2.5 Pro was prompted to generate two English proverbs per Persian entry – one semantically aligned and one unrelated – and the authors manually confirmed authenticity and semantic alignment.

Eight state‑of‑the‑art multilingual LLMs were evaluated: Llama 4 Scout, Llama 3.3 70B Instruct, Qwen 2.5 72B Instruct, Qwen QwQ 32B, DeepSeek V3.1, DeepSeek R1, GPT‑4.1 mini, and GPT‑4o mini. All models were tested in a zero‑shot setting with deterministic decoding (temperature = 0, top‑p = 1) and the answer options were randomly shuffled to avoid positional bias.

Results for contextual understanding were uniformly high: every model achieved ≥ 0.90 accuracy, with DeepSeek V3.1 (671 B parameters) reaching the top score of 0.943. Model size and instruction‑tuning were the strongest predictors of performance; instruction‑tuned variants consistently outperformed reasoning‑focused counterparts within the same family. Error analysis revealed that when models erred, the mistake was overwhelmingly a “plausible” distractor (≈ 5‑8% of responses), indicating a tendency to select semantically coherent but incorrect interpretations. Literal and irrelevant errors were rare (≤ 2%).

Cross‑cultural performance was markedly lower. Accuracy ranged from 0.647 (Llama 4 Scout) to 0.793 (DeepSeek R1). Even the best model fell short of the contextual scores, underscoring the additional difficulty of cultural abstraction and analogical mapping. Instruction‑tuning still provided a modest boost, but the limited exposure of training data to culturally aligned proverb pairs constrained gains. The authors note that true English equivalents for many Persian proverbs are scarce, which inherently raises task difficulty.

The paper concludes that while modern multilingual LLMs have largely mastered intra‑language metaphorical reasoning, they remain limited in cross‑cultural transfer and analogical reasoning. The authors suggest future work should focus on (i) augmenting pre‑training corpora with culturally diverse proverb collections, (ii) developing multi‑modal or retrieval‑augmented approaches that can access cultural knowledge at inference time, and (iii) designing specialized prompts or fine‑tuning regimes that explicitly target analogical mapping. MasalBench itself is released publicly (GitHub link) to serve as a testbed for further research on culturally informed language understanding, especially for low‑resource languages.

Comments & Academic Discussion

Loading comments...

Leave a Comment