From Logits to Latents: Contrastive Representation Shaping for LLM Unlearning

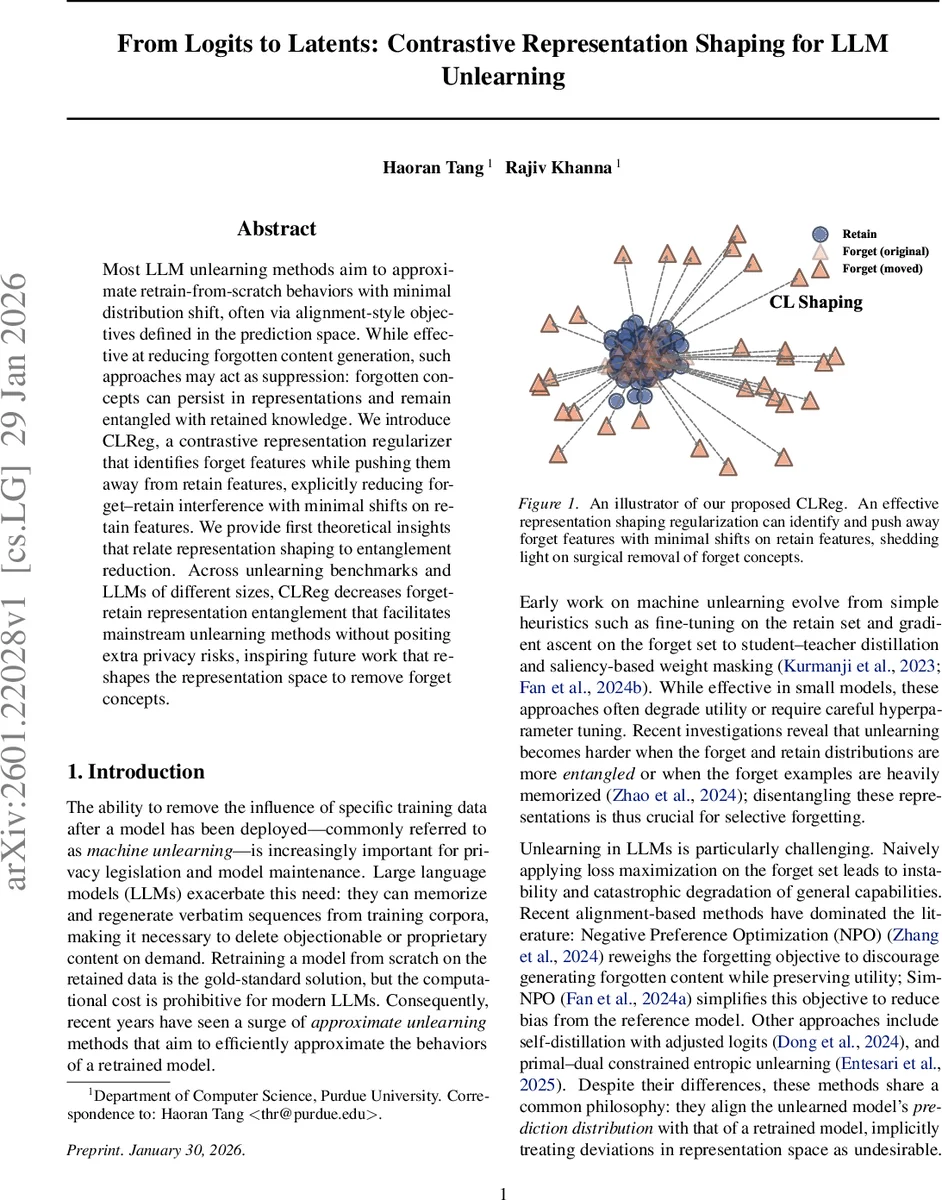

Most LLM unlearning methods aim to approximate retrain-from-scratch behaviors with minimal distribution shift, often via alignment-style objectives defined in the prediction space. While effective at reducing forgotten content generation, such approaches may act as suppression: forgotten concepts can persist in representations and remain entangled with retained knowledge. We introduce CLReg, a contrastive representation regularizer that identifies forget features while pushing them away from retain features, explicitly reducing forget-retain interference with minimal shifts on retain features. We provide first theoretical insights that relate representation shaping to entanglement reduction. Across unlearning benchmarks and LLMs of different sizes, CLReg decreases forget-retain representation entanglement that facilitates mainstream unlearning methods without positing extra privacy risks, inspiring future work that reshapes the representation space to remove forget concepts.

💡 Research Summary

The paper tackles the problem of post‑hoc removal of specific data or concepts from large language models (LLMs), a task that has become increasingly critical due to privacy regulations and the need to maintain model integrity after deployment. Traditional “unlearning” approaches for LLMs, such as Negative Preference Optimization (NPO), SimNPO, UnDIAL, and recent primal‑dual constrained methods, focus on aligning the model’s output distribution (logits) with that of a retrained model that has never seen the forgotten data. While these alignment‑style objectives successfully lower the probability of generating forgotten content, they largely leave the internal representations of the forgotten concepts untouched. Consequently, the forgotten information can remain entangled with retained knowledge in the hidden states, posing a risk of inadvertent leakage and making it harder to erase highly memorized content.

To address this limitation, the authors propose a contrastive representation regularizer called CLReg. The central idea is to explicitly reshape the latent space so that embeddings of forget examples are pushed away from embeddings of retain examples, thereby reducing representation‑level entanglement. CLReg operates on top of any existing unlearning algorithm, adding a contrastive loss term that works alongside the usual forget‑loss (e.g., SimNPO) and retain‑loss (standard language‑model training loss).

Construction of contrastive pairs

For each sample (x_f) in the forget set (F), two positive augmentations are generated:

- A dropout‑perturbed hidden representation (z_f).

- A paraphrased version of the same input, passed through the model and optionally dropout‑perturbed, yielding (z_f^{+}).

Dropout rates are sampled from a normal distribution (N(0.1,0.05)) and clamped to (

Comments & Academic Discussion

Loading comments...

Leave a Comment