Hierarchy of discriminative power and complexity in learning quantum ensembles

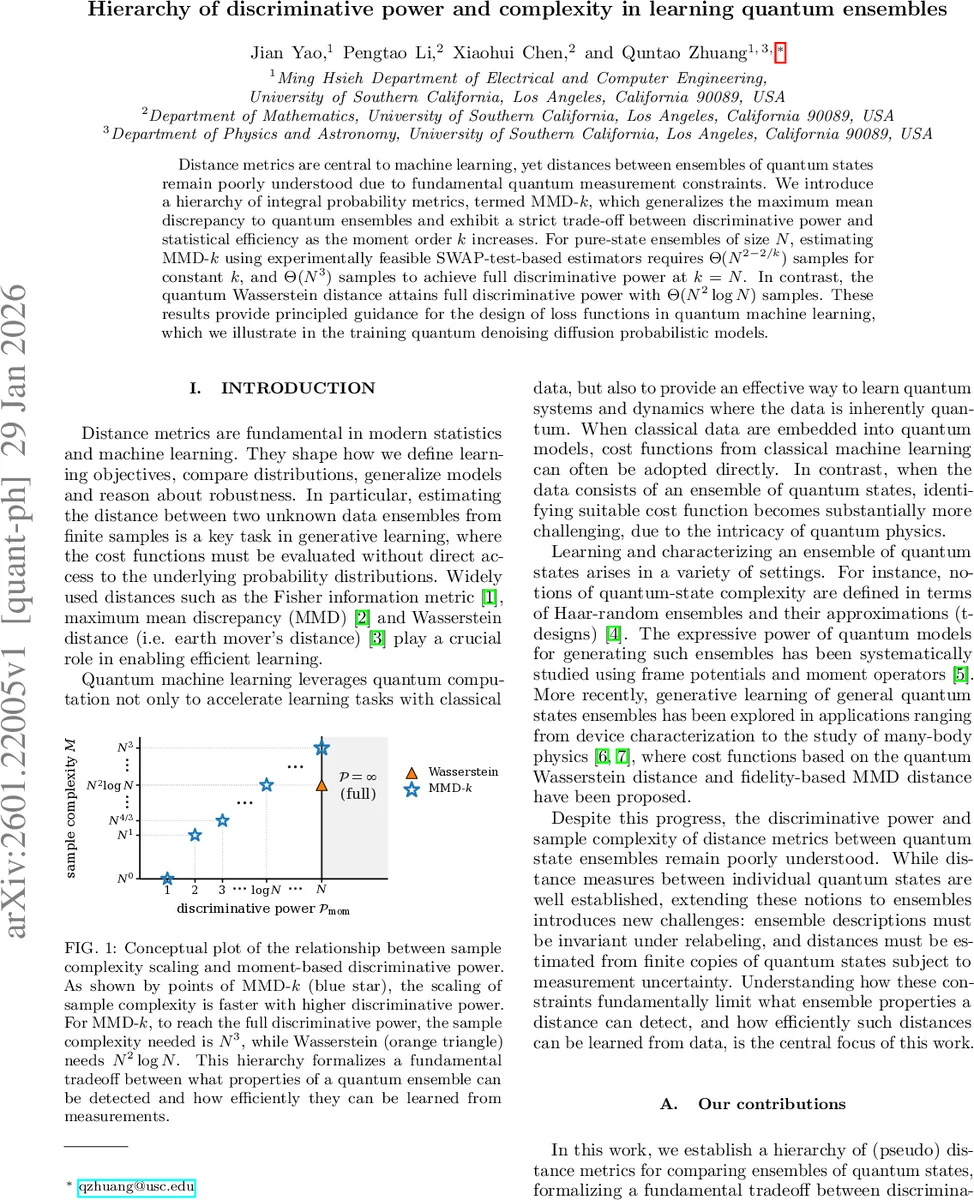

Distance metrics are central to machine learning, yet distances between ensembles of quantum states remain poorly understood due to fundamental quantum measurement constraints. We introduce a hierarchy of integral probability metrics, termed MMD-$k$, which generalizes the maximum mean discrepancy to quantum ensembles and exhibit a strict trade-off between discriminative power and statistical efficiency as the moment order $k$ increases. For pure-state ensembles of size $N$, estimating MMD-$k$ using experimentally feasible SWAP-test-based estimators requires $Θ(N^{2-2/k})$ samples for constant $k$, and $Θ(N^3)$ samples to achieve full discriminative power at $k = N$. In contrast, the quantum Wasserstein distance attains full discriminative power with $Θ(N^2 \log N)$ samples. These results provide principled guidance for the design of loss functions in quantum machine learning, which we illustrate in the training quantum denoising diffusion probabilistic models.

💡 Research Summary

The paper addresses a fundamental gap in quantum machine learning: how to quantify distances between ensembles of quantum states when only a limited number of copies of each state are available. Classical distance measures such as the Fisher information metric, maximum mean discrepancy (MMD), and Wasserstein distance are central to modern learning, but their quantum counterparts for ensembles have been under‑explored because quantum measurements are destructive and states cannot be cloned perfectly. The authors first formalize the notion of a quantum ensemble as a set of labeled pairs ((p_x,\rho_x)) and insist that any sensible distance must be invariant under permutations of the labels. They point out that the common classical‑quantum (CQ) state representation fails this invariance, especially for ensembles like Haar‑random collections where the label carries no physical meaning.

To fill this void, the authors introduce a hierarchy of integral probability metrics called MMD‑k, which generalizes the classical MMD by raising the inner‑product fidelity to the power (k). Formally, \

Comments & Academic Discussion

Loading comments...

Leave a Comment