PaddleOCR-VL-1.5: Towards a Multi-Task 0.9B VLM for Robust In-the-Wild Document Parsing

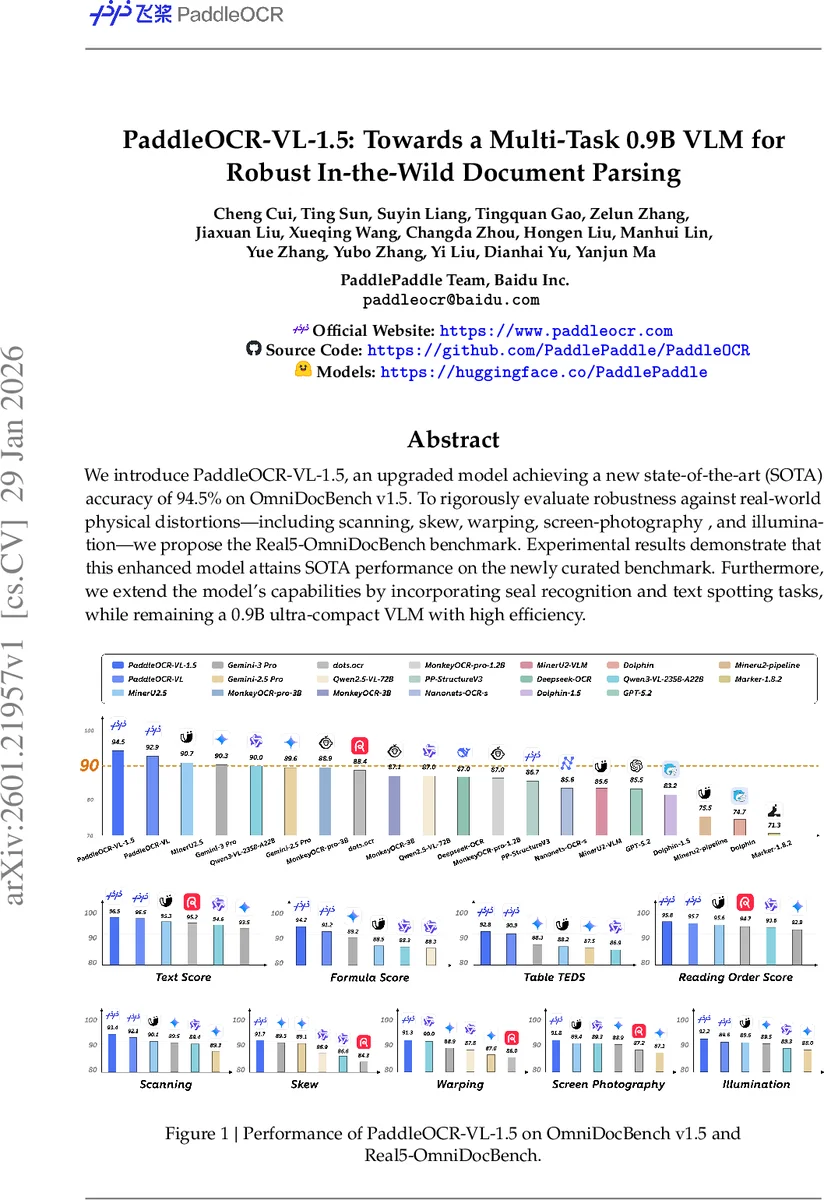

We introduce PaddleOCR-VL-1.5, an upgraded model achieving a new state-of-the-art (SOTA) accuracy of 94.5% on OmniDocBench v1.5. To rigorously evaluate robustness against real-world physical distortions, including scanning, skew, warping, screen-photography, and illumination, we propose the Real5-OmniDocBench benchmark. Experimental results demonstrate that this enhanced model attains SOTA performance on the newly curated benchmark. Furthermore, we extend the model’s capabilities by incorporating seal recognition and text spotting tasks, while remaining a 0.9B ultra-compact VLM with high efficiency. Code: https://github.com/PaddlePaddle/PaddleOCR

💡 Research Summary

PaddleOCR‑VL‑1.5 is a compact 0.9‑billion‑parameter vision‑language model (VLM) that pushes the state‑of‑the‑art in document parsing, especially under real‑world physical distortions. The authors first introduce a new benchmark, Real5‑OmniDocBench, which augments the existing OmniDocBench v1.5 with five distortion scenarios: scanning, warping, screen‑photography, illumination variations, and skew. This benchmark provides a rigorous test of robustness that has been largely missing from prior evaluations, which mostly focus on clean, digitally‑born PDFs.

The core technical contribution lies in two upgraded modules. The layout engine, PP‑DocLayoutV3, replaces the earlier rectangular detector with an RT‑DETR‑based instance‑segmentation architecture. It predicts pixel‑accurate masks for each layout element and simultaneously infers logical reading order via a global‑pointer mechanism embedded in the transformer decoder. By learning an anti‑symmetric precedence matrix and applying a voting‑based ranking, the model derives a globally consistent reading sequence in a single forward pass, eliminating the cascade of separate detection, segmentation, and ordering steps. Multi‑point (typically quadrilateral) bounding representations enable accurate handling of skewed or warped pages where axis‑aligned boxes would overlap or include excessive background.

The second module, PaddleOCR‑VL‑1.5‑0.9B, builds on the lightweight NaViT visual encoder, an Adaptive MLP connector, and the ERNIE‑4.5‑0.3B language backbone. The model is trained on an expanded pre‑training corpus of 46 million image‑text pairs (up from 29 M in the previous version) and explicitly incorporates seal‑recognition and text‑spotting data. Text spotting is realized by appending eight location tokens (four (x, y) pairs) to the textual output, using a dedicated vocabulary of <LOC_0>…<LOC_1000> tokens to represent normalized coordinates. This unified representation allows the model to generate both the recognized string and its precise quadrilateral location in a single autoregressive pass, a significant departure from traditional OCR pipelines that treat detection and recognition as separate stages.

Training proceeds in three stages. For layout analysis, 38 k high‑quality documents are annotated with multi‑point masks and reading order, and a distortion‑aware augmentation pipeline simulates real‑world deformations (perspective warp, uneven lighting, motion blur, etc.). The model is optimized with AdamW (weight decay = 1e‑4) at a constant learning rate of 2e‑4 for 150 epochs, batch size = 32, jointly updating the RT‑DETR backbone, mask head, and order decoder. For the recognition module, a two‑phase approach is used: (1) a large‑scale vision‑language alignment pre‑training (46 M pairs, max resolution = 2048 × 2048, LR = 5e‑5, 1 epoch) and (2) instruction‑fine‑tuning on 5.6 M task‑specific examples (OCR, table, formula, chart, seal, and spotting) with a lower learning rate (8e‑6) for one epoch. Reinforcement learning is later applied to refine the spatial token embeddings.

Empirically, PaddleOCR‑VL‑1.5 achieves 94.5 % accuracy on OmniDocBench v1.5, surpassing the previous best of 93.2 %. On the newly introduced Real5‑OmniDocBench, it records an overall 92.05 % accuracy, outperforming massive VLMs such as Qwen3‑VL‑235B and Gemini‑3 Pro despite having three orders of magnitude fewer parameters. Inference speed is also impressive: ~45 FPS on an NVIDIA A100 GPU and ~8 FPS on a standard Intel Xeon CPU, confirming its suitability for real‑time applications.

The paper’s contributions can be summarized as: (1) a unified instance‑segmentation + reading‑order transformer (PP‑DocLayoutV3) that handles non‑planar documents in a single pass; (2) a novel token‑based spatial encoding that enables end‑to‑end text spotting; (3) the integration of seal recognition, expanding the model’s utility for official documents; (4) the release of Real5‑OmniDocBench, filling a gap in robustness evaluation; and (5) demonstration that a sub‑billion‑parameter VLM can match or exceed the performance of much larger models when trained with targeted data augmentations and task‑specific pre‑training.

Limitations include potential degradation on extremely high‑resolution, densely‑packed charts where the 0.9 B backbone may miss fine details, and a current language coverage limited to roughly 30 languages, leaving room for broader multilingual support. Future work could explore further model compression (quantization, pruning, knowledge distillation) for edge devices, richer multimodal attention mechanisms to better fuse visual and textual cues, and expanding the benchmark to cover more script families and document genres.

Overall, PaddleOCR‑VL‑1.5 sets a new benchmark for compact, robust document parsing VLMs, offering a practical solution for real‑world deployments where documents are photographed, scanned under poor lighting, or physically deformed, while maintaining high accuracy and low computational cost.

Comments & Academic Discussion

Loading comments...

Leave a Comment