JADE: Bridging the Strategic-Operational Gap in Dynamic Agentic RAG

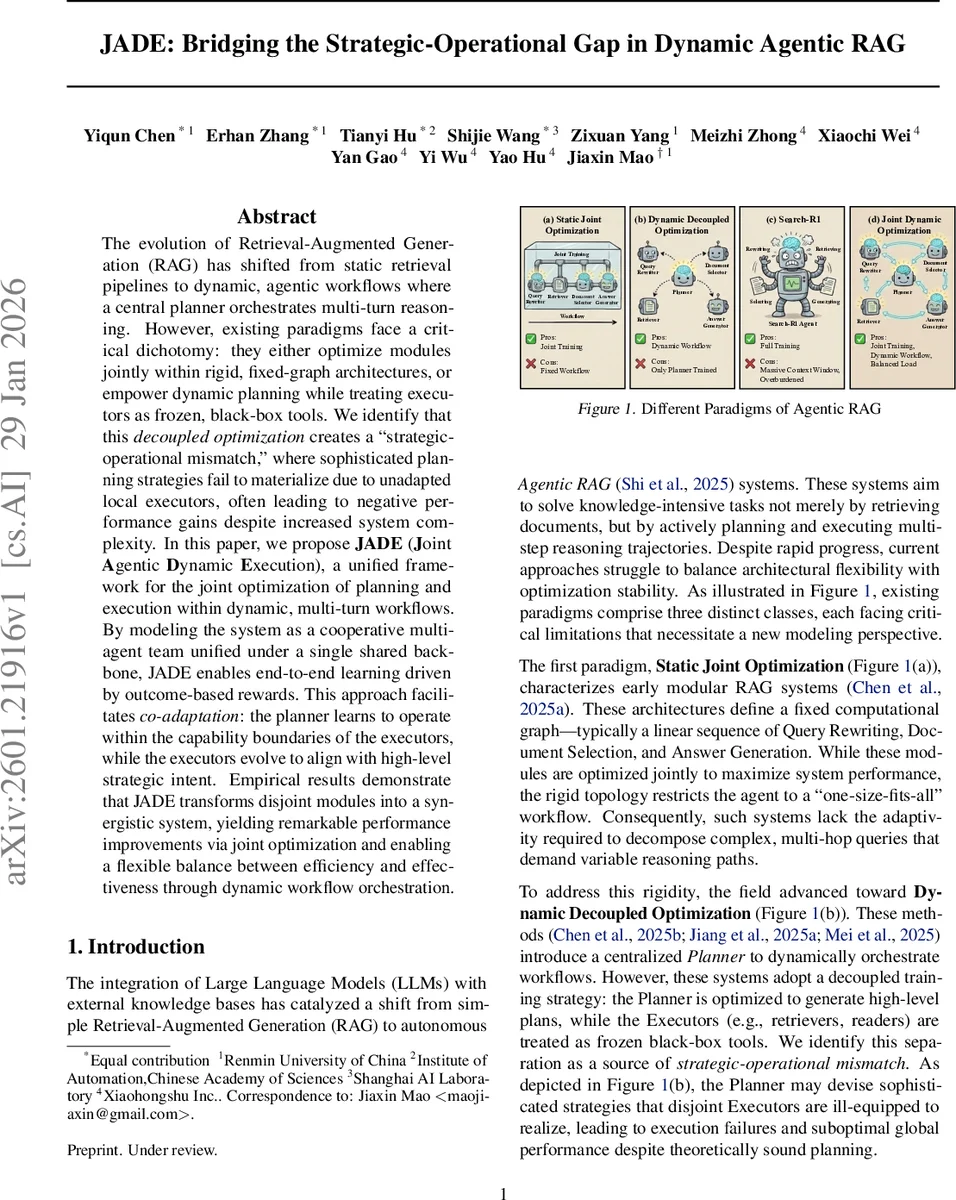

The evolution of Retrieval-Augmented Generation (RAG) has shifted from static retrieval pipelines to dynamic, agentic workflows where a central planner orchestrates multi-turn reasoning. However, existing paradigms face a critical dichotomy: they either optimize modules jointly within rigid, fixed-graph architectures, or empower dynamic planning while treating executors as frozen, black-box tools. We identify that this \textit{decoupled optimization} creates a ``strategic-operational mismatch,’’ where sophisticated planning strategies fail to materialize due to unadapted local executors, often leading to negative performance gains despite increased system complexity. In this paper, we propose \textbf{JADE} (\textbf{J}oint \textbf{A}gentic \textbf{D}ynamic \textbf{E}xecution), a unified framework for the joint optimization of planning and execution within dynamic, multi-turn workflows. By modeling the system as a cooperative multi-agent team unified under a single shared backbone, JADE enables end-to-end learning driven by outcome-based rewards. This approach facilitates \textit{co-adaptation}: the planner learns to operate within the capability boundaries of the executors, while the executors evolve to align with high-level strategic intent. Empirical results demonstrate that JADE transforms disjoint modules into a synergistic system, yielding remarkable performance improvements via joint optimization and enabling a flexible balance between efficiency and effectiveness through dynamic workflow orchestration.

💡 Research Summary

The paper introduces JADE (Joint Agentic Dynamic Execution), a novel framework that tackles a fundamental problem in modern Retrieval‑Augmented Generation (RAG) systems: the strategic‑operational mismatch. Traditional RAG pipelines fall into three categories. Static joint optimization fixes a linear graph of modules (query rewriting → document selection → answer generation) and trains them together, which yields stable learning but lacks the flexibility to handle complex, multi‑hop queries. Dynamic decoupled optimization adds a centralized planner that can compose arbitrary workflows, yet the planner is trained while the executors (retrievers, readers, generators) remain frozen black‑boxes, causing sophisticated plans to fail in practice. End‑to‑end monolithic models such as Search‑R1 fuse planning, search, and generation into a single network, but suffer from huge context windows, sparse rewards, and unstable training.

JADE unifies the best of both worlds. It models the entire interaction as a Multi‑Agent Semi‑Markov Decision Process (MSMDP) with partial observability. The global state consists of the original user query and a dynamically growing execution trace that records sub‑queries and their answers. At each round, a Planner agent selects a set of Executors and a directed workflow graph (sequential, parallel, or hybrid) that best addresses the current sub‑query. Executors then carry out concrete actions—query rewriting, document retrieval, answer generation—updating the global trace.

The key technical contribution is parameter sharing: all agents (Planner and Executors) are instantiated from a single large language model backbone parameterized by θ. Role‑specific system prompts differentiate functionality, but gradients flow through the same weights during training. This “co‑adaptation” forces the Planner to learn strategies that respect the actual capabilities of the Executors, while the Executors adapt to the Planner’s high‑level intent. Training optimizes a shared global reward (accuracy, efficiency, latency) via reinforcement learning, which mitigates the long‑horizon credit‑assignment problem that plagues sparse‑reward settings.

Empirically, the authors evaluate JADE on seven benchmark tasks covering multi‑hop question answering, open‑domain retrieval, and knowledge‑intensive reasoning. A 7‑billion‑parameter JADE model outperforms both decoupled planner‑executor systems and a GPT‑4o‑based baseline, achieving 4–6 percentage‑point gains in accuracy and up to 30 % reduction in computational cost on complex queries. Training converges twice as fast as monolithic Search‑R1 models, and memory consumption is lower thanks to the shared backbone.

The paper acknowledges limitations: the current design assumes homogeneous agents sharing the same LLM, which may restrict integration with specialized external modules (e.g., high‑performance search engines or domain‑specific readers). Future work proposes extending the framework with heterogeneous parameter‑exchange mechanisms or meta‑RL negotiation protocols to broaden applicability.

In summary, JADE presents a coherent solution to the strategic‑operational gap in dynamic agentic RAG by jointly optimizing planning and execution within a shared LLM, delivering both higher effectiveness and greater efficiency, and establishing a new direction for cooperative multi‑agent reinforcement learning in language‑driven information‑seeking systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment