Towards A Sustainable Future for Peer Review in Software Engineering

Peer review is the main mechanism by which the software engineering community assesses the quality of scientific results. However, the rapid growth in paper submissions to software engineering venues has outpaced the availability of qualified reviewers, creating a growing imbalance that risks constraining the long-term growth of the Software Engineering (SE) research community. Our vision for the future of the SE research landscape involves a more scalable, inclusive, and resilient peer review process that incorporates additional mechanisms for (1) attracting and training newcomers to serve as high-quality reviewers, (2) incentivizing more community members to serve as peer reviewers, and (3) cautiously integrating AI tools to support a high-quality review process.

💡 Research Summary

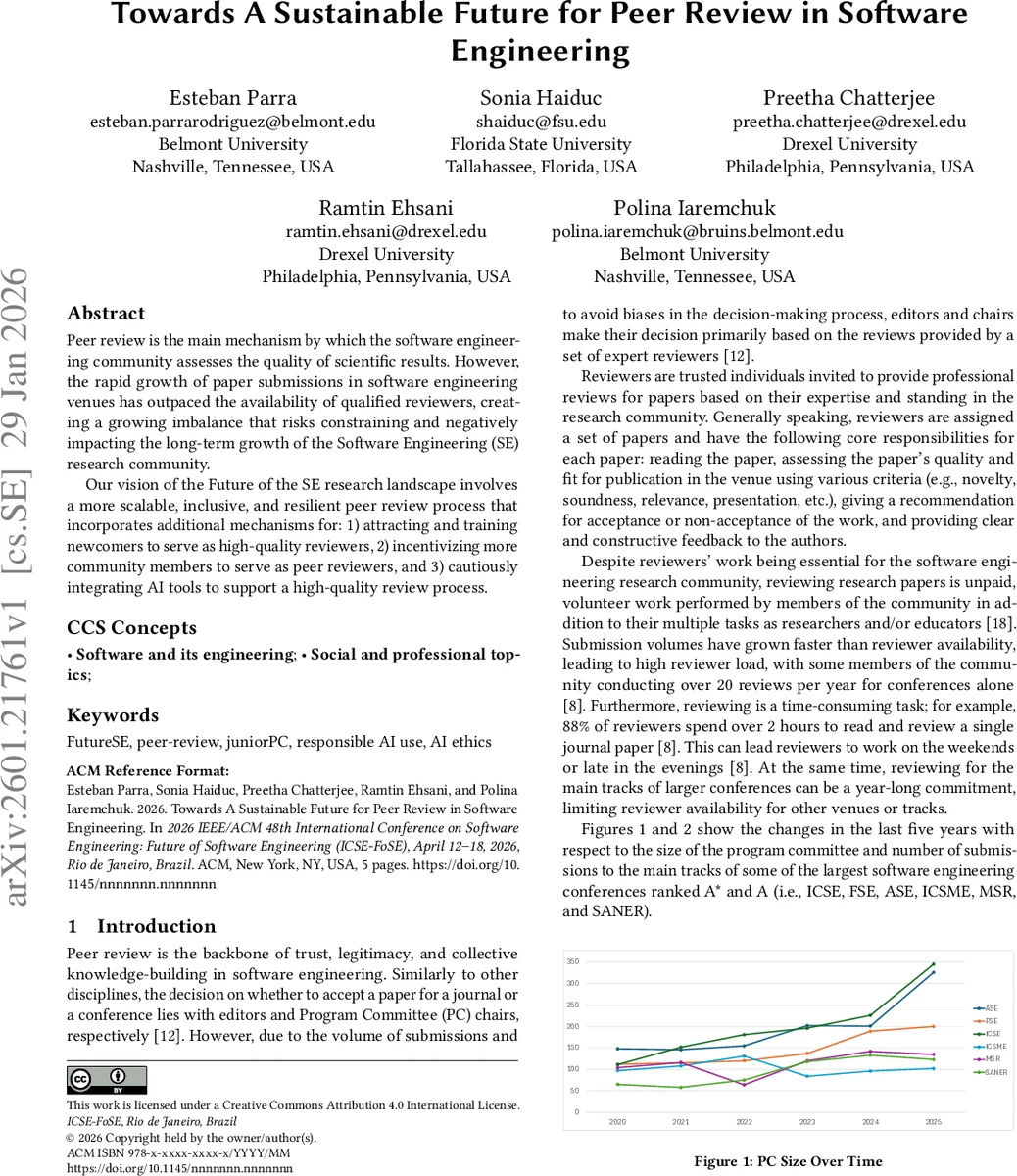

The paper addresses a growing sustainability crisis in the peer-review ecosystem of software engineering (SE) research. Empirical data from the major A* and A conferences (ICSE, FSE, ASE, etc.) show that over the past five years both program-committee (PC) size and the number of submitted papers have risen sharply, but submissions have grown faster than PCs, so the average reviewer workload has still increased, from roughly 8 to over 10 papers per reviewer per year. This imbalance is driven by three structural factors: (1) reviewing is unpaid volunteer work that competes with researchers' own publishing and teaching responsibilities; (2) existing incentives such as the Distinguished Reviewer Award recognize only a small elite of senior scholars, offering little motivation to newcomers; and (3) mentorship schemes such as Junior/Shadow PCs last only a single review cycle and have not been widely adopted across venues.
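Why can per-reviewer workload rise even while PCs grow? A simple counting model makes the arithmetic explicit. This is a sketch under assumptions the summary does not state: each submission receives a fixed number $r$ of reviews, the load is spread evenly over the $P$ PC members, and the model is applied per venue and cycle rather than per reviewer per year across venues. $N$ is the number of submissions and $W$ the average workload; subscripts 0 and 1 denote the earlier and later period.

```latex
\begin{aligned}
W &= \frac{rN}{P} \\
\frac{W_1}{W_0} &= \frac{rN_1/P_1}{rN_0/P_0} = \frac{N_1/N_0}{P_1/P_0} \\
\frac{N_1}{N_0} &= \frac{W_1}{W_0}\cdot\frac{P_1}{P_0} \gtrsim \frac{10}{8}\cdot\frac{P_1}{P_0} = 1.25\,\frac{P_1}{P_0}
\end{aligned}
```

The workload figures 8 and 10 come from the summary. For a purely illustrative example, if a PC had doubled in size, this model implies its submissions would have had to grow by a factor of at least about 2.5 for the average load to rise from 8 to 10. Workload would also rise if $r$ increased, which the sketch holds fixed.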

Compounding the problem, rapid advances in large language models (LLMs) have made it easy to generate low-quality, AI-only reviews, raising concerns about confidentiality breaches, hallucinated citations, and bias amplification. Current ACM and IEEE policies prohibit uploading manuscript content to non-confidential AI services, yet incidents such as the ICLR-26 case, in which thousands of reviews were fully AI-generated, highlight the urgency of a responsible AI strategy.

To build a more scalable, inclusive, and resilient peer-review process, the authors propose three interlocking mechanisms.

First, a scalable training pipeline for junior reviewers: an open-access online course modeled after CITI training, featuring video lectures from award-winning reviewers, systematic coverage of review criteria (novelty, methodology, presentation, etc.), quizzes, and a certificate of completion. Graduates would have their profiles (affiliation, expertise, ORCID) publicly listed, so that PC chairs and editors can find suitable reviewers efficiently.

Second, a responsible AI framework: clear policies that distinguish permissible AI uses (e.g., language polishing, code generation) from prohibited ones (confidentiality violations, generating entire reviews). Automated detection tools such as EditLens would flag AI-generated content in submissions and reviews, and violations would be penalized. After human reviewers submit their original reviews, an AI-generated supplemental review could be offered as a sanity check, provided it does not influence the initial human judgment.

Third, a diversified incentive system: beyond Distinguished Reviewer Awards, the authors propose a points-based service credit, reviewer-only workshops, and institutional recognition of reviewing as valued service in tenure and promotion dossiers.
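The responsible AI framework described above is essentially a small workflow: check a reviewer's declared AI uses against a policy, flag suspicious text for the chairs, and unlock the AI-generated supplemental review only once the human review is in. The Python sketch below models that ordering. The category names, the default-deny rule, the 0.8 threshold, and the idea that a detector returns a single score in [0, 1] are all assumptions for illustration; the summary does not specify EditLens's interface or any concrete thresholds.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class AIUse(Enum):
    # Example categories drawn from the summary; a real policy would be longer.
    LANGUAGE_POLISHING = "language polishing"                         # permitted
    CODE_GENERATION = "code generation"                               # permitted
    NONCONFIDENTIAL_UPLOAD = "upload to non-confidential AI service"  # prohibited
    FULL_REVIEW_GENERATION = "full review generation"                 # prohibited


# Default-deny (assumption): any declared use not listed here is a violation.
PERMITTED = frozenset({AIUse.LANGUAGE_POLISHING, AIUse.CODE_GENERATION})

# Hypothetical cut-off on a detector score in [0, 1]; not EditLens's actual output format.
DETECTOR_FLAG_THRESHOLD = 0.8


@dataclass
class Review:
    reviewer: str
    text: str
    declared_ai_uses: set = field(default_factory=set)
    submitted: bool = False
    flagged_for_chairs: bool = False
    ai_supplement: Optional[str] = None


def submit(review: Review, detector_score: float) -> list:
    """Try to submit a human-written review; return blocking issues (empty list = accepted)."""
    violations = sorted(u.value for u in review.declared_ai_uses if u not in PERMITTED)
    if violations:
        return [f"prohibited AI use declared: {v}" for v in violations]
    # A high detector score does not reject the review outright: detectors can be
    # wrong, so it only marks the review for a manual check by the chairs.
    review.flagged_for_chairs = detector_score >= DETECTOR_FLAG_THRESHOLD
    review.submitted = True
    return []


def attach_ai_supplement(review: Review, supplement: str) -> None:
    """Attach an AI-generated sanity-check review, but only after the human review is in."""
    if not review.submitted:
        raise PermissionError("submit the human review before requesting an AI supplement")
    review.ai_supplement = supplement
```

Because `attach_ai_supplement` raises `PermissionError` until `submit` has succeeded, the AI output cannot reach a reviewer before their own judgment is recorded, which is the property the proposal asks for. Routing detector hits to the chairs rather than rejecting them automatically is one possible design choice, made here because automated detectors can produce false positives.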

Collectively, these proposals aim to expand the reviewer pool, improve review quality, and mitigate the risks associated with unchecked AI usage. By making training universally accessible, linking reviewer credentials to searchable researcher identifiers, and establishing transparent AI policies, the SE community can move toward a peer‑review model that is both ethically sound and operationally sustainable. The paper concludes by calling for pilot implementations and longitudinal studies to evaluate the effectiveness of these mechanisms and to develop community‑wide standards for future practice.
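Linking reviewer credentials to searchable researcher identifiers amounts to a registry keyed by ORCID iD. The Python sketch below shows one way a PC chair might query such a registry. The profile fields follow the summary (affiliation, expertise, ORCID), while the `ReviewerRegistry` class, the `certified` flag, the expertise-overlap ranking, and the same-affiliation conflict rule are hypothetical illustrations rather than part of the proposal.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, Iterable, List, Optional, Set


@dataclass(frozen=True)
class ReviewerProfile:
    orcid: str                 # ORCID iD used as the public lookup key
    name: str
    affiliation: str
    expertise: FrozenSet[str]  # topic keywords declared by the reviewer
    certified: bool = False    # assumed: True once the training course is completed


class ReviewerRegistry:
    """Hypothetical public registry of reviewer profiles, keyed by ORCID iD."""

    def __init__(self) -> None:
        self._by_orcid: Dict[str, ReviewerProfile] = {}

    def register(self, profile: ReviewerProfile) -> None:
        # One entry per ORCID iD; registering again replaces the old profile.
        self._by_orcid[profile.orcid] = profile

    def lookup(self, orcid: str) -> Optional[ReviewerProfile]:
        return self._by_orcid.get(orcid)

    def candidates(self, topics: Iterable[str], author_affiliations: Set[str],
                   k: int = 5) -> List[ReviewerProfile]:
        """Return up to k certified reviewers ranked by topic overlap with a submission."""
        wanted = set(topics)
        scored = []
        for p in self._by_orcid.values():
            # Skip uncertified reviewers and (assumed) same-affiliation conflicts.
            if not p.certified or p.affiliation in author_affiliations:
                continue
            overlap = len(wanted & p.expertise)
            if overlap > 0:
                scored.append((overlap, p.orcid, p))
        # Largest overlap first; the ORCID iD breaks ties so results are deterministic.
        scored.sort(key=lambda t: (-t[0], t[1]))
        return [p for _, _, p in scored[:k]]
```

For instance, `registry.candidates({"testing", "fuzzing"}, {"Uni X"})` would return certified reviewers who list either topic and are not affiliated with Uni X, those covering both topics first. A real deployment would need richer conflict-of-interest data than affiliation alone.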
