RSGround-R1: Rethinking Remote Sensing Visual Grounding through Spatial Reasoning

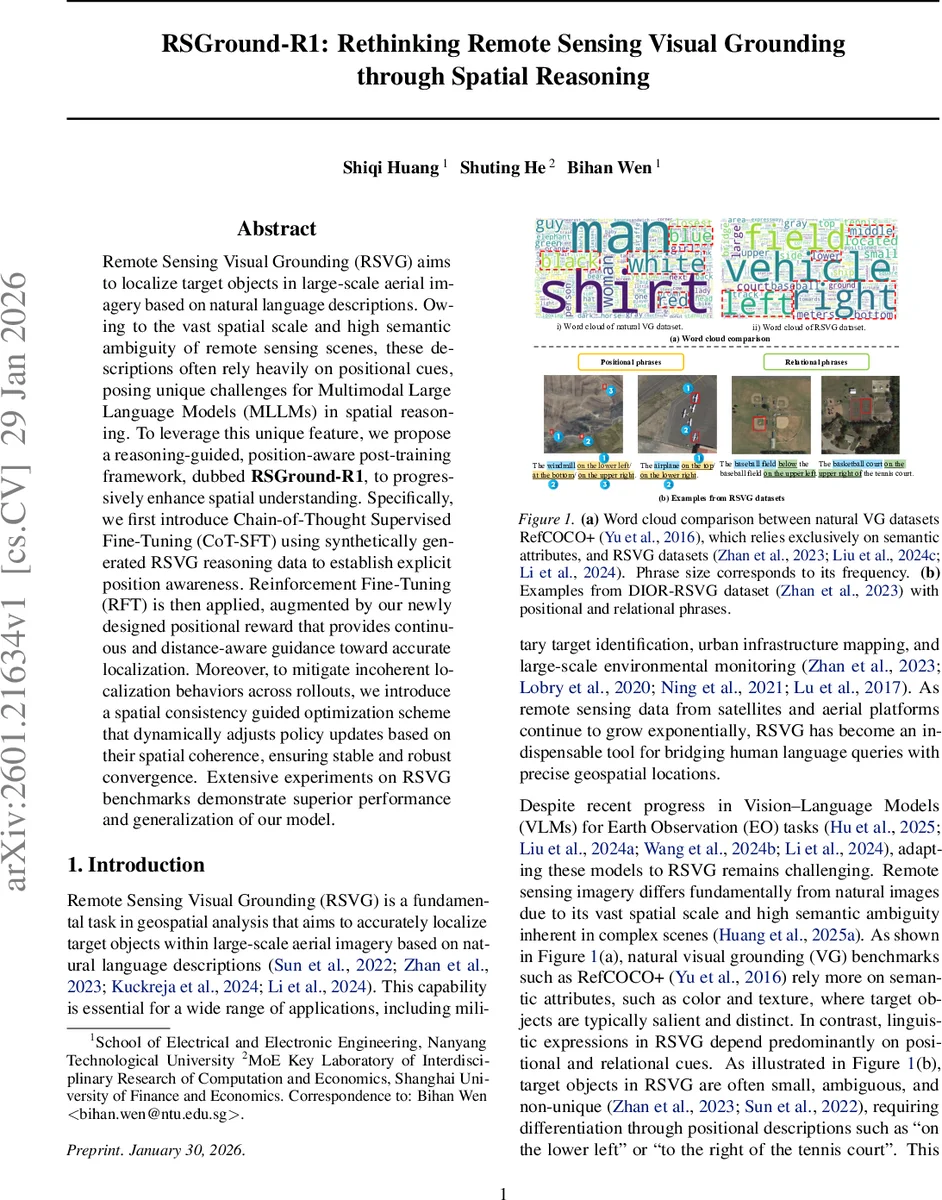

Remote Sensing Visual Grounding (RSVG) aims to localize target objects in large-scale aerial imagery based on natural language descriptions. Owing to the vast spatial scale and high semantic ambiguity of remote sensing scenes, these descriptions often rely heavily on positional cues, posing unique challenges for Multimodal Large Language Models (MLLMs) in spatial reasoning. To leverage this unique feature, we propose a reasoning-guided, position-aware post-training framework, dubbed \textbf{RSGround-R1}, to progressively enhance spatial understanding. Specifically, we first introduce Chain-of-Thought Supervised Fine-Tuning (CoT-SFT) using synthetically generated RSVG reasoning data to establish explicit position awareness. Reinforcement Fine-Tuning (RFT) is then applied, augmented by our newly designed positional reward that provides continuous and distance-aware guidance toward accurate localization. Moreover, to mitigate incoherent localization behaviors across rollouts, we introduce a spatial consistency guided optimization scheme that dynamically adjusts policy updates based on their spatial coherence, ensuring stable and robust convergence. Extensive experiments on RSVG benchmarks demonstrate superior performance and generalization of our model.

💡 Research Summary

The paper tackles Remote Sensing Visual Grounding (RSVG), a task that requires locating a target object in large‑scale aerial images from a natural‑language description. Unlike conventional visual grounding on natural images, RSVG heavily relies on positional and relational phrases because objects are often small, ambiguous, and non‑unique. Existing multimodal large language models (MLLMs) struggle with such spatial reasoning, especially when the predicted region does not overlap with the ground‑truth, yielding zero gradient from the usual IoU reward.

To address these challenges, the authors propose RSGround‑R1, a two‑stage post‑training framework that progressively equips an MLLM with explicit spatial reasoning capabilities.

Stage 1 – Chain‑of‑Thought Supervised Fine‑Tuning (CoT‑SFT). A synthetic dataset of 30 k “think‑and‑answer” examples is generated using a strong MLLM (Qwen2.5‑VL‑72B). Each sample contains an image, a referring expression, a step‑by‑step reasoning trace (enclosed in <think>…</think>) and the ground‑truth bounding box (enclosed in <answer>…</answer>). Low‑quality samples are filtered out. The base model (Qwen2.5‑VL‑3B) is then fine‑tuned to maximize the conditional likelihood of the concatenated reasoning‑plus‑answer sequence. This cold‑start aligns the model with a structured, position‑aware thinking process, enabling it to generate interpretable spatial chains rather than a single raw coordinate guess.

Stage 2 – Reinforcement Fine‑Tuning (RFT). After CoT‑SFT, the model becomes both the policy and the reference network for a Group Relative Policy Optimization (GRPO) loop. Two novel augmentations are introduced:

- Positional Reward for Spatial Guidance. Instead of relying solely on IoU, a continuous reward is computed from the Euclidean distance between the predicted box center and the ground‑truth center, passed through a Gaussian kernel. The reward rises smoothly as the prediction approaches the target, providing informative gradients even when IoU = 0.

- Spatial Consistency Guided Optimization. Remote‑sensing scenes contain densely packed, visually similar objects, causing rollouts for the same query to scatter spatially. The authors quantify the dispersion of bounding‑box centers within each query group and re‑weight the GRPO loss (

L_SC = w * L_GRPO) so that highly inconsistent rollouts receive larger penalties. This encourages the policy to produce coherent, stable predictions across multiple samples.

The overall loss combines format correctness, IoU, the new positional reward, and the consistency‑based weighting.

Experiments. The method is evaluated on several RSVG benchmarks (DIOR‑RSVG, RSIT‑Ground, etc.). RSGround‑R1 outperforms prior state‑of‑the‑art approaches by 6–9 absolute points in mean IoU and shows marked gains on queries that are purely positional. Notably, the system achieves these results while using less than 10 % of the full training data, demonstrating the efficiency of the CoT‑SFT warm‑start and the dense positional signal. Ablation studies confirm that removing CoT‑SFT, the positional reward, or the consistency weighting each leads to a substantial drop in performance, underscoring the necessity of all three components.

Contributions.

- A cold‑start CoT‑SFT strategy that injects explicit spatial reasoning into MLLMs for remote sensing.

- A continuous, distance‑aware positional reward that supplies gradient information even under zero‑overlap conditions.

- A spatial‑consistency guided optimization scheme that stabilizes policy updates by penalizing dispersed rollouts.

- A unified RSGround‑R1 framework that combines the above to achieve strong, generalizable grounding across multiple remote‑sensing datasets.

In summary, RSGround‑R1 demonstrates that equipping MLLMs with step‑wise spatial thinking, dense positional feedback, and consistency‑aware learning dramatically improves their ability to interpret positional language in aerial imagery, opening the door for more reliable language‑driven GIS, disaster response, and urban‑planning applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment