Noise as a Probe: Membership Inference Attacks on Diffusion Models Leveraging Initial Noise

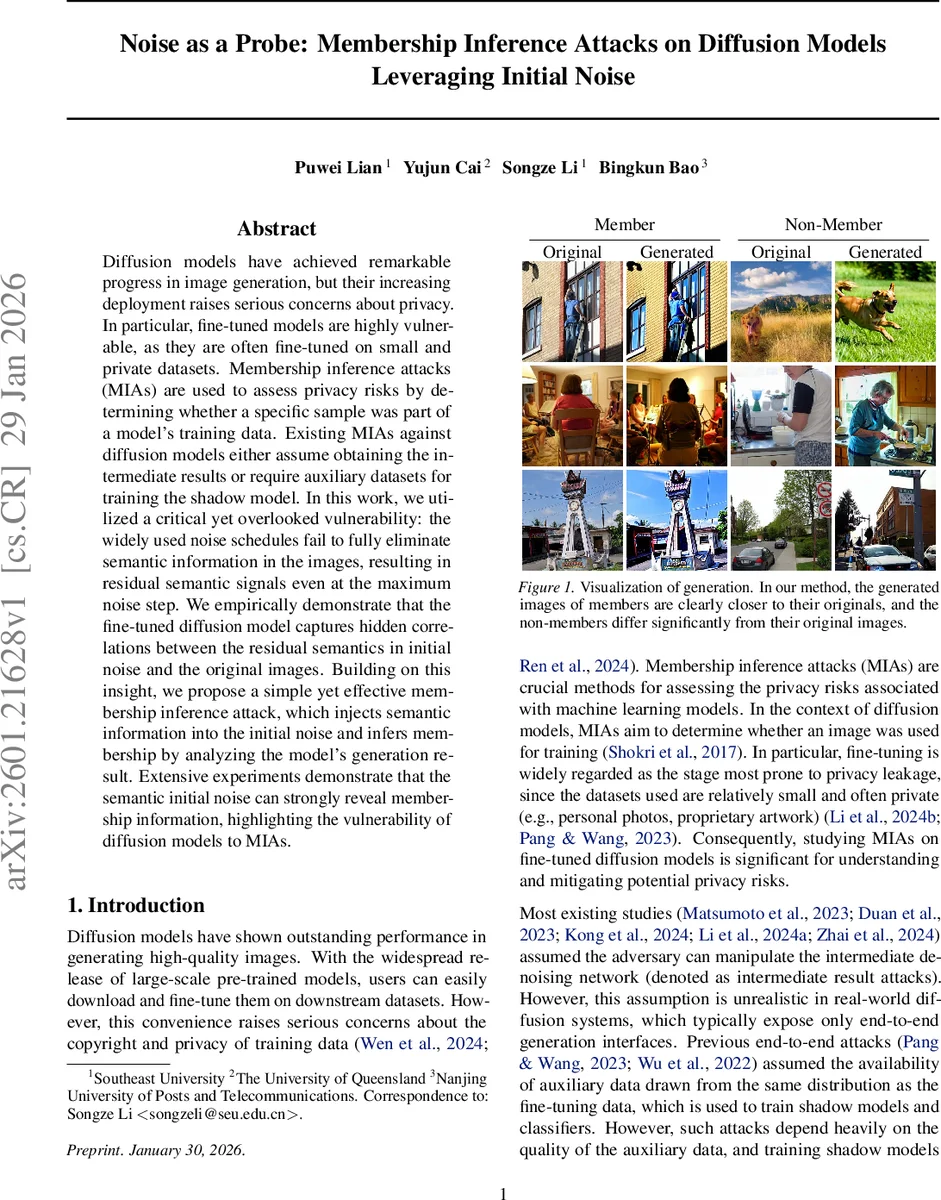

Diffusion models have achieved remarkable progress in image generation, but their increasing deployment raises serious concerns about privacy. In particular, fine-tuned models are highly vulnerable, as they are often fine-tuned on small and private datasets. Membership inference attacks (MIAs) are used to assess privacy risks by determining whether a specific sample was part of a model’s training data. Existing MIAs against diffusion models either assume obtaining the intermediate results or require auxiliary datasets for training the shadow model. In this work, we utilized a critical yet overlooked vulnerability: the widely used noise schedules fail to fully eliminate semantic information in the images, resulting in residual semantic signals even at the maximum noise step. We empirically demonstrate that the fine-tuned diffusion model captures hidden correlations between the residual semantics in initial noise and the original images. Building on this insight, we propose a simple yet effective membership inference attack, which injects semantic information into the initial noise and infers membership by analyzing the model’s generation result. Extensive experiments demonstrate that the semantic initial noise can strongly reveal membership information, highlighting the vulnerability of diffusion models to MIAs.

💡 Research Summary

The paper investigates a previously overlooked privacy vulnerability in diffusion‑based image generation models, especially when such models are fine‑tuned on small, private datasets. Traditional membership inference attacks (MIAs) on diffusion models either assume the attacker can query intermediate denoising steps or rely on auxiliary data to train shadow models. Both assumptions are unrealistic or costly in real‑world deployments. The authors discover that the widely used noise schedules do not completely erase semantic information from the original image, even at the final diffusion timestep T. By measuring the signal‑to‑noise ratio (SNR) for linear, cosine, and Stable Diffusion schedules, they show that SNR(T) remains non‑zero (e.g., 4.68 × 10⁻³ for Stable Diffusion), indicating residual semantic signals.

Leveraging the DDIM inversion technique, the authors demonstrate that one can recover a “semantic initial noise” – a noisy latent that still encodes the content of the original image. They find that fine‑tuned diffusion models inadvertently learn to exploit these residual signals, establishing a hidden correlation between the initial noise and the training data. Evidence is provided through cross‑attention heatmaps and normalized ℓ₂ distance statistics: when generating from semantic noise, member samples reconstruct much more faithfully than non‑members.

The proposed attack proceeds in two steps. First, a publicly available pre‑trained diffusion model is used to perform DDIM inversion on a target image and its textual prompt, yielding semantic initial noise (x_T^{\text{sem}}). Second, this noise (together with the same prompt) is fed to the black‑box fine‑tuned target model, which produces a final image. The attacker then computes a similarity metric (e.g., normalized ℓ₂ distance or SSIM) between the generated image and the original. A low distance indicates membership, while a high distance suggests non‑membership. No access to model parameters, intermediate denoising outputs, or shadow models is required; only the ability to supply custom initial noise is assumed, which is supported by many diffusion libraries (e.g., HuggingFace Diffusers).

Extensive experiments on MS‑COCO, Flickr, and other datasets show that the attack achieves an Area Under the ROC Curve (AUC) of 90.46 % and a true‑positive rate of 21.80 % at a false‑positive rate of 1 %. The semantic noise consistently reduces the ℓ₂ distance for members (Δ ≈ –0.10 to –0.16) while sometimes increasing it for non‑members (Δ ≈ +0.04 to +0.11). These results outperform prior end‑to‑end attacks that require auxiliary data or shadow model training, demonstrating that the initial noise itself is a powerful probe of membership.

The paper’s contributions are threefold: (1) identifying and quantifying residual semantic information in the final diffusion timestep; (2) introducing a simple, practical MIA that exploits DDIM inversion to inject semantics into the initial noise; (3) empirically validating the attack’s effectiveness across multiple datasets and fine‑tuning settings.

In the discussion, the authors suggest defensive directions: redesigning noise schedules to push SNR(T) toward zero, applying regularization during fine‑tuning to suppress over‑learning of semantic residuals, and limiting exposure of generation outputs or metadata. The work highlights a new class of privacy risks for diffusion models and calls for reconsideration of noise schedule design and fine‑tuning practices in privacy‑sensitive applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment