Beyond Imitation: Reinforcement Learning for Active Latent Planning

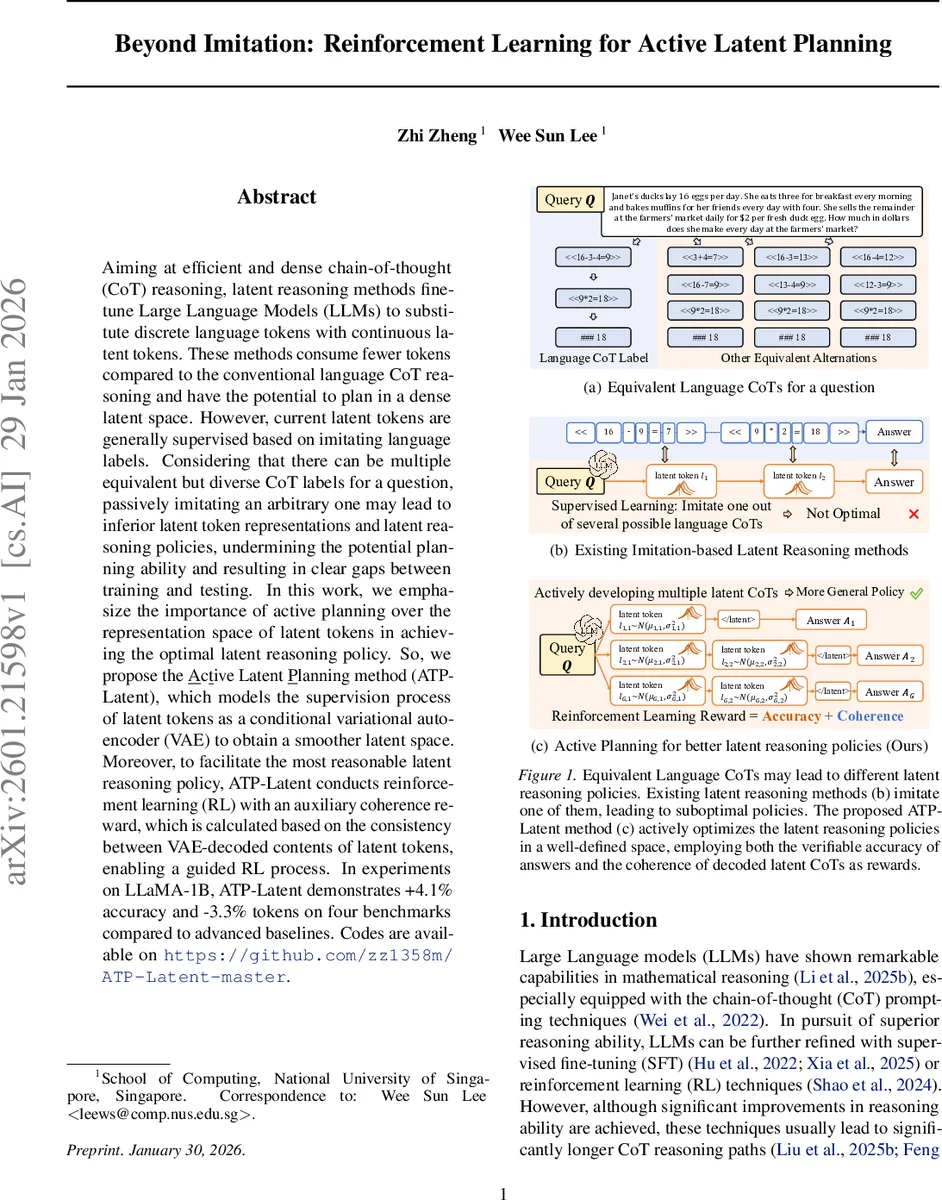

Aiming at efficient and dense chain-of-thought (CoT) reasoning, latent reasoning methods fine-tune Large Language Models (LLMs) to substitute discrete language tokens with continuous latent tokens. These methods consume fewer tokens compared to the conventional language CoT reasoning and have the potential to plan in a dense latent space. However, current latent tokens are generally supervised based on imitating language labels. Considering that there can be multiple equivalent but diverse CoT labels for a question, passively imitating an arbitrary one may lead to inferior latent token representations and latent reasoning policies, undermining the potential planning ability and resulting in clear gaps between training and testing. In this work, we emphasize the importance of active planning over the representation space of latent tokens in achieving the optimal latent reasoning policy. So, we propose the \underline{A}c\underline{t}ive Latent \underline{P}lanning method (ATP-Latent), which models the supervision process of latent tokens as a conditional variational auto-encoder (VAE) to obtain a smoother latent space. Moreover, to facilitate the most reasonable latent reasoning policy, ATP-Latent conducts reinforcement learning (RL) with an auxiliary coherence reward, which is calculated based on the consistency between VAE-decoded contents of latent tokens, enabling a guided RL process. In experiments on LLaMA-1B, ATP-Latent demonstrates +4.1% accuracy and -3.3% tokens on four benchmarks compared to advanced baselines. Codes are available on https://github.com/zz1358m/ATP-Latent-master.

💡 Research Summary

The paper addresses a fundamental limitation of current latent chain‑of‑thought (CoT) reasoning methods, which replace discrete language tokens with continuous latent tokens to achieve denser reasoning and lower token consumption. Existing approaches train these latent tokens by imitating a single language‑based CoT label, even though many equivalent CoT formulations can solve the same problem. This “one‑shot imitation” leads to biased latent representations and sub‑optimal reasoning policies, causing a noticeable gap between training and inference performance.

To overcome this, the authors propose Active Latent Planning (ATP‑Latent), a two‑stage framework that combines a conditional variational auto‑encoder (VAE) with reinforcement learning (RL) equipped with an auxiliary coherence reward.

Stage 1 – Supervised Fine‑Tuning (SFT)

A conditional VAE is trained on question‑answer pairs. The encoder maps a question (and optionally a partial answer) to a sequence of continuous latent tokens L = (l₁,…,l_T). The decoder reconstructs a language‑level CoT from L. The KL‑divergence term forces the latent space to follow a smooth, approximately Gaussian distribution, ensuring that nearby points correspond to semantically similar reasoning steps. A “stop‑head” MLP predicts when the latent sequence should terminate, preventing unnecessary token generation.

Stage 2 – Reinforcement Learning

After the VAE has shaped a well‑behaved latent space, an RL policy π_θ generates latent tokens step‑by‑step. The reward function consists of two components:

- Accuracy – a binary signal indicating whether the final answer is correct.

- Coherence – computed as the consistency between multiple VAE‑decoded CoTs generated from the same latent policy. Concretely, the policy samples several latent trajectories; each is decoded back to language using the VAE decoder, and the pairwise overlap (e.g., token‑level BLEU or exact match of intermediate results) is measured. High coherence indicates that the latent policy is stable and produces semantically aligned reasoning, even if the exact token sequence differs.

The RL objective follows a variant of Group‑Relative Policy Optimization (GRPO), where the advantage is estimated across a batch of sampled trajectories. Exploration is performed by sampling from the VAE’s posterior distribution rather than adding ad‑hoc Gaussian noise, which preserves the smoothness of the latent manifold.

Implementation Details

The authors augment LLaMA‑1B with two lightweight heads: a latent‑head (MLP) that predicts the next latent vector and a stop‑head (MLP) that predicts termination. The VAE is first warm‑started using a conventional latent‑CoT method (Coconut) to provide initial latent sequences. During RL, eight candidate trajectories are generated per question; the combined reward (accuracy + coherence) guides policy updates via PPO‑style clipping.

Experiments

Four numerical reasoning benchmarks are used: GSM‑8K, MathQA, MultiArith, and AQUA‑RAT. Baselines include imitation‑only latent methods (Coconut, SIM‑CoT) and recent RL‑based language‑CoT approaches (Lite PPO, DR‑GRPO). ATP‑Latent achieves an average +4.1 percentage‑point improvement in accuracy and ‑3.3 % reduction in token count relative to the strongest baselines. Moreover, the “overthinking” phenomenon—where RL tends to generate longer CoTs—is mitigated, leading to faster inference (≈12 % latency reduction). Ablation studies confirm that (i) removing the VAE’s KL regularization degrades both coherence and accuracy, and (ii) omitting the coherence reward causes the policy to revert to deterministic imitation, losing the benefits of active planning.

Analysis and Limitations

The success of ATP‑Latent hinges on the quality of the VAE decoder: if the decoder cannot faithfully reconstruct language CoTs, the coherence reward becomes noisy. The current work evaluates only a 1‑billion‑parameter model; scaling to larger LLaMA variants (7B, 13B, 70B) may present computational challenges. Training time is roughly 1.5× that of pure imitation methods due to the additional VAE and RL phases.

Conclusion

ATP‑Latent demonstrates that moving from passive imitation to active planning in the latent token space yields tangible gains in both efficiency and correctness. By enforcing a smooth latent manifold via a conditional VAE and guiding exploration with a decoder‑based coherence signal, the method aligns latent reasoning trajectories with the underlying semantic structure of the problem. The paper opens avenues for further research on latent‑space RL, especially concerning scalability, decoder robustness, and integration with larger LLMs.

Comments & Academic Discussion

Loading comments...

Leave a Comment