Language Models as Artificial Learners: Investigating Crosslinguistic Influence

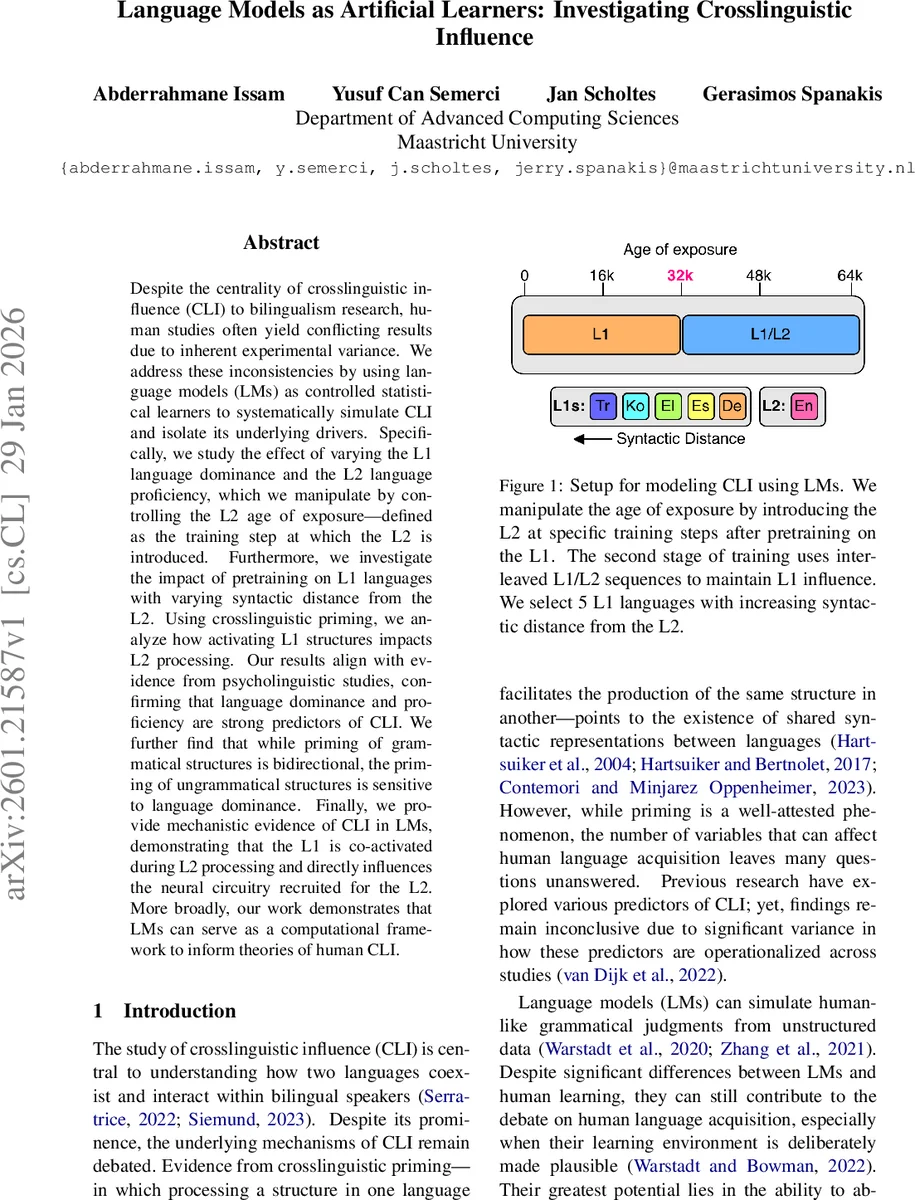

Despite the centrality of crosslinguistic influence (CLI) to bilingualism research, human studies often yield conflicting results due to inherent experimental variance. We address these inconsistencies by using language models (LMs) as controlled statistical learners to systematically simulate CLI and isolate its underlying drivers. Specifically, we study the effect of varying the L1 language dominance and the L2 language proficiency, which we manipulate by controlling the L2 age of exposure – defined as the training step at which the L2 is introduced. Furthermore, we investigate the impact of pretraining on L1 languages with varying syntactic distance from the L2. Using cross-linguistic priming, we analyze how activating L1 structures impacts L2 processing. Our results align with evidence from psycholinguistic studies, confirming that language dominance and proficiency are strong predictors of CLI. We further find that while priming of grammatical structures is bidirectional, the priming of ungrammatical structures is sensitive to language dominance. Finally, we provide mechanistic evidence of CLI in LMs, demonstrating that the L1 is co-activated during L2 processing and directly influences the neural circuitry recruited for the L2. More broadly, our work demonstrates that LMs can serve as a computational framework to inform theories of human CLI.

💡 Research Summary

The paper tackles the longstanding problem of inconsistent findings in cross‑linguistic influence (CLI) research by treating language models (LMs) as controlled statistical learners. The authors manipulate two key variables that are known to affect CLI in human bilinguals—L1 dominance and L2 proficiency—by varying the “age of exposure,” i.e., the training step at which the second language (L2) is introduced. Four exposure points (0, 16 K, 32 K, 48 K steps) are used, with interleaved L1/L2 sequences after L2 introduction to prevent catastrophic forgetting. This design mirrors human acquisition: earlier L2 exposure yields higher L2 proficiency and lower L1 dominance, while later exposure yields the opposite.

Five L1 languages (German, Spanish, Greek, Korean, Turkish) are paired with English as L2. Their syntactic distance from English is quantified using the number of non‑shared WALS features (ranging from 9 to 27). By selecting languages with increasing distance, the study directly tests whether structural dissimilarity modulates CLI.

Three complementary evaluation streams are employed. First, the BLiMP benchmark (67 k minimal pairs across 67 grammatical categories) measures grammatical accuracy. For each bilingual model, accuracy is compared to a monolingual English baseline that shares the same bilingual tokenizer, ensuring that any performance gap reflects CLI rather than vocabulary bias. The CLI effect is defined as the relative change in accuracy; positive values indicate interference, negative values indicate facilitation. Results show a clear negative correlation between syntactic distance and L2 accuracy, and a strong interaction with age of exposure: later L2 introduction (high L1 dominance, low L2 proficiency) produces the greatest interference, especially for distant L1 languages.

Second, the First Certificate in English (FCE) corpus, annotated with learner L1s, is transformed into a surprisal‑based acceptability task. The normalized surprisal difference (ΔS) quantifies how “surprising” errors from a given L1 are to a model trained with that L1 as primary. Models consistently assign lower ΔS to errors from their own L1, indicating a computational preference for L1‑specific interlanguage patterns—an effect that scales with L1 dominance and syntactic distance.

Third, a cross‑linguistic priming paradigm is implemented. Acceptable sentences from BLiMP are translated into each L1 using the NLLB‑200 model to create primes. The presence of an L1 prime before the L2 target pair is shown to improve L2 grammatical judgments, confirming bidirectional priming for grammatical structures. However, priming of ungrammatical structures only yields a benefit when L1 dominance is high, supporting the “separate‑but‑connected” hypothesis rather than a fully shared syntax account.

Mechanistically, the authors apply LogitLens to decode hidden states during L2 processing. They observe measurable activation of L1‑specific neurons, with the magnitude of activation increasing with L1 dominance and decreasing with syntactic distance. Additionally, neuron‑overlap analyses reveal that language pairs with smaller syntactic distance share more neurons, providing a concrete neural‑network substrate for the observed CLI effects.

Overall, the paper makes three major contributions: (1) a novel bilingual LM training framework that cleanly isolates L1 dominance and L2 proficiency via age‑of‑exposure manipulation; (2) systematic evidence that syntactic distance, L1 dominance, and L2 proficiency jointly predict CLI across grammatical accuracy, error‑type preferences, and priming behavior; and (3) mechanistic insight showing co‑activation of L1 representations during L2 processing, thereby offering a computational bridge to human CLI theories. By demonstrating that LMs can serve as precise, scalable testbeds for bilingual phenomena, the work opens a promising avenue for integrating computational modeling with psycholinguistic research.

Comments & Academic Discussion

Loading comments...

Leave a Comment