Depth-Recurrent Attention Mixtures: Giving Latent Reasoning the Attention it Deserves

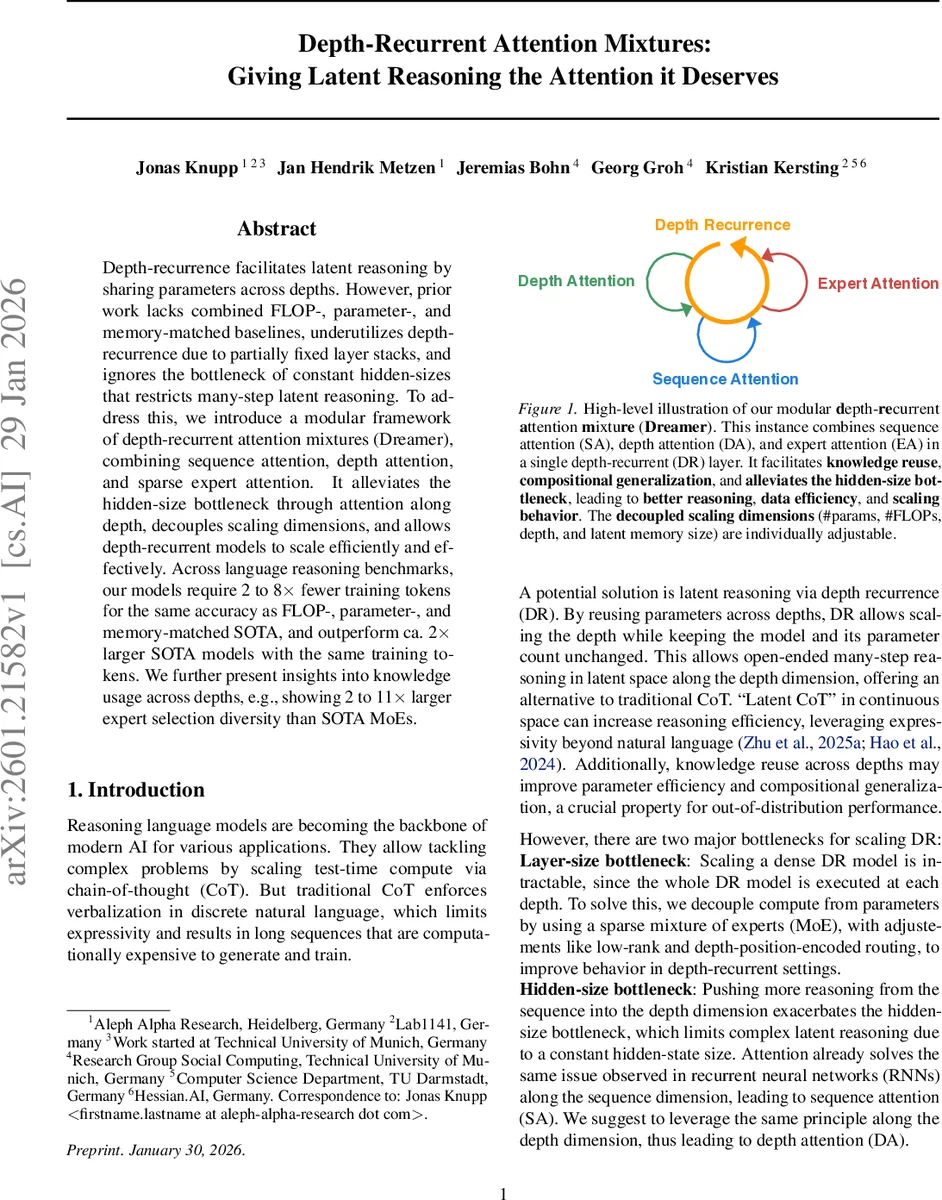

Depth-recurrence facilitates latent reasoning by sharing parameters across depths. However, prior work lacks combined FLOP-, parameter-, and memory-matched baselines, underutilizes depth-recurrence due to partially fixed layer stacks, and ignores the bottleneck of constant hidden-sizes that restricts many-step latent reasoning. To address this, we introduce a modular framework of depth-recurrent attention mixtures (Dreamer), combining sequence attention, depth attention, and sparse expert attention. It alleviates the hidden-size bottleneck through attention along depth, decouples scaling dimensions, and allows depth-recurrent models to scale efficiently and effectively. Across language reasoning benchmarks, our models require 2 to 8x fewer training tokens for the same accuracy as FLOP-, parameter-, and memory-matched SOTA, and outperform ca. 2x larger SOTA models with the same training tokens. We further present insights into knowledge usage across depths, e.g., showing 2 to 11x larger expert selection diversity than SOTA MoEs.

💡 Research Summary

The paper introduces Dreamer, a modular “Depth‑Recurrent Attention Mixture” architecture that unifies three orthogonal attention mechanisms—sequence attention (SA), depth attention (DA), and expert attention (EA)—into a single recurrent layer. Traditional depth‑recurrent Transformers share parameters across layers to increase depth without increasing parameter count, but they suffer from three major shortcomings: (1) lack of FLOP‑, parameter‑, and memory‑matched baselines, (2) under‑utilization of depth recurrence because of partially fixed layer stacks, and (3) a hidden‑size bottleneck that limits complex reasoning when depth is increased.

Dreamer addresses these issues by:

- Sequence Attention (SA) – identical to standard Transformer self‑attention, modeling token‑to‑token interactions.

- Depth Attention (DA) – applies dot‑product attention over the hidden states of the same token across previous recurrence steps. By treating the token batch as the “sequence” dimension and depth as the “time” dimension, DA re‑uses existing optimized attention kernels, incurs negligible extra memory (the KV cache is per‑token and overwritten each step), and directly mitigates the hidden‑size bottleneck in the depth dimension.

- Expert Attention (EA) – a sparse mixture‑of‑experts (MoE) where each expert is a lightweight MLP. Queries and keys are computed for each token‑depth pair, and routing scores are obtained via a sigmoid‑top‑K operation with a bias‑balancing term similar to DeepSeek‑V3. A shared always‑active expert is added to stabilize top‑1 routing gradients.

All three attention modules share their projection weights across depths, which would normally destabilize training. To keep training stable, the authors (a) apply RMSNorm to the residual stream itself, (b) replace dense projection matrices with linear MoE layers, and (c) introduce a shared linear expert that is scaled by the maximum routing score but has stopped‑gradient flow, effectively providing a baseline output for each depth.

The authors also devise a bi‑variate coordinate‑descent procedure to match FLOPs, parameter count, and memory usage across models. They first binary‑search the intermediate MLP size of EA, then adjust the number of experts, ensuring that each comparison model is tightly matched to the Dreamer baseline.

Empirically, Dreamer is evaluated on a suite of language reasoning benchmarks (GSM‑8K, ARC‑E, MMLU, etc.). When matched for FLOPs, parameters, and memory, Dreamer achieves the same or higher accuracy while requiring 2‑8× fewer training tokens than state‑of‑the‑art (SOTA) MoE baselines. Moreover, a 1 B‑parameter Dreamer matches the performance of a 2× larger SOTA model trained on the same token budget. Analysis of expert utilization shows 2‑11× greater selection diversity across depths compared to existing MoEs, indicating that depth‑aware routing indeed spreads knowledge more evenly.

Additional ablations isolate the contributions of depth recurrence and depth attention. Depth attention alone yields noticeable gains by alleviating the hidden‑size bottleneck, while depth recurrence combined with expert attention provides the largest efficiency improvements. Parallel variants that compute DA, SA, and EA simultaneously give a modest (~15 %) speedup at the cost of a 10‑20 % increase in error for 1 B‑scale models; the authors therefore adopt a partially parallel formulation (DA + SA computed together, EA added afterward).

The paper also offers qualitative insights: depth‑wise attention weights reveal that early depths focus on coarse semantic extraction, while deeper steps refine logical chains, effectively performing a “latent chain‑of‑thought” without expanding the token sequence. Expert selection patterns show that certain experts specialize in arithmetic, others in commonsense reasoning, and many are reused across depths, confirming the hypothesis that depth‑recurrent sharing promotes compositional generalization.

In summary, Dreamer contributes (1) a unified attention‑mixture framework that couples sequence, depth, and expert dimensions, (2) a novel depth‑attention mechanism that removes the hidden‑size bottleneck for deep recurrent reasoning, (3) a rigorous FLOP‑, parameter‑, and memory‑matched experimental protocol, and (4) extensive analysis of knowledge reuse across depths. These innovations enable scalable, token‑efficient latent reasoning and set a new baseline for future large‑scale language models that aim to combine parameter efficiency with deep, multi‑step reasoning capabilities.

Comments & Academic Discussion

Loading comments...

Leave a Comment