KromHC: Manifold-Constrained Hyper-Connections with Kronecker-Product Residual Matrices

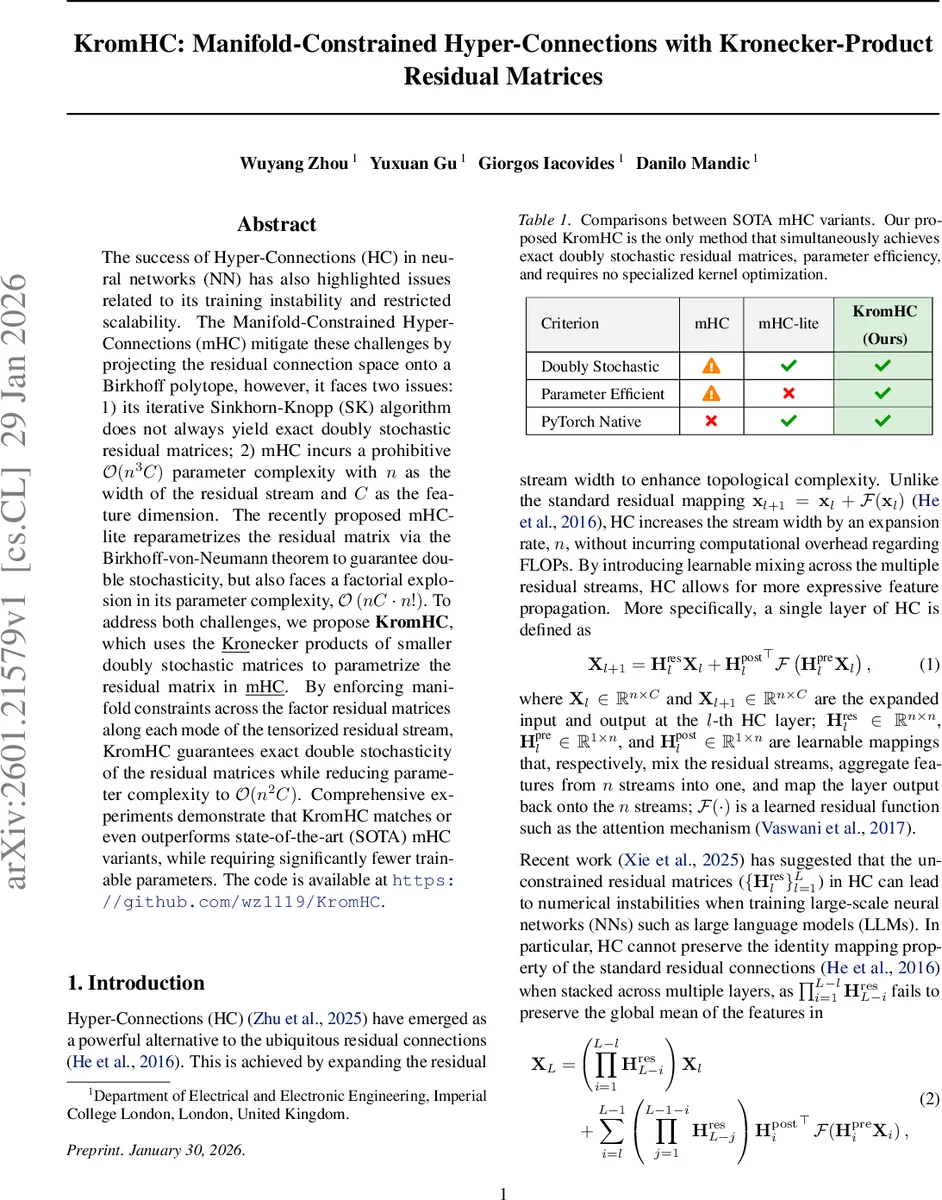

The success of Hyper-Connections (HC) in neural networks (NN) has also highlighted issues related to its training instability and restricted scalability. The Manifold-Constrained Hyper-Connections (mHC) mitigate these challenges by projecting the residual connection space onto a Birkhoff polytope, however, it faces two issues: 1) its iterative Sinkhorn-Knopp (SK) algorithm does not always yield exact doubly stochastic residual matrices; 2) mHC incurs a prohibitive $\mathcal{O}(n^3C)$ parameter complexity with $n$ as the width of the residual stream and $C$ as the feature dimension. The recently proposed mHC-lite reparametrizes the residual matrix via the Birkhoff-von-Neumann theorem to guarantee double stochasticity, but also faces a factorial explosion in its parameter complexity, $\mathcal{O} \left( nC \cdot n! \right)$. To address both challenges, we propose \textbf{KromHC}, which uses the \underline{Kro}necker products of smaller doubly stochastic matrices to parametrize the residual matrix in \underline{mHC}. By enforcing manifold constraints across the factor residual matrices along each mode of the tensorized residual stream, KromHC guarantees exact double stochasticity of the residual matrices while reducing parameter complexity to $\mathcal{O}(n^2C)$. Comprehensive experiments demonstrate that KromHC matches or even outperforms state-of-the-art (SOTA) mHC variants, while requiring significantly fewer trainable parameters. The code is available at \texttt{https://github.com/wz1119/KromHC}.

💡 Research Summary

The paper addresses two fundamental shortcomings of existing Hyper‑Connections (HC) and their manifold‑constrained variants. Standard HC expands the residual stream width but suffers from numerical instability because the learnable mixing matrix H_res is unconstrained. Manifold‑Constrained Hyper‑Connections (mHC) mitigate this by projecting H_res onto the Birkhoff polytope using a finite‑iteration Sinkhorn‑Knopp (SK) algorithm. While this improves stability, the SK procedure does not guarantee exact double‑stochasticity after a limited number of iterations, leading to error accumulation across layers. Moreover, mHC’s parameter count scales as O(n³C), where n is the residual‑stream width and C the feature dimension, making it impractical for large n.

A subsequent work, mHC‑lite, enforces exact double‑stochasticity by representing H_res as a convex combination of all n! permutation matrices, invoking the Birkhoff‑von‑Neumann theorem. This eliminates the SK approximation error but introduces a factorial explosion in parameters (O(nC·n!)), which quickly becomes infeasible even for moderate n.

KromHC is proposed as a unified solution that simultaneously guarantees exact double‑stochasticity and dramatically reduces parameter complexity. The key insight is to factor the residual mixing matrix H_res as a Kronecker product of several much smaller doubly stochastic matrices U₁,…,U_K. By factorizing the residual‑stream width n into a product of small integers (e.g., n = 2^K, with each i_k = 2), each factor U_k lives in a low‑dimensional Birkhoff polytope B_{i_k}. These factors can be parametrized efficiently as convex combinations of the i_k × i_k permutation matrices, requiring only i_k² parameters (or even fewer when i_k = 2).

Theoretical support comes from two classic results. First, the Birkhoff‑von‑Neumann theorem guarantees that any doubly stochastic matrix can be expressed as a convex combination of permutation matrices, ensuring that each U_k can be learned while staying within the polytope. Second, the Kronecker product closure property states that the Kronecker product of doubly stochastic matrices is itself doubly stochastic. Consequently, H_res = U₁ ⊗ U₂ ⊗ … ⊗ U_K is exactly doubly stochastic without any iterative projection.

Implementation-wise, the input tensor X_l ∈ ℝ^{n×C} is reshaped into an order‑(K+1) tensor of shape i₁ × i₂ × … × i_K × C. Each mode‑k is multiplied by the corresponding factor U_k, while the final mode (C) uses an identity matrix. After mode‑wise multiplication, the tensor is matricized back to the original n × C shape, yielding H_res X_l. This operation is equivalent to a matrix‑vector multiplication with the Kronecker‑product matrix, which can be executed efficiently using standard PyTorch operations; no custom kernels are required.

Empirical evaluation spans three domains: large‑scale language model pre‑training, image classification (CIFAR‑10/100), and time‑series forecasting. Across all tasks, KromHC matches or exceeds the performance of both mHC and mHC‑lite while using dramatically fewer parameters. In a 24‑layer transformer LLM experiment, mHC exhibited a mean absolute error (MAE) of ~0.05 in the column‑sum of the product of residual matrices, indicating drift from doubly stochasticity. Both KromHC and mHC‑lite achieved zero MAE, confirming exact stochasticity and improved training stability. Parameter counts illustrate the efficiency: with n = 1024 and C = 512, mHC requires ~540 M parameters, mHC‑lite would need on the order of 10⁹ (practically infeasible), whereas KromHC uses only ~130 M.

In summary, KromHC delivers three decisive advantages: (1) exact double‑stochasticity without iterative projection, (2) parameter complexity reduced from O(n³C) or factorial to O(n²C), and (3) seamless integration into existing HC pipelines using only native tensor operations. This makes it a practical and scalable alternative for deep networks that wish to exploit wide residual streams without sacrificing stability. Future directions suggested include exploring non‑binary factor dimensions, adaptive selection of the number of Kronecker factors, and combining the approach with other tensor decomposition techniques to further boost expressive power.

Comments & Academic Discussion

Loading comments...

Leave a Comment