EmboCoach-Bench: Benchmarking AI Agents on Developing Embodied Robots

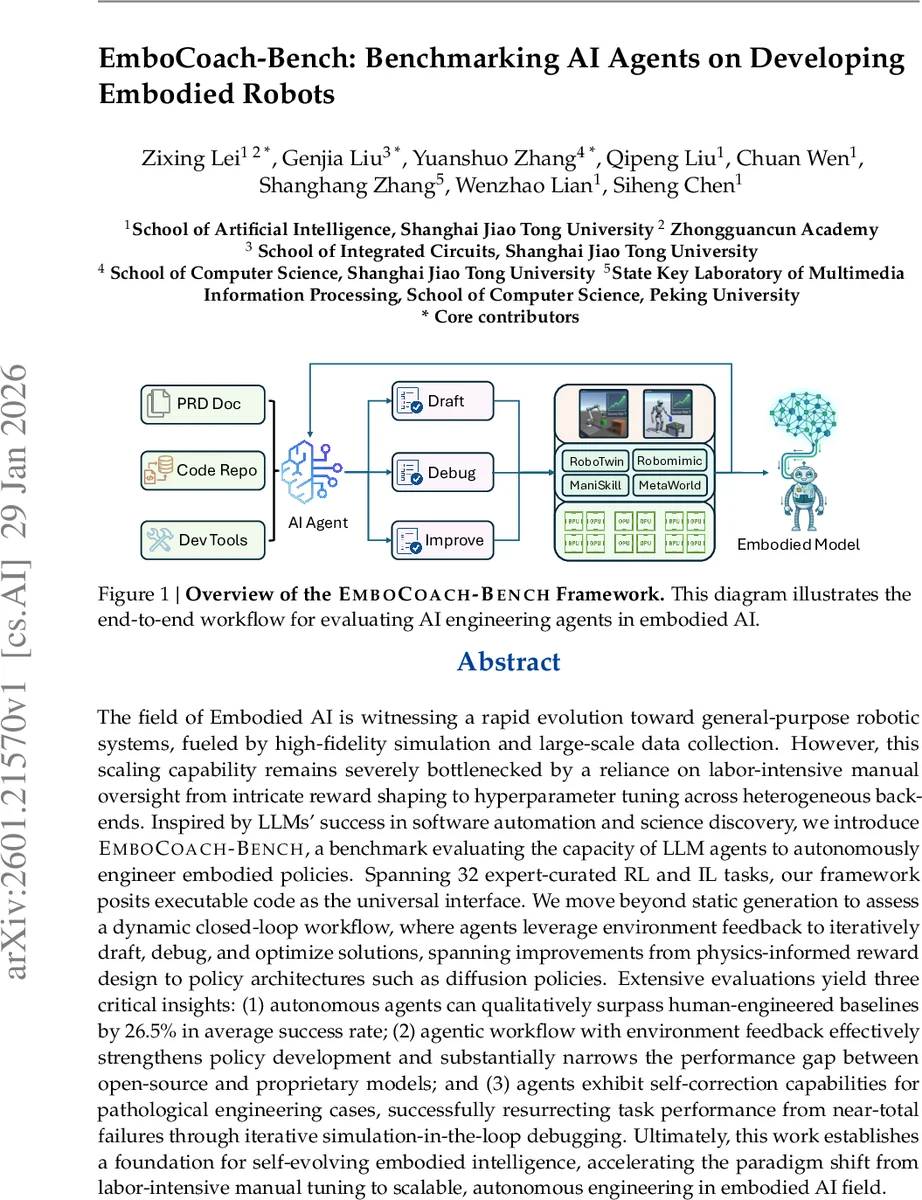

The field of Embodied AI is witnessing a rapid evolution toward general-purpose robotic systems, fueled by high-fidelity simulation and large-scale data collection. However, this scaling capability remains severely bottlenecked by a reliance on labor-intensive manual oversight from intricate reward shaping to hyperparameter tuning across heterogeneous backends. Inspired by LLMs’ success in software automation and science discovery, we introduce \textsc{EmboCoach-Bench}, a benchmark evaluating the capacity of LLM agents to autonomously engineer embodied policies. Spanning 32 expert-curated RL and IL tasks, our framework posits executable code as the universal interface. We move beyond static generation to assess a dynamic closed-loop workflow, where agents leverage environment feedback to iteratively draft, debug, and optimize solutions, spanning improvements from physics-informed reward design to policy architectures such as diffusion policies. Extensive evaluations yield three critical insights: (1) autonomous agents can qualitatively surpass human-engineered baselines by 26.5% in average success rate; (2) agentic workflow with environment feedback effectively strengthens policy development and substantially narrows the performance gap between open-source and proprietary models; and (3) agents exhibit self-correction capabilities for pathological engineering cases, successfully resurrecting task performance from near-total failures through iterative simulation-in-the-loop debugging. Ultimately, this work establishes a foundation for self-evolving embodied intelligence, accelerating the paradigm shift from labor-intensive manual tuning to scalable, autonomous engineering in embodied AI field.

💡 Research Summary

The paper introduces EmboCoach‑Bench, a novel benchmark designed to evaluate large language model (LLM) agents on the full lifecycle of embodied robot policy development. While recent advances in high‑fidelity simulators and large‑scale manipulation datasets have propelled Embodied AI toward general‑purpose robotic systems, progress remains hampered by labor‑intensive manual engineering—reward shaping, hyper‑parameter tuning, and integration across heterogeneous back‑ends. EmboCoach‑Bench addresses this bottleneck by treating code as the universal interface between an autonomous agent and the simulated environment, and by embedding a closed‑loop “draft‑debug‑improve” workflow that leverages real‑time simulation feedback.

The benchmark comprises 32 expert‑curated tasks spanning reinforcement learning (RL) and imitation learning (IL) across four widely adopted simulators: ManiSkill, RoboTwin, RoboMimic, and MetaWorld. Each task is formalized as a triplet T = (D_prd, P_sys, C_env). D_prd (product requirement document) specifies the high‑level objective, persona, resource budget, and immutable metrics; P_sys defines the operational protocol and tool APIs (file editor, terminal, debugger); C_env provides the full codebase—including baseline skeletons, Docker‑wrapped simulation containers, and configuration files—that the agent must explore, modify, compile, and execute. This formulation forces the agent to perform system‑level reasoning, physics‑aware reward design, and environment‑in‑the‑loop debugging rather than merely generating isolated code snippets.

The core technical contribution is the integration of environment feedback into the agent’s decision‑making loop. After each code edit, the agent triggers a simulation run, observes quantitative signals (success rate, collision events, slippage) and qualitative logs, and uses these observations to guide subsequent edits. This iterative process mirrors how human robotics researchers iteratively refine policies, but it is fully automated. The authors implement the workflow using a unified IDE‑like interface that combines OpenHands‑style code generation with ML‑Master orchestration, allowing the agent to invoke tools via deterministic, schema‑driven prompts.

Experiments evaluate several state‑of‑the‑art LLMs—including GPT‑4‑Turbo, Claude‑2, and Llama‑2‑70B—in both feedback‑enabled and feedback‑disabled modes. Results show that (1) agents surpass human‑engineered baselines by an average of 26.5 percentage points in success rate; (2) the feedback‑driven workflow is essential—without it, even the strongest models achieve sub‑10 % success, whereas with feedback they consistently exceed the baselines; (3) agents demonstrate robust self‑correction. In pathological cases where the initial code yields near‑zero performance (e.g., overly sparse rewards, invalid hyper‑parameters), the agent parses error logs, identifies root causes, and applies targeted patches, restoring performance to 70 %+ on many tasks. Moreover, the benchmark includes modern policy architectures such as Diffusion Policies, Action Chunking Transformers (ACT), and Vision‑Language‑Action (VLA) models; agents automatically respect architectural constraints (e.g., matching skip‑connection dimensions, limiting diffusion inference steps) and tune hyper‑parameters to meet strict resource budgets.

The benchmark’s design also embeds realistic constraints—wall‑clock training limits, GPU memory caps, immutable evaluation metrics, and restricted file access—forcing agents to operate under conditions akin to real research labs or industry pipelines. By doing so, EmboCoach‑Bench not only measures raw coding ability but also assesses strategic planning, resource management, and safety‑aware engineering.

In conclusion, EmboCoach‑Bench provides compelling evidence that LLM‑driven agents can autonomously engineer embodied policies, close the performance gap between open‑source and proprietary models, and recover from severe engineering failures through iterative simulation‑in‑the‑loop debugging. The work paves the way for scaling embodied AI development from artisanal, expert‑driven processes to industrial‑strength, self‑evolving systems. Future directions include extending the benchmark to higher‑fidelity physics engines, real‑world robot hardware, multi‑agent collaboration, and joint optimization of data collection and policy learning.

Comments & Academic Discussion

Loading comments...

Leave a Comment