FlexCausal: Flexible Causal Disentanglement via Structural Flow Priors and Manifold-Aware Interventions

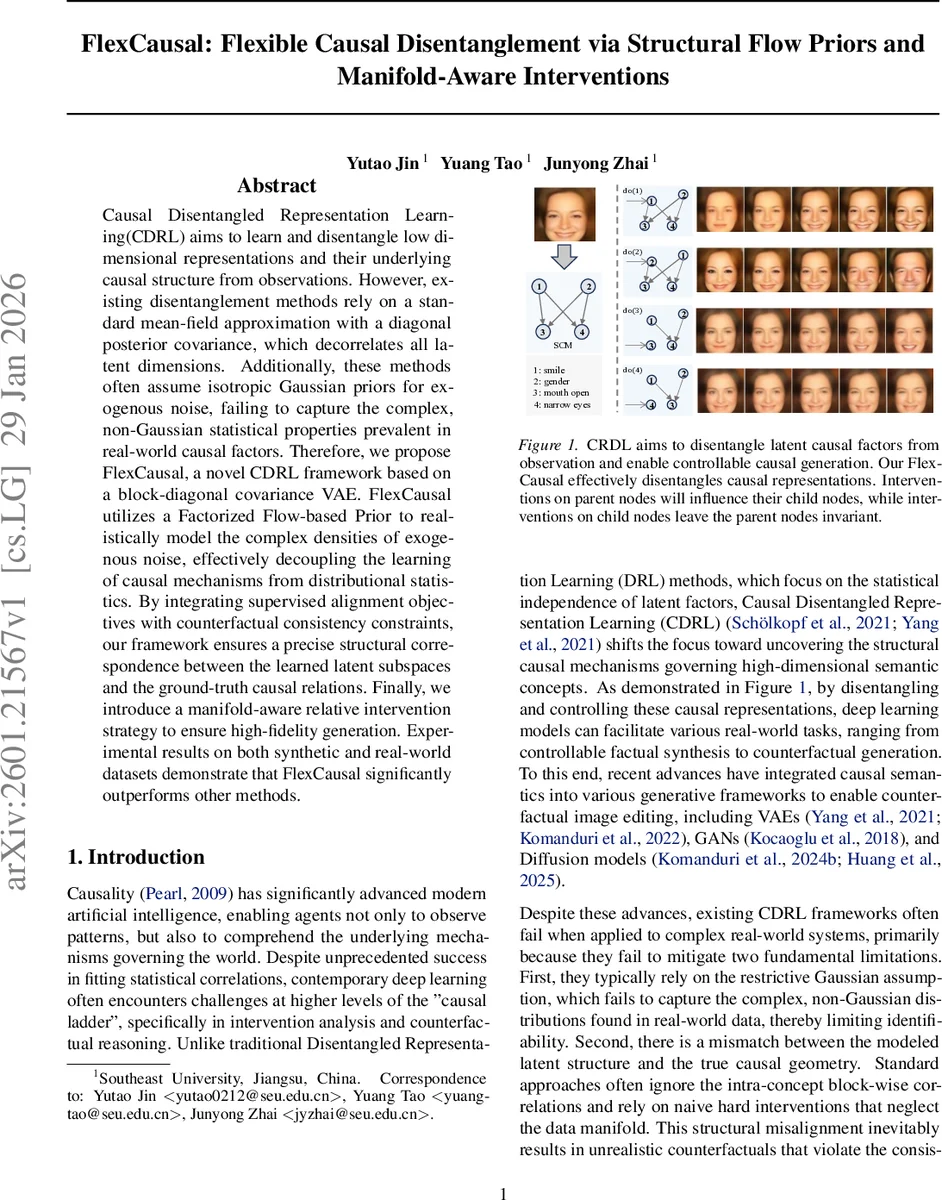

Causal Disentangled Representation Learning (CDRL) aims to learn disentangled low-dimensional representations of observations together with their underlying causal structure. However, existing disentanglement methods rely on a standard mean-field approximation with a diagonal posterior covariance, which decorrelates all latent dimensions. Additionally, these methods often assume isotropic Gaussian priors for exogenous noise and therefore fail to capture the complex, non-Gaussian statistical properties prevalent in real-world causal factors. To address these limitations, we propose FlexCausal, a novel CDRL framework based on a block-diagonal covariance VAE. FlexCausal uses a Factorized Flow-based Prior to realistically model the complex densities of exogenous noise, effectively decoupling the learning of causal mechanisms from distributional statistics. By integrating supervised alignment objectives with counterfactual consistency constraints, our framework ensures a precise structural correspondence between the learned latent subspaces and the ground-truth causal relations. Finally, we introduce a manifold-aware relative intervention strategy to ensure high-fidelity generation. Experimental results on both synthetic and real-world datasets demonstrate that FlexCausal significantly outperforms competing CDRL methods.

💡 Research Summary

FlexCausal addresses two fundamental shortcomings of existing causal disentangled representation learning (CDRL) methods: (i) the mean‑field assumption that forces a diagonal posterior covariance and thus destroys intra‑concept correlations, and (ii) the use of simple isotropic Gaussian priors for exogenous noise, which cannot capture the complex, non‑Gaussian distributions observed in real‑world causal factors. The proposed framework combines a block‑diagonal variational auto‑encoder (VAE) with normalizing‑flow based priors for each latent concept. By partitioning the latent vector into K blocks, each representing a high‑dimensional semantic unit, the model enforces zero correlation across blocks while preserving dense intra‑block dependencies via a Cholesky‑parameterized block‑diagonal covariance. This respects the natural structure of vector‑valued concepts such as facial expressions or object poses.
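
To make the block‑diagonal posterior concrete, here is a minimal pure‑Python sketch of a Cholesky parameterization with reparameterized sampling. It assumes the encoder emits one unconstrained square matrix per concept block and that a softplus keeps the diagonal positive; the helper names (`block_cholesky`, `full_covariance`, `sample_posterior`) and these design choices are illustrative assumptions, not FlexCausal's published code.

```python
import math
import random


def softplus(x):
    """Numerically stable log(1 + exp(x)); keeps Cholesky diagonals positive."""
    return math.log1p(math.exp(-abs(x))) + max(x, 0.0)


def block_cholesky(raw_blocks):
    """Turn unconstrained per-block matrices into lower-triangular factors L_k.

    Entries above the diagonal are ignored, and diagonal entries pass through
    softplus, so each L_k is a valid Cholesky factor of a dense d x d block.
    """
    factors = []
    for raw in raw_blocks:
        d = len(raw)
        L = [[0.0] * d for _ in range(d)]
        for i in range(d):
            for j in range(i + 1):
                L[i][j] = softplus(raw[i][j]) if i == j else raw[i][j]
        factors.append(L)
    return factors


def full_covariance(factors):
    """Assemble Sigma = blockdiag(L_1 L_1^T, ..., L_K L_K^T).

    Entries outside the diagonal blocks are never written, so they stay 0.
    """
    n = sum(len(L) for L in factors)
    sigma = [[0.0] * n for _ in range(n)]
    offset = 0
    for L in factors:
        d = len(L)
        for i in range(d):
            for j in range(d):
                sigma[offset + i][offset + j] = sum(L[i][k] * L[j][k] for k in range(d))
        offset += d
    return sigma


def sample_posterior(mu, factors, rng):
    """Reparameterized sample z = mu + L eps, computed block by block."""
    z = []
    offset = 0
    for L in factors:
        d = len(L)
        eps = [rng.gauss(0.0, 1.0) for _ in range(d)]
        for i in range(d):
            z.append(mu[offset + i] + sum(L[i][k] * eps[k] for k in range(i + 1)))
        offset += d
    return z
```

For example, `full_covariance(block_cholesky(raw))` with two 2 x 2 blocks yields a 4 x 4 matrix whose cross‑block entries are exactly zero while the entries inside each block can be nonzero, which is the "no cross‑block correlation, dense intra‑block dependence" property described above.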

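For the exogenous variables, independent normalizing flows are learned per block, allowing the prior to model flexible non‑Gaussian and multimodal densities. Because the structural mapping between exogenous and latent variables is volume‑preserving (for additive‑noise structural equations over a DAG, its Jacobian is triangular with a unit diagonal, so the log‑determinant term vanishes), the log‑likelihood in latent space equals that in exogenous space. This decouples the learning of causal mechanisms from distributional statistics and improves identifiability beyond the Markov equivalence class.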
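
The change‑of‑variables argument can be checked numerically with a small sketch. It assumes scalar concepts, a linear additive‑noise SCM `z = A^T z + eps` with nodes in topological order, and a toy elementwise monotone flow `u = a*eps + b*tanh(eps)` per concept with a standard‑normal base. The paper's actual flow architecture and structural equations may differ, and every function name here is hypothetical.

```python
import math


def softplus(x):
    """Numerically stable log(1 + exp(x))."""
    return math.log1p(math.exp(-abs(x))) + max(x, 0.0)


def exogenous_from_latent(z, A):
    """Invert z = A^T z + eps, i.e. eps_j = z_j - sum_i A[i][j] * z_i.

    A[i][j] is the weight of edge i -> j; nodes are in topological order,
    so A is strictly upper triangular.
    """
    n = len(z)
    return [z[j] - sum(A[i][j] * z[i] for i in range(n)) for j in range(n)]


def structural_jacobian(A):
    """d eps_i / d z_j = delta_ij - A[j][i]; unit lower triangular for a DAG."""
    n = len(A)
    return [[(1.0 if i == j else 0.0) - A[j][i] for j in range(n)] for i in range(n)]


def determinant(M):
    """Determinant by Gaussian elimination with partial pivoting."""
    M = [row[:] for row in M]
    n, det = len(M), 1.0
    for c in range(n):
        p = max(range(c, n), key=lambda r: abs(M[r][c]))
        if M[p][c] == 0.0:
            return 0.0
        if p != c:
            M[c], M[p] = M[p], M[c]
            det = -det
        det *= M[c][c]
        for r in range(c + 1, n):
            f = M[r][c] / M[c][c]
            for k in range(c, n):
                M[r][k] -= f * M[c][k]
    return det


def flow_log_density(eps, params):
    """log p(eps) under independent per-concept flows u = a*eps + b*tanh(eps).

    softplus keeps a, b > 0, so each map is strictly increasing (invertible)
    with du/deps = a + b * (1 - tanh(eps)^2); u has a standard normal base.
    """
    total = 0.0
    for e, (raw_a, raw_b) in zip(eps, params):
        a, b = softplus(raw_a), softplus(raw_b)
        t = math.tanh(e)
        u = a * e + b * t
        total += -0.5 * (u * u + math.log(2.0 * math.pi)) + math.log(a + b * (1.0 - t * t))
    return total


def log_prior_latent(z, A, params):
    """Change of variables: log p(z) = log p(eps(z)) + log|det d eps / d z|."""
    eps = exogenous_from_latent(z, A)
    jac_det = determinant(structural_jacobian(A))
    return flow_log_density(eps, params) + math.log(abs(jac_det))
```

Because the structural Jacobian has unit determinant for any acyclic `A`, `log_prior_latent` reduces to the flow density evaluated at the recovered noise: the flow only has to model the noise distribution, while `A` carries the causal mechanism.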

FlexCausal further introduces a Counterfactual Consistency loss that penalizes violations of the structural equations under do‑interventions, ensuring that intervened latent variables still satisfy the underlying SCM. To avoid unrealistic hard interventions that push samples off the data manifold, a manifold‑aware relative intervention mechanism is proposed. It learns semantic direction vectors for each block and performs soft, relative shifts along the high‑density geodesic of the learned manifold, preserving visual fidelity in generated counterfactuals.
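
The intervention and consistency ideas can be illustrated with a rough sketch under strong simplifying assumptions: each concept is a vector block, mechanisms are linear with scalar edge weights, and the manifold‑aware shift is approximated by a straight step along a given direction vector. A real implementation would learn the directions and keep samples on the data manifold, which this sketch does not model; all function names are hypothetical.

```python
def propagate(eps, A, fixed=None):
    """Compute blocks z_j = sum_i A[i][j] * z_i + eps_j in topological order.

    eps: list of K blocks (lists of floats); A: K x K strictly upper-triangular
    edge weights. `fixed` maps a block index to a value that replaces its
    structural equation (the intervened block).
    """
    fixed = fixed or {}
    z = []
    for j in range(len(eps)):
        if j in fixed:
            z.append(list(fixed[j]))
            continue
        block = list(eps[j])
        for i in range(j):
            if A[i][j]:
                block = [b + A[i][j] * p for b, p in zip(block, z[i])]
        z.append(block)
    return z


def relative_intervention(z, eps, A, k, direction, alpha):
    """Shift block k by alpha * direction (instead of clamping it to a constant),
    then recompute descendants from the same abducted exogenous noise."""
    shifted = [zk + alpha * v for zk, v in zip(z[k], direction)]
    return propagate(eps, A, fixed={k: shifted})


def counterfactual_consistency(z_cf, eps, A, intervened):
    """Sum of squared structural-equation residuals over non-intervened blocks."""
    loss = 0.0
    for j in range(len(z_cf)):
        if j in intervened:
            continue
        pred = list(eps[j])
        for i in range(len(z_cf)):
            if A[i][j]:
                pred = [p + A[i][j] * zi for p, zi in zip(pred, z_cf[i])]
        loss += sum((a - b) ** 2 for a, b in zip(z_cf[j], pred))
    return loss
```

Clamping block `k` to a constant would be the hard `do` intervention; `relative_intervention` instead moves the block from its current value, and its descendants follow through the structural equations (abduction, action, prediction). The consistency term is zero for a correctly propagated counterfactual and grows whenever a non‑intervened block violates its equation.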

The authors construct a synthetic benchmark called “Filter” in which each latent concept’s exogenous noise follows a complex distribution (Laplace, bimodal, etc.), and also evaluate on standard datasets such as CelebA, dSprites, and Shapes3D. Across metrics, including ELBO, Mutual Information Gap (MIG), Separated Attribute Predictability (SAP), structural accuracy, and qualitative counterfactual realism, FlexCausal consistently outperforms prior CDRL models such as CausalVAE, SCM‑VAE, ICM‑VAE, and CFI‑VAE.
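
For intuition about data with non‑Gaussian exogenous noise, the sketch below samples three concepts over a chain graph with Laplace, bimodal, and Gaussian noise. The graph, edge weights, and distribution parameters are hypothetical and are not the Filter benchmark's actual generator.

```python
import random


def sample_exogenous(rng):
    """One draw of hypothetical non-Gaussian exogenous noise for 3 concepts."""
    # The difference of two unit-rate exponentials is exactly Laplace(0, 1).
    laplace = rng.expovariate(1.0) - rng.expovariate(1.0)
    # Two-component Gaussian mixture with modes at -2 and +2.
    bimodal = rng.choice((-2.0, 2.0)) + rng.gauss(0.0, 0.5)
    gaussian = rng.gauss(0.0, 1.0)
    return [laplace, bimodal, gaussian]


# Hypothetical chain graph c0 -> c1 -> c2 with linear additive-noise mechanisms.
WEIGHTS = {(0, 1): 0.8, (1, 2): -0.5}


def sample_latents(n, seed=0):
    """Return n (eps, z) pairs, with z computed in topological order."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        eps = sample_exogenous(rng)
        z = []
        for j in range(3):
            parents = sum(w * z[i] for (i, child), w in WEIGHTS.items() if child == j)
            z.append(parents + eps[j])
        rows.append((eps, z))
    return rows
```

A model with an isotropic Gaussian prior on `eps` would misfit the Laplace tails and the two separated modes here, which is the gap a flow‑based prior is meant to close.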

Contributions are: (1) a block‑diagonal VAE that maintains intra‑concept correlations; (2) flow‑based exogenous priors that relax Gaussian assumptions; (3) a trainable counterfactual consistency constraint; (4) a manifold‑aware directional intervention strategy; and (5) the Filter benchmark for evaluating non‑Gaussian exogenous factors. Limitations include reliance on a pre‑specified causal graph and additional computational overhead from flow training. Future work may focus on learning the graph structure jointly and designing more efficient flow architectures.
