Note2Chat: Improving LLMs for Multi-Turn Clinical History Taking Using Medical Notes

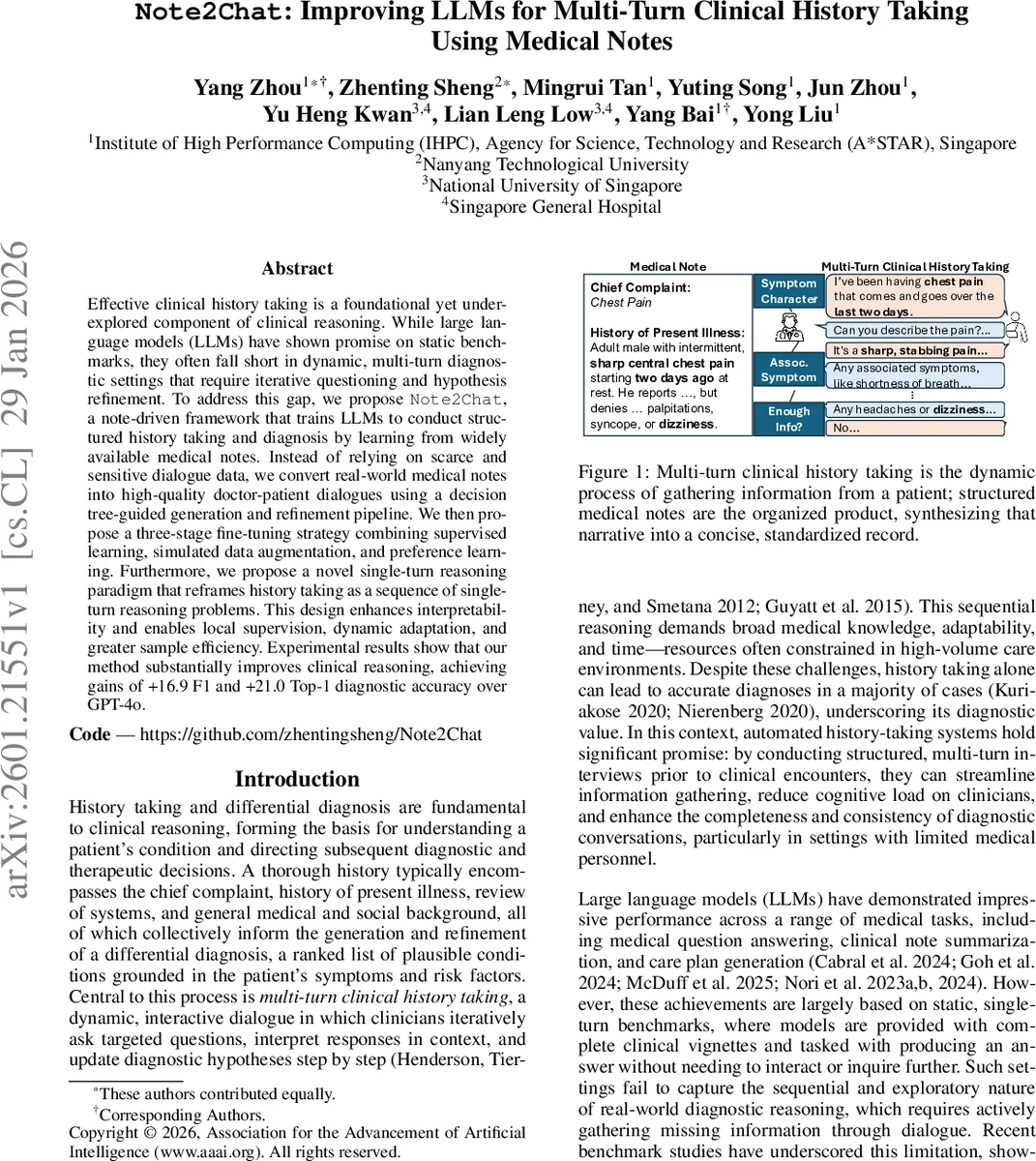

Effective clinical history taking is a foundational yet underexplored component of clinical reasoning. While large language models (LLMs) have shown promise on static benchmarks, they often fall short in dynamic, multi-turn diagnostic settings that require iterative questioning and hypothesis refinement. To address this gap, we propose \method{}, a note-driven framework that trains LLMs to conduct structured history taking and diagnosis by learning from widely available medical notes. Instead of relying on scarce and sensitive dialogue data, we convert real-world medical notes into high-quality doctor-patient dialogues using a decision tree-guided generation and refinement pipeline. We then propose a three-stage fine-tuning strategy combining supervised learning, simulated data augmentation, and preference learning. Furthermore, we propose a novel single-turn reasoning paradigm that reframes history taking as a sequence of single-turn reasoning problems. This design enhances interpretability and enables local supervision, dynamic adaptation, and greater sample efficiency. Experimental results show that our method substantially improves clinical reasoning, achieving gains of +16.9 F1 and +21.0 Top-1 diagnostic accuracy over GPT-4o. Our code and dataset can be found at https://github.com/zhentingsheng/Note2Chat.

💡 Research Summary

Note2Chat addresses a critical gap in the application of large language models (LLMs) to clinical reasoning: while LLMs excel on static, single‑turn medical benchmarks, they falter in the dynamic, multi‑turn setting of history taking, where clinicians must iteratively ask focused questions, interpret answers, and refine differential diagnoses. The authors propose a three‑pronged solution that (1) leverages the abundance of real‑world medical notes as supervision, (2) introduces a decision‑tree‑guided pipeline to convert those notes into high‑quality doctor‑patient dialogues, and (3) designs a three‑stage fine‑tuning regimen together with a novel single‑turn reasoning paradigm.

Data Generation

Using the MIMIC‑IV dataset, the pipeline extracts the History of Present Illness (HPI) and primary diagnosis from discharge notes. Relevant clinical findings (symptoms, timing, risk factors) are identified, while downstream information such as labs or treatments—unavailable during the interview—is deliberately omitted. A diagnostic decision tree maps findings to candidate diagnoses, providing a structured scaffold for the LLM to generate a sequence of question‑answer pairs that mimic realistic differential‑diagnosis workflows. An LLM‑based critic then reviews each generated dialogue, adding missing questions, correcting premature inferences, and ensuring that no information leakage occurs. This process yields 8,944 synthetic multi‑turn dialogues covering 4,972 patients, with an average length of 17.8 turns, plus 67,077 successful roll‑outs and 11,403 preference pairs for later training.

Three‑Stage Fine‑Tuning

- Cold‑Start Supervised Fine‑Tuning (SFT) – The base model (Qwen2.5‑7B) is first trained on the note‑guided dialogues to learn basic conversational structure, question ordering, and the mapping from patient responses to diagnostic hypotheses.

- Self‑Augmentation via Trajectory Sampling – To avoid over‑fitting to the idealized dialogues, the SFT‑trained doctor model engages in self‑play with a larger simulated patient model (Qwen2.5‑32B). This generates imperfect, noisy trajectories (e.g., ambiguous answers, irrelevant questions), teaching the model robustness to real‑world variability.

- Preference‑Based Optimization (DPO) – Using the 11,403 preference pairs (better vs. worse dialogue turns), Direct Preference Optimization aligns the model’s policy with human‑like information‑gain and diagnostic relevance, encouraging concise, clinically useful questioning.

Single‑Turn Reasoning Paradigm

Instead of treating the entire conversation as a monolithic sequence, each turn is modeled as an independent decision problem: given the chief complaint and the dialogue history, the model selects either a question‑asking action or a diagnosis action. This formulation enables (i) explicit reward shaping based on information gain, (ii) local supervision for each turn, (iii) interpretability, as the model’s reasoning plan for each question can be inspected.

Results

On a held‑out test set of 500 patients across ten conditions (e.g., heart failure, cellulitis, pneumonia), Note2Chat outperforms GPT‑4o by +16.9 F1 points and +21.0% Top‑1 diagnostic accuracy. Information‑gathering metrics improve by +57.53% relative to the baseline, demonstrating that the model asks more relevant questions and extracts a higher proportion of the findings documented in the original notes.

Comparison to Prior Work

Previous attempts such as AMIE, DoctorAgent‑RL, and various multi‑agent or RL frameworks rely on proprietary dialogue datasets or focus primarily on final diagnosis accuracy, often neglecting the quality of the history‑taking process. Note2Chat’s novelty lies in (a) using publicly available discharge notes as a scalable supervision source, (b) a decision‑tree‑guided generation that embeds clinical reasoning structure, (c) a three‑stage training pipeline that balances supervised learning, robustness through self‑play, and preference alignment, and (d) a single‑turn reasoning architecture that yields interpretable, reward‑driven questioning.

Limitations and Future Directions

The current implementation is limited to English notes from a single institution (MIMIC‑IV), so multilingual or cross‑institution generalization remains untested. The simulated patient model, while grounded in extracted findings, may not capture the full variability of real patient responses, necessitating prospective clinical validation. Moreover, the decision tree is handcrafted for the selected disease groups; scaling to the full ICD‑10 taxonomy will require automated tree construction or hierarchical prompting.

Impact

By shifting the focus from end‑diagnosis accuracy to the upstream task of efficient, high‑quality history taking, Note2Chat offers a more clinically realistic pathway for LLM integration into healthcare workflows. Its reliance on readily available notes makes it reproducible and adaptable to diverse health systems, and its modular training strategy can be extended to other conversational medical tasks such as medication reconciliation or discharge counseling. If further validated in real‑world settings, Note2Chat could substantially reduce clinician cognitive load, improve documentation completeness, and ultimately enhance diagnostic safety.

Comments & Academic Discussion

Loading comments...

Leave a Comment