Multi-Modal Time Series Prediction via Mixture of Modulated Experts

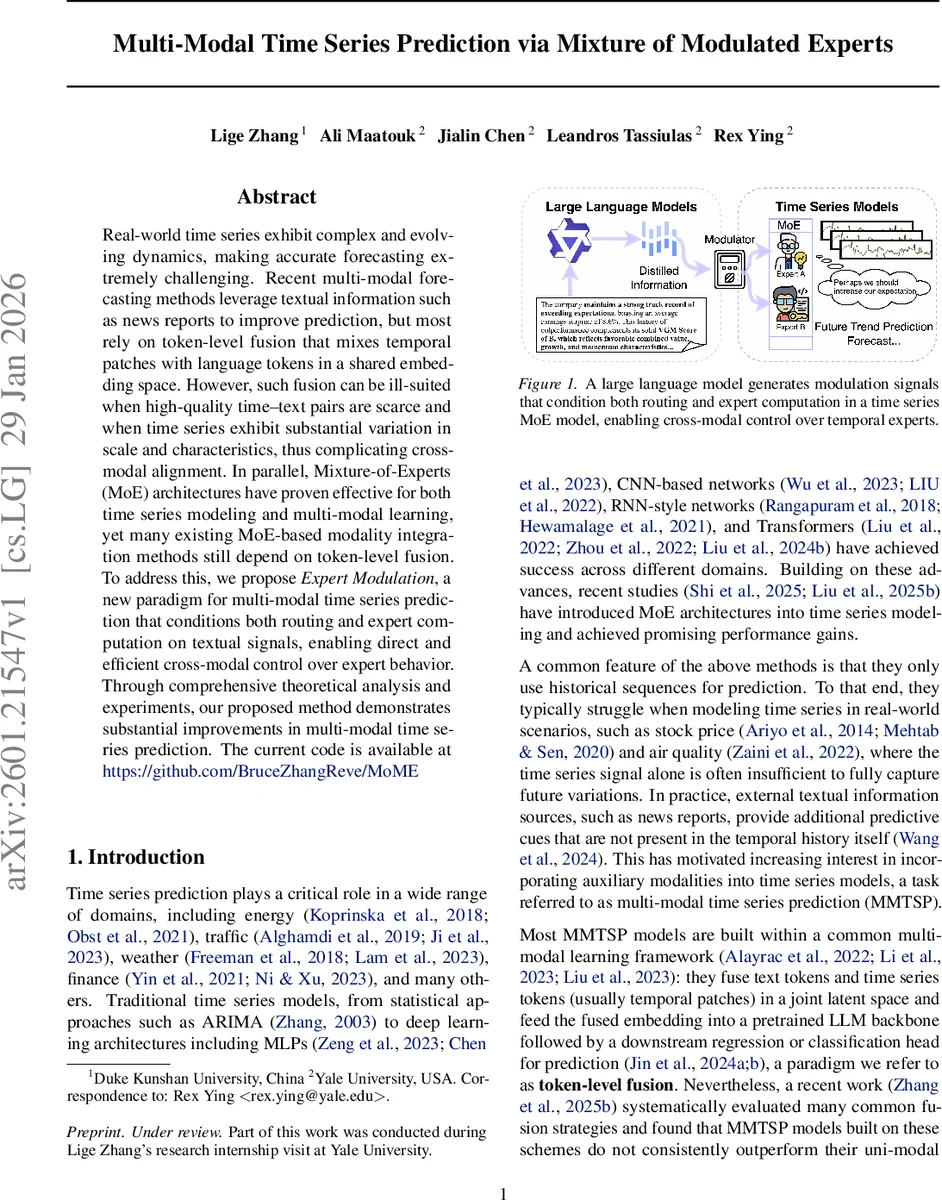

Real-world time series exhibit complex and evolving dynamics, making accurate forecasting extremely challenging. Recent multi-modal forecasting methods leverage textual information such as news reports to improve prediction, but most rely on token-level fusion that mixes temporal patches with language tokens in a shared embedding space. However, such fusion can be ill-suited when high-quality time-text pairs are scarce and when time series exhibit substantial variation in scale and characteristics, thus complicating cross-modal alignment. In parallel, Mixture-of-Experts (MoE) architectures have proven effective for both time series modeling and multi-modal learning, yet many existing MoE-based modality integration methods still depend on token-level fusion. To address this, we propose Expert Modulation, a new paradigm for multi-modal time series prediction that conditions both routing and expert computation on textual signals, enabling direct and efficient cross-modal control over expert behavior. Through comprehensive theoretical analysis and experiments, our proposed method demonstrates substantial improvements in multi-modal time series prediction. The current code is available at https://github.com/BruceZhangReve/MoME

💡 Research Summary

The paper tackles the problem of multi‑modal time‑series prediction (MMTSP), where auxiliary textual information (e.g., news reports) is used together with a numerical time‑series to forecast future values or trends. Existing MMTSP approaches largely rely on token‑level fusion: temporal patches and language tokens are embedded into a shared latent space and then processed by a large language model (LLM) or a transformer. While effective in some settings, token‑level fusion suffers when high‑quality time‑text pairs are scarce, when the text is noisy, or when the time series exhibit large scale variations and strong non‑stationarity. In such cases, aligning heterogeneous token embeddings becomes difficult and the cross‑modal signal is often under‑exploited.

To overcome these limitations, the authors propose a new paradigm called Expert Modulation (EM), built on a Mixture‑of‑Experts (MoE) backbone. Instead of mixing modalities at the representation level, EM conditions both the routing decisions and the internal computations of the experts directly on the textual signal. This creates a functional‑level interaction: the text tells the model which experts to activate and how each expert should behave.

The paper first offers a geometric interpretation of MoE. By decomposing a dense MLP into a sum of smaller sub‑MLPs (Lemma 4.1), the authors view each sub‑MLP as an “expert directional signal”. A conventional MoE then rescales these directions with input‑dependent routing coefficients g_i(x). Sparse Top‑K routing is interpreted as an energy‑based truncation that discards low‑energy directions, analogous to a truncated PCA. Theorem 4.2 provides an error bound for this truncation, showing that the approximation error decreases monotonically with K and that the bound depends on the norms and mutual coherence of the expert directions. This perspective motivates the idea that an auxiliary modality can directly modulate the expert directions and their remixing weights.

EM consists of three concrete steps:

-

Context Token Distillation – A pre‑trained LLM processes the textual input s_cxt, producing hidden states H. A linear projection followed by a multi‑head cross‑attention (QueryPool) extracts m distilled context tokens Z ∈ ℝ^{m×d}. These tokens capture high‑level semantic summaries of the text.

-

Router Modulation (RM) – The distilled tokens are pooled (e.g., average) into a single vector z. A learned linear map G_β′(z) ∈ ℝ^{E} is added to the original routing scores g(x_p) for each temporal patch x_p, yielding context‑aware routing scores g(x_p|Z) = g(x_p) + G_β′(z). Thus the text can bias which experts are selected.

-

Expert‑independent Linear Modulation (EiLM) – For each expert f_i, two modulation functions generate a scale γ_i(z) = w_i^⊤ z and a bias β_i(z) = W_i z. The expert’s output is transformed as f_i(x_p|Z) = γ_i(z)·f_i(x_p) + β_i(z). This allows the text to reshape the functional mapping of each expert independently of the others.

The final MoME output for a patch is the Top‑K weighted sum of the modulated expert outputs using the modulated routing scores: MoME(x_p|Z) = Σ_{i∈A} g_i(x_p|Z)·f_i(x_p|Z).

The authors integrate EM into several popular time‑series backbones (Transformer‑based, CNN‑based, RNN‑based) and evaluate on diverse datasets covering energy consumption, traffic flow, financial markets, and weather. Baselines include state‑of‑the‑art token‑level fusion models that use concatenation, cross‑attention, or vision‑language pipelines. Across all settings, EM consistently improves forecasting metrics (MAE, RMSE, SMAPE) by 2–5% absolute, with larger gains when the textual modality is noisy or when the number of paired samples is limited. Ablation studies confirm that both router modulation and expert modulation contribute positively, and that varying the sparsity K aligns with the theoretical error bound derived earlier.

The paper also discusses practical aspects: the modulation modules add negligible overhead compared to full token‑level fusion, and the approach scales well because routing remains sparse. The code and pretrained models are released publicly, facilitating reproducibility.

In summary, the work introduces a principled, function‑level multimodal integration technique that leverages the expressive power of MoE. By allowing textual information to directly steer expert selection and transformation, the method sidesteps the pitfalls of token‑level alignment and demonstrates robust performance across heterogeneous time‑series forecasting tasks. This contribution opens a new direction for multimodal learning where cross‑modal control is exercised at the expert computation level rather than at the embedding level.

Comments & Academic Discussion

Loading comments...

Leave a Comment