IROS: A Dual-Process Architecture for Real-Time VLM-Based Indoor Navigation

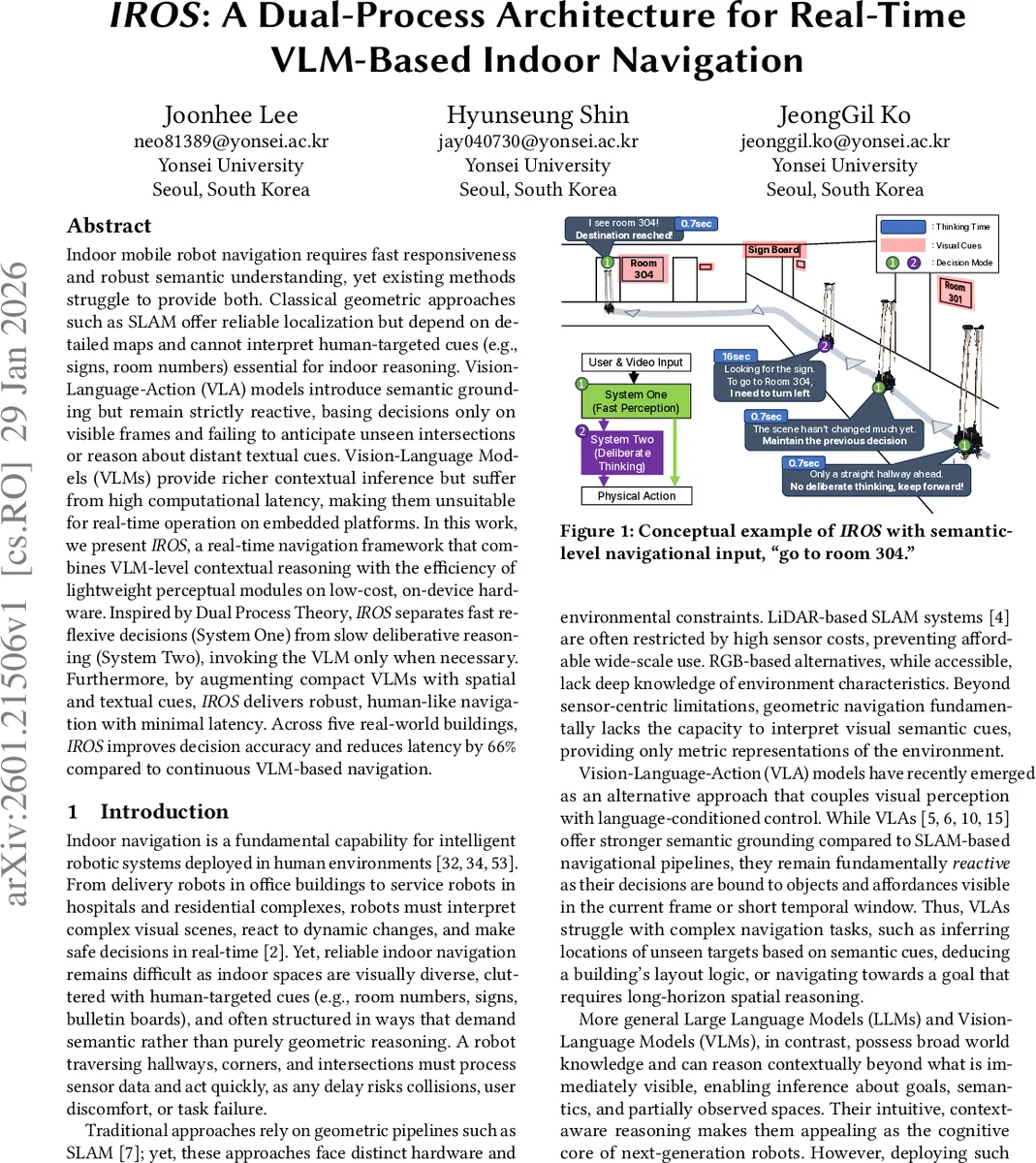

Indoor mobile robot navigation requires fast responsiveness and robust semantic understanding, yet existing methods struggle to provide both. Classical geometric approaches such as SLAM offer reliable localization but depend on detailed maps and cannot interpret human-targeted cues (e.g., signs, room numbers) essential for indoor reasoning. Vision-Language-Action (VLA) models introduce semantic grounding but remain strictly reactive, basing decisions only on visible frames and failing to anticipate unseen intersections or reason about distant textual cues. Vision-Language Models (VLMs) provide richer contextual inference but suffer from high computational latency, making them unsuitable for real-time operation on embedded platforms. In this work, we present IROS, a real-time navigation framework that combines VLM-level contextual reasoning with the efficiency of lightweight perceptual modules on low-cost, on-device hardware. Inspired by Dual Process Theory, IROS separates fast reflexive decisions (System One) from slow deliberative reasoning (System Two), invoking the VLM only when necessary. Furthermore, by augmenting compact VLMs with spatial and textual cues, IROS delivers robust, human-like navigation with minimal latency. Across five real-world buildings, IROS improves decision accuracy and reduces latency by 66% compared to continuous VLM-based navigation.

💡 Research Summary

The paper introduces IROS, a dual‑process architecture designed to enable real‑time indoor navigation for mobile robots while preserving the rich semantic reasoning capabilities of Vision‑Language Models (VLMs). Existing navigation pipelines face a fundamental trade‑off: geometric SLAM provides precise localization but cannot interpret human‑centric cues such as signs or room numbers, whereas VLA/VLM approaches offer contextual understanding but suffer from prohibitive computational latency on embedded hardware. Inspired by Dual Process Theory, IROS separates decision making into two complementary subsystems.

System One (Fast Perception) operates as a reflexive pathway. It consists of a lightweight vision encoder, semantic segmentation, and an OCR module. A Key Frame Compare (KFC) gate measures embedding similarity between the current camera frame and the last frame that triggered a decision; only when a significant visual change is detected does the system proceed. The Condition Matching stage then performs two checks: (1) Destination Arrival Checking compares the current view with the textual goal description (e.g., “room 304”) to detect arrival, and (2) Vision Condition Matching generates a textual description of the scene (including spatial layout and OCR‑extracted text) and matches it against a pre‑computed Condition‑to‑Action Table using cosine similarity. If a unique high‑similarity match is found, the corresponding low‑level motion command (forward, turn left, etc.) is executed immediately, typically within sub‑second latency.

System Two (Deliberative Reasoning) is invoked only when System One cannot resolve a decision—either because the visual change is ambiguous, multiple candidate actions exist, or higher‑level semantic reasoning is required. At initialization, the VLM generates the Condition‑to‑Action Table by analyzing the goal and a coarse map of the environment, thereby encoding possible “conditions” (e.g., “straight hallway”, “sign reading ‘A‑301’ on the left”) and their associated actions. During runtime, System Two feeds the current image, OCR results, and goal description into a compact on‑device VLM (e.g., Gemma‑3 4B) via an augmented prompt that explicitly includes spatial descriptors. The VLM then performs chain‑of‑thought reasoning to produce a context‑aware decision such as “turn left at the sign for room 304”. This selective invocation dramatically reduces the overall inference load.

The authors implemented IROS on a low‑cost NVIDIA Jetson Nano platform, avoiding any cloud dependency. Experiments were conducted across five real‑world buildings with varied hallway layouts, intersections, and textual signage. Compared to a baseline that continuously queries a VLM for every frame, IROS achieved a 66 % reduction in average decision latency (from ~1.5 s to ~0.5 s) and improved decision accuracy from 48.2 % to 64.3 %. Moreover, more than 58 % of all navigation decisions were handled by System One alone, ensuring real‑time responsiveness. Travel time across test routes decreased by roughly 30 %, demonstrating practical efficiency gains.

Key contributions include: (1) a novel dual‑process navigation framework that cleanly decouples fast perception from slow reasoning; (2) a conditional inference mechanism (KFC and condition‑action matching) that triggers VLM computation only when necessary; (3) spatial and textual augmentation of VLM prompts to compensate for the limited spatial awareness of compact models; and (4) a full end‑to‑end on‑device implementation validated in diverse indoor settings.

Limitations noted by the authors involve the need to pre‑compute the condition‑action table, which may be costly in highly dynamic environments, and the dependence on OCR quality under poor lighting. Future work aims to incorporate continual multimodal learning so that robots can autonomously expand and update the condition table during operation, further reducing reliance on offline preparation.

In summary, IROS demonstrates that by mimicking human dual‑process cognition—leveraging fast reflexes for routine navigation and reserving heavyweight semantic reasoning for complex moments—robots can achieve human‑like, context‑aware indoor navigation without sacrificing the real‑time performance required for safe physical interaction.

Comments & Academic Discussion

Loading comments...

Leave a Comment