System 1&2 Synergy via Dynamic Model Interpolation

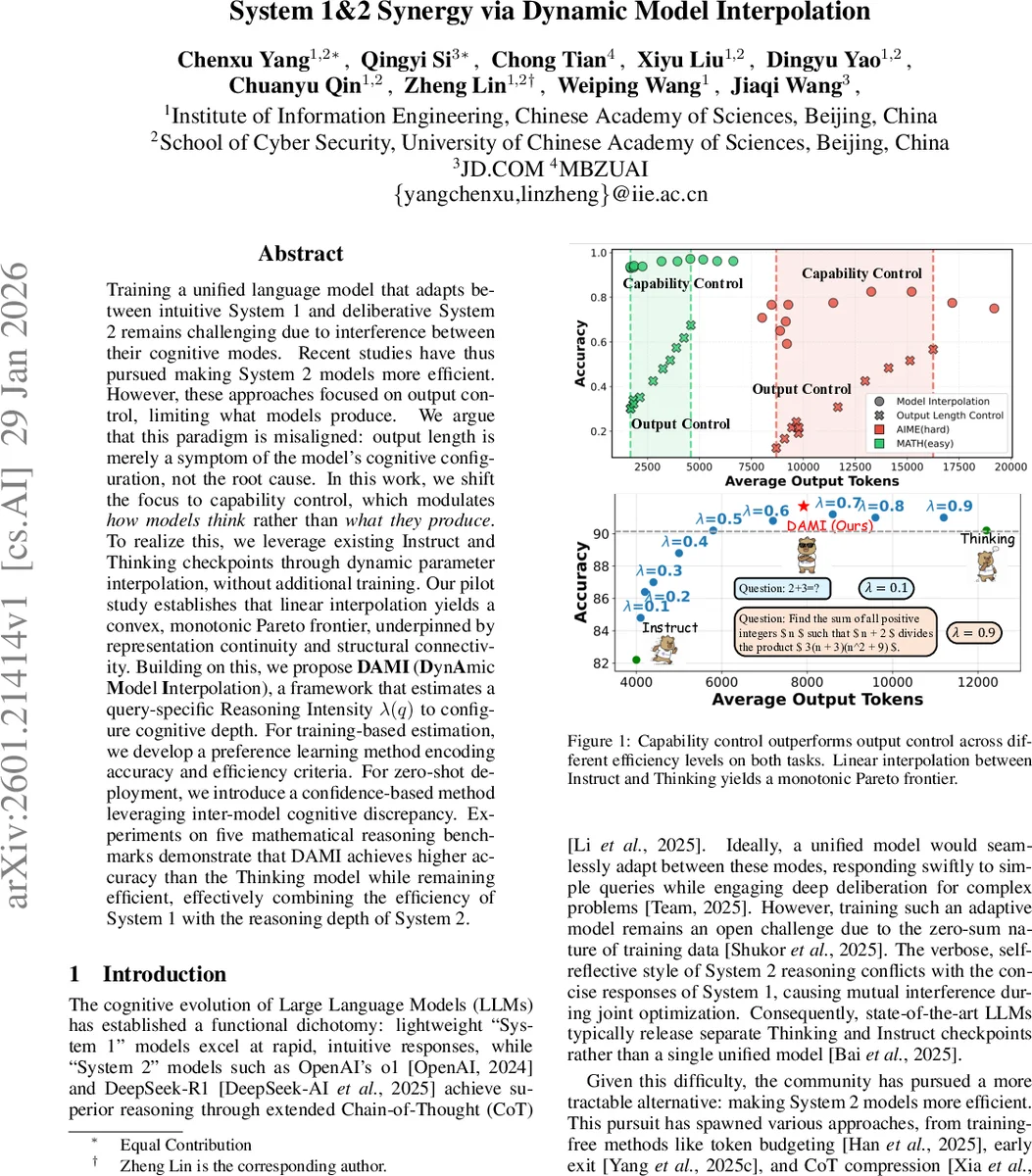

Training a unified language model that adapts between intuitive System 1 and deliberative System 2 remains challenging due to interference between their cognitive modes. Recent studies have thus pursued making System 2 models more efficient. However, these approaches focused on output control, limiting what models produce. We argue that this paradigm is misaligned: output length is merely a symptom of the model’s cognitive configuration, not the root cause. In this work, we shift the focus to capability control, which modulates \textit{how models think} rather than \textit{what they produce}. To realize this, we leverage existing Instruct and Thinking checkpoints through dynamic parameter interpolation, without additional training. Our pilot study establishes that linear interpolation yields a convex, monotonic Pareto frontier, underpinned by representation continuity and structural connectivity. Building on this, we propose \textbf{DAMI} (\textbf{D}yn\textbf{A}mic \textbf{M}odel \textbf{I}nterpolation), a framework that estimates a query-specific Reasoning Intensity $λ(q)$ to configure cognitive depth. For training-based estimation, we develop a preference learning method encoding accuracy and efficiency criteria. For zero-shot deployment, we introduce a confidence-based method leveraging inter-model cognitive discrepancy. Experiments on five mathematical reasoning benchmarks demonstrate that DAMI achieves higher accuracy than the Thinking model while remaining efficient, effectively combining the efficiency of System 1 with the reasoning depth of System 2.

💡 Research Summary

The paper tackles the longstanding challenge of reconciling the fast, intuitive responses of “System 1” language models with the deep, deliberative reasoning of “System 2” models. Existing work has largely focused on making System 2 models more efficient by imposing output‑level constraints such as token budgets, early‑exit mechanisms, or chain‑of‑thought (CoT) compression. The authors argue that these “output‑control” methods merely limit what the model produces, without addressing the underlying cognitive configuration that determines how the model thinks.

To shift the paradigm, the authors propose “capability control,” which directly modulates the model’s reasoning intensity. Their key insight is that the parameters of an Instruct‑style (System 1) checkpoint and a Thinking‑style (System 2) checkpoint are highly aligned: cosine similarity across all transformer layers exceeds 0.99, indicating that both checkpoints lie in the same loss basin and satisfy Linear Mode Connectivity. Leveraging this alignment, they linearly interpolate the two parameter sets:

\

Comments & Academic Discussion

Loading comments...

Leave a Comment