Learning to Optimize Job Shop Scheduling Under Structural Uncertainty

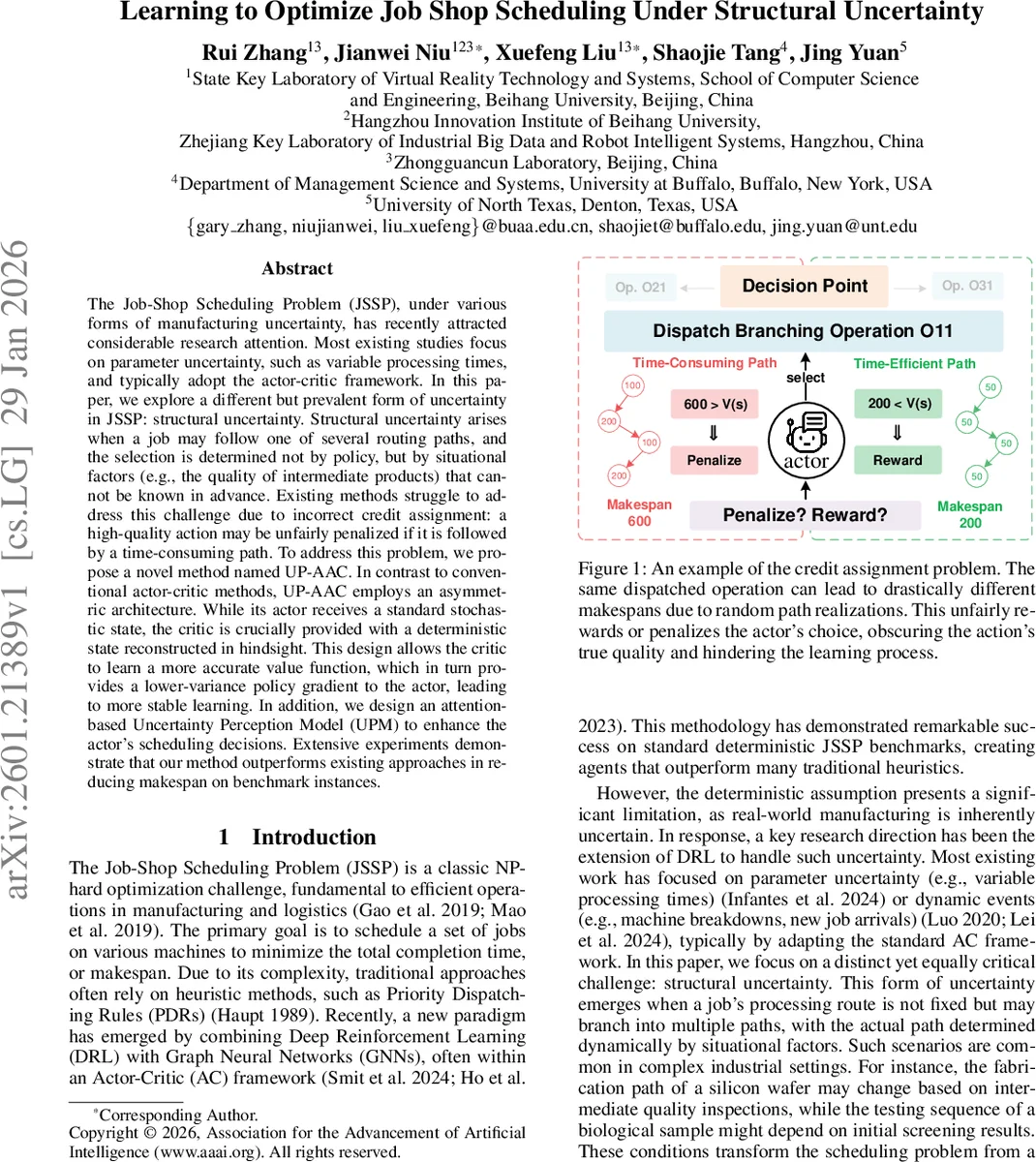

The Job-Shop Scheduling Problem (JSSP), under various forms of manufacturing uncertainty, has recently attracted considerable research attention. Most existing studies focus on parameter uncertainty, such as variable processing times, and typically adopt the actor-critic framework. In this paper, we explore a different but prevalent form of uncertainty in JSSP: structural uncertainty. Structural uncertainty arises when a job may follow one of several routing paths, and the selection is determined not by policy, but by situational factors (e.g., the quality of intermediate products) that cannot be known in advance. Existing methods struggle to address this challenge due to incorrect credit assignment: a high-quality action may be unfairly penalized if it is followed by a time-consuming path. To address this problem, we propose a novel method named UP-AAC. In contrast to conventional actor-critic methods, UP-AAC employs an asymmetric architecture. While its actor receives a standard stochastic state, the critic is crucially provided with a deterministic state reconstructed in hindsight. This design allows the critic to learn a more accurate value function, which in turn provides a lower-variance policy gradient to the actor, leading to more stable learning. In addition, we design an attention-based Uncertainty Perception Model (UPM) to enhance the actor’s scheduling decisions. Extensive experiments demonstrate that our method outperforms existing approaches in reducing makespan on benchmark instances.
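The credit-assignment problem can be made concrete with a toy calculation. All numbers below are hypothetical and do not come from the paper. A dispatching decision is either "good" (2 time units) or "bad" (4 units). A route is then drawn by chance: short (1 unit) or long (9 units), each with probability 1/2. The sketch compares each action's advantage under two baselines. The first averages over the unknown route, as a conventional critic must. The second is conditioned on the route revealed in hindsight.

```python
from fractions import Fraction
from itertools import product

# Toy numbers (hypothetical, chosen for illustration only).
ACTION_COST = {"good": 2, "bad": 4}   # time spent by the dispatching choice
PATH_COST = {"short": 1, "long": 9}   # time of the route drawn afterwards
P_ACTION = {"good": Fraction(1, 2), "bad": Fraction(1, 2)}  # current policy
P_PATH = {"short": Fraction(1, 2), "long": Fraction(1, 2)}  # exogenous, unknown at decision time


def ret(action, path):
    """Return = negative total time, so a shorter makespan is better."""
    return -(ACTION_COST[action] + PATH_COST[path])


# Every (action, path) pair with its joint probability.
outcomes = [(a, p, P_ACTION[a] * P_PATH[p]) for a, p in product(P_ACTION, P_PATH)]

# Baseline a conventional critic can learn: expectation over the unknown route.
v_stochastic = sum(w * ret(a, p) for a, p, w in outcomes)


def v_hindsight(path):
    """Baseline a hindsight critic can learn: expectation given the realized route."""
    return sum(P_ACTION[a] * ret(a, path) for a in P_ACTION)


def advantage_stats(baseline):
    """Advantage of every outcome and the (exact) variance of the advantage."""
    advs = {(a, p): ret(a, p) - baseline(p) for a, p, _ in outcomes}
    mean = sum(w * advs[(a, p)] for a, p, w in outcomes)
    var = sum(w * (advs[(a, p)] - mean) ** 2 for a, p, w in outcomes)
    return advs, var


adv_sto, var_sto = advantage_stats(lambda path: v_stochastic)
adv_hin, var_hin = advantage_stats(v_hindsight)

print("route-averaged baseline:", {k: int(v) for k, v in adv_sto.items()}, "variance", var_sto)
print("hindsight baseline:     ", {k: int(v) for k, v in adv_hin.items()}, "variance", var_hin)
```

Under the route-averaged baseline, the good action scores 5 or -3 depending on a route it did not choose, so it is sometimes penalized. Under the hindsight baseline it always scores 1, and the advantage variance drops from 17 to 1. In this toy the route is drawn independently of the action. As a result, each action's expected advantage (1 for good, -1 for bad) is the same under both baselines.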

💡 Research Summary

The paper tackles a previously under‑explored source of randomness in job‑shop scheduling: structural uncertainty, where each job may follow one of several possible routing paths and the actual path is determined by external, uncontrollable factors such as intermediate product quality. Unlike the more common parameter uncertainty (stochastic processing times) or dynamic events (machine failures, new arrivals), structural uncertainty changes the very topology of the scheduling problem during execution, turning a static sequencing task into a dynamic planning problem.
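To make the definition concrete, here is a minimal sketch of a job with structural uncertainty. It assumes, hypothetically, that each operation stores its possible successors together with branching probabilities. The scheduler can see the whole graph and its probabilities, but the branch taken is only drawn when the preceding operation finishes. The wafer-style operation names, machines, durations, and the 0.8/0.2 split are invented for illustration.

```python
import random
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class Operation:
    machine: int
    duration: int
    # Possible next operations and their branching probabilities;
    # an empty list means the job finishes after this operation.
    successors: List[Tuple[str, float]] = field(default_factory=list)


def sample_route(ops: Dict[str, Operation], start: str, rng: random.Random) -> List[str]:
    """Walk the job's DAG, drawing each branch as the environment would at run time."""
    route = [start]
    while ops[route[-1]].successors:
        names, probs = zip(*ops[route[-1]].successors)
        route.append(rng.choices(names, weights=probs, k=1)[0])
    return route


def all_routes(ops: Dict[str, Operation], start: str) -> List[Tuple[List[str], float]]:
    """Enumerate every possible route with its probability (what the scheduler knows a priori)."""
    succ = ops[start].successors
    if not succ:
        return [([start], 1.0)]
    out = []
    for nxt, p in succ:
        for tail, q in all_routes(ops, nxt):
            out.append(([start] + tail, p * q))
    return out


# Hypothetical wafer job: the inspection outcome decides whether a rework step is inserted.
wafer_job = {
    "etch": Operation(machine=0, duration=4, successors=[("inspect", 1.0)]),
    "inspect": Operation(machine=1, duration=1, successors=[("package", 0.8), ("rework", 0.2)]),
    "rework": Operation(machine=0, duration=3, successors=[("package", 1.0)]),
    "package": Operation(machine=2, duration=2),
}

for route, prob in all_routes(wafer_job, "etch"):
    print(prob, route)
print("sampled:", sample_route(wafer_job, "etch", random.Random(0)))
```

`all_routes` returns the full set of topologies the scheduler has to plan against: here, two routes with probabilities 0.8 and 0.2. `sample_route` plays the role of the environment, revealing one of them during execution.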

To address this, the authors propose UP‑AAC, a deep‑reinforcement‑learning framework built on two components. First, an Asymmetric Actor‑Critic (AA‑C) architecture separates the information available to the policy (actor) from the information available to the value estimator (critic). The actor interacts with the environment through the stochastic state s_t^sto, in which future routes are still unknown, and learns a policy π_θ(a_t | s_t^sto). Once an episode terminates, the realized route of every job is known. The system then reconstructs a deterministic version of each visited state, s_t^det, in which every job's path is fixed (hindsight reconstruction). The critic is trained only on these deterministic states and learns a value function V_ϕ(s_t^det) that is free of the variance introduced by structural uncertainty. The policy gradient then uses the advantage A(s_t^sto, a_t) = r_t + γ V_ϕ(s_{t+1}^det) − V_ϕ(s_t^det). This advantage has lower variance and mitigates the credit‑assignment problem that affects standard actor‑critic methods in this setting.
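The hindsight advantage computation can be sketched as follows. This is a simplified illustration, not the authors' implementation. Several simplifications are assumptions:

- States are reduced to hypothetical dicts recording how many operations each job has completed.
- A realized route is just the list of durations the job actually incurred.
- `remaining_work_critic` is a hand-written stand-in for the learned critic V_ϕ.
- The machine model is a single machine.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Step:
    """One decision: what the actor saw, what it chose, and the reward it received."""
    sto_state: Dict[str, int]  # hypothetical: operations completed per job; future routes unknown
    action: str                # job dispatched at this step
    reward: float              # e.g. negative processing time added by this step


def reconstruct_deterministic(sto_state: Dict[str, int],
                              realized_routes: Dict[str, List[int]]) -> Dict:
    """Hindsight reconstruction (simplified): pair the progress seen at time t with the
    routes (here, lists of durations) that every job actually followed in this episode."""
    return {"done": dict(sto_state), "routes": realized_routes}


def asymmetric_advantages(steps: List[Step],
                          realized_routes: Dict[str, List[int]],
                          critic: Callable[[Dict], float],
                          gamma: float = 1.0) -> List[float]:
    """A_t = r_t + gamma * V(s_{t+1}^det) - V(s_t^det); the critic only sees deterministic states."""
    det_states = [reconstruct_deterministic(s.sto_state, realized_routes) for s in steps]
    values = [critic(d) for d in det_states] + [0.0]  # value after the final step is 0
    return [s.reward + gamma * values[t + 1] - values[t] for t, s in enumerate(steps)]


def remaining_work_critic(det: Dict) -> float:
    """Toy critic (hypothetical): minus the work left on the realized routes."""
    return -float(sum(sum(route[det["done"][job]:]) for job, route in det["routes"].items()))


# Realized routes, known only at episode end: job B turned out to take a one-operation branch.
routes = {"A": [3, 2], "B": [4]}
episode = [
    Step({"A": 0, "B": 0}, "A", -3.0),
    Step({"A": 1, "B": 0}, "B", -4.0),
    Step({"A": 1, "B": 1}, "A", -2.0),
]
print(asymmetric_advantages(episode, routes, remaining_work_critic))
print(asymmetric_advantages(episode, routes, lambda d: 0.0))
```

On a single machine, every processing order takes the same total time. An exact hindsight critic therefore yields zero advantage at every step, which is a useful sanity check. A critic that ignores the state (`lambda d: 0.0`) simply returns the raw rewards. In practice V_ϕ would be a network regressed on returns from the reconstructed states.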

Second, the authors introduce an Uncertainty Perception Model (UPM). UPM processes the entire directed acyclic graph of potential operations, their transition probabilities, and the remaining workload to generate a global “uncertainty embedding.” This embedding is fused with the graph neural network representation of the current stochastic state via a multi‑head attention mechanism. The fusion gives the actor a concise summary of how much future branching to expect, so the policy can make more robust, forward‑looking dispatching decisions.
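The attention-based fusion can be illustrated with plain multi-head scaled dot-product attention. The summary does not specify the wiring, so the sketch assumes that the actor's state embedding acts as the query. A set of per-branch uncertainty vectors produced by UPM acts as the keys and values. The learned query, key, value, and output projections of a real attention layer are omitted (treated as identity), so only the data flow is shown. `fuse` and its concatenated output are hypothetical.

```python
import math
from typing import List

Vector = List[float]


def softmax(xs: Vector) -> Vector:
    """Numerically stable softmax."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def multi_head_attention(query: Vector, keys: List[Vector], values: List[Vector],
                         num_heads: int) -> Vector:
    """Split the embedding into num_heads slices, attend per slice, and concatenate.
    Identity projections are assumed (a real layer learns W_Q, W_K, W_V, W_O)."""
    d = len(query)
    if d % num_heads != 0:
        raise ValueError("embedding size must be divisible by num_heads")
    hd = d // num_heads
    out: Vector = []
    for h in range(num_heads):
        sl = slice(h * hd, (h + 1) * hd)
        q = query[sl]
        # Scaled dot-product scores of this head's query slice against every key slice.
        scores = [sum(qi * ki for qi, ki in zip(q, k[sl])) / math.sqrt(hd) for k in keys]
        weights = softmax(scores)
        # Weighted average of the value slices.
        out.extend(sum(w * v[sl][i] for w, v in zip(weights, values)) for i in range(hd))
    return out


def fuse(state_emb: Vector, uncertainty_embs: List[Vector], num_heads: int = 2) -> Vector:
    """Hypothetical fusion: the state queries the uncertainty vectors, and the attended
    summary is concatenated to the state embedding before the policy head."""
    attended = multi_head_attention(state_emb, uncertainty_embs, uncertainty_embs, num_heads)
    return state_emb + attended  # list concatenation, length 2 * d


state = [1.0, 0.0, 0.0, 1.0]
branches = [[1.0, 0.0, 0.0, 0.0], [0.0, 0.0, 0.0, 1.0]]
print(fuse(state, branches))
```

Each head can weight a different uncertainty vector. This is the usual motivation for multiple heads: one slice of the state can focus on one branch while another slice attends elsewhere.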

The methodology is evaluated on two families of benchmarks. Synthetic instances are created by augmenting classic JSSP problems (e.g., FT06, FT10) with random routing branches and associated probabilities. Real‑world datasets come from silicon wafer manufacturing (where inspection results dictate subsequent processing steps) and biological sample testing (where early screening outcomes affect later assay sequences). Baselines include standard GNN‑based actor‑critic agents, heuristic priority dispatching rules, and recent DRL approaches that handle parameter uncertainty but not structural uncertainty.
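The summary says only that random routing branches and probabilities are added to classic instances. The generator below is a hypothetical illustration of one way to do that: it inserts a single optional detour, for example a rework step, into a fixed route. The function names, the single-detour design, and the parameters `p_branch`, `max_extra`, and `max_time` are assumptions. The toy job is illustrative and not taken from FT06.

```python
import random
from typing import Dict, List, Tuple

Op = Tuple[int, int]  # (machine, duration)


def add_branch(job: List[Op], num_machines: int, rng: random.Random,
               p_branch: float = 0.3, max_extra: int = 2, max_time: int = 10) -> Dict:
    """Turn a fixed route into a two-way branching route (hypothetical generator).

    After a randomly chosen operation k, the job either continues as in the original
    instance (probability 1 - p_branch) or first detours through 1..max_extra extra
    operations (probability p_branch) and then resumes its original route.
    """
    k = rng.randrange(len(job))
    detour = [(rng.randrange(num_machines), rng.randint(1, max_time))
              for _ in range(rng.randint(1, max_extra))]
    return {
        "prefix": job[: k + 1],
        "branches": [(1.0 - p_branch, []), (p_branch, detour)],
        "suffix": job[k + 1:],
    }


def realize(branched: Dict, rng: random.Random) -> List[Op]:
    """Draw which branch is taken; in the scheduling problem this is revealed only at run time."""
    probs = [p for p, _ in branched["branches"]]
    _, extra = rng.choices(branched["branches"], weights=probs, k=1)[0]
    return branched["prefix"] + extra + branched["suffix"]


# Toy six-operation job on six machines (values illustrative).
job = [(2, 1), (0, 3), (1, 6), (3, 7), (5, 3), (4, 6)]
branched = add_branch(job, num_machines=6, rng=random.Random(0))
print(branched)
print(realize(branched, random.Random(1)))
```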

Results show that UP‑AAC consistently reduces makespan by 12‑18 % on synthetic instances and by up to 25 % on the industrial cases, outperforming all baselines. The learning curves converge faster (approximately 30 % fewer training episodes) and exhibit lower variance in the policy’s performance (standard deviation reduced by about 40 %). Ablation studies confirm that both components contribute: the asymmetric critic alone yields noticeable gains, while the addition of UPM further improves robustness, especially in highly uncertain scenarios.

The paper’s contributions are threefold: (1) a formal definition of job‑shop scheduling with structural uncertainty; (2) the AA‑C architecture that leverages hindsight reconstruction to provide a deterministic learning signal for the critic; and (3) the UPM module that quantifies global routing uncertainty and injects it into the policy. Limitations are acknowledged: hindsight reconstruction requires episode termination, which may introduce latency for real‑time control, and the deterministic reconstruction assumes no additional exogenous events occur after the episode ends. Future work is suggested on online uncertainty estimation, multi‑agent extensions, and Bayesian updates of the transition probabilities.

In summary, the authors present a compelling solution to a realistic and challenging class of scheduling problems, demonstrating that separating actor and critic perspectives and explicitly modeling uncertainty can lead to substantial performance improvements in both synthetic and real manufacturing environments.
