Towards Geometry-Aware and Motion-Guided Video Human Mesh Recovery

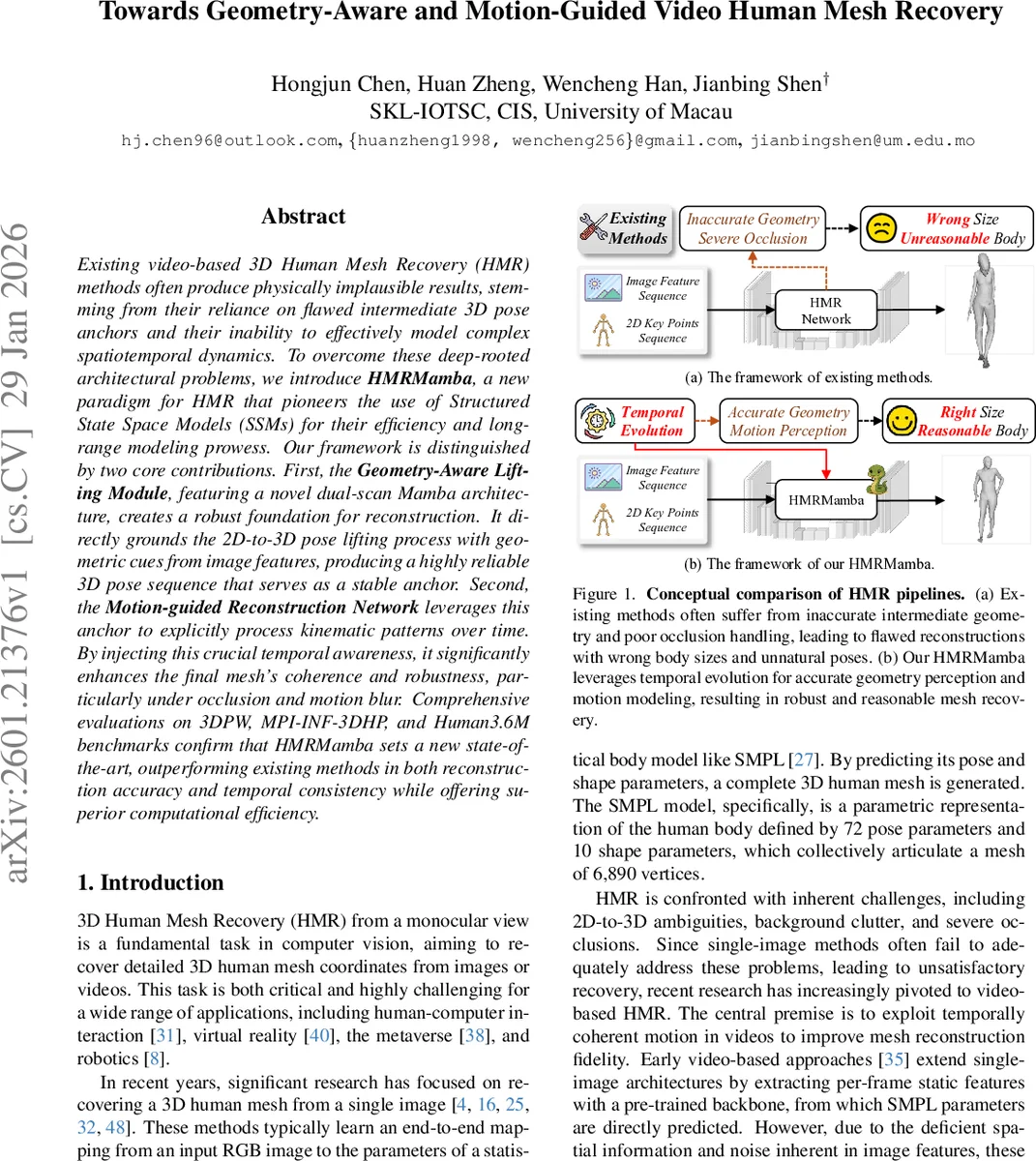

Existing video-based 3D Human Mesh Recovery (HMR) methods often produce physically implausible results, stemming from their reliance on flawed intermediate 3D pose anchors and their inability to effectively model complex spatiotemporal dynamics. To overcome these deep-rooted architectural problems, we introduce HMRMamba, a new paradigm for HMR that pioneers the use of Structured State Space Models (SSMs) for their efficiency and long-range modeling prowess. Our framework is distinguished by two core contributions. First, the Geometry-Aware Lifting Module, featuring a novel dual-scan Mamba architecture, creates a robust foundation for reconstruction. It directly grounds the 2D-to-3D pose lifting process with geometric cues from image features, producing a highly reliable 3D pose sequence that serves as a stable anchor. Second, the Motion-guided Reconstruction Network leverages this anchor to explicitly process kinematic patterns over time. By injecting this crucial temporal awareness, it significantly enhances the final mesh’s coherence and robustness, particularly under occlusion and motion blur. Comprehensive evaluations on 3DPW, MPI-INF-3DHP, and Human3.6M benchmarks confirm that HMRMamba sets a new state-of-the-art, outperforming existing methods in both reconstruction accuracy and temporal consistency while offering superior computational efficiency.

💡 Research Summary

**

The paper addresses two fundamental shortcomings of existing video‑based 3D human mesh recovery (HMR) methods: (1) unreliable intermediate 3D pose anchors that are often geometrically inconsistent, and (2) insufficient modeling of long‑range spatiotemporal dynamics, which leads to physically implausible meshes especially under occlusion, rapid motion, or motion blur. To overcome these issues, the authors propose HMRMamba, the first HMR framework that leverages Structured State Space Models (SSMs) through the Mamba architecture.

HMRMamba consists of two synergistic modules. The first, the Geometry‑Aware Lifting Module, takes a 2D pose sequence and per‑frame image features as input and produces a robust 3D pose sequence that serves as a stable anchor for mesh reconstruction. Central to this module is a novel dual‑scan Mamba called ST‑A‑Mamba (Spatial‑Temporal Alignment Mamba). ST‑A‑Mamba first applies a Spatial Mamba block to each frame, enforcing intra‑frame anatomical constraints such as correct bone lengths and joint ratios. Then a Temporal Mamba block equipped with a deformable attention mechanism refines the pose by dynamically sampling image features at predicted offsets, thereby aligning the lifted pose with the underlying visual geometry. This results in a geometrically grounded 3D pose stream that is both spatially accurate and temporally stable.

The second component, the Motion‑Guided Reconstruction Network, consumes the entire 3D pose stream together with the original image features to regress the final SMPL parameters (pose and shape). It employs a Motion‑Aware attention built on Temporal Mamba to explicitly model kinematic relationships across frames. By cross‑linking the pose anchor and visual cues, the network injects temporal awareness into the mesh regression, ensuring that the output mesh respects physical constraints (e.g., consistent limb lengths) and exhibits smooth motion even when parts of the body are occluded or blurred.

From a technical standpoint, the Mamba architecture approximates continuous linear time‑invariant systems via a zero‑order hold discretization, enabling the entire sequence to be processed as a global convolution. This yields linear‑time complexity O(N) with respect to sequence length, dramatically reducing the computational burden compared with quadratic‑time Transformers. The dual‑scan design (forward and backward passes) further enhances long‑range dependency capture without sacrificing parallelism.

Extensive experiments on three major benchmarks—3DPW, MPI‑INF‑3DHP, and Human3.6M—demonstrate the efficacy of HMRMamba. On 3DPW, the Procrustes‑aligned MPJPE drops from 45.2 mm (previous state‑of‑the‑art) to 38.7 mm, a 14 % improvement. Temporal consistency metrics also improve markedly (TCM from 0.12 to 0.07, acceleration error from 0.31 m/s² to 0.19 m/s²), indicating smoother motion. Similar gains are observed on the other datasets. In terms of efficiency, HMRMamba reduces FLOPs by roughly 30 % and model parameters from 45 M to 38 M, achieving real‑time inference (>30 FPS) on a single GPU. Qualitative results show that the method maintains correct body proportions and plausible joint rotations under severe occlusion and fast actions, where prior methods often produce distorted or jittery meshes.

The authors acknowledge limitations: the current design is tied to the SMPL parametric model, which may struggle with non‑standard clothing or accessories, and the deformable attention’s sampling density can increase memory usage. Future work may explore non‑parametric mesh representations, multimodal inputs (e.g., depth or infrared), and further lightweight adaptations for mobile platforms.

In summary, HMRMamba introduces a new paradigm for video‑based human mesh recovery by (1) grounding the 3D pose lifting process in image geometry via a dual‑scan Mamba, (2) leveraging the resulting stable pose anchor to guide a motion‑aware mesh regression, and (3) exploiting the efficiency and long‑range modeling strengths of SSM‑based Mamba. The resulting system simultaneously advances accuracy, temporal coherence, and computational efficiency, setting a new benchmark for the field.

Comments & Academic Discussion

Loading comments...

Leave a Comment