ViTMAlis: Towards Latency-Critical Mobile Video Analytics with Vision Transformers

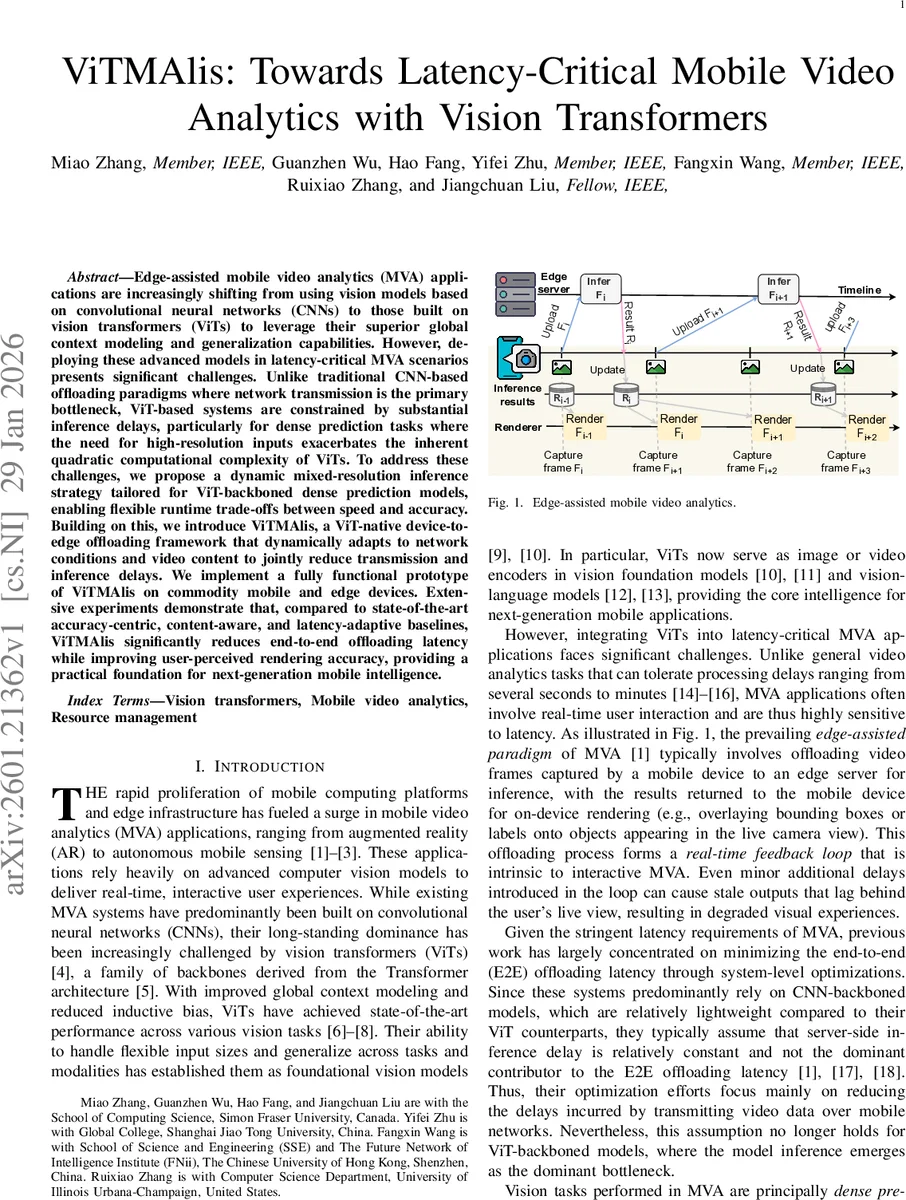

Edge-assisted mobile video analytics (MVA) applications are increasingly shifting from using vision models based on convolutional neural networks (CNNs) to those built on vision transformers (ViTs) to leverage their superior global context modeling and generalization capabilities. However, deploying these advanced models in latency-critical MVA scenarios presents significant challenges. Unlike traditional CNN-based offloading paradigms where network transmission is the primary bottleneck, ViT-based systems are constrained by substantial inference delays, particularly for dense prediction tasks where the need for high-resolution inputs exacerbates the inherent quadratic computational complexity of ViTs. To address these challenges, we propose a dynamic mixed-resolution inference strategy tailored for ViT-backboned dense prediction models, enabling flexible runtime trade-offs between speed and accuracy. Building on this, we introduce ViTMAlis, a ViT-native device-to-edge offloading framework that dynamically adapts to network conditions and video content to jointly reduce transmission and inference delays. We implement a fully functional prototype of ViTMAlis on commodity mobile and edge devices. Extensive experiments demonstrate that, compared to state-of-the-art accuracy-centric, content-aware, and latency-adaptive baselines, ViTMAlis significantly reduces end-to-end offloading latency while improving user-perceived rendering accuracy, providing a practical foundation for next-generation mobile intelligence.

💡 Research Summary

Mobile video analytics (MVA) applications such as augmented reality and autonomous sensing require real‑time visual inference on resource‑constrained devices. Historically, edge‑assisted MVA offloads frames to an edge server that runs lightweight convolutional neural network (CNN) backbones, so system designers focus on reducing network transmission latency while assuming a relatively constant server‑side inference time. The emergence of vision transformers (ViTs) as high‑performance, general‑purpose backbones changes this landscape: ViTs excel at global context modeling and can handle arbitrary input resolutions, but their self‑attention mechanism incurs quadratic complexity with respect to the number of tokens. Dense‑prediction tasks (object detection, semantic segmentation) demand high‑resolution inputs; consequently, ViT‑based models such as ViTDet‑L experience inference latencies of ~281 ms on an RTX 5090 for a 1080p frame—far exceeding the latency budget for interactive MVA.

The paper identifies two fundamental obstacles: (1) the inference delay of ViT backbones dominates end‑to‑end (E2E) latency, and (2) dense‑prediction heads require full‑resolution, grid‑aligned feature maps, which are disrupted by naïve token reduction techniques (pruning, merging). To address these, the authors propose a dynamic mixed‑resolution inference strategy and build a complete offloading framework called ViTMAlis.

Dynamic mixed‑resolution inference works by partitioning the image into “decision regions” that are integer multiples of the ViT patch size (16 × 16). Each region is either kept at full resolution or down‑sampled by a factor d (e.g., 2×). The region size r is chosen as r = w·d, where w is the window size used by the ViT’s window‑attention blocks, guaranteeing that down‑sampled tokens still align perfectly with the window partitions. This yields a binary mask indicating low‑resolution regions; the number of tokens is reduced by d² for each down‑sampled region, directly cutting the quadratic attention cost.

Because mixed‑resolution tokenization destroys the regular 2‑D spatial layout required by dense heads, the authors introduce a flexible resolution restoration mechanism. The ViT backbone is divided into N subsets (default N = 4). Within each subset, the system can selectively up‑sample the low‑resolution tokens at configurable depths, reconstructing a full‑resolution feature map that can be fed into a Feature Pyramid Network (FPN) and downstream detection/segmentation heads. This design preserves the computational savings of early‑stage down‑sampling while still providing the multi‑scale, grid‑aligned features needed for accurate dense prediction.

ViTMAlis integrates these techniques into a runtime system that adapts per‑frame based on (a) network conditions (bandwidth, RTT), (b) video content (visual importance estimated on‑device), and (c) a performance model that predicts inference delay for a given token budget. An accuracy‑oriented down‑sampling region selector chooses which parts of the frame can be safely compressed, while a lightweight estimator predicts the resulting latency. The configuration engine then selects the optimal token count and restoration points to minimize E2E latency while keeping a target accuracy (e.g., mAP) above a threshold.

The authors implement a prototype on commodity smartphones (Snapdragon 8‑Gen2) and an edge server equipped with an RTX 5090 GPU. Extensive experiments across 4G, 5G, and Wi‑Fi networks, and across diverse video scenarios (AR overlays, drive‑by view, indoor robotics), compare ViTMAlis against three state‑of‑the‑art baselines: (i) accuracy‑centric (static high‑resolution), (ii) content‑aware (static compression based on scene complexity), and (iii) latency‑adaptive (dynamic frame skipping). Results show that ViTMAlis reduces average E2E latency by 30 %–45 % relative to the best baseline, while improving user‑perceived rendering accuracy by 2 %–4 % mAP. Even under constrained 4G bandwidth, ViTMAlis achieves roughly twice the responsiveness of prior systems. The overhead of on‑device importance estimation and configuration selection is under 5 ms per frame, confirming real‑time feasibility.

Key contributions include: (1) a novel, ViT‑compatible mixed‑resolution tokenization scheme that respects window‑attention constraints; (2) a flexible feature‑map restoration pipeline enabling dense prediction heads to operate on mixed‑resolution inputs without retraining the backbone; (3) a system‑level offloading framework that jointly optimizes transmission and inference based on live network and content signals; and (4) a thorough empirical validation demonstrating practical gains on real hardware.

Limitations are acknowledged: the current design assumes window‑attention ViTs (e.g., Swin‑Transformer, ViTDet) and may need adaptation for pure global‑attention models; importance estimation relies on simple heuristics and could miss subtle semantic cues; and multi‑edge collaboration is not explored. Future work may extend the approach to multimodal importance cues, incorporate cooperative edge scheduling, and generalize to larger ViT families.

In summary, ViTMAlis shows that by exploiting ViTs’ input‑agnostic tokenization and carefully aligning mixed‑resolution processing with the transformer’s architectural constraints, one can dramatically cut inference cost while preserving the high‑resolution spatial fidelity required for dense video analytics. This makes ViT‑backboned, latency‑critical mobile intelligence a practical reality.

Comments & Academic Discussion

Loading comments...

Leave a Comment