A Deterministic Framework for Neural Network Quantum States in Quantum Chemistry

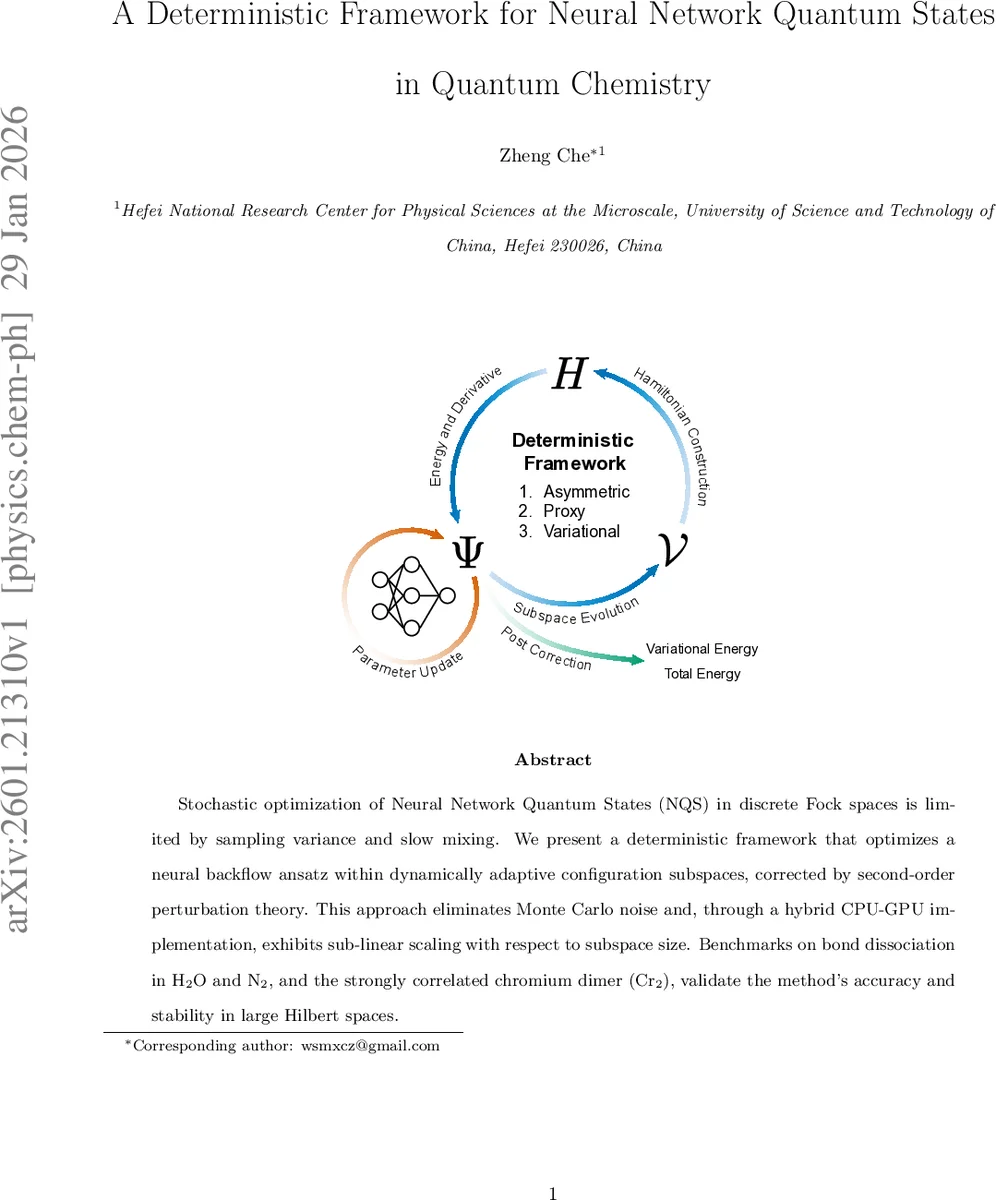

Stochastic optimization of Neural Network Quantum States (NQS) in discrete Fock spaces is limited by sampling variance and slow mixing. We present a deterministic framework that optimizes a neural backflow ansatz within dynamically adaptive configuration subspaces, corrected by second-order perturbation theory. This approach eliminates Monte Carlo noise and, through a hybrid CPU-GPU implementation, exhibits sub-linear scaling with respect to subspace size. Benchmarks on bond dissociation in H₂O and N₂, and the strongly correlated chromium dimer Cr₂, validate the method’s accuracy and stability in large Hilbert spaces.

💡 Research Summary

This paper introduces a fully deterministic optimization framework for Neural Network Quantum States (NQS) applied to electronic structure problems, thereby eliminating the sampling noise and slow mixing that plague conventional variational Monte Carlo (VMC) approaches in discrete Fock spaces. The authors treat the neural network as a deterministic generator of wave‑function amplitudes rather than a stochastic sampler. At each outer iteration a variational subspace Vₖ of the most important Slater configurations is selected, and the set of configurations directly coupled to Vₖ by the Hamiltonian, Cₖ, is constructed. The union Tₖ = Vₖ ∪ Pₖ (where Pₖ = Cₖ \ Vₖ is the perturbative set) defines a target space on which all energy expectations are evaluated by exact summation, removing Monte‑Carlo variance.
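The set construction described above can be illustrated with a minimal Python sketch. It assumes that configurations are stored as integer bit masks over spin orbitals and that the Hamiltonian couples configurations differing by at most a double excitation (the usual case for a two-body electronic Hamiltonian). Spin and point-group constraints are ignored for brevity, the selection of the "most important" configurations is not shown, and the names `connected` and `build_spaces` are hypothetical rather than taken from the paper.

```python
from itertools import combinations


def connected(config, n_orb):
    """Return every configuration reachable from `config` by one or two excitations."""
    occ = [i for i in range(n_orb) if (config >> i) & 1]
    vir = [i for i in range(n_orb) if not (config >> i) & 1]
    out = set()
    for k in (1, 2):  # single and double excitations
        for holes in combinations(occ, k):
            for particles in combinations(vir, k):
                new = config
                for i in holes:
                    new &= ~(1 << i)   # remove an electron from orbital i
                for a in particles:
                    new |= 1 << a      # place an electron in orbital a
                out.add(new)
    return out


def build_spaces(V, n_orb):
    """Given the variational set V, return (P, T) with P = C \\ V and T = V | P."""
    C = set()
    for x in V:
        C |= connected(x, n_orb)
    P = C - V
    T = V | P
    return P, T


# Toy case: two electrons in four spin orbitals, V holds one reference configuration.
V = {0b0011}
P, T = build_spaces(V, n_orb=4)
print(len(P), len(T))  # 5 6
```

In this toy case the target space already spans all six two-electron configurations; in realistic systems T is a small, Hamiltonian-connected fraction of the full space.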

Three deterministic energy functionals are defined:

- Asymmetric mode – normalizes only over Vₖ but evaluates the local energy using the full Tₖ. The gradient is an approximation because it neglects the dependence of the perturbative amplitudes on the parameters, leading to a non‑conservative vector field.
- Proxy mode – normalizes over the entire Tₖ and employs a sparse “proxy” Hamiltonian H̃ₖ that retains exact off‑diagonal matrix elements involving Vₖ while approximating the Pₖ‑Pₖ block by its diagonal. This yields an exact Rayleigh quotient and a well‑defined gradient, providing feedback from the perturbative amplitudes and stabilizing optimization for strongly correlated systems.
- Variational mode – restricts both the normalization and the Hamiltonian to Vₖ, reproducing the standard variational energy within the subspace. Because contributions from Pₖ are omitted, a post‑hoc second‑order Epstein‑Nesbet perturbation theory (PT2) correction is applied. The PT2 term accounts for both the residual error inside Vₖ and the coupling to Pₖ, ensuring that the final energy includes dynamic correlation missing from the variational step.
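To make the distinction between the three modes concrete, the sketch below evaluates each functional on a tiny dense, real, symmetric toy Hamiltonian. The formulas are one plausible reading of the descriptions above, not the paper's exact definitions. The `en_pt2` function is the textbook Epstein-Nesbet second-order sum over the perturbative set only; it does not include the residual-error contribution inside Vₖ that the summary mentions. All function and variable names are hypothetical.

```python
import math


def energies(H, psi, n_var):
    """Return (E_variational, E_asymmetric, E_proxy).

    H     : symmetric matrix over T as a list of lists; the first n_var indices form V
    psi   : real amplitudes over T
    n_var : number of configurations in V
    """
    n = len(psi)
    V, T = range(n_var), range(n)
    norm_V = sum(psi[i] ** 2 for i in V)
    norm_T = sum(psi[i] ** 2 for i in T)

    # Variational: Hamiltonian and normalization both restricted to V.
    e_var = sum(psi[i] * H[i][j] * psi[j] for i in V for j in V) / norm_V

    # Asymmetric: rows in V, columns over all of T, normalization over V only.
    e_asym = sum(psi[i] * H[i][j] * psi[j] for i in V for j in T) / norm_V

    # Proxy: Rayleigh quotient over T with the P-P block reduced to its diagonal.
    def h_proxy(i, j):
        if i >= n_var and j >= n_var and i != j:
            return 0.0
        return H[i][j]

    e_proxy = sum(psi[i] * h_proxy(i, j) * psi[j] for i in T for j in T) / norm_T
    return e_var, e_asym, e_proxy


def en_pt2(H, psi, n_var, e_var):
    """Textbook Epstein-Nesbet PT2 sum over P, using the normalized V amplitudes."""
    norm = math.sqrt(sum(psi[i] ** 2 for i in range(n_var)))
    e2 = 0.0
    for a in range(n_var, len(psi)):
        coupling = sum(H[a][i] * psi[i] for i in range(n_var)) / norm
        e2 += coupling ** 2 / (e_var - H[a][a])
    return e2


# Toy data: one variational configuration and two perturbative ones.
H = [[-1.0, 0.1, 0.1],
     [0.1, 0.5, 0.3],
     [0.1, 0.3, 0.8]]
psi = [1.0, -0.1, -0.1]
e_var, e_asym, e_proxy = energies(H, psi, n_var=1)
print(e_var, e_asym, e_proxy, e_var + en_pt2(H, psi, 1, e_var))
```

Under this reading, only the proxy value is a Rayleigh quotient of a single symmetric operator, which is consistent with the summary's statement that the proxy mode has a well-defined gradient.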

The neural‑network ansatz is a backflow architecture. Starting from a restricted Hartree–Fock reference orbital matrix Φ₀, a configuration‑dependent correction ΔΦ_θ(x) produced by a multilayer perceptron modifies the orbital basis: Φ_θ(x) = Φ₀ + ΔΦ_θ(x). For each occupation bit‑string x, the occupied rows of Φ_θ(x) form a square matrix whose determinant gives the wave‑function amplitude ψ_θ(x). By initializing the network near zero, the ansatz reduces to the Hartree–Fock determinant, guaranteeing a physically sensible initial sign structure while allowing the network to learn configuration‑dependent deformations that capture many‑body correlations.
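A small, dependency-free sketch of the backflow amplitude may help fix ideas. It assumes Φ₀ is stored as an `n_orb × n_elec` matrix, that the correction comes from a one-hidden-layer perceptron with `tanh` activation, and that the occupation vector `x` contains exactly `n_elec` ones. The layer shapes, the activation, and the names `det` and `backflow_amplitude` are illustrative assumptions; the paper's architecture may differ.

```python
import math


def det(m):
    """Determinant by Gaussian elimination with partial pivoting."""
    a = [row[:] for row in m]
    n, sign, result = len(a), 1.0, 1.0
    for c in range(n):
        p = max(range(c, n), key=lambda r: abs(a[r][c]))
        if a[p][c] == 0.0:
            return 0.0
        if p != c:
            a[c], a[p] = a[p], a[c]
            sign = -sign
        result *= a[c][c]
        for r in range(c + 1, n):
            f = a[r][c] / a[c][c]
            for k in range(c, n):
                a[r][k] -= f * a[c][k]
    return sign * result


def backflow_amplitude(x, phi0, W1, W2):
    """psi(x) = det of the occupied rows of phi0 + delta_phi(x).

    x    : list of 0/1 occupations, length n_orb, with n_elec ones
    phi0 : reference orbital matrix, n_orb x n_elec
    W1   : hidden-layer weights, n_hidden x n_orb
    W2   : output weights, (n_orb * n_elec) x n_hidden
    """
    n_orb, n_elec = len(phi0), len(phi0[0])
    hidden = [math.tanh(sum(w * xi for w, xi in zip(row, x))) for row in W1]
    delta = [sum(w * h for w, h in zip(row, hidden)) for row in W2]
    phi = [[phi0[i][j] + delta[i * n_elec + j] for j in range(n_elec)]
           for i in range(n_orb)]
    occupied = [phi[i] for i in range(n_orb) if x[i]]
    return det(occupied)


# Three orbitals, two electrons; zero output weights recover the reference determinant.
phi0 = [[1.0, 0.0], [0.0, 1.0], [0.0, 0.0]]
W1 = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
W2_zero = [[0.0, 0.0] for _ in range(6)]
print(backflow_amplitude([1, 1, 0], phi0, W1, W2_zero))  # 1.0
```

With all output weights set to zero the correction vanishes and the amplitudes are those of the reference determinant, which mirrors the near-zero initialization described above.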

The implementation uses a hybrid CPU‑GPU architecture. The combinatorial generation of the sparse Hamiltonian graph and the adaptive subspace selection run on the CPU using efficient bit‑wise operations, while the high‑dimensional tensor contractions, forward passes, and automatic differentiation required for the neural‑network optimization are offloaded to GPUs. This separation enables sub‑linear scaling of both memory usage and computational cost with respect to the size of Tₖ, even when |Tₖ| reaches millions of configurations.
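As an illustration of the kind of bit-wise operation involved, the sketch below computes the excitation degree between two configurations with an XOR followed by a population count. It assumes both configurations are integer bit masks with the same number of electrons. The function names are hypothetical, and a production code would likely use hardware popcount instructions in a compiled language.

```python
def excitation_degree(a, b):
    """Number of electrons that must move to turn configuration a into b."""
    # Each excitation flips two bits: one orbital is emptied, another is filled.
    return bin(a ^ b).count("1") // 2


def is_coupled(a, b):
    """A two-body Hamiltonian only connects configurations up to double excitations."""
    return excitation_degree(a, b) <= 2


print(excitation_degree(0b0011, 0b0101))  # 1
print(excitation_degree(0b0011, 0b1100))  # 2
print(is_coupled(0b000111, 0b111000))     # False
```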

Benchmark calculations demonstrate the method’s accuracy and robustness. For the bond dissociation curves of H₂O and N₂ in the cc‑pVDZ basis, the deterministic framework achieves chemical accuracy (≤ 1 kcal mol⁻¹) while the variational subspace contains only ~0.1 % of the full FCI space. In the notoriously challenging chromium dimer (Cr₂), the “Variational + PT2” protocol yields total energies within 0.5 mHartree of high‑precision selected‑CI and DMRG reference data, and the energy curve remains smooth across the multireference region. These results confirm that the deterministic approach can exploit the expressive power of NQS without suffering from stochastic noise, and that the adaptive subspace together with PT2 correction recovers both static and dynamic correlation efficiently.

In summary, the authors present a novel deterministic paradigm for neural‑network‑based quantum chemistry. By coupling a backflow NQS with adaptive configuration subspaces, exact energy evaluation, and a second‑order perturbative correction, they achieve high accuracy, favorable scaling, and stable convergence for systems ranging from small molecules such as H₂O and N₂ to the strongly correlated chromium dimer. The work opens avenues for further extensions such as multi‑reference backflow forms, real‑time subspace updates, and integration with emerging quantum‑computing hardware.
