Lossless Copyright Protection via Intrinsic Model Fingerprinting

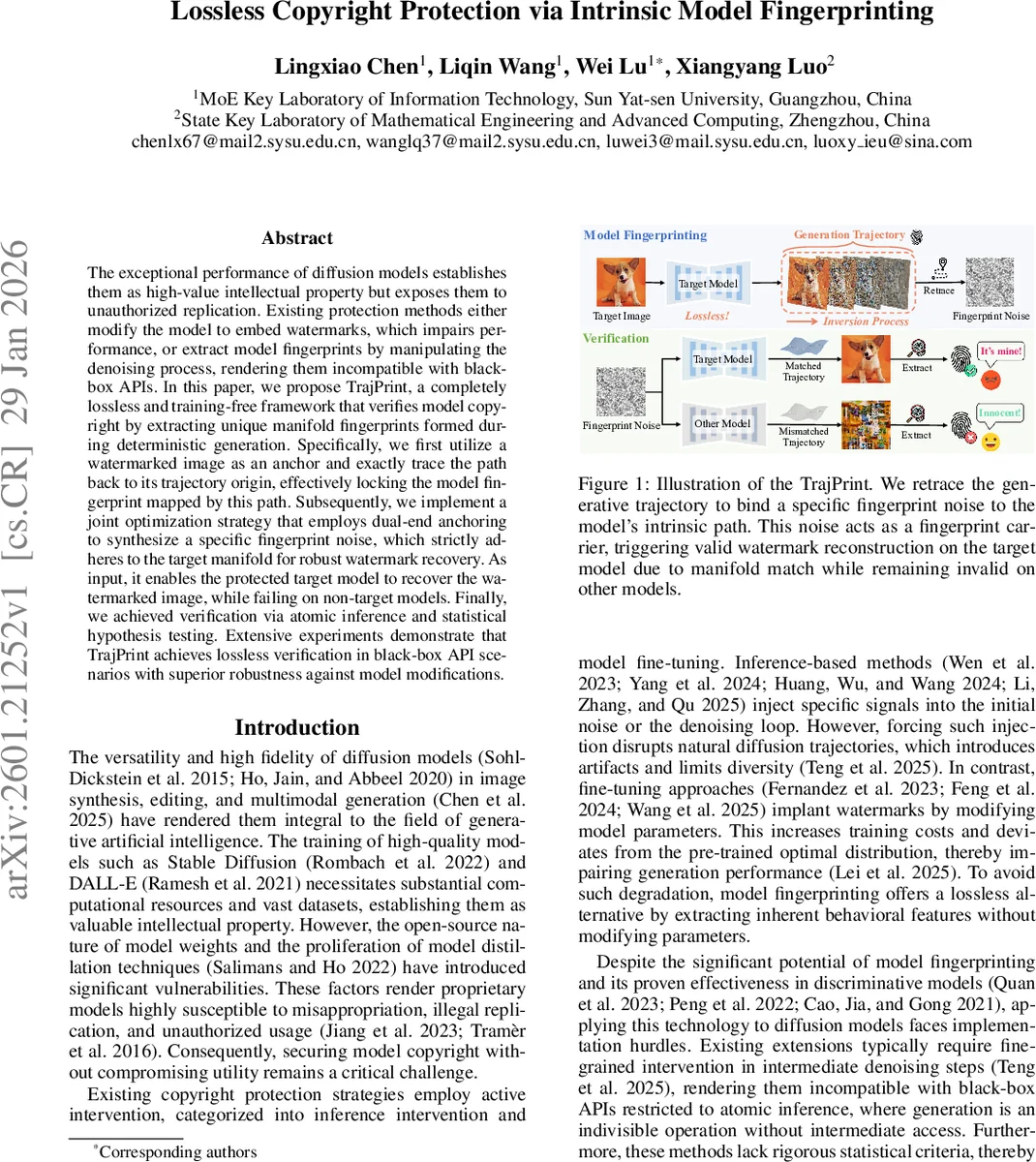

The exceptional performance of diffusion models makes them high-value intellectual property but also exposes them to unauthorized replication. Existing protection methods either modify the model to embed watermarks, which impairs performance, or extract model fingerprints by manipulating the denoising process, which makes them incompatible with black-box APIs. In this paper, we propose TrajPrint, a completely lossless and training-free framework that verifies model copyright by extracting unique manifold fingerprints formed during deterministic generation. Specifically, we first use a watermarked image as an anchor and trace its generation path exactly back to the trajectory origin, effectively locking the model fingerprint mapped by this path. Subsequently, we implement a joint optimization strategy that employs dual-end anchoring to synthesize a specific fingerprint noise, which strictly adheres to the target manifold for robust watermark recovery. When this noise is used as input, the protected target model recovers the watermarked image, whereas non-target models fail to do so. Finally, we achieve verification via atomic inference and statistical hypothesis testing. Extensive experiments demonstrate that TrajPrint achieves lossless verification in black-box API scenarios with superior robustness against model modifications.

💡 Research Summary

The paper “Lossless Copyright Protection via Intrinsic Model Fingerprinting” introduces TrajPrint, a novel framework for verifying the ownership of diffusion models without modifying the model or degrading its generation quality. The authors observe that deterministic DDIM sampling defines a bijective mapping Ψθ between the initial Gaussian noise space XT and the final latent image space X0. Because this mapping is determined entirely by the model parameters θ, the noise-to-latent correspondences it produces form an intrinsic high‑dimensional manifold that can serve as the model’s fingerprint.
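To make Ψθ concrete, here is a minimal Python sketch of deterministic (η = 0) DDIM sampling written as a pure function of the starting noise and the model parameters. It is a toy under stated assumptions: the latent is a single float, the noise predictor is the hypothetical linear function `eps_theta(x, t) = w·x + b·t/T` instead of a U-Net, and the 50-step linear beta schedule is illustrative. It shows only that a fixed θ always maps the same xT to the same x0, while a different θ maps it elsewhere.

```python
import math

# Illustrative 50-step linear beta schedule (an assumption, not the paper's setting).
T = 50
BETAS = [1e-4 + (0.02 - 1e-4) * i / (T - 1) for i in range(T)]


def alpha_bar(t):
    """Cumulative product of (1 - beta) over the first t steps; alpha_bar(0) == 1."""
    return math.prod(1.0 - b for b in BETAS[:t])


def eps_theta(x, t, theta):
    """Hypothetical toy noise predictor standing in for a real denoiser."""
    w, b = theta
    return w * x + b * t / T


def ddim_step(x_t, t, theta):
    """One deterministic DDIM update (eta = 0) from step t to step t - 1."""
    a_t, a_prev = alpha_bar(t), alpha_bar(t - 1)
    eps = eps_theta(x_t, t, theta)
    x0_pred = (x_t - math.sqrt(1.0 - a_t) * eps) / math.sqrt(a_t)
    return math.sqrt(a_prev) * x0_pred + math.sqrt(1.0 - a_prev) * eps


def psi(x_T, theta):
    """Toy Psi_theta: map initial noise x_T to a final latent x_0 with no randomness."""
    x = x_T
    for t in range(T, 0, -1):
        x = ddim_step(x, t, theta)
    return x


theta_target = (0.5, 0.1)  # hypothetical "protected" model parameters
theta_other = (0.4, 0.1)   # hypothetical unrelated model
x_T = 1.0
print(psi(x_T, theta_target), psi(x_T, theta_other))
```

Because every step is a fixed function of (x_t, t, θ), the whole trajectory, and therefore the endpoint, is a property of the parameters themselves.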

TrajPrint builds its fingerprint in two stages. First, a watermarked anchor image Iw is created by embedding a binary copyright message m into a carrier image using a pretrained encoder Ew. The anchor is encoded into latent space (x0 = E(Iw)) and then inverted through the deterministic DDIM inverse Ψθ⁻¹, yielding the initial noise xT that should regenerate Iw under the target model Gθ. This step “locks” the fingerprint by identifying the point on the model’s generative manifold that corresponds to the watermarked image. In practice, however, direct inversion suffers from discretization errors, so regenerating from xT reproduces Iw only approximately, and these small deviations can corrupt the watermark.
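The inversion step and the source of its discretization error can be illustrated with a similar toy. As before, the scalar latent, the hypothetical linear noise predictor, the illustrative schedule, and the anchor value 0.8 are all assumptions. Standard DDIM inversion runs the update in reverse, but it must evaluate the noise predictor at the known x_{t-1} rather than at the unknown x_t. Because of that substitution, regenerating from the inverted noise returns the anchor only approximately.

```python
import math

# Toy assumptions: scalar latent, linear noise predictor, 50-step linear schedule.
T = 50
BETAS = [1e-4 + (0.02 - 1e-4) * i / (T - 1) for i in range(T)]


def alpha_bar(t):
    return math.prod(1.0 - b for b in BETAS[:t])


def eps_theta(x, t, theta):
    w, b = theta
    return w * x + b * t / T


def ddim_step(x_t, t, theta):
    """Deterministic DDIM update from step t to t - 1."""
    a_t, a_prev = alpha_bar(t), alpha_bar(t - 1)
    eps = eps_theta(x_t, t, theta)
    x0_pred = (x_t - math.sqrt(1.0 - a_t) * eps) / math.sqrt(a_t)
    return math.sqrt(a_prev) * x0_pred + math.sqrt(1.0 - a_prev) * eps


def ddim_inverse_step(x_prev, t, theta):
    """Approximate inverse of ddim_step, mapping x_{t-1} to x_t.

    The exact inverse would need eps_theta(x_t, t), but x_t is the unknown,
    so eps is evaluated at x_{t-1}; this mismatch is the discretization error.
    """
    a_t, a_prev = alpha_bar(t), alpha_bar(t - 1)
    eps = eps_theta(x_prev, t, theta)
    x0_pred = (x_prev - math.sqrt(1.0 - a_prev) * eps) / math.sqrt(a_prev)
    return math.sqrt(a_t) * x0_pred + math.sqrt(1.0 - a_t) * eps


def psi(x_T, theta):
    x = x_T
    for t in range(T, 0, -1):
        x = ddim_step(x, t, theta)
    return x


def psi_inverse(x_0, theta):
    x = x_0
    for t in range(1, T + 1):
        x = ddim_inverse_step(x, t, theta)
    return x


theta = (0.5, 0.1)  # hypothetical target-model parameters
x0_anchor = 0.8     # hypothetical latent E(Iw) of the watermarked anchor
x_T = psi_inverse(x0_anchor, theta)
roundtrip_error = abs(psi(x_T, theta) - x0_anchor)
print(f"x_T = {x_T:.4f}, round-trip error = {roundtrip_error:.2e}")
```

In this toy the residual is small but nonzero. In a real pipeline, a residual of this kind is what can corrupt the embedded watermark.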

To overcome this, the second stage introduces an optimizable latent variable z initialized at xT and refines it via joint optimization under three complementary losses. (1) A watermark recovery loss Lw, the binary cross‑entropy between the decoded bits D_w(D(Ψθ(z))) and the original message m, encourages the generated image to carry the hidden watermark. (2) A reconstruction loss Lrec (L2 plus a perceptual term) pulls the decoded output of Ψθ(z) toward the watermarked anchor Iw both numerically and perceptually, anchoring the trajectory at the output end. (3) A regularization loss Lreg penalizes deviation of z from xT, anchoring the trajectory at the input end. The combined objective L = Lw + λrec Lrec + λreg Lreg yields an optimized “fingerprint noise” z*. When z* is fed to the target model, it follows that model’s intrinsic denoising path and reconstructs Iw with the embedded message intact. Non‑target models have different manifolds, so they produce unrelated images that fail watermark decoding.
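The joint objective can be sketched with hand-derived gradients in pure Python. Every component is a hypothetical stand-in chosen so that the sketch runs instantly:

- Ψθ is replaced by a per-coordinate affine map.
- The watermark decoder D_w(D(·)) is a sigmoid with an assumed slope of 10 on the first K latent coordinates.
- Lrec is a plain mean squared error with no perceptual term.
- The inversion error is a hand-picked perturbation that flips one bit of xT.

The weights λrec = 5 and λreg = 0.1 are illustrative, not the paper's values. Starting from z = xT, gradient descent recovers every message bit while keeping Ψθ(z) close to the anchor latent.

```python
import math

# Hypothetical toy stand-ins, not the paper's models:
#   psi(z)      per-coordinate affine map playing the role of Psi_theta
#   bit logits  SLOPE * x[i] for the first len(message) coordinates (decoder D_w)
THETA = [1.2, 0.8, 1.0, 1.1, 0.9, 1.3]
BIAS = [0.05, -0.1, 0.0, 0.1, -0.05, 0.2]
SLOPE = 10.0


def psi(z):
    return [w * zi + c for w, zi, c in zip(THETA, z, BIAS)]


def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))


def decoded_bits(x, k):
    """Hard decision: bit i is 1 when sigmoid(SLOPE * x[i]) > 0.5, i.e. x[i] > 0."""
    return [1 if xi > 0 else 0 for xi in x[:k]]


def bit_accuracy(bits, message):
    return sum(b == m for b, m in zip(bits, message)) / len(message)


def optimize_fingerprint(x_T, anchor, message, lam_rec=5.0, lam_reg=0.1,
                         lr=0.1, steps=2000):
    """Gradient descent on L = Lw + lam_rec * Lrec + lam_reg * Lreg from z = x_T.

    Lw   = mean BCE between sigmoid(SLOPE * x[:k]) and the message bits
    Lrec = mean squared error between psi(z) and the anchor latent (output end)
    Lreg = mean squared error between z and x_T (input end)
    """
    n, k = len(x_T), len(message)
    z = list(x_T)
    for _ in range(steps):
        x = psi(z)
        grad = []
        for i in range(n):
            g_x = lam_rec * 2.0 * (x[i] - anchor[i]) / n  # lam_rec * dLrec/dx_i
            if i < k:
                g_x += SLOPE * (sigmoid(SLOPE * x[i]) - message[i]) / k  # dLw/dx_i
            # Chain rule through psi (dx_i/dz_i = THETA[i]) plus lam_reg * dLreg/dz_i.
            grad.append(THETA[i] * g_x + lam_reg * 2.0 * (z[i] - x_T[i]) / n)
        z = [zi - lr * gi for zi, gi in zip(z, grad)]
    return z


message = [1, 0, 1, 1]
anchor = [0.3, -0.3, 0.3, 0.3, 0.5, -0.2]  # hypothetical anchor latent
z_exact = [(a - c) / w for a, c, w in zip(anchor, BIAS, THETA)]
inversion_error = [0.02, -0.03, -0.45, 0.01, 0.02, -0.01]  # flips bit index 2
x_T = [ze + e for ze, e in zip(z_exact, inversion_error)]

acc_before = bit_accuracy(decoded_bits(psi(x_T), len(message)), message)
z_star = optimize_fingerprint(x_T, anchor, message)
acc_after = bit_accuracy(decoded_bits(psi(z_star), len(message)), message)
max_rec_error = max(abs(a - b) for a, b in zip(psi(z_star), anchor))
print(acc_before, acc_after, max_rec_error)
```

The two anchoring terms pull in complementary directions. Lreg keeps z near the inverted noise, and Lrec keeps the output near the anchor. Lw alone would be free to drift anywhere that decodes correctly.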

Verification is performed under strict black‑box constraints: the defender queries the suspect model with the fingerprint noise z* in a single inference call (atomic inference) and extracts the watermark from the output. The bit accuracy is then measured, and a one‑sample t‑test assesses its statistical significance, providing quantitative evidence of ownership.
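The verification arithmetic fits in a short pure-Python sketch. This summary does not say how the sample for the t-test is formed. The sketch therefore assumes, hypothetically, that the defender collects bit accuracies from several fingerprint-noise queries and tests them one-sided against a chance-level mean of 0.5. The Student-t tail probability comes from the regularized incomplete beta function, evaluated with the standard continued-fraction method. The accuracy values are made-up illustrations, not results from the paper.

```python
import math
import statistics


def bit_accuracy(decoded, message):
    """Fraction of watermark bits that match the copyright message."""
    return sum(d == m for d, m in zip(decoded, message)) / len(message)


def _betacf(a, b, x, max_iter=200, eps=1e-14, tiny=1e-300):
    """Continued fraction for the incomplete beta function (modified Lentz)."""
    qab, qap, qam = a + b, a + 1.0, a - 1.0
    c, d = 1.0, 1.0 - qab * x / qap
    d = 1.0 / (d if abs(d) > tiny else tiny)
    h = d
    for m in range(1, max_iter + 1):
        m2 = 2 * m
        # Even and odd terms of the continued fraction for this m.
        for aa in (m * (b - m) * x / ((qam + m2) * (a + m2)),
                   -(a + m) * (qab + m) * x / ((a + m2) * (qap + m2))):
            d = 1.0 + aa * d
            d = 1.0 / (d if abs(d) > tiny else tiny)
            c = 1.0 + aa / c
            c = c if abs(c) > tiny else tiny
            delta = d * c
            h *= delta
        if abs(delta - 1.0) < eps:
            break
    return h


def _betai(a, b, x):
    """Regularized incomplete beta function I_x(a, b)."""
    if x <= 0.0:
        return 0.0
    if x >= 1.0:
        return 1.0
    front = math.exp(math.lgamma(a + b) - math.lgamma(a) - math.lgamma(b)
                     + a * math.log(x) + b * math.log1p(-x))
    if x < (a + 1.0) / (a + b + 2.0):
        return front * _betacf(a, b, x) / a
    return 1.0 - front * _betacf(b, a, 1.0 - x) / b


def t_sf(t, df):
    """One-sided upper tail P(T >= t) of Student's t with df degrees of freedom."""
    tail = 0.5 * _betai(df / 2.0, 0.5, df / (df + t * t))
    return tail if t > 0 else 1.0 - tail


def one_sample_t_test(samples, mu0=0.5):
    """One-sided test of H0: mean == mu0 against H1: mean > mu0."""
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples")
    sd = statistics.stdev(samples)
    if sd == 0.0:
        raise ValueError("zero-variance sample: t statistic undefined")
    t = (statistics.fmean(samples) - mu0) / (sd / math.sqrt(n))
    return t, t_sf(t, n - 1)


print(bit_accuracy([1, 0, 1, 1, 0, 1, 1, 0], [1, 0, 1, 1, 0, 1, 0, 0]))
target_acc = [0.98, 1.0, 0.97, 0.99, 1.0]  # illustrative only
other_acc = [0.52, 0.47, 0.55, 0.49, 0.50]  # illustrative only
t_target, p_target = one_sample_t_test(target_acc)
t_other, p_other = one_sample_t_test(other_acc)
print(f"target: t={t_target:.1f}, p={p_target:.1e}; other: t={t_other:.2f}, p={p_other:.2f}")
```

With SciPy available, `scipy.stats.ttest_1samp(samples, 0.5, alternative="greater")` should give the same statistic and p-value. The hand-rolled version is there only to keep the sketch dependency-free.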

Extensive experiments across multiple diffusion architectures (Stable Diffusion, DALL·E, Imagen, etc.) demonstrate near‑perfect verification rates. The method remains robust against common model‑modification attacks such as LoRA fine‑tuning, DreamBooth adaptation, quantization, and pruning; watermark recovery accuracy stays above 95% even after such alterations. Importantly, because TrajPrint does not alter model weights or inference pipelines, there is zero impact on image quality (no PSNR/SSIM loss) compared to the original model.

The contributions are threefold: (i) a completely lossless, training‑free copyright verification scheme compatible with black‑box APIs; (ii) a principled mapping of a model’s intrinsic generative manifold to a unique trigger‑watermark pair via trajectory inversion and dual‑end anchoring; and (iii) a rigorous statistical verification protocol that yields statistically grounded evidence of infringement. TrajPrint thus offers a practical, high‑fidelity solution for protecting valuable diffusion models in commercial and research settings.
