MGSM-Pro: A Simple Strategy for Robust Multilingual Mathematical Reasoning Evaluation

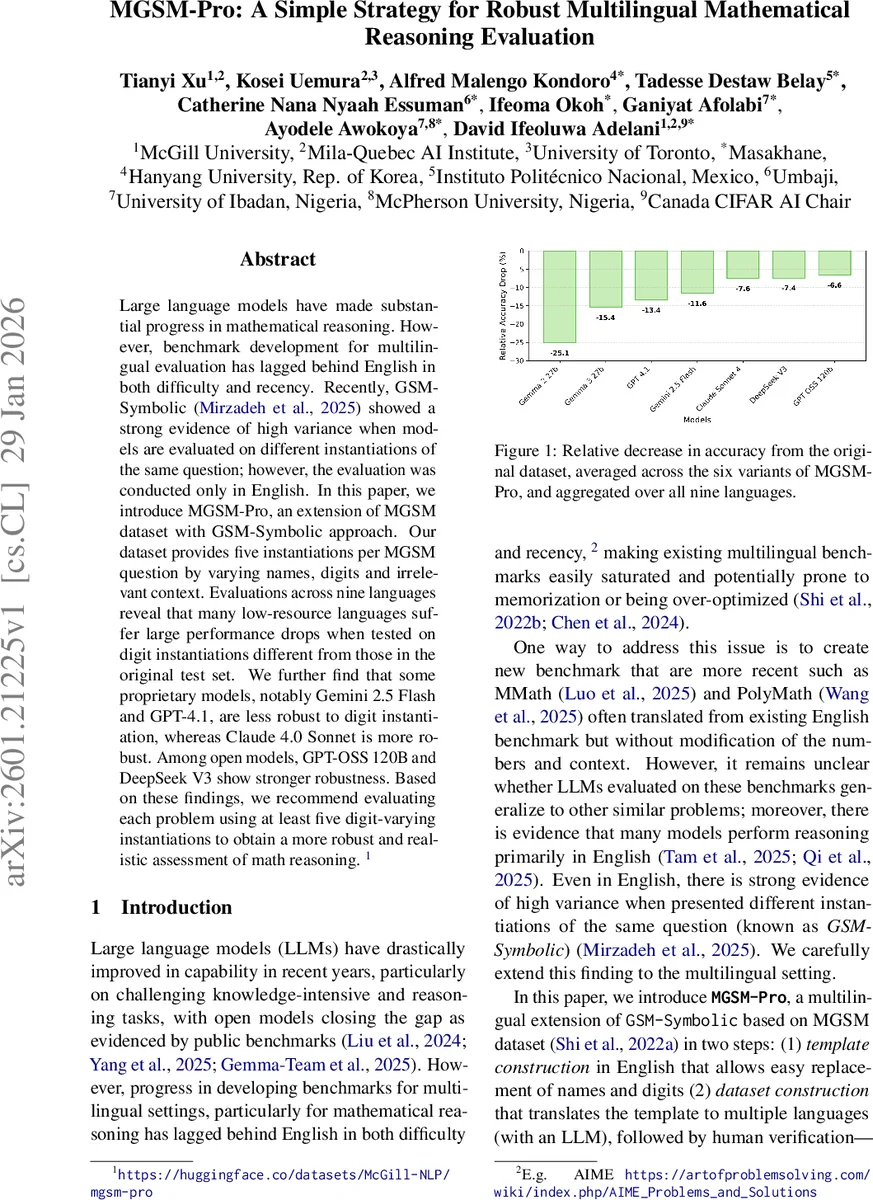

Large language models have made substantial progress in mathematical reasoning. However, benchmark development for multilingual evaluation has lagged behind English in both difficulty and recency. Recently, GSM-Symbolic showed a strong evidence of high variance when models are evaluated on different instantiations of the same question; however, the evaluation was conducted only in English. In this paper, we introduce MGSM-Pro, an extension of MGSM dataset with GSM-Symbolic approach. Our dataset provides five instantiations per MGSM question by varying names, digits and irrelevant context. Evaluations across nine languages reveal that many low-resource languages suffer large performance drops when tested on digit instantiations different from those in the original test set. We further find that some proprietary models, notably Gemini 2.5 Flash and GPT-4.1, are less robust to digit instantiation, whereas Claude 4.0 Sonnet is more robust. Among open models, GPT-OSS 120B and DeepSeek V3 show stronger robustness. Based on these findings, we recommend evaluating each problem using at least five digit-varying instantiations to obtain a more robust and realistic assessment of math reasoning.

💡 Research Summary

The paper introduces MGSM‑Pro, a multilingual extension of the MGSM benchmark designed to evaluate the robustness of large language models (LLMs) on mathematical reasoning tasks when faced with multiple instantiations of the same problem. While prior work such as GSM‑Symbolic demonstrated high variance in English when the same question is presented with different names, numbers, or irrelevant context, MGSM‑Pro expands this investigation to nine typologically diverse languages (English, Chinese, French, Japanese, Swahili, Amharic, Igbo, Yoruba, and Twi).

Dataset construction proceeds in two steps. First, adaptable English templates are created for 225 of the 250 MGSM problems, parameterizing only names and numeric values. Second, these templates are translated into the target languages using Gemini 2.0 Flash, followed by rigorous human verification and automated alignment checks. For each original problem, five distinct instances are generated, yielding a total of 1,125 evaluation items. The variations are organized into two series: Symbolic (SYM) – which includes name‑only (SYM_N), number‑only (SYM_#), and combined name‑and‑number (SYM_N#) changes – and Irrelevant Context (IC) – which mirrors the SYM changes but adds a distractor sentence (IC_N, IC_#, IC_N#).

The authors evaluate twelve state‑of‑the‑art LLMs (both open‑source and proprietary) in a zero‑shot setting, prompting the models to reason step‑by‑step in English (Chain‑of‑Thought) and to output only the final numeric answer. Each variation is sampled five times with different numeric values, and the mean accuracy across these repetitions is reported.

Key findings:

- Name changes have minimal impact, while adding irrelevant context causes a modest drop, especially for low‑resource languages (LRLs).

- Numeric variations are the primary source of performance degradation. Across all languages, the average accuracy loss in the numeric‑only setting (SYM_#) is at least 8 points; Gemma 3 27B and Gemini 2.5 Flash suffer the largest drops (‑18.2 and ‑15.2 respectively).

- High‑resource languages (HRLs) are more robust than LRLs. English, Chinese, and French typically lose fewer than 8 points, whereas Amharic, Igbo, Yoruba, and Twi can lose 15–20 points. Swahili behaves more like an HRL.

- Model size does not uniformly predict robustness. In the Gemma family, larger models degrade more, whereas GPT‑OSS shows the opposite trend, with the 120 B parameter version being more stable than smaller variants. Notably, the open‑source GPT‑OSS 120B outperforms the closed‑source GPT‑4.1 on robustness despite the latter’s larger, undisclosed parameter count.

- Leaderboard rankings are unstable under multi‑instance evaluation. Gemini 2.5 Flash tops the original (single‑instance) leaderboard, but when averaged over five instances (Avg‑5), Claude Sonnet 4 rises to first place, and the ordering of all models shifts consistently. Repeating with ten instances (Avg‑10) yields the same ranking, confirming that a single instance is insufficient for reliable assessment.

The paper also emphasizes cultural relevance: native annotators curated locale‑specific names, cities, and common entities to avoid phonetic transliteration artifacts that could bias results.

Limitations include the restriction to nine languages (due to annotator availability), evaluation of only twelve models (limited compute), exclusive use of English‑language reasoning prompts, and the omission of 25 MGSM items whose symbolic constraints could not be reliably regenerated. Future work aims to expand language coverage, incorporate more models (e.g., Qwen 3, GPT‑5), test non‑English reasoning prompts, and complete the full 250‑question set.

In conclusion, MGSM‑Pro provides a systematic, multilingual framework for probing LLM robustness to superficial variations in mathematical problems. The findings reveal pronounced vulnerabilities in low‑resource languages and under numeric perturbations, and they argue convincingly for adopting a minimum of five varied instances per problem (the Avg‑5 protocol) as a standard evaluation practice. This approach mitigates over‑fitting to a single test instance and yields a more realistic picture of a model’s true mathematical reasoning capabilities across languages.

Comments & Academic Discussion

Loading comments...

Leave a Comment