FRISM: Fine-Grained Reasoning Injection via Subspace-Level Model Merging for Vision-Language Models

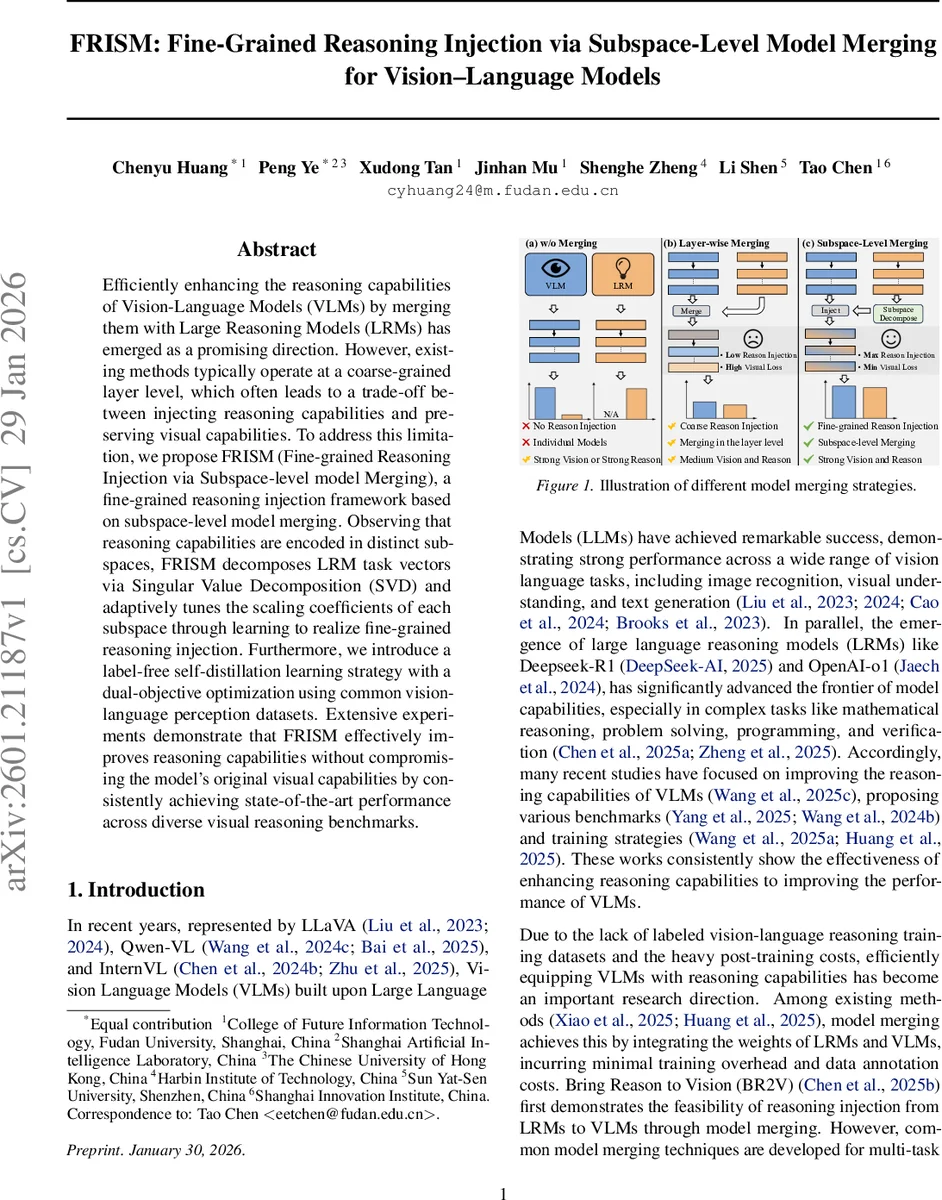

Efficiently enhancing the reasoning capabilities of Vision-Language Models (VLMs) by merging them with Large Reasoning Models (LRMs) has emerged as a promising direction. However, existing methods typically operate at a coarse-grained layer level, which often leads to a trade-off between injecting reasoning capabilities and preserving visual capabilities. To address this limitation, we propose {FRISM} (Fine-grained Reasoning Injection via Subspace-level model Merging), a fine-grained reasoning injection framework based on subspace-level model merging. Observing that reasoning capabilities are encoded in distinct subspaces, FRISM decomposes LRM task vectors via Singular Value Decomposition (SVD) and adaptively tunes the scaling coefficients of each subspace through learning to realize fine-grained reasoning injection. Furthermore, we introduce a label-free self-distillation learning strategy with a dual-objective optimization using common vision-language perception datasets. Extensive experiments demonstrate that FRISM effectively improves reasoning capabilities without compromising the model’s original visual capabilities by consistently achieving state-of-the-art performance across diverse visual reasoning benchmarks.

💡 Research Summary

The paper introduces FRISM (Fine‑grained Reasoning Injection via Subspace‑level Model merging), a novel framework for endowing Vision‑Language Models (VLMs) with the reasoning capabilities of Large Reasoning Models (LRMs) without sacrificing visual performance. Existing model‑merging approaches operate at the layer level, which inevitably couples visual and reasoning abilities and leads to a trade‑off: strengthening reasoning often degrades visual perception. FRISM addresses this by hypothesizing that reasoning knowledge resides in distinct low‑rank subspaces of the parameter space. To test the hypothesis, the authors compute the task vector of an LRM (the weight difference between the fine‑tuned LRM and a shared base model) and decompose it via Singular Value Decomposition (SVD) into orthogonal left/right singular vectors (U, V) and singular values (S). Each singular value corresponds to a subspace’s importance.

FRISM proceeds in two stages.

Stage 1 – Decomposition & Initialization: For each linear layer l, the LRM task vector τ_l^lrm is factorized as U_l S_l V_l^T. The orthogonal bases U_l and V_l are frozen, preserving the semantic direction of the reasoning update. A lightweight, learnable gating vector g_l ∈ ℝ^r (where r is the rank) is introduced; after a sigmoid, it modulates each singular value: S_eff = sigmoid(g_l) ⊙ S_l. The merged layer becomes θ_l^merged = θ_l^vlm + λ_lrm·U_l S_eff V_l^T, where λ_lrm is a global reasoning coefficient (often set to 1). This design allows fine‑grained control over how much of each subspace is injected, while keeping the number of trainable parameters negligible.

Stage 2 – Injection & Training: To preserve visual capabilities, FRISM employs a label‑free self‑distillation scheme. The original VLM serves as a frozen teacher; the merged model (parameterized by the gates) is the student. On large, publicly available vision‑language datasets (e.g., TextVQA, POPE), the student minimizes the Kullback‑Leibler divergence between its output distribution and the teacher’s, ensuring that visual perception remains aligned. Simultaneously, a “spectral injection strength” loss maximizes the sum of gated singular values, encouraging the model to absorb as much reasoning prior as possible. The total loss is a weighted sum of the KL term (visual preservation) and the spectral term (reasoning injection).

Experiments merge DeepSeek‑R1‑Distill‑Qwen‑7B (LRM) into Qwen2.5‑VL‑7B‑Instruct (VLM). By varying the scaling of individual subspace ranks, the authors demonstrate that different ranks achieve peak performance at distinct scaling levels, confirming that reasoning knowledge is not uniformly distributed. Compared with prior methods—BR2V, FRANK (layer‑wise Taylor‑derived merging), and IP‑Merging (similarity‑based selective merging)—FRISM consistently improves reasoning benchmarks (MMStar, R1‑OneVision) by 2–4 percentage points while keeping vision benchmarks (TextVQA, POPE) virtually unchanged. The approach adds less than 0.1 % extra parameters, making it highly efficient and plug‑and‑play across various VLM architectures and scales.

Key insights:

- Subspace‑level merging provides a much finer granularity than layer‑wise merging, allowing selective transfer of reasoning knowledge.

- SVD offers an optimal low‑rank approximation, revealing the latent structure of task vectors and enabling precise gating.

- Label‑free self‑distillation effectively safeguards visual performance without requiring costly multimodal reasoning annotations.

- The method’s parameter efficiency (only the gating vectors are learned) makes it scalable to large models.

Limitations include the focus on linear layers (extension to non‑linear Transformer blocks remains open) and reliance on SVD approximations, which may introduce errors for extremely high‑dimensional updates. Nonetheless, FRISM establishes a compelling paradigm for rapid, low‑cost reasoning augmentation of vision‑language systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment