MAD: Modality-Adaptive Decoding for Mitigating Cross-Modal Hallucinations in Multimodal Large Language Models

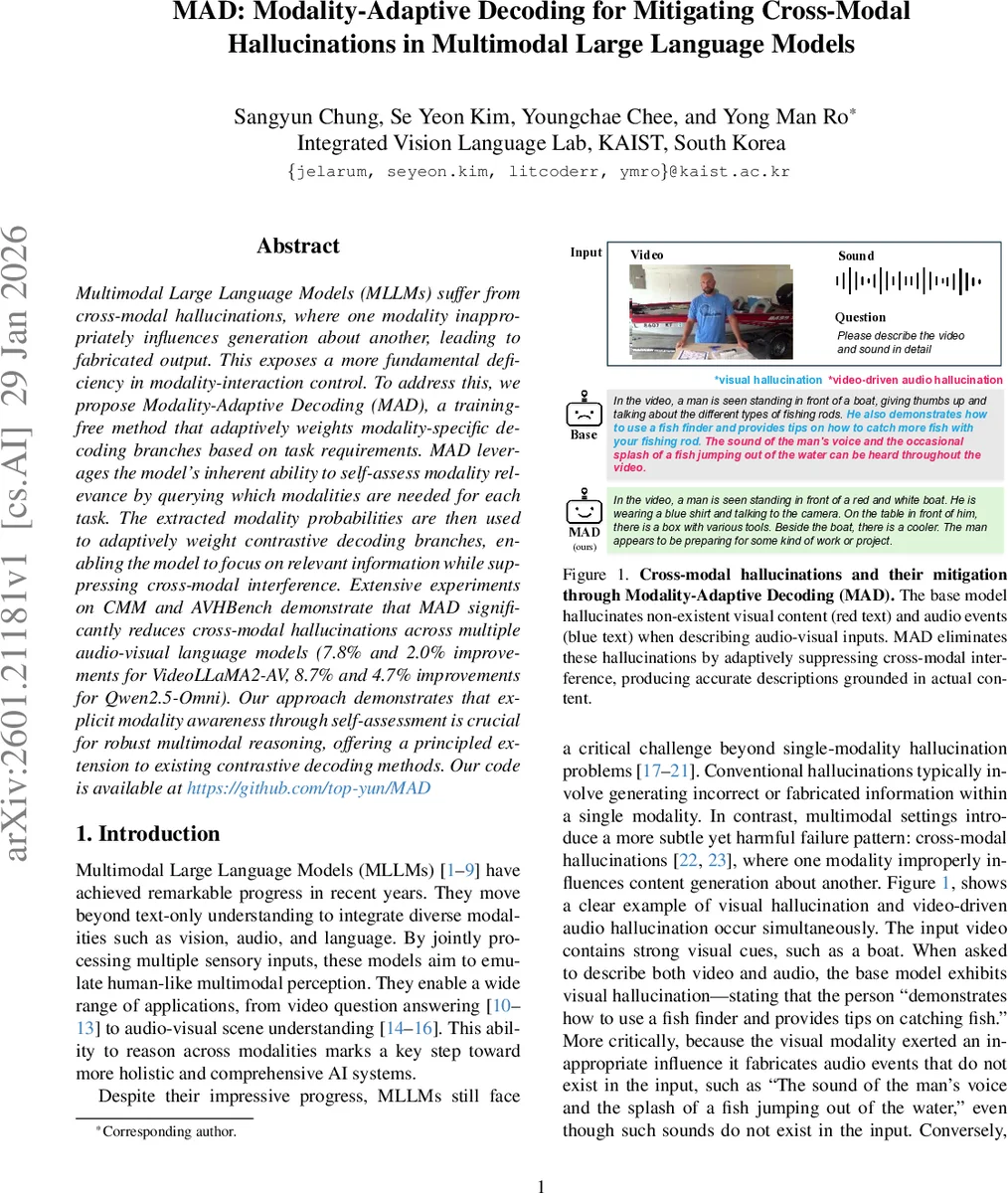

Multimodal Large Language Models (MLLMs) suffer from cross-modal hallucinations, where one modality inappropriately influences generation about another, leading to fabricated output. This exposes a more fundamental deficiency in modality-interaction control. To address this, we propose Modality-Adaptive Decoding (MAD), a training-free method that adaptively weights modality-specific decoding branches based on task requirements. MAD leverages the model’s inherent ability to self-assess modality relevance by querying which modalities are needed for each task. The extracted modality probabilities are then used to adaptively weight contrastive decoding branches, enabling the model to focus on relevant information while suppressing cross-modal interference. Extensive experiments on CMM and AVHBench demonstrate that MAD significantly reduces cross-modal hallucinations across multiple audio-visual language models (7.8% and 2.0% improvements for VideoLLaMA2-AV, 8.7% and 4.7% improvements for Qwen2.5-Omni). Our approach demonstrates that explicit modality awareness through self-assessment is crucial for robust multimodal reasoning, offering a principled extension to existing contrastive decoding methods. Our code is available at https://github.com/top-yun/MAD.

💡 Research Summary

Multimodal large language models (MLLMs) have achieved impressive capabilities by jointly processing text, vision, and audio. However, a critical failure mode, cross‑modal hallucination, remains largely unaddressed. In this phenomenon, information from one modality improperly influences generation about another, producing fabricated visual or auditory details that are not present in the input. Existing mitigation strategies, such as contrastive decoding (CD), focus on a single modality (typically vision) and apply a uniform perturbation‑based penalty regardless of the task’s modality requirements. Consequently, they cannot dynamically suppress the influence of irrelevant modalities, and may even degrade performance on tasks that rely on a different modality (e.g., audio‑only queries).
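To make the "uniform penalty" concrete, here is a minimal sketch of single-branch contrastive decoding. It assumes the formulation commonly used in visual contrastive decoding, where next-token logits from a perturbed input are subtracted from the clean logits with a fixed strength. The names `contrastive_decode` and `alpha` are illustrative and are not taken from the MAD code base.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def contrastive_decode(clean_logits, perturbed_logits, alpha=1.0):
    """Return next-token probabilities after a uniform contrastive penalty.

    Assumed form: (1 + alpha) * clean - alpha * perturbed.
    alpha is fixed, so the same penalty applies whatever the question asks about.
    """
    if len(clean_logits) != len(perturbed_logits):
        raise ValueError("logit vectors must have the same length")
    adjusted = [
        (1.0 + alpha) * c - alpha * p
        for c, p in zip(clean_logits, perturbed_logits)
    ]
    return softmax(adjusted)


# Toy vocabulary of three tokens. Token 0 scores high even when the input is
# perturbed (a prior-driven guess); token 1 scores high only with the clean input.
probs = contrastive_decode([2.0, 2.0, 0.0], [2.0, 0.0, 0.0], alpha=1.0)
print(probs)
```

In this toy case the token that depends on the clean input ends up more probable than the prior-driven one. Because `alpha` never changes, the penalty cannot adapt to which modality a question actually needs, which is the limitation the summary points to.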

The paper introduces Modality‑Adaptive Decoding (MAD), a training‑free inference technique that equips an MLLM with the ability to self‑assess which modalities are needed for a given question and to adaptively weight contrastive decoding accordingly. MAD operates in two steps. First, a fixed “modality query” prompt—“To answer this question, which modality is needed (audio, video, or both)?”—is appended to the original multimodal inputs. The model’s response yields a probability distribution over the three modality options; these probabilities are interpreted as task‑specific modality weights wᵥ, wₐ, wₐᵥ in the range
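The first step described above, turning the model's answer to the modality query into weights, can be sketched as follows. This is an illustration under stated assumptions rather than the authors' implementation: it assumes the model's logits for the three answer options are already available, and that a softmax over them gives the probability distribution. The option labels, the `modality_weights` helper, and the example logits are hypothetical.

```python
import math

# Answer options of the modality query; the labels are illustrative.
MODALITY_OPTIONS = ("audio", "video", "both")


def modality_weights(option_logits):
    """Map per-option logits to a probability distribution over modalities.

    option_logits: dict with one float per entry in MODALITY_OPTIONS, e.g. the
    logit the model assigns to each option as its answer to the modality query.
    Softmax normalization is an assumption made for this sketch.
    """
    missing = [m for m in MODALITY_OPTIONS if m not in option_logits]
    if missing:
        raise KeyError(f"missing logits for: {missing}")
    peak = max(option_logits[m] for m in MODALITY_OPTIONS)
    exps = {m: math.exp(option_logits[m] - peak) for m in MODALITY_OPTIONS}
    total = sum(exps.values())
    return {m: exps[m] / total for m in MODALITY_OPTIONS}


# Made-up logits for a question that is mostly about sound.
weights = modality_weights({"audio": 2.0, "video": 0.5, "both": 1.0})
print(weights)
```

The resulting values sum to one and preserve the ordering of the logits, so the option the model favors receives the largest weight. How these weights are then combined with the contrastive decoding branches is not covered by this sketch.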
