Breaking the Reasoning Horizon in Entity Alignment Foundation Models

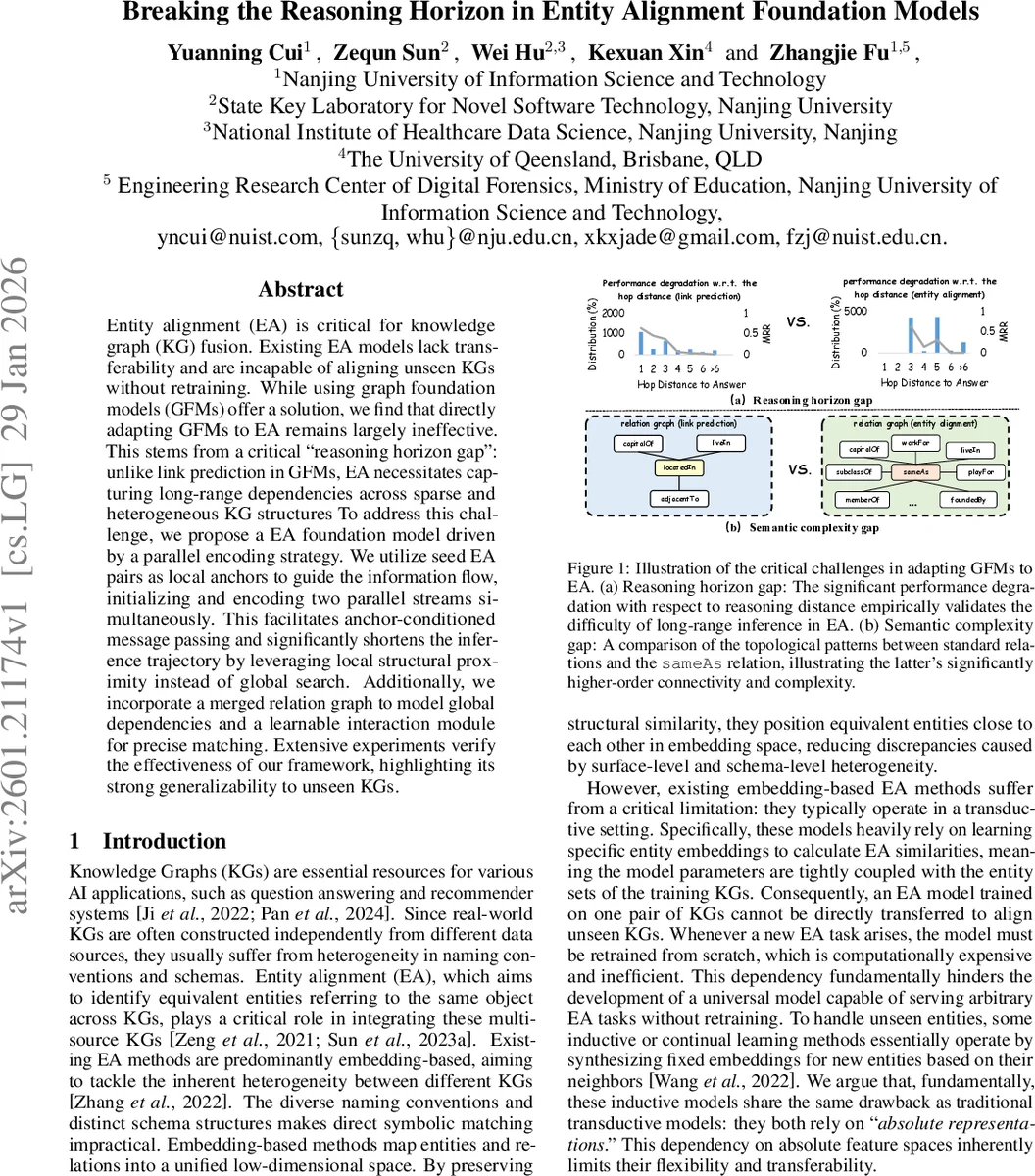

Entity alignment (EA) is critical for knowledge graph (KG) fusion. Existing EA models lack transferability and cannot align unseen KGs without retraining. While graph foundation models (GFMs) offer a solution, we find that directly adapting GFMs to EA remains largely ineffective. This stems from a critical “reasoning horizon gap”: unlike link prediction in GFMs, EA requires capturing long-range dependencies across sparse and heterogeneous KG structures. To address this challenge, we propose an EA foundation model driven by a parallel encoding strategy. We use seed EA pairs as local anchors to guide the information flow, initializing and encoding two parallel streams simultaneously. This enables anchor-conditioned message passing and significantly shortens the inference trajectory by leveraging local structural proximity instead of global search. Additionally, we incorporate a merged relation graph to model global dependencies and a learnable interaction module for precise matching. Extensive experiments verify the effectiveness of our framework, highlighting its strong generalizability to unseen KGs.

💡 Research Summary

The paper tackles the fundamental problem of Entity Alignment (EA) for knowledge graph (KG) fusion by introducing a novel foundation model, EAFM, that directly addresses the “reasoning horizon gap” inherent in adapting graph foundation models (GFMs) to EA tasks. Traditional EA approaches rely on transductive embeddings tied to specific entity sets, making them incapable of handling unseen KGs without costly retraining. While recent KG‑foundation models achieve transferability through relative, structure‑based representations, they are primarily designed for intra‑graph tasks such as link prediction. When applied to EA, these models must bridge two disjoint graphs, often requiring long‑range message passing that exceeds their receptive field, leading to severe performance degradation as demonstrated by hop‑distance analyses.

EAFM resolves this by (1) exploiting the commonly available seed EA pairs as local anchors, (2) initializing and encoding two KG streams in parallel, and (3) guiding message passing with anchor‑conditioned features. The anchor‑conditioned initialization assigns a unit vector to any node that lies within a k‑hop neighborhood of a seed pair and zero otherwise, thereby encoding relative structural coordinates instead of absolute IDs. This design eliminates the need for pre‑trained entity embeddings, enabling zero‑shot inference on completely new KG pairs.
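
The anchor-conditioned initialization described above can be illustrated with a small sketch. It is not the authors' implementation: the function name `anchor_init`, the choice of an all-ones vector as the "unit vector", the undirected treatment of edges, and the feature dimension `dim` are all assumptions made for illustration.

```python
from collections import deque

def anchor_init(num_nodes, edges, seeds, k, dim=4):
    """Return one feature row per node: all-ones if the node lies within
    k hops of any seed entity, all-zeros otherwise (hypothetical helper)."""
    # Build an undirected adjacency list; edge direction is ignored in this sketch.
    adj = [[] for _ in range(num_nodes)]
    for h, t in edges:
        adj[h].append(t)
        adj[t].append(h)
    # Multi-source breadth-first search from all seeds, not expanding past depth k.
    dist = {s: 0 for s in seeds}
    queue = deque(seeds)
    while queue:
        u = queue.popleft()
        if dist[u] == k:
            continue
        for v in adj[u]:
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    # Features depend only on position relative to the anchors, never on node IDs.
    return [[1.0] * dim if n in dist else [0.0] * dim for n in range(num_nodes)]

# Path graph 0-1-2-3-4 with one seed at node 0 and k = 2.
features = anchor_init(5, [(0, 1), (1, 2), (2, 3), (3, 4)], seeds=[0], k=2, dim=2)
```

Because the features encode only distance to the anchors, the same procedure applies unchanged to a KG whose entities were never seen during training, which is the property the summary attributes to this design.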

To handle relational schema heterogeneity, the authors construct a merged relation graph that unifies all relations from both KGs. Nodes are relations, and edges capture five interaction types (head‑head, head‑tail, tail‑head, tail‑tail, and inverse) derived from incidence matrices built on a virtual unified entity space created via the seed anchors. A dedicated Relation‑aware GNN (RelGNN) processes this graph, using query‑conditioned initialization (only relations adjacent to the query receive a one‑vector) and attention‑weighted message passing to produce global relation embeddings. These embeddings serve as a schema‑level prior that conditions the Entity‑aware GNN (EntGNN) operating on the two KGs in parallel.
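
A minimal sketch of how such a merged relation graph might be assembled is shown below. It covers only the four incidence-derived interaction types (head-head, head-tail, tail-head, tail-tail) and omits the inverse type. The function name `merged_relation_graph`, the `"a"`/`"b"` tags, the edge labels, and the toy triples are hypothetical, and set intersections stand in for the incidence-matrix products.

```python
def merged_relation_graph(triples_a, triples_b, seed_pairs):
    """Connect relations from both KGs when they share a (unified) entity."""
    # Map each seed-aligned entity of KG B onto its KG A partner, giving a
    # virtual unified entity space through which the two KGs can interact.
    unify = {("b", eb): ("a", ea) for ea, eb in seed_pairs}
    heads, tails = {}, {}
    for tag, triples in (("a", triples_a), ("b", triples_b)):
        for h, r, t in triples:
            rel = (tag, r)
            heads.setdefault(rel, set()).add(unify.get((tag, h), (tag, h)))
            tails.setdefault(rel, set()).add(unify.get((tag, t), (tag, t)))
    rels = sorted(heads)
    edges = set()
    for r1 in rels:
        for r2 in rels:
            if r1 == r2:
                continue
            # A non-empty intersection corresponds to a non-zero entry in the
            # product of the matching head/tail incidence matrices.
            if heads[r1] & heads[r2]:
                edges.add((r1, "h2h", r2))
            if heads[r1] & tails[r2]:
                edges.add((r1, "h2t", r2))
            if tails[r1] & heads[r2]:
                edges.add((r1, "t2h", r2))
            if tails[r1] & tails[r2]:
                edges.add((r1, "t2t", r2))
    return edges

kg_a = [("paris", "capital_of", "france")]
kg_b = [("paris_fr", "capitale_de", "france_fr"),
        ("france_fr", "situe_en", "europe_fr")]
rel_edges = merged_relation_graph(kg_a, kg_b, seed_pairs=[("paris", "paris_fr")])
```

In this toy example the single seed pair is what links `capital_of` in one KG to `capitale_de` in the other; without it, the relation graph would split into two disconnected halves.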

The EntGNN shares parameters across both graphs and performs anchor‑conditioned message passing, allowing the model to focus on local structural proximity rather than exhaustive global traversal. After obtaining entity representations, a learnable interaction module replaces static similarity measures. This module is trained with a bidirectional classification loss, enabling fine‑grained discrimination of candidate alignments.
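
The summary does not spell out the bidirectional classification loss, so the following is only one plausible reading: a softmax cross-entropy applied once over the rows of the matcher's score matrix (source to target) and once over its columns (target to source), then averaged. The function names, the square score matrix, and the one-to-one gold alignment are assumptions.

```python
import math

def cross_entropy_rows(scores, gold):
    """Mean softmax cross-entropy where gold[i] is the correct column of row i."""
    total = 0.0
    for i, row in enumerate(scores):
        m = max(row)  # subtract the row maximum for numerical stability
        log_z = m + math.log(sum(math.exp(s - m) for s in row))
        total += log_z - row[gold[i]]
    return total / len(scores)

def bidirectional_loss(scores, gold):
    """Average of source-to-target and target-to-source classification losses.

    Assumes a square score matrix and a one-to-one alignment, so that every
    target entity also has exactly one gold source entity.
    """
    transposed = [list(col) for col in zip(*scores)]
    inverse = {j: i for i, j in enumerate(gold)}
    gold_t = [inverse[j] for j in range(len(transposed))]
    forward = cross_entropy_rows(scores, gold)
    backward = cross_entropy_rows(transposed, gold_t)
    return 0.5 * (forward + backward)

uninformative = bidirectional_loss([[0.0, 0.0], [0.0, 0.0]], gold=[0, 1])
confident = bidirectional_loss([[5.0, 0.0], [0.0, 5.0]], gold=[0, 1])
```

With all-equal scores each direction reduces to a uniform guess over two candidates, so the loss equals ln 2; sharper scores on the gold pairs drive it toward zero in both directions at once.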

Training proceeds in two stages: (i) pre‑training on source KG pairs with seed anchors, jointly optimizing RelGNN, EntGNN, and the matcher; (ii) inference on unseen KG pairs, where the frozen RelGNN and EntGNN are directly applied using the same anchor‑conditioned initialization, requiring no fine‑tuning. Experiments on standard benchmarks (FB15K‑237, DBP15K, OpenEA) show that EAFM outperforms state‑of‑the‑art EA methods by a substantial margin in MRR and Hits@1. Zero‑shot transfer experiments demonstrate that the model retains high alignment accuracy on completely new KG pairs, confirming its practical transferability. Ablation studies reveal that each component—anchor‑conditioned initialization, merged relation graph, and learnable matcher—contributes significantly to overall performance.
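
For reference, the two reported metrics can be computed from a score matrix as sketched below. The function name, the toy scores, and the optimistic tie handling (ties do not worsen the rank) are illustrative choices, not details taken from the paper.

```python
def mrr_and_hits_at_1(scores, gold):
    """Compute mean reciprocal rank and Hits@1.

    scores[i][j] is the matching score between source entity i and target
    candidate j; gold[i] is the index of the correct target for source i.
    """
    reciprocal_ranks = 0.0
    hits = 0
    for row, g in zip(scores, gold):
        # Rank = 1 + number of candidates scored strictly higher than the gold one.
        rank = 1 + sum(1 for s in row if s > row[g])
        reciprocal_ranks += 1.0 / rank
        hits += rank == 1
    n = len(gold)
    return reciprocal_ranks / n, hits / n

toy_scores = [
    [0.9, 0.1, 0.0],  # gold candidate ranked 1st
    [0.2, 0.3, 0.5],  # gold candidate ranked 2nd
    [0.1, 0.7, 0.2],  # gold candidate ranked 2nd
]
mrr, hits1 = mrr_and_hits_at_1(toy_scores, gold=[0, 1, 2])
```

Here the reciprocal ranks are 1, 1/2, and 1/2, giving an MRR of 2/3, while only the first query is answered correctly at rank 1, giving Hits@1 of 1/3.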

In summary, the paper provides a comprehensive analysis of why existing GFMs fail for EA, introduces a principled parallel encoding framework that bridges the reasoning horizon gap, and validates the approach with extensive empirical evidence. The work opens avenues for extending anchor‑driven parallel encoding to other cross‑graph tasks such as domain adaptation, multimodal alignment, and integration with large language models for richer semantic matching.
