Learning to Advect: A Neural Semi-Lagrangian Architecture for Weather Forecasting

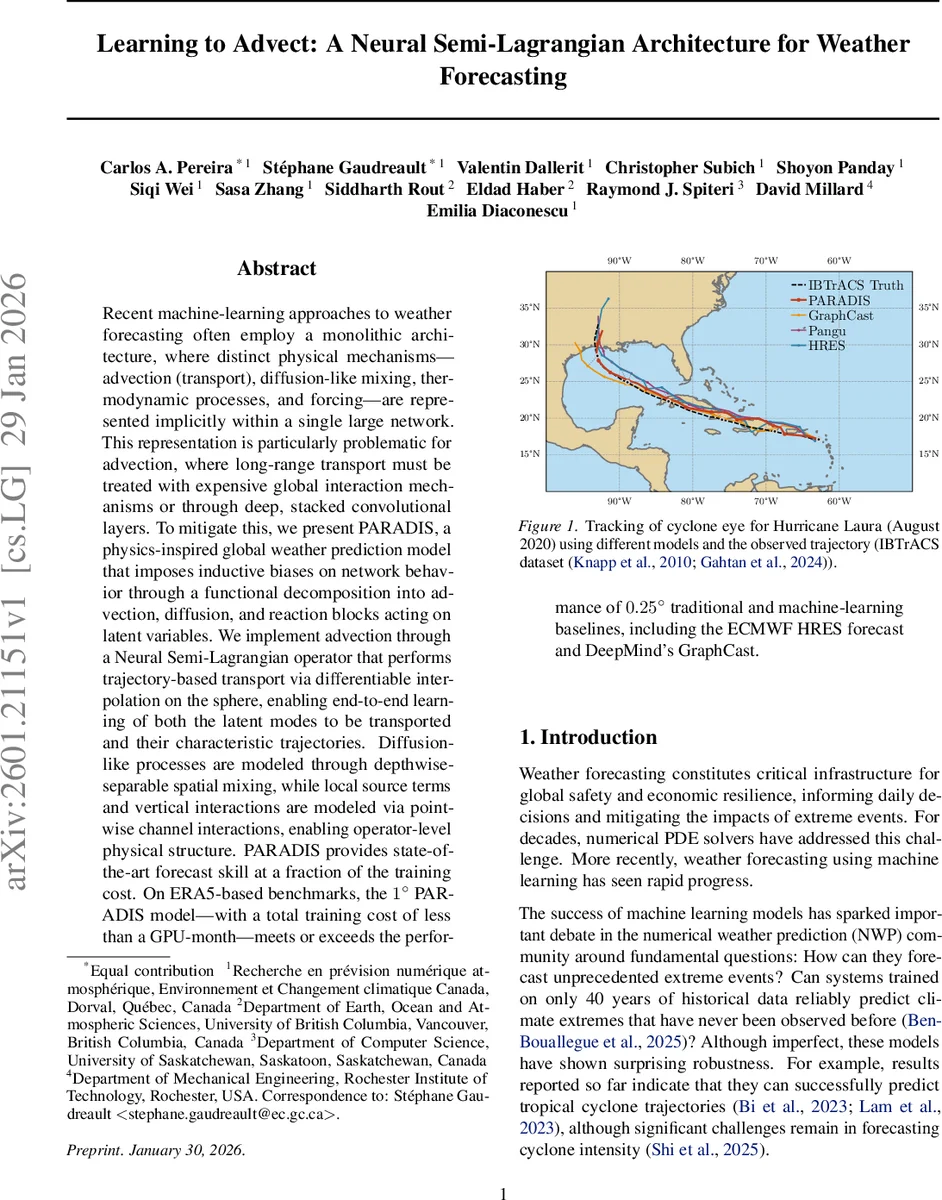

Recent machine-learning approaches to weather forecasting often employ a monolithic architecture, where distinct physical mechanisms (advection, transport), diffusion-like mixing, thermodynamic processes, and forcing are represented implicitly within a single large network. This representation is particularly problematic for advection, where long-range transport must be treated with expensive global interaction mechanisms or through deep, stacked convolutional layers. To mitigate this, we present PARADIS, a physics-inspired global weather prediction model that imposes inductive biases on network behavior through a functional decomposition into advection, diffusion, and reaction blocks acting on latent variables. We implement advection through a Neural Semi-Lagrangian operator that performs trajectory-based transport via differentiable interpolation on the sphere, enabling end-to-end learning of both the latent modes to be transported and their characteristic trajectories. Diffusion-like processes are modeled through depthwise-separable spatial mixing, while local source terms and vertical interactions are modeled via pointwise channel interactions, enabling operator-level physical structure. PARADIS provides state-of-the-art forecast skill at a fraction of the training cost. On ERA5-based benchmarks, the 1 degree PARADIS model, with a total training cost of less than a GPU month, meets or exceeds the performance of 0.25 degree traditional and machine-learning baselines, including the ECMWF HRES forecast and DeepMind’s GraphCast.

💡 Research Summary

The paper addresses a fundamental limitation of current deep‑learning weather models: they treat advection, diffusion, and reaction as implicit patterns learned by a monolithic network. Because convolutional and attention mechanisms are inherently local, representing long‑range transport requires deep stacks of layers, which introduces diffusive bias, smears high‑frequency features, and leads to poor physical consistency. To overcome this, the authors introduce PARADIS (Physically‑inspired Advection, Reaction And DIffusion on the Sphere), a neural architecture that explicitly mirrors the advection‑diffusion‑reaction decomposition of the atmospheric governing equations.

PARADIS operates in a latent space. An encoder projects the physical state q (e.g., temperature, wind, humidity) into a high‑dimensional latent tensor h with many channels (C_lat). The latent tensor is then split into three functional blocks:

-

Neural Semi‑Lagrangian (NSL) Advection – A learned velocity field V_net (implemented as a depthwise‑separable convolution) defines characteristic trajectories on the sphere. For each grid point, the departure point is computed by backward integration (x_d = x – Δt·u(x)). Because x_d is generally off‑grid, bicubic interpolation samples the latent features at that location. This operation is fully differentiable, allowing gradients to flow back into V_net. Crucially, the model first projects h onto a smaller set of “transportable” channels (C_adv) via a pointwise convolution, performs the semi‑Lagrangian transport on this compressed representation, and then projects back to the full latent space. This forces the network to discover which combinations of atmospheric variables should be advected as coherent modes, dramatically reducing the computational cost from O(N_grid²) (as in global attention) to O(N_grid).

-

Diffusion – Implemented as depthwise‑separable spatial mixing, each channel is smoothed independently, approximating physical diffusion without inter‑channel coupling. This design preserves the locality of diffusion while keeping parameter count low.

-

Reaction – Realized with pointwise 1×1 convolutions that act locally on each grid point, learning source terms such as radiative heating, phase changes, and other sub‑grid processes.

The three blocks are stacked repeatedly, effectively forming a sub‑stepping scheme that can handle larger time steps while maintaining the stability properties of classical semi‑Lagrangian methods (no CFL restriction, only a Lipschitz condition on the velocity field).

Training uses a multi‑stage curriculum. Initially, a reversed Huber loss mitigates the impact of large errors, encouraging the network to learn robust transport patterns. In later stages, a spectral fine‑tuning loss explicitly aligns the amplitude and phase of the forecasted fields with the target, counteracting the “double penalty” effect of pure MSE that pushes predictions toward a conditional mean and suppresses extremes.

Experiments are conducted on ERA5 reanalysis data at 1° resolution. The model is trained for less than one GPU‑month, a fraction of the resources required by competing approaches. Evaluation against several baselines—including a 0.25° traditional NWP model, the ECMWF HRES operational forecast, and DeepMind’s GraphCast—shows that PARADIS matches or exceeds their skill across standard metrics (RMSE, ACC) for forecasts up to 10 days. A case study on Hurricane Laura demonstrates superior tracking of the cyclone eye compared with the baselines, highlighting the model’s ability to preserve sharp, high‑frequency structures.

In summary, PARADIS demonstrates that embedding physically motivated operators—particularly a differentiable semi‑Lagrangian advection—into neural networks yields a model that is both computationally efficient and physically consistent. By separating what to transport (latent modes) from how to transport it (characteristic trajectories), the architecture avoids the need for deep, parameter‑heavy networks to learn advection implicitly. The approach offers a scalable pathway toward high‑resolution, global, data‑driven weather forecasting that can complement or even surpass traditional numerical weather prediction systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment